The Strategic Tech Stack: Why Enterprises Prefer .NET AI

February 25, 2026 / Bryan Reynolds

The Strategic Tech Stack: Why Enterprises Are Choosing .NET and Semantic Kernel Over Python and LangChain for AI

The integration of artificial intelligence into enterprise operations has decisively transitioned from a phase of speculative prototyping into a mandate for secure, production-grade deployment. As organizations move beyond initial experimentation and small-scale proofs-of-concept, Chief Technology Officers (CTOs) and enterprise architects confront a critical architectural divergence: the choice of the underlying technology stack for AI orchestration. The central dilemma often revolves around whether to utilize the ubiquitous, Python-based LangChain framework or to adopt Microsoft’s Semantic Kernel, built natively upon the robust C#.NET ecosystem.

For enterprise clients who prioritize strict type safety, predictable reliability, and deep, frictionless integration with the broader Microsoft ecosystem, the architectural decisions made today will dictate total cost of ownership, system maintainability, and organizational security posture for the next decade. This comprehensive analysis explores the technical, economic, and operational drivers behind the enterprise shift toward the .NET AI stack, providing a granular evaluation of Semantic Kernel, its evolution into the Microsoft Agent Framework, and the long-term strategic advantages of statically typed AI orchestration for large-scale business applications.

The Macroeconomic Context of Enterprise AI Investments

The global business landscape is currently experiencing the fastest technology adoption curve in enterprise software history, significantly outpacing the historical transitions to cloud computing and mobile technology.

To accurately contextualize the technical choices between frameworks, it is essential to first examine the staggering financial stakes involved in modern enterprise AI development. These decisions fit into a broader shift in how organizations approach AI-driven software development in 2026, where architecture and governance can make or break ROI.

Worldwide IT spending is projected to reach an unprecedented $5.61 trillion by the end of 2025, representing an increase of 9.8% from the previous year, with enterprise software spending alone eclipsing $1.24 trillion. Within this massive capital allocation, artificial intelligence commands an outsized portion of strategic investment. Global enterprise spending on AI and generative AI (GenAI) initiatives is forecasted to reach $1.5 trillion by the end of 2025.

This massive influx of capital indicates that enterprises are investing unprecedented resources into intelligent systems designed to optimize workflows, improve productivity, and deliver exceptional customer experiences.

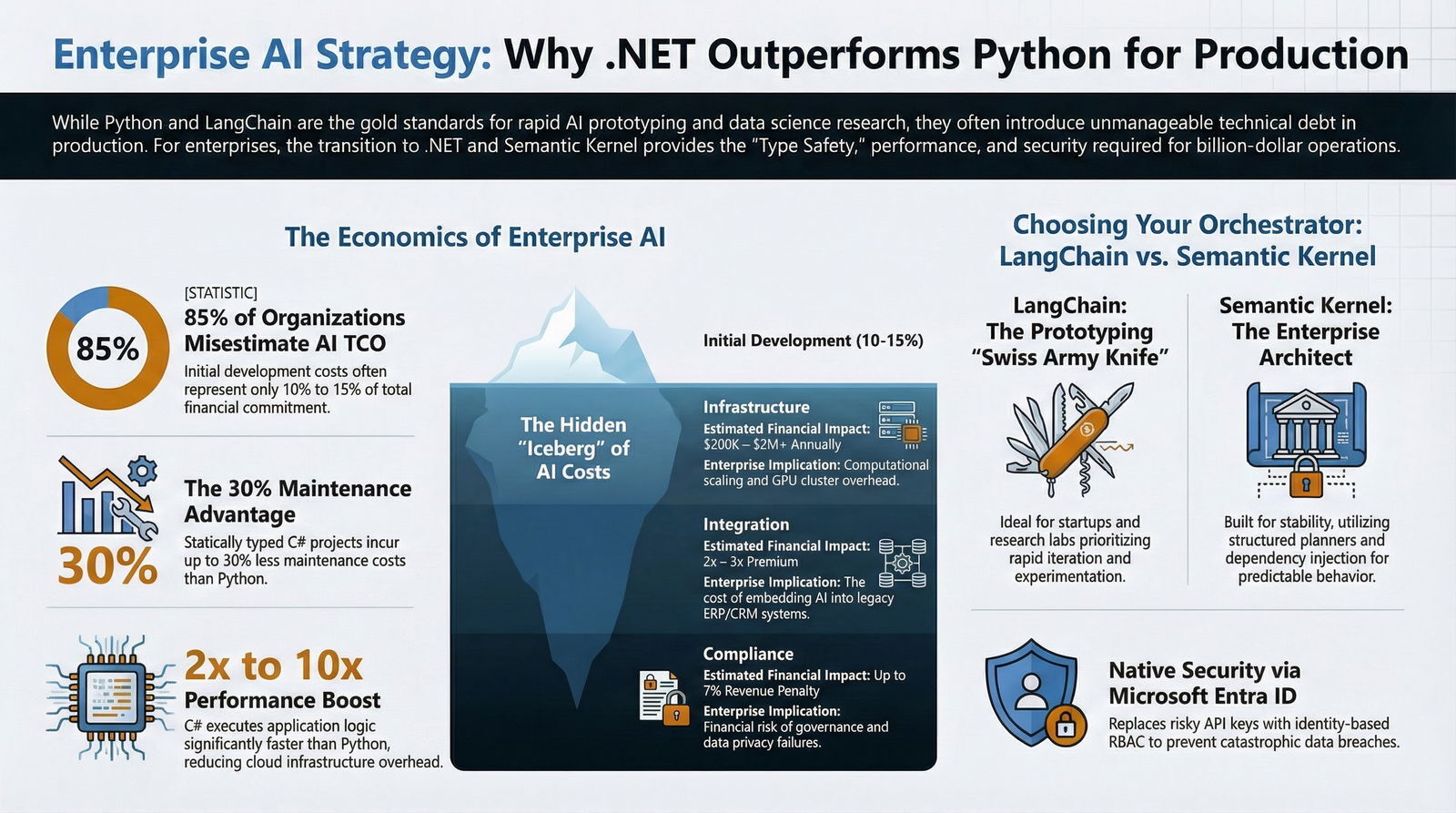

However, unlike traditional software development lifecycles, the economics of artificial intelligence are highly deceptive and notoriously difficult to forecast accurately. Industry research indicates that 85% of organizations misestimate the total cost of ownership (TCO) for AI projects by margins exceeding 10%.

The financial drain typically does not stem from initial model training, fine-tuning, or basic API access costs, but rather from hidden technical debt multipliers that compound over time.

The total cost of ownership encompasses all expenses associated with acquiring, operating, maintaining, and eventually decommissioning an asset over its entire lifecycle.

In the context of AI, the initial purchase price or development cost often represents only 10% to 15% of the total financial commitment. The remaining costs sit beneath the surface, much like an iceberg, which is why long-term AI investments demand a comprehensive evaluation framework. This is where disciplined approaches to AI technical debt and TCO become critical for enterprise leaders.
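Taken at face value, the 10% to 15% figure implies a simple multiplier. Write C₀ for the initial development cost and f for its share of lifetime TCO. Both symbols are introduced here for illustration only:

```latex
\mathrm{TCO} = \frac{C_0}{f}, \qquad f \in [0.10,\ 0.15]
\quad\Longrightarrow\quad
\mathrm{TCO} \in \left[\tfrac{C_0}{0.15},\ \tfrac{C_0}{0.10}\right] \approx [6.7\,C_0,\ 10\,C_0]
```

For example, a hypothetical $500K build would imply roughly $3.3M to $5M of total cost over the system's life.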

The Hidden Cost Multipliers of Enterprise AI Deployment

Data engineering and infrastructure scaling constitute the vast majority of ongoing AI expenses, heavily overshadowing the initial acquisition of model access. The following table outlines the critical components that inflate the TCO of enterprise AI:

| Cost Component | Estimated Long-Term Financial Impact | Description & Enterprise Implication |
|---|---|---|
| Infrastructure | $200K – $2M+ annually | Computational resource scaling, GPU clusters, auto-scaling mechanisms, and multi-cloud environment overhead. |
| Data Engineering | 25% – 40% of total spend | Continuous data pipeline processing, data quality monitoring, and complex data transformations required for RAG (Retrieval-Augmented Generation). |
| Talent Acquisition | $200K – $500K+ per engineer | Compensation for highly specialized machine learning engineers and AI systems architects, plus retention costs. |
| Model Maintenance | 15% – 30% overhead | Real-time model performance monitoring, drift detection, hallucination mitigation, and automated retraining workflows. |
| Integration Complexity | 2x – 3x implementation premium | The premium cost of embedding AI into legacy systems (ERP, CRM) due to interoperability and architecture mismatches. |
| Compliance & Governance | Up to 7% revenue penalty risk | Costs associated with automating regulatory compliance, auditing data privacy, and the financial risk of governance failures. |

In this high-stakes, high-cost environment, the choice of programming language and orchestration framework acts as a critical risk mitigation strategy. A framework that allows for rapid, weekend prototyping but fractures under the weight of enterprise security constraints will drastically inflate the TCO through exorbitant maintenance, continuous refactoring, and emergency operational support. This is why many leaders now look beyond surface-level productivity and focus on governed agentic engineering approaches that keep long-term value intact.

Language Fundamentals: The C#.NET vs. Python Dichotomy

The conversation regarding AI orchestration inevitably begins with the underlying programming language. Python is undeniably the lingua franca of machine learning research, data science, and initial model training. Its massive, mature ecosystem, which includes highly optimized libraries like NumPy, Pandas, Scikit-learn, PyTorch, and TensorFlow, makes it absolutely indispensable for data scientists and AI researchers.

Consequently, when Large Language Models (LLMs) first emerged into the mainstream, foundational orchestration frameworks like LangChain were naturally built in Python to cater to this existing, highly active demographic.

However, integrating an LLM into an enterprise application, such as a heavily regulated mortgage processing pipeline, a HIPAA-compliant healthcare patient management system, or a high-frequency financial reporting dashboard, is fundamentally a software engineering and application development challenge, not a data science experiment. This distinction is precisely where the C#.NET ecosystem demonstrates significant structural advantages for the enterprise.

The Maintenance and Scalability Advantage of Static Typing

Python is an interpreted, dynamically typed language. While dynamic typing enables highly concise syntax, rapid prototyping, and unmatched speed during the initial experimentation phase, it frequently results in unmanageable technical debt in large-scale, production-grade enterprise applications.

In Python, variable types are determined at runtime. If an AI agent attempts to pass a malformed JSON string generated by an LLM into a downstream business function, a Python application may not register the type error until the exact moment of execution. In a production environment, this can cause a critical, cascading system failure, disrupting operations and degrading the user experience.

Optional type hints checked by tools such as mypy, or runtime validation libraries such as Pydantic, can partially mitigate this problem. However, these safeguards are opt-in rather than enforced by the language. Python's core dynamic nature therefore remains a persistent maintenance hurdle and often accelerates the kind of “cheap AI code” trap that later explodes in operational cost.

Conversely, C# is a compiled, statically typed, object-oriented programming language developed by Microsoft.

Static typing requires the types of variables, parameters, and function return values to be known during the coding phase, either declared explicitly or inferred by the compiler.

This introduces rigorous compile-time checking.

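In a C# AI orchestration pipeline, suppose an LLM is expected to return a specific, complex data structure. Developers define that structure as a type, and the .NET compiler verifies that every component consuming it uses it correctly before the software is ever allowed to deploy. The model's raw output still arrives at runtime, so it is deserialized and validated at one well-defined boundary. Malformed output is rejected there, before it reaches business logic.

Type systems essentially eliminate entire classes of illegal programs. A type mismatch between internal components cannot occur at runtime if the compiler has already verified the structural integrity of the inputs and outputs.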
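The following is a minimal sketch of that validation boundary, assuming a hypothetical loan-triage response from a model. TypeScript stands in for C# as a statically typed language. The type and function names are illustrative and do not come from any SDK. The compiler checks everything downstream of `parseLoanTriage`, and that function is the one place where untrusted model text is checked at runtime. In C#, the same shape would typically be a record deserialized with `System.Text.Json` and then validated.

```typescript
// Hypothetical contract for a model's loan-triage answer. LoanTriage,
// parseLoanTriage, and routeApplication are illustrative names only.
type Decision = "approve" | "refer" | "decline";

interface LoanTriage {
  applicationId: string;
  decision: Decision;
  confidence: number; // expected range: 0..1
}

function isDecision(value: unknown): value is Decision {
  return value === "approve" || value === "refer" || value === "decline";
}

// The single runtime boundary: raw model text is untrusted until it passes
// here. Returns a typed value or throws with a precise reason.
function parseLoanTriage(raw: string): LoanTriage {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    throw new Error("model output is not valid JSON");
  }
  if (typeof data !== "object" || data === null) {
    throw new Error("model output must be a JSON object");
  }
  const { applicationId, decision, confidence } = data as Record<string, unknown>;
  if (typeof applicationId !== "string" || applicationId.length === 0) {
    throw new Error("applicationId must be a non-empty string");
  }
  if (!isDecision(decision)) {
    throw new Error("decision must be approve, refer, or decline");
  }
  if (typeof confidence !== "number" || confidence < 0 || confidence > 1) {
    throw new Error("confidence must be a number between 0 and 1");
  }
  return { applicationId, decision, confidence };
}

// Every Decision must map to a queue: adding a new Decision value without
// an entry here is a compile-time error, not a production incident.
const QUEUE_BY_DECISION: Record<Decision, string> = {
  approve: "auto-approve",
  refer: "underwriter",
  decline: "adverse-action",
};

// Business logic accepts only the validated type; passing the raw string
// or an untyped object here does not compile.
function routeApplication(triage: LoanTriage): string {
  return `${QUEUE_BY_DECISION[triage.decision]}:${triage.applicationId}`;
}
```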

The financial and operational impact of this architectural difference is substantial over a multi-year timeline. Industry success stories and benchmark analyses consistently indicate that large enterprise projects developed in C# incur 20% to 30% lower maintenance costs over their lifecycle than similar dynamic-language projects.

The structured design patterns and object-oriented principles enforced by C# make massive codebases highly readable, scalable, and manageable, even as development teams inevitably rotate and evolve over the years.

Performance, Computational Efficiency, and Infrastructure Optimization

While the majority of the computational heavy lifting in AI occurs remotely on cloud GPUs hosting the large language models, the application layer itself—responsible for routing prompts, managing vector database memory buffers, executing complex enterprise logic, and handling the network transit—requires exceptional performance.

C# is compiled to Intermediate Language (IL) and runs on the Common Language Runtime (CLR), which just-in-time (JIT) compiles the IL to highly optimized native machine code. Examples of performance-critical or CPU-intensive operations include parsing large vector database query results, routing concurrent multi-agent workflows, and managing thousands of simultaneous user sessions in a web application. For this kind of work, C# typically executes two to ten times faster than pure Python code running on the standard CPython interpreter.

Python's dynamic nature can increase memory consumption. In addition, the standard CPython interpreter's Global Interpreter Lock (GIL) prevents threads from executing Python bytecode in parallel, which creates execution bottlenecks for CPU-bound work in highly concurrent enterprise web applications.

While Python's performance can be improved by integrating C/C++ libraries or using tools like Cython, this adds layers of complexity. For an enterprise processing millions of automated transactions, the scalability of C# allows for highly efficient resource utilization, directly reducing the cloud infrastructure costs that constitute the largest portion of AI TCO. This aligns well with modern DevOps efficiency practices that aim to keep performance, cost, and reliability in balance.

Dissecting the Orchestrators: LangChain vs. Semantic Kernel

An AI model, operating in isolation, is merely a stateless text predictor. To build an intelligent AI agent capable of interacting with external APIs, reading proprietary databases, making decisions, and completing complex workflows, developers must utilize an AI orchestration framework. These frameworks serve as the critical middleware that translates human intent into executable actions. Currently, two frameworks dominate the enterprise landscape: LangChain and Semantic Kernel.

The decision between these two platforms represents a choice between two fundamentally different engineering philosophies. Building reliable AI agents that can handle complex reasoning, memory management, and tool orchestration is a highly complex endeavor; without the right framework, an “intelligent agent” rapidly degrades into little more than an expensive, unreliable chatbot.

LangChain: The Open-Source Swiss Army Knife

LangChain, primarily utilized by developers working in Python and JavaScript/TypeScript, is renowned across the industry for its explosive growth, active community, and massive open-source ecosystem.

It is highly flexible, acting as a “Swiss Army knife” for AI development, providing a vast array of out-of-the-box tools, document loaders, and vector database integrations.

Architecture and Mechanics: LangChain is built around the core concepts of “Chains” (pre-defined sequences of actions) and “Agents” (dynamic decision-making loops where the AI determines which tools to call based on the evolving context of the user input).

It features advanced memory systems, including episodic memory for conversation history, and has recently evolved to include LangGraph, an orchestration layer that provides more controllable, cyclic agent execution paths.
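The difference between a chain and an agent can be sketched without any framework. The names below (`runChain`, `runAgent`, `Decide`) are hypothetical and are not the LangChain API. The `decide` callback stands in for the LLM choosing the next tool. The step cap illustrates why an agent loop needs an explicit bound that a fixed chain does not.

```typescript
// Framework-free sketch; these names are illustrative, not the LangChain API.
type Step = (input: string) => string;

// Chain: a fixed, pre-defined sequence. The same steps always run in order.
function runChain(steps: Step[], input: string): string {
  return steps.reduce<string>((acc, step) => step(acc), input);
}

// Agent: a loop where a decision function (standing in for the LLM) looks at
// the current state and the tools used so far, then picks a tool or finishes.
type AgentAction = { kind: "call"; tool: string } | { kind: "finish" };
type Decide = (state: string, history: string[]) => AgentAction;

function runAgent(
  tools: Map<string, Step>,
  decide: Decide,
  input: string,
  maxSteps = 5, // hard cap so a confused model cannot loop forever
): { output: string; history: string[] } {
  let state = input;
  const history: string[] = [];
  for (let i = 0; i < maxSteps; i++) {
    const action = decide(state, history);
    if (action.kind === "finish") {
      return { output: state, history };
    }
    const tool = tools.get(action.tool);
    if (tool === undefined) {
      throw new Error(`unknown tool: ${action.tool}`);
    }
    state = tool(state);
    history.push(action.tool);
  }
  throw new Error(`agent did not finish within ${maxSteps} steps`);
}
```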

The Prototyping Edge: For startups, research teams, or innovation labs looking to build an interactive AI application in a matter of days, LangChain offers unparalleled freedom to experiment quickly without feeling boxed in by rigid architectural constraints.

Its multi-package, modular architecture allows developers to rapidly swap between hundreds of different LLMs and experimental third-party tools with virtually no engineering overhead.

The Enterprise Trade-off: However, the immense flexibility of LangChain is a double-edged sword in a corporate environment. It does not natively prioritize enterprise governance and relies heavily on external configurations for security, policy control, and state management.

In massive, aging codebases, LangChain's highly dynamic chains can become opaque and “ad hoc,” leading to severe maintenance debt because the execution flow is often difficult to trace, debug, and secure—a familiar pattern in many early “vibe coding” experiments discussed in depth in our research on the Vibe Coding hangover.

Semantic Kernel: The Enterprise Architect

Semantic Kernel is a lightweight, open-source software development kit created by Microsoft, explicitly designed from inception to seamlessly integrate the latest AI models into existing C#, Python, and Java codebases.

Rather than acting as a loose collection of experimental tools, it functions as a highly structured, enterprise-grade middleware. At its core, Semantic Kernel operates as a dependency injection container that manages all the services, models, and plugins required to run an AI application safely and predictably.

- Skills-Based Architecture: Semantic Kernel prioritizes architectural predictability. It is built on a “Skills-based” (or Plugin) architecture. Instead of dynamic, unstructured tool calling that can lead to unpredictable behaviors, developers define highly structured, reusable, modular capabilities that can be strictly governed.

- Planner-Driven Orchestration: When Semantic Kernel receives a complex, multi-step user request, it decomposes the request into a logical, execution-ready sequence that uses only the explicitly authorized plugins available to it. Earlier releases performed this step with dedicated “Planner” components. Current releases recommend automatic function calling over the registered plugins for the same purpose.

This structured development approach forces developers to engage in upfront design and capacity planning, resulting in highly predictable, auditable AI behavior and aligning well with an AI-native SDLC for CTOs who need clear guardrails.
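The sketch below illustrates the allow-list idea behind plugin-based orchestration. It assumes that a planner (or the model, via function calling) emits a list of fully qualified function names. `PluginRegistry`, `RegisteredFunction`, and `PlanStep` are hypothetical names, not Semantic Kernel types, and TypeScript is used as a statically typed stand-in for C#.

```typescript
// Illustrative only: RegisteredFunction, PlanStep, and PluginRegistry are
// hypothetical names, not Semantic Kernel types.
interface RegisteredFunction {
  plugin: string;
  name: string;
  description: string; // what the model sees when choosing a function
  invoke: (args: Record<string, string>) => string;
}

interface PlanStep {
  fn: string; // fully qualified "plugin.name"
  args: Record<string, string>;
}

class PluginRegistry {
  private readonly functions = new Map<string, RegisteredFunction>();

  register(fn: RegisteredFunction): void {
    this.functions.set(`${fn.plugin}.${fn.name}`, fn);
  }

  // Only registered functions are ever advertised to the model.
  catalog(): string[] {
    return Array.from(this.functions.keys()).sort();
  }

  // Resolve every step before running any of them, so a plan that names an
  // unregistered function is rejected with no partial side effects.
  execute(plan: PlanStep[]): string[] {
    const resolved = plan.map((step) => {
      const fn = this.functions.get(step.fn);
      if (fn === undefined) {
        throw new Error(`function not registered: ${step.fn}`);
      }
      return { fn, args: step.args };
    });
    return resolved.map(({ fn, args }) => fn.invoke(args));
  }
}
```

Validating the whole plan before executing the first step is a deliberate design choice. A plan containing one unauthorized call is rejected outright instead of running halfway.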

The Enterprise Edge: Semantic Kernel is explicitly built for stability, robust security, and seamless integration with complex business logic.

It provides a single, centralized location for developers to configure, orchestrate, and, most importantly, monitor their AI agents.

Because it relies heavily on standard enterprise software patterns—such as dependency injection, strongly typed interfaces, and inversion of control—a C# development team can adopt Semantic Kernel without abandoning their established software engineering principles.

It allows AI functions to act like standard, testable code blocks that plug cleanly into existing systems.
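The testability claim follows from constructor injection against interfaces. Below is a minimal sketch with hypothetical names. It uses a synchronous `complete` method for brevity, although real chat clients are asynchronous. In Semantic Kernel for .NET, the equivalent services would be registered with the kernel's dependency injection container rather than wired by hand.

```typescript
// Hypothetical names; this shows constructor injection, not SK's API.
interface ChatCompletion {
  complete(prompt: string): string;
}

interface TicketStore {
  getTicketText(id: string): string | undefined;
}

// An "AI function" that depends only on interfaces, so it can be unit
// tested without network access or a live model.
class TicketSummarizer {
  private readonly chat: ChatCompletion;
  private readonly tickets: TicketStore;

  constructor(chat: ChatCompletion, tickets: TicketStore) {
    this.chat = chat;
    this.tickets = tickets;
  }

  summarize(ticketId: string): string {
    const text = this.tickets.getTicketText(ticketId);
    if (text === undefined) {
      throw new Error(`ticket not found: ${ticketId}`);
    }
    return this.chat.complete(`Summarize this support ticket in one sentence:\n${text}`).trim();
  }
}

// Test doubles: a fake model that records prompts and returns a canned
// reply, plus an in-memory ticket store.
class FakeChat implements ChatCompletion {
  readonly prompts: string[] = [];
  private readonly reply: string;

  constructor(reply: string) {
    this.reply = reply;
  }

  complete(prompt: string): string {
    this.prompts.push(prompt);
    return this.reply;
  }
}

class InMemoryTickets implements TicketStore {
  private readonly data: Map<string, string>;

  constructor(data: Map<string, string>) {
    this.data = data;
  }

  getTicketText(id: string): string | undefined {
    return this.data.get(id);
  }
}
```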

Architectural Comparison: Semantic Kernel vs. LangChain

The following comparison matrix juxtaposes the structural and philosophical differences between the two frameworks, highlighting why enterprise organizations lean heavily toward Microsoft's solution for production workloads:

| Architectural Dimension | Semantic Kernel (Microsoft) | LangChain |
|---|---|---|
| Primary Target Audience | Enterprise organizations, deeply integrated corporate teams, and developers prioritizing long-term stability. | Startups, fast-moving research teams, and environments prioritizing rapid prototyping and iteration. |
| Core Architecture & Orchestration | Structured orchestration utilizing explicitly defined Skills and intelligent Planners that automatically break down tasks into logical sequences. | Highly dynamic orchestration utilizing sequence Chains and autonomous Agents optimized for dynamic, multi-step reasoning. |
| Integration Philosophy | Enterprise-first design; deeply tied to the Microsoft technology stack, including Azure AI, Microsoft 365, and Copilot infrastructure. | Wide-reaching ecosystem prioritizing massive integrations with third-party LLMs, vector databases, and community-driven tools. |
| Long-Term Maintainability | Highly structured and predictable. Codebases are easier to reason about, reducing technical debt for enterprise-grade applications. | Highly flexible, but prone to ad-hoc design. Can lead to significant maintenance debt if complex chains are not aggressively managed. |
| Primary Language Focus | C#.NET, Java, and Python. Best suited for enterprise developers leveraging statically typed environments. | Python and JavaScript/TypeScript. Best suited for data scientists and developers prioritizing script-based, dynamic agility. |

Deep Integration with the Microsoft Ecosystem

For enterprise clients—particularly those operating in highly regulated industries such as healthcare, finance, telecommunications, and enterprise software—the choice of an AI technology stack cannot exist in a vacuum. It must align perfectly with existing cloud infrastructure, identity management systems, and stringent corporate data governance policies.

Nearly 60% of Fortune 500 companies actively utilize C# for their large-scale business applications, largely due to its profound, native integration with the Microsoft ecosystem, including Azure cloud services and Windows APIs.

Semantic Kernel is inherently designed to operate within this exact ecosystem, providing several non-negotiable architectural advantages for enterprise deployment that competing frameworks struggle to replicate.

Native Azure OpenAI Integration and Entra ID Security

A common and highly dangerous vulnerability in rapid AI prototyping is the mismanagement of API authentication keys. Developers moving quickly often hardcode OpenAI access keys directly into source code or configuration files. Worse, they may inadvertently commit those keys to source control repositories. If these highly privileged secret keys are leaked, malicious actors can rapidly rack up massive cloud computing bills, exhaust rate limits, or access highly sensitive organizational data.

Standard key-based authentication fundamentally violates the principle of least privilege. Anyone holding the key receives the same elevated permissions, regardless of who they are or what specific task they are performing.

Semantic Kernel mitigates this catastrophic risk through deep, native integration with Microsoft Entra ID (formerly Azure Active Directory). Instead of relying on static, easily compromised secret keys, enterprise Semantic Kernel applications can authenticate to Azure OpenAI and other Azure AI services utilizing identity-based mechanisms. Developers configure the AzureAIInferenceChatCompletion client to securely connect using Entra ID tokens.

This implements robust, enterprise-grade Role-Based Access Control (RBAC) across the entire AI pipeline.

By utilizing Microsoft Entra ID, an organization ensures that the application only accesses the specific AI models it is explicitly authorized to use. The identity and access management solution guarantees that authentication is handled securely at the infrastructure level, satisfying the stringent compliance requirements of enterprise security audits and significantly lowering the risk of governance penalties, which can account for up to 7% of revenue in severe breach scenarios. For organizations building their own private “walled gardens,” these capabilities pair naturally with the patterns described in our guide on how to build a corporate AI fortress.
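The identity-based pattern can be sketched generically. The application asks an identity provider for a short-lived token scoped to one resource, caches it, and refreshes it shortly before it expires, so no long-lived secret sits in configuration. `CachedTokenSource` and the scope string are hypothetical. In .NET, this role is normally filled by an Azure.Identity `TokenCredential` such as `DefaultAzureCredential`, not by hand-written code.

```typescript
// Hypothetical types illustrating the pattern (short-lived, scoped tokens
// fetched on demand and cached until shortly before expiry). This is not the
// Azure.Identity API.
interface AccessToken {
  token: string;
  expiresAtMs: number; // absolute expiry, epoch milliseconds
}

type TokenFetcher = (scope: string) => AccessToken;

class CachedTokenSource {
  private readonly cache = new Map<string, AccessToken>();
  private readonly fetcher: TokenFetcher;
  private readonly now: () => number;
  private readonly refreshSkewMs: number;

  constructor(fetcher: TokenFetcher, now: () => number = () => Date.now(), refreshSkewMs = 5 * 60 * 1000) {
    this.fetcher = fetcher;
    this.now = now;
    this.refreshSkewMs = refreshSkewMs;
  }

  // Reuse the cached token for this scope unless it expires within the
  // refresh window; otherwise fetch a fresh one from the identity provider.
  getToken(scope: string): string {
    const cached = this.cache.get(scope);
    if (cached !== undefined && cached.expiresAtMs - this.now() > this.refreshSkewMs) {
      return cached.token;
    }
    const fresh = this.fetcher(scope);
    this.cache.set(scope, fresh);
    return fresh.token;
  }
}

// Each outgoing request carries a bearer token for one scope, not a static key.
function buildAuthHeaders(source: CachedTokenSource, scope: string): Record<string, string> {
  return { Authorization: `Bearer ${source.getToken(scope)}` };
}
```

Because each token is bound to a scope and expires on its own, a leaked token exposes far less than a leaked static key.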

Microsoft Graph and Microsoft 365 Extensibility

Modern enterprises run their daily operations on the Microsoft 365 suite, relying on Teams, OneDrive, SharePoint, and Outlook as their primary data silos. Semantic Kernel provides a standardized, highly secure pathway to connect custom AI agents directly into this proprietary organizational data.

Through the use of native Semantic Kernel plugins, a .NET AI agent can securely query the Microsoft Graph API. For example, a development team can architect an internal productivity agent that accesses a specific user's calendar, parses the textual content of a complex email thread from a manager, summarizes the action items, and automatically drafts an event schedule. This complex orchestration is handled seamlessly through native C# plugins such as the CalendarPlugin and MessagesPlugin, which support natural language date parsing and multi-step confirmations.
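Part of such a scheduling flow is deterministic and belongs in ordinary code rather than in the model: turning busy calendar entries into free slots. Here is a minimal sketch, assuming the busy intervals have already been fetched. The fetch itself and the real shape of calendar events are left out. `findFreeSlots` and `Interval` are hypothetical names and are not part of the Semantic Kernel Graph plugins.

```typescript
// Hypothetical shapes: in a real agent the busy intervals would come from a
// calendar API call, which this sketch deliberately leaves out.
interface Interval {
  startMin: number; // minutes since midnight
  endMin: number;
}

// Returns gaps of at least durationMin minutes between workStart and workEnd
// that overlap no busy interval. Busy intervals may be unsorted or overlap.
function findFreeSlots(
  busy: Interval[],
  workStart: number,
  workEnd: number,
  durationMin: number,
): Interval[] {
  const sorted = [...busy].sort((a, b) => a.startMin - b.startMin);
  const free: Interval[] = [];
  let cursor = workStart; // earliest minute not yet known to be busy
  for (const b of sorted) {
    if (b.endMin <= cursor) continue; // entirely before the cursor
    if (b.startMin >= workEnd) break; // starts after the workday
    if (b.startMin - cursor >= durationMin) {
      free.push({ startMin: cursor, endMin: b.startMin });
    }
    cursor = Math.max(cursor, b.endMin);
  }
  if (workEnd - cursor >= durationMin) {
    free.push({ startMin: cursor, endMin: workEnd });
  }
  return free;
}

// Busy 09:00-10:00, 10:30-12:00, 11:00-11:30 (overlapping), 14:00-15:00.
const slots = findFreeSlots(
  [
    { startMin: 540, endMin: 600 },
    { startMin: 630, endMin: 720 },
    { startMin: 660, endMin: 690 },
    { startMin: 840, endMin: 900 },
  ],
  540, // 09:00
  1020, // 17:00
  30,
);
// slots: 10:00-10:30, 12:00-14:00, 15:00-17:00
```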

Furthermore, because Semantic Kernel utilizes the exact same OpenAPI specifications as Microsoft 365 Copilot, the extensions and proprietary plugins built by the enterprise development team can be shared, reused, and deployed across the organization’s wider Copilot ecosystem, empowering low-code developers to leverage high-code innovations.

The 2026 Evolution: The Microsoft Agent Framework

The artificial intelligence landscape shifts with breathtaking speed. By the beginning of 2026, Microsoft recognized that the enterprise software market needed a unified, comprehensive solution that brought together the best aspects of various experimental AI patterns into a single, cohesive developer experience. The result of this initiative is the Microsoft Agent Framework (MAF), the open-source, direct next-generation successor to both Semantic Kernel and the highly popular multi-agent research project, AutoGen.

Historically, enterprise developers faced a difficult dichotomy: they could either use Semantic Kernel for robust, single-agent enterprise integrations, typed safety, and stable telemetry, or they could use AutoGen for highly experimental, multi-agent conversational patterns where different specialized AI personas collaborate, debate, and verify data to solve highly complex problems.

Microsoft strategically merged these capabilities, stating publicly that enterprise developers should not have to choose between the cutting-edge innovation of multi-agent orchestration and the enterprise trust, security, and stability of Semantic Kernel.

Architectural Imperatives of the New Framework

The Microsoft Agent Framework, supporting both .NET and Python, acts as the definitive, unified foundation for enterprise AI moving forward. It retains the core philosophy of its predecessors while introducing several critical capabilities designed explicitly for complex enterprise deployment:

Explicit Graph-Based Workflows: Modern real-world business challenges—such as end-to-end customer journey management, multi-source data governance, or deep human-in-the-loop review processes—rapidly exceed the capabilities of a single, monolithic AI prompt or a simple sequential chain.

The Microsoft Agent Framework introduces explicit, graph-based workflows that grant developers deterministic control over multi-agent execution paths.

This allows a network of specialized, atomic AI agents (e.g., a “Research Agent” passing data to a “Review Agent”) to coordinate effectively, mirroring how a high-performing corporation relies on specialized departments.
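A deterministic graph workflow reduces to nodes, conditional edges, and a step budget. This sketch is not the Microsoft Agent Framework API. `runWorkflow`, `NodeFn`, `Edge`, and `DraftState` are hypothetical, and the two node functions stand in for a research agent and a review agent.

```typescript
// Illustrative sketch, not the Microsoft Agent Framework API. Each node is an
// "agent" step over shared state; edges decide deterministically what runs next.
interface DraftState {
  topic: string;
  draft: string;
  revisions: number;
  approved: boolean;
}

type NodeFn = (s: DraftState) => DraftState;

interface Edge {
  from: string;
  to: string;
  when: (s: DraftState) => boolean;
}

function runWorkflow(
  nodes: Record<string, NodeFn>,
  edges: Edge[],
  start: string,
  initial: DraftState,
  maxSteps = 10,
): { state: DraftState; trace: string[] } {
  let current: string | undefined = start;
  let state = initial;
  const trace: string[] = [];
  while (current !== undefined) {
    if (trace.length >= maxSteps) {
      throw new Error(`workflow exceeded its budget of ${maxSteps} steps`);
    }
    const node: NodeFn | undefined = nodes[current];
    if (node === undefined) {
      throw new Error(`unknown node: ${current}`);
    }
    state = node(state);
    trace.push(current);
    const from: string = current;
    const after: DraftState = state;
    // First matching edge wins; no matching edge means the workflow is done.
    current = edges.find((e) => e.from === from && e.when(after))?.to;
  }
  return { state, trace };
}

// Hypothetical two-agent loop: research drafts, and review approves from the
// second revision onward (a stand-in for a real review agent's judgment).
const nodes: Record<string, NodeFn> = {
  research: (s) => ({ ...s, draft: `notes on ${s.topic} v${s.revisions + 1}`, revisions: s.revisions + 1 }),
  review: (s) => ({ ...s, approved: s.revisions >= 2 }),
};

const edges: Edge[] = [
  { from: "research", to: "review", when: () => true },
  { from: "review", to: "research", when: (s) => !s.approved },
];

const result = runWorkflow(nodes, edges, "research", {
  topic: "customer churn",
  draft: "",
  revisions: 0,
  approved: false,
});
// result.trace: research, review, research, review
```

Because the edges are data rather than model output, the possible execution paths can be reviewed, tested, and audited before deployment.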

Robust State Management and Sessions: Managing the context of a conversation or process over hours, days, or weeks is a massive technical challenge in AI development. The Agent Framework introduces an Agent Session for highly durable state management. This allows AI agents to pause execution, request explicit human approval (human-in-the-loop), and seamlessly resume complex workflows without losing context, a mandatory requirement for automating critical enterprise processes.
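Pausing and resuming depends on keeping all workflow state in serializable data. The minimal sketch below uses a hypothetical `Session` shape, not the Agent Framework's session type. The workflow stops at an approval gate, the session is stored as JSON, and a later call resumes it with the reviewer's decision.

```typescript
// Hypothetical session shape, not the Agent Framework's session type. The
// point: everything needed to resume lives in plain, serializable data.
type WorkflowStep =
  | { kind: "auto"; name: string }
  | { kind: "approval"; action: string };

type SessionStatus = "running" | "awaiting_approval" | "completed" | "rejected";

interface Session {
  id: string;
  status: SessionStatus;
  next: number; // index of the next step to run
  log: string[];
}

// Runs automatic steps until the workflow ends or reaches an approval gate.
// Returns a new session object; the input session is not mutated.
function advance(session: Session, steps: WorkflowStep[]): Session {
  const s: Session = { ...session, log: [...session.log], status: "running" };
  while (s.next < steps.length) {
    const step = steps[s.next];
    if (step === undefined) break;
    if (step.kind === "approval") {
      s.status = "awaiting_approval";
      s.log.push(`paused: needs approval to ${step.action}`);
      return s;
    }
    s.log.push(`ran: ${step.name}`);
    s.next += 1;
  }
  s.status = "completed";
  return s;
}

// Called later, possibly by another process, with the human decision.
function resume(session: Session, steps: WorkflowStep[], approved: boolean): Session {
  const step = steps[session.next];
  if (session.status !== "awaiting_approval" || step === undefined || step.kind !== "approval") {
    throw new Error("session is not waiting at an approval step");
  }
  const log = [...session.log, `${approved ? "approved" : "rejected"}: ${step.action}`];
  if (!approved) {
    return { ...session, log, status: "rejected" };
  }
  return advance({ ...session, log, next: session.next + 1 }, steps);
}

const refundSteps: WorkflowStep[] = [
  { kind: "auto", name: "draft refund email" },
  { kind: "approval", action: "issue a $120 refund" },
  { kind: "auto", name: "send refund email" },
];

// Day 1: run to the approval gate, then persist (e.g. as a database row).
const paused = advance({ id: "case-881", status: "running", next: 0, log: [] }, refundSteps);
const stored = JSON.stringify(paused);

// Day 2: reload the session and resume with the reviewer's decision.
const finished = resume(JSON.parse(stored) as Session, refundSteps, true);
```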

Type-Safe Routing and Intercepting Middleware: True to its robust .NET roots, the framework deeply supports type-based routing and middleware interception.

This means that before an AI agent is ever allowed to execute a database query, initiate an API call, or send an automated email, the middleware can intercept the action. It validates the payload against strict C# type definitions, applies enterprise content moderation filters, and logs the exact telemetry via industry-standard OpenTelemetry protocols.
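The interception pattern can be sketched as a middleware pipeline. Each layer may validate, block, or log a tool call before the real side effect runs. The shapes here are hypothetical and differ from the Agent Framework's actual middleware API. The in-memory `auditLog` stands in for OpenTelemetry spans, and `contoso.example` is a placeholder domain.

```typescript
// Hypothetical middleware shapes; the Agent Framework's real middleware API
// differs. This shows only the interception pattern.
interface ToolCall {
  tool: string;
  args: Record<string, unknown>;
}

type Invoke = (call: ToolCall) => string;
type Middleware = (call: ToolCall, next: Invoke) => string;

// Composes middleware so the first entry in the list runs outermost.
function pipeline(middleware: Middleware[], terminal: Invoke): Invoke {
  return middleware.reduceRight<Invoke>((next, mw) => (call) => mw(call, next), terminal);
}

// Stand-in for telemetry; a real system would emit OpenTelemetry spans.
const auditLog: string[] = [];

const logging: Middleware = (call, next) => {
  auditLog.push(`attempt ${call.tool}`);
  try {
    const output = next(call);
    auditLog.push(`ok ${call.tool}`);
    return output;
  } catch (err) {
    const reason = err instanceof Error ? err.message : String(err);
    auditLog.push(`blocked ${call.tool}: ${reason}`);
    throw err;
  }
};

// Payload validation: send_email must carry string "to" and "body" fields.
const validateEmail: Middleware = (call, next) => {
  if (call.tool === "send_email") {
    const { to, body } = call.args;
    if (typeof to !== "string" || typeof body !== "string") {
      throw new Error("send_email requires string 'to' and 'body'");
    }
  }
  return next(call);
};

// Policy: agents may only email the (hypothetical) corporate domain.
const internalOnly: Middleware = (call, next) => {
  if (call.tool === "send_email" && !String(call.args.to).endsWith("@contoso.example")) {
    throw new Error("external recipients are not allowed");
  }
  return next(call);
};

// The real side effect runs only if every middleware calls next().
const sendEmail: Invoke = (call) => `sent to ${String(call.args.to)}`;

const guarded = pipeline([logging, validateEmail, internalOnly], sendEmail);
```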

Migrating existing Semantic Kernel codebases to the new Microsoft Agent Framework involves straightforward namespace updates, tool registration modifications, and simplified agent creation patterns. The core architectural philosophy of skills, plugins, and dependency injection carries over largely intact.

This deliberate continuity ensures that the financial and technical investments made in .NET AI infrastructure today are fully future-proofed against the next generation of highly autonomous agentic AI capabilities.

How Tech Stack Affects Long-Term Maintainability

The strategic decision between a Python-based LangChain approach and a C#.NET Semantic Kernel approach ultimately converges on the fundamental concepts of software maintainability, operational predictability, and deep organizational alignment.

When a custom software development provider, such as Baytech Consulting, architects a solution for sophisticated enterprise clients, the ultimate goal is never merely to demonstrate a functional, localized prototype. The objective is to achieve Rapid Agile Deployment paired inextricably with a Tailored Tech Advantage. This means delivering systems that are highly adaptive, operationally transparent, and built unequivocally upon enterprise-grade quality standards.

A successful enterprise architecture relies on stable, established infrastructure. Many organizations are already heavily invested in Microsoft SQL Server or PostgreSQL (administered through tools such as pgAdmin) for relational data, Azure DevOps Server on-premises for continuous integration, and Kubernetes clusters for container orchestration. These organizations require applications that build, deploy, and scale predictably. C#.NET applications compile into highly optimized binaries that run cleanly and efficiently within Docker containers deployed to environments like Harvester HCI, Rancher, or OVHCloud servers. The .NET runtime's managed memory model and its container-aware garbage collector, which respects the memory limits set on a container, help prevent the application layer from unexpectedly consuming massive amounts of cluster resources. That kind of runaway consumption is a notoriously common operational issue when running heavy, concurrent Python interpreters at scale.

Furthermore, integrating advanced AI capabilities into an existing, mature .NET backend is nearly frictionless when utilizing Semantic Kernel. Developers do not need to build brittle, high-latency REST APIs simply to bridge an isolated Python AI microservice with a core C# business application. Instead, Semantic Kernel operates natively within the exact same process space. It leverages standard ASP.NET Core dependency injection patterns, allowing the newly minted AI agent to interface directly with existing database contexts (such as Entity Framework) securely, efficiently, and with zero architectural impedance mismatch. For teams that need help stabilizing existing systems, this approach pairs naturally with a project rescue engagement to unwind fragile architectures and rebuild them on stronger foundations.

Observability, Policy Control, and System Auditing

In highly regulated environments—such as global finance, healthcare, or government contracting—an AI agent operating as an opaque “black box” is completely unacceptable. LangChain's highly dynamic nature, while incredibly flexible, can make tracing the exact chain of thought, data access, and execution path exceedingly difficult, often requiring the implementation of complex third-party monitoring tools that add both latency and licensing costs.

Semantic Kernel, and by direct extension the Microsoft Agent Framework, embeds observability deeply into the core of the stack. Because it is architecturally structured around discrete planners, explicitly defined skills, and strongly typed interfaces, every single action the AI attempts to take can be logged, validated, and intercepted. Enterprise governance policies can be strictly enforced at the code level. If an AI agent hallucinates and attempts a function call that violates an internal security policy or attempts to access unauthorized data, the statically typed middleware intercepts the request immediately. It halts execution, prevents the action, and flags the anomaly via native Azure telemetry before any sensitive data is ever exfiltrated or corrupted. This approach is central to modern thinking on software development security and AI governance, where oversight and traceability are not optional.

Actionable Industry Applications and Personas

The advantages of the .NET AI stack manifest differently depending on the specific operational requirements of the industry. For executives leading the charge, understanding the contextual application of Semantic Kernel is critical for realizing ROI.

- Finance and Banking (Strategic CFO): For a CFO overseeing automated risk assessment or algorithmic trading compliance, predictability is paramount. Semantic Kernel's native Entra ID integration ensures that agents querying highly sensitive financial databases operate under strict RBAC, sharply reducing the risk of unauthorized AI-initiated transactions. The C# environment ensures that high-volume, concurrent financial data parsing operates at maximum efficiency, which becomes even more important as CFOs rethink build-vs-buy decisions in light of the vibe coding revolution.

- Healthcare and Medical Technology (Visionary CTO): Healthcare systems require absolute data privacy (HIPAA compliance) and zero tolerance for runtime errors. A CTO deploying an AI agent to summarize patient histories or assist in diagnostics benefits immensely from C#'s strict type safety, which helps ensure that patient records match the expected structure before being processed by backend systems.

- Real Estate and Mortgage (Innovative Marketing Director): A Marketing Director utilizing AI to generate property descriptions or manage client inquiries across multiple channels can leverage Semantic Kernel's Microsoft Graph integration. Agents can autonomously schedule viewings by directly accessing enterprise Outlook calendars and summarizing client emails securely within the Microsoft 365 boundary.

- Software and LMS/Education (Driven Head of Sales): For sales organizations using Learning Management Systems, AI agents can be deployed to analyze massive volumes of learner engagement data. The Microsoft Agent Framework allows for multi-agent workflows where a “Data Gathering Agent” pulls student metrics and a “Coaching Agent” formulates personalized outreach strategies, all governed by the strict state management of the .NET backend.

Conclusion: The Strategic Imperative of .NET AI

The rapid, unprecedented advancement of artificial intelligence has created immense pressure on B2B enterprises to innovate swiftly. However, prioritizing speed at the expense of architectural integrity—innovation that compromises security, ignores long-term maintenance costs, or fractures existing infrastructure—will ultimately erode business value and inflate the total cost of ownership.

While Python and frameworks like LangChain remain exceptional, best-in-class tools for foundational data science, rapid prototyping, and startup agility, they frequently lack the structural rigidity, type safety, and governance features required for complex, legacy-integrated enterprise software.

For enterprise organizations prioritizing long-term predictability, strict type safety, consistent execution performance, and seamless integration with existing Microsoft identity and cloud infrastructure platforms, the C#.NET ecosystem emerges as the superior strategic choice. Microsoft's Semantic Kernel, and its evolution into the unified Microsoft Agent Framework, provides the enterprise-grade middleware required to bridge state-of-the-art Large Language Models with reliable, compiled corporate logic.

By deliberately choosing the .NET AI stack, executive leaders safeguard their technical architecture against compounding technical debt, ensuring their AI investments remain highly scalable, uncompromisingly secure, and easily maintainable for the next generation of software development. When paired with disciplined AI-powered engineering services, this stack becomes a durable competitive advantage rather than a risky experiment.

Strategic Next Steps for Enterprise Leaders

- Audit Existing AI Prototypes: Conduct a thorough evaluation of any current Python/LangChain experiments operating within the organization to determine if dynamic typing or ad-hoc chaining is silently creating hidden technical debt or security vulnerabilities. Where needed, introduce structured migration plans to more governed, agentic patterns so you avoid the “chatbot ceiling” and move toward Action Agents that drive real outcomes.

- Assess Infrastructure Alignment: If your organization already relies heavily on Azure DevOps, Microsoft 365, SQL Server, and Entra ID, calculate the specific integration and security costs saved by utilizing a native .NET AI framework that avoids architectural impedance.

- Engage Enterprise Development Experts: Partner with seasoned engineering teams that possess specialized, deep expertise in custom enterprise application management and the Microsoft Agent Framework to architect a secure, scalable transition from local prototypes to production-grade AI. In many cases this means engaging a dedicated development team that treats AI as part of a long-term partnership, not just a one-off project.

Further Reading

- https://medium.com/@kanerika/semantic-kernel-vs-langchain-the-ultimate-ai-development-framework-guide-3b8d9b244158

- https://xenoss.io/blog/total-cost-of-ownership-for-enterprise-ai

- Microsoft Agent Framework Overview

Frequently Asked Question

Why should an enterprise choose Microsoft’s Semantic Kernel (C#/.NET) over Python/LangChain for AI development?

Enterprises should firmly choose Semantic Kernel on C#.NET when their core requirements encompass long-term maintainability, strict type safety, and seamless, highly secure integration with complex corporate infrastructures. While Python and LangChain are excellent for rapid prototyping and data science experimentation, Python's dynamic typing can frequently lead to unpredictable runtime errors, fragile integrations, and significantly higher maintenance costs when scaled to massive enterprise applications.

C# provides compiled, statically typed performance that catches structural errors before deployment, resulting in up to 30% lower maintenance costs over the software's lifecycle. Furthermore, Semantic Kernel acts as true enterprise-grade middleware, offering structured planner-driven orchestration, native integration with Microsoft Entra ID for secure Role-Based Access Control (RBAC), and a clear, unbroken evolutionary path into the highly capable, multi-agent Microsoft Agent Framework. This strategic alignment ensures that AI applications remain secure, auditable, highly performant, and fully compliant with existing Microsoft ecosystems.
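The claim that static typing "catches structural errors before deployment" has one important boundary: the compiler cannot see what a model will return at runtime. A common pattern is to convert untyped model output into a typed value in exactly one place and reject anything malformed there. After that point, every downstream use is checked by the compiler.

The sketch below shows that pattern in TypeScript for brevity. In C#, the analogous shape would be a record type plus a validating parser. It assumes the model has been asked to reply with JSON. The `PatientSummary` fields, `ParseResult`, `parsePatientSummary`, and `formatForHandoff` are hypothetical names chosen for illustration.

```typescript
// Hypothetical shape for an AI-generated patient-history summary.
interface PatientSummary {
  readonly patientId: string;
  readonly ageYears: number;
  readonly activeMedications: readonly string[];
  readonly summary: string;
}

type ParseResult<T> = { ok: true; value: T } | { ok: false; error: string };

// The one place where untyped model output becomes a PatientSummary.
// Anything that fails validation is rejected here instead of flowing downstream.
function parsePatientSummary(raw: string): ParseResult<PatientSummary> {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return { ok: false, error: "output is not valid JSON" };
  }
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    return { ok: false, error: "output is not a JSON object" };
  }
  const { patientId, ageYears, activeMedications, summary } =
    data as Record<string, unknown>;

  if (typeof patientId !== "string" || patientId.trim() === "") {
    return { ok: false, error: "patientId must be a non-empty string" };
  }
  if (typeof ageYears !== "number" || !Number.isInteger(ageYears) || ageYears < 0) {
    return { ok: false, error: "ageYears must be a non-negative integer" };
  }
  if (
    !Array.isArray(activeMedications) ||
    !activeMedications.every((m) => typeof m === "string")
  ) {
    return { ok: false, error: "activeMedications must be an array of strings" };
  }
  if (typeof summary !== "string") {
    return { ok: false, error: "summary must be a string" };
  }
  return {
    ok: true,
    value: { patientId, ageYears, activeMedications: activeMedications as string[], summary },
  };
}

// Downstream code works only with the typed value. A typo such as
// `s.patientID` here is a compile-time error, not a runtime surprise.
function formatForHandoff(s: PatientSummary): string {
  return `${s.patientId} (${s.ageYears}y): ${s.activeMedications.length} active medication(s)`;
}
```

Returning a result value instead of throwing forces callers to handle the rejected case explicitly. For example, they can retry the prompt or route the item to human review. Only a validated `PatientSummary` can reach `formatForHandoff`.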

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.