Kill the Dashboard: The Rise of Proactive AI

February 23, 2026 / Bryan Reynolds

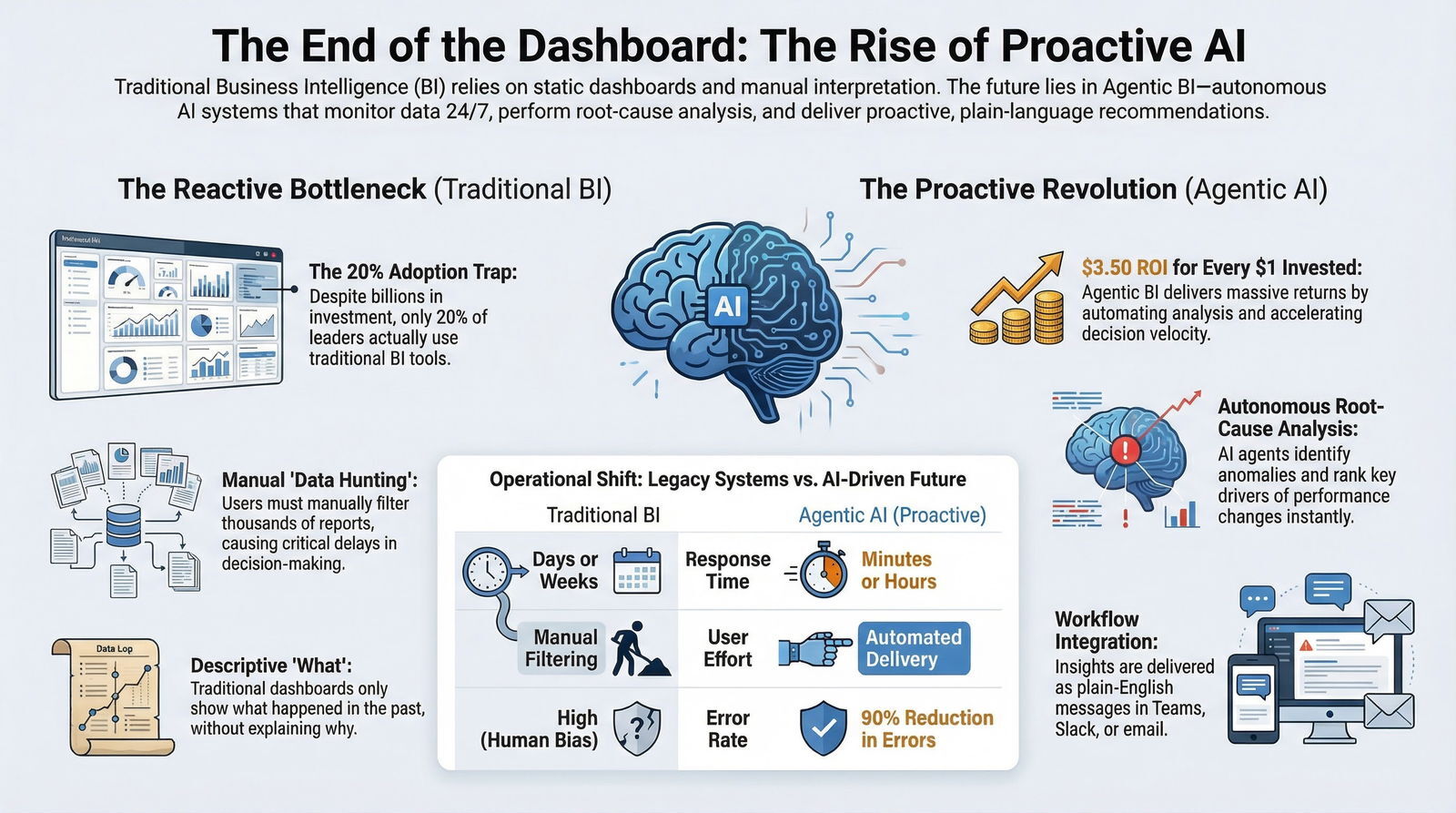

The End of the Dashboard: Why Proactive AI is the Future of Business Intelligence

You sit down at your desk with your morning coffee, open your laptop, and load up the same massive executive dashboard you look at every day. As a visionary CTO, a strategic CFO, a driven Head of Sales, or an innovative Marketing Director, you are searching for a clear answer to a simple question: Are we on track, and if not, why?

Instead of an answer, you are greeted by a wall of charts, a maze of drop-down filters, and a sea of red and green trendlines. You notice that revenue for a key product line is down 5% this week. But the dashboard doesn’t tell you why. Is it a supply chain bottleneck? A sudden drop in marketing conversion rates? A technical glitch in the software? To find out, you have to manually adjust date ranges, pivot data tables, and inevitably, send a message to your data analyst asking them to dig into the numbers. Hours—or sometimes days—pass before you get a clear answer. By then, the opportunity to course-correct in real time has vanished.

Clients, executives, and department leaders shouldn’t have to filter dashboards manually to uncover the story hidden in their data. We are entering an era where that familiar, frustrating ritual of data hunting is becoming as obsolete as the Rolodex.

The future of enterprise data is not about building more complex dashboards; it is about deploying intelligent systems that do the heavy lifting for you. Organizations are shifting toward building and deploying AI agents that analyze vast data ecosystems daily, detect meaningful anomalies, and autonomously email or message you with proactive insights. Imagine waking up to a simple, plain-English message in Microsoft Teams that says: "Revenue in the EMEA region is down 5% because ad conversion rates dropped on our latest campaign; I have paused the underperforming segments and recommend reallocating the budget."

This exhaustive report explores the systemic failures of traditional reactive data viewing, the architectural shift toward proactive AI reporting and Business Intelligence AI, the profound financial return on investment (ROI) of Automated Insights, and the evolving role of the human data analyst in 2026 and beyond.

The Fatal Flaws of Traditional Reactive Dashboards

For decades, traditional dashboards have served as the undisputed cornerstone of enterprise data visibility. They were designed to provide concise visual summaries of operational metrics, utilizing familiar formats like bar charts, line graphs, and gauge key performance indicators (KPIs) to deliver a high-level snapshot of company performance.

Historically, data-driven organizations that implement analytics correctly have been five times more likely to make faster, more accurate decisions than those relying purely on intuition.

However, as data volumes have exploded into the petabytes and the velocity of global business has accelerated to a breakneck pace, the foundational architecture of the traditional dashboard has revealed critical, unignorable weaknesses. To pave the way for Data Analysis Agents, we must first understand why traditional business intelligence is failing.

The Adoption Crisis and the Illusion of Visibility

Despite investing billions of dollars globally into modern data stacks, data warehouses, and visualization tools, the adoption rate of traditional BI tools among business decision-makers has remained stubbornly low, hovering around an abysmal 20%.

The remaining 80% of enterprise leaders continue to rely heavily on the technical skills of the minority who can actually navigate these applications, effectively turning highly paid data scientists into glorified report-pullers.

This low adoption is driven primarily by information overload and a severe lack of contextual clarity. Dashboards frequently display data without sufficient context, making it incredibly challenging for users to understand the strategic significance of a metric or what immediate actions should be taken.

Think about what legacy business intelligence systems produce: hundreds, often thousands, of dashboards and reports. Some enterprises boast over 10,000 active reports in their systems at any given time.

But the uncomfortable truth is that nobody is actually reading the vast majority of those reports or taking action on the information contained within them. When confronted with a drop in sales performance, a business user typically opens a dashboard, extracts a single numerical value, and immediately transitions to a communication platform like Slack, Microsoft Teams, or email to ask a colleague, "What exactly does this mean?" The dashboard serves only as the frustrating starting point for an investigation, rather than the authoritative conclusion of one.

The Reactive Bottleneck: Waiting for the Data to Speak

Traditional dashboards are inherently reactive; they require human initiation. They visualize historical data effectively, but they fall entirely short when organizations require real-time decision support, predictive insights, or continuous operational optimization.

The entire workflow relies on a human user logging in, remembering to check the dashboard, noticing a subtle, easily missed shift in a trendline, and then manually drilling down through countless filters to isolate the issue.

This manual process creates severe, costly delays. Historically, data quality and performance issues have been addressed reactively—a metric breaks, someone eventually notices a few days later, a support ticket is filed, and hours or days later, the issue is diagnosed.

By the time the dashboard reveals a drop in conversions, a spike in supply chain costs, or a rise in customer churn, the financial damage has already been incurred. In a modern business environment where competitors leverage real-time algorithms, static snapshots and delayed reports create unacceptable blind spots. Traditional BI's fatal assumption is that busy professionals actually want to spend their time exploring charts and learning the language of pivot tables. They do not.

The Disconnect Between Analysis and Action

Even when a traditional dashboard successfully highlights a problem, it stops short of suggesting a solution.

The metrics remain siloed across different departments—marketing data isolated in one platform, financial data locked in an ERP, and operational metrics in a third proprietary system. This prevents a comprehensive, holistic view of the business, hiding the complex, cross-functional relationships that advanced analytics can reveal.

Mere visibility is no longer a strategic differentiator. Leaders don't need a dashboard with prettier colors or deeper drill-through menus; they need fast, precise explanations of why performance changed, what specific factors are driving those changes across various functions, and which actionable steps will deliver the best financial outcomes. The companies that dominate their markets today are those that can diagnose issues instantly and respond with clarity and speed. This requires an intelligence layer capable of performing true root-cause analysis—something a traditional, reactive BI setup simply cannot do.

The Rise of Proactive Analytics and Agentic BI

The solution to dashboard fatigue is not building a slightly better dashboard. The future of business intelligence is moving aggressively toward "no-UI analytics," where intelligent AI systems proactively detect issues, make complex decisions, and trigger actions.

Instead of humans hunting for insights in dashboards, intelligent systems deliver outcomes automatically through governed, agentic workflows. This transformation is driven by augmented analytics and the deployment of Data Analysis Agents, and it sits squarely inside an enterprise shift from passive chatbots to active Action Agents that actually do work on your behalf.

Defining the AI Agent in Business Intelligence

To understand this shift, we must first define what an AI agent actually is. Put simply, an AI agent is an artificial intelligence system that uses various tools to accomplish predefined goals.

Unlike standard large language models (LLMs) or simple chatbots that merely generate text in response to an immediate prompt, AI agents are built on a richer architecture that combines a model with tools, planning, and memory.

They have the ability to observe their digital environment, plan complex sequential tasks, execute code, access external databases securely, and remember context across extended, multi-day interactions.

In a business data context, these agents act as always-on, autonomous data analysts that never sleep.

They extend far beyond basic human conversation to perform complex analytical tasks based on natural language, executing plans based on user-provided context.

The transition to true proactive intelligence relies heavily on multi-agent AI systems.

Rather than relying on a single AI model to perform all tasks—which often leads to data hallucinations, syntax errors, or logical leaps—enterprise-grade BI solutions deploy specialized agents that collaborate, much like a human team.

For example, a robust multi-agent architecture might include:

- The Planning Agent: Breaks down a complex business question into smaller, sequential steps.

- The SQL Writing Agent: Translates the natural language into precise database queries.

- The Reviewer Agent: Double-checks the code for accuracy, efficiency, and security compliance before it ever touches the database.

- The Summarizer Agent: Takes the raw data output, applies business context, and translates it into a coherent, actionable business narrative.
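To make the division of labor concrete, the four roles above can be sketched as plain functions chained together. This is a minimal illustration, not a production design: every function body is a hard-coded stand-in for what would normally be an LLM call, and the `sales` table, its columns, and `fake_database` are hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Row = Tuple[str, float]


@dataclass
class PipelineResult:
    plan: List[str]
    sql: str
    approved: bool
    summary: str


def planning_agent(question: str) -> List[str]:
    # Stand-in for an LLM planner: returns a fixed three-step plan.
    return [
        f"Identify the metric and time window in: {question!r}",
        "Aggregate the metric by region",
        "Describe the weakest region in plain English",
    ]


def sql_writing_agent(plan: List[str]) -> str:
    # Stand-in for text-to-SQL; the table and columns are hypothetical.
    return "SELECT region, SUM(revenue) AS revenue FROM sales GROUP BY region"


def reviewer_agent(sql: str) -> bool:
    # Approve only a single read-only statement.
    statement = sql.strip().rstrip(";")
    return statement.upper().startswith("SELECT") and ";" not in statement


def summarizer_agent(rows: List[Row]) -> str:
    if not rows:
        return "No data returned."
    region, revenue = min(rows, key=lambda row: row[1])
    return f"Lowest revenue region: {region} ({revenue:,.0f})."


def run_pipeline(question: str, execute: Callable[[str], List[Row]]) -> PipelineResult:
    plan = planning_agent(question)
    sql = sql_writing_agent(plan)
    approved = reviewer_agent(sql)
    if not approved:
        # The query never reaches the database if the reviewer rejects it.
        return PipelineResult(plan, sql, False, "Query rejected by reviewer.")
    return PipelineResult(plan, sql, True, summarizer_agent(execute(sql)))


def fake_database(sql: str) -> List[Row]:
    # In-memory rows standing in for a real query result.
    return [("EMEA", 95000.0), ("AMER", 120000.0)]


result = run_pipeline("Why is revenue down this week?", fake_database)
print(result.summary)
```

The key design point is ordering: the reviewer sits between the SQL writer and the database, so a rejected query is never executed.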

From Manual Filtering to Automated Delivery

The fundamental, world-changing difference between legacy systems and agentic BI is the shift from passive observation to proactive delivery.

Rather than requiring a CEO or Marketing Director to log in, authenticate, and search for insights, proactive AI agents continuously monitor the vast data environment in the background, 24/7.

These sophisticated agents learn independently from historical patterns.

They understand the natural rhythm of your business, seamlessly adjusting to seasonal variations, weekend dips, and new trends without requiring an engineer to manually adjust alert thresholds.

When a meaningful shift occurs—using advanced statistical modeling to separate actual business signals from irrelevant statistical noise—the agent automatically generates an alert.
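One simple way to picture "adjusting to weekend dips" is to keep a separate baseline per weekday and score new values against the matching baseline. The sketch below uses a plain z-score as a stand-in for the more advanced statistical models the article refers to; the function names, the threshold of 3.0, and the sample data are all assumptions made for illustration.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

Baselines = Dict[int, Tuple[float, float]]


def weekday_baselines(history: List[Tuple[int, float]]) -> Baselines:
    """Map weekday (0=Monday .. 6=Sunday) to (mean, standard deviation)."""
    by_day: Dict[int, List[float]] = {}
    for weekday, value in history:
        by_day.setdefault(weekday, []).append(value)
    # stdev needs at least two observations, so sparser weekdays are skipped.
    return {day: (mean(vals), stdev(vals)) for day, vals in by_day.items() if len(vals) >= 2}


def is_anomaly(weekday: int, value: float, baselines: Baselines, z_threshold: float = 3.0) -> bool:
    """Flag a value that deviates strongly from the baseline for its own weekday."""
    if weekday not in baselines:
        return False  # no baseline yet, so do not alert
    mu, sigma = baselines[weekday]
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Mondays run near 100, Saturdays near 40 (illustrative numbers only).
history = [
    (0, 100.0), (0, 102.0), (0, 98.0), (0, 101.0),
    (5, 40.0), (5, 42.0), (5, 38.0), (5, 41.0),
]
baselines = weekday_baselines(history)
print(is_anomaly(5, 40.0, baselines))  # a normal Saturday
print(is_anomaly(0, 40.0, baselines))  # the same value on a Monday
```

The same reading of 40 is routine on a Saturday and alarming on a Monday, which is why a single fixed alert threshold tends to produce either noise or missed signals.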

Crucially, these insights are delivered directly to where the users already work. Data and insights should be accessible in the daily workflow, embedded within applications like Microsoft 365, Teams, Slack, email, or a mobile device.

Instead of receiving a sterile link to a dashboard showing a red downward trending line, a Chief Marketing Officer receives a proactive natural language message via email or Teams stating: "Customer acquisition costs in the EMEA region increased by 12% over the last 48 hours. This is primarily driven by a drop in ad conversion rates on the newly launched mobile campaign. I have proactively paused the underperforming segments to prevent further spend and recommend reallocating $50,000 to the high-performing search channels to maintain your quarterly targets."

This is the essence of Proactive Analytics—and it lines up with how modern AI-driven software development strategies are reshaping day-to-day operations across the enterprise.

Bridging the Gap: How We View Data Delivery

At Baytech Consulting, where we specialize in custom software development and application management, we consistently see enterprise leaders struggling to bridge the gap between raw data collection and actionable insight. Businesses have spent fortunes amassing data, yet extracting value remains painfully slow.

The shift to Agentic BI requires more than just buying a new software license; it requires a fundamental rethinking of how information flows through an organization. The goal is to move from a state where the human interrogates the machine, to a state where the machine serves the human, whispering the right insight at precisely the right moment.

Uncovering the "Why": AI-Driven Root Cause Analysis

The most profound and highly sought-after capability of an AI data agent is its ability to transition from descriptive analytics (telling you what happened) to diagnostic analytics (telling you why it happened) at machine speed.

Traditional Root Cause Analysis (RCA) is a notoriously time-intensive, frustrating process.

It often requires analysts to spend hours, days, or even weeks tracing symptoms across fragmented IT infrastructures, business processes, and operational environments to locate the underlying cause.

This manual method is highly prone to human error, cognitive bias, and operational blind spots.

Agentic AI transforms this reactive, tedious process into a proactive, intelligent system capable of identifying root causes within minutes of symptom detection.

This is achieved through a governed, multi-step analytical framework known in the industry as augmented analytics, which directly supports an AI-native SDLC for CTOs who want consistent, production-grade results.

The Augmented Analytics Workflow

Augmented analytics unlocks rapid, robust data-driven decision-making for everyone, regardless of their technical background.

When an anomaly is detected by the background monitoring systems, or when a user asks a complex natural language question, the AI agent initiates an "agentic flow"—an autonomous, multi-step workflow that can parse billions of variables simultaneously.

The agentic RCA process typically involves several automated insight types chained together to find the definitive root cause:

- Goal Understanding: The AI uses Natural Language Processing (NLP) to understand the exact business goal. It translates a plain-English query (e.g., "Why did our SaaS retention drop in Q2?") into a rigorous analytical plan.

- Automated Anomaly Detection: The system continuously scans vast datasets to identify hidden patterns, unexpected variance from expected outputs, or unusual shifts in performance metrics that a human observer would likely miss entirely.

- Key Driver Analysis: Once an anomaly is flagged, the agent evaluates all available dimensions and variables to calculate which specific factors had the most mathematically significant impact on the metric change. Is it pricing? A specific sales rep? A regional economic downturn? The AI ranks the drivers.

- Cohort Comparisons: The AI systematically compares different segments of data—such as geographic regions, customer demographics, or product lines—to pinpoint exactly where performance diverges from the mean.
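The key driver and cohort comparison steps above can be approximated with very little code: compare each segment before and after the change, then rank segments by how much of the total movement they explain. This is a simplified sketch under the assumption that the metric is additive across segments (such as revenue by region); the function name and figures are hypothetical.

```python
from typing import Dict, List, Tuple


def rank_drivers(before: Dict[str, float], after: Dict[str, float]) -> List[Tuple[str, float, float]]:
    """Return (segment, absolute change, share of total change), largest impact first."""
    segments = set(before) | set(after)
    deltas = {s: after.get(s, 0.0) - before.get(s, 0.0) for s in segments}
    total = sum(deltas.values())
    # Sort by size of change, then by name so that ties are deterministic.
    ranked = sorted(deltas.items(), key=lambda item: (-abs(item[1]), item[0]))
    return [(seg, delta, (delta / total if total else 0.0)) for seg, delta in ranked]


last_week = {"EMEA": 100.0, "AMER": 200.0, "APAC": 50.0}
this_week = {"EMEA": 80.0, "AMER": 198.0, "APAC": 53.0}

for segment, delta, share in rank_drivers(last_week, this_week):
    print(f"{segment}: change {delta:+.1f}, share of total change {share:.0%}")
```

A share above 100% is possible and meaningful here: it indicates that one segment fell by more than the overall decline while other segments partially offset it.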

Reasoning, Action, and Explainability

Agentic AI represents a massive paradigm shift because it actively investigates, hypothesizes, and validates findings with human-like reasoning but operates at machine speed.

Unlike basic AI tools, agents "perceive, reason, and act." They adapt in real time as they uncover new data, determining the optimal analytical path forward without requiring manual, step-by-step human intervention.

However, for these systems to be genuinely trusted by skeptical C-suite executives, they must provide complete transparency and explainability. A "black box" AI that simply spits out a directive to "fire the Western regional sales team" will never be adopted.

Once the root cause is definitively identified, generative AI creates customized narrative summaries explaining the complex mathematical findings in clear, natural language.

To ensure accuracy and build trust, every insight is traceable. Enterprise leaders and analysts can view the underlying data sources, the exact transformations applied, and the specific LLM or SQL logic the agent utilized to reach its conclusion.
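In practice, traceability usually means that each insight is stored together with its provenance. The record below is one possible shape for that, offered as an assumption rather than a standard: the field names, the `sales.orders` source, and the sample query are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class InsightRecord:
    narrative: str                    # the plain-language summary shown to the user
    data_sources: Tuple[str, ...]     # tables or files the agent read
    transformations: Tuple[str, ...]  # ordered steps applied to the data
    query_logic: str                  # the exact SQL (or prompt) that produced the result

    def to_audit_json(self) -> str:
        # Stable key order makes records easy to diff and review.
        return json.dumps(asdict(self), sort_keys=True)


record = InsightRecord(
    narrative="EMEA revenue fell 5% week over week.",
    data_sources=("sales.orders",),
    transformations=("filter to last 14 days", "aggregate by region and week"),
    query_logic="SELECT region, week, SUM(revenue) FROM sales.orders GROUP BY region, week",
)
print(record.to_audit_json())
```

Making the record immutable (`frozen=True`) is a deliberate choice: an audit trail is only useful if the logged logic cannot be silently edited after the insight is delivered.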

This eliminates the opacity often associated with early AI implementations, ensuring that the Business Intelligence AI is both highly actionable and thoroughly auditable. The same mindset—governed, explainable automation—also underpins modern approaches to AI technical debt and long-term TCO across your entire stack.

The Architecture of Intelligence: Building it Securely

To achieve this level of proactive intelligence, organizations require a robust, meticulously engineered, enterprise-grade data architecture. The promise of conversational BI and Automated Insights cannot be realized if the underlying data is fragmented, unsecured, or improperly governed. For mid-market and enterprise organizations, transitioning from legacy BI to agentic AI requires bridging natural language models with structured, relational databases securely and efficiently.

Integrating LLMs with Relational Databases

Database Management Systems (DBMSs) have served as the foundational infrastructure of modern enterprises for decades.

Most of a company's true enterprise value is locked within structured relational databases. The challenge has traditionally been accessibility: business users desperately need the insights but lack the SQL expertise to extract them, while technical database teams understand the code but often miss the nuanced business context behind the executives' questions.

Modern AI agents bridge this exact gap by functioning as a highly intelligent translation layer between human language and complex database queries. Through semantic knowledge layers and advanced schema introspection, multi-agent systems can read data directly from robust relational databases.

When a user asks a question, or when an agent is running its daily automated analysis, it leverages the semantic layer to ensure consistent business definitions are applied. This is critical: it ensures that the definition of "Net Revenue" or "Active User" is mathematically standardized across all queries, which reduces the risk of AI hallucinations and data discrepancies.

The system then seamlessly translates the natural language request into syntactically correct SQL, executes the query against the database, and retrieves the results. This text-to-SQL capability democratizes data access instantly without requiring data to be unnecessarily moved, duplicated, or exported into clunky, secondary visualization tools.
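A semantic layer can be pictured as a lookup table that pins each business term to exactly one SQL expression, so that every generated query reuses the same definition. The following is a minimal sketch under that assumption; the metric formulas, table names, and dimension lists are hypothetical, and a real deployment would typically rely on a dedicated semantic-layer tool rather than a dictionary.

```python
# Hypothetical metric definitions: one canonical expression per business term.
SEMANTIC_LAYER = {
    "net_revenue": {
        "table": "orders",
        "expression": "SUM(gross_amount - refunds - discounts)",
    },
    "active_users": {
        "table": "sessions",
        "expression": "COUNT(DISTINCT user_id)",
    },
}

# Hypothetical allow-list of columns each table may be grouped by.
ALLOWED_DIMENSIONS = {
    "orders": {"region", "product_line"},
    "sessions": {"region", "plan"},
}


def build_query(metric: str, dimension: str) -> str:
    """Build a GROUP BY query from governed definitions only."""
    definition = SEMANTIC_LAYER.get(metric)
    if definition is None:
        raise KeyError(f"Unknown metric: {metric}")
    table = definition["table"]
    if dimension not in ALLOWED_DIMENSIONS[table]:
        raise ValueError(f"Dimension {dimension!r} is not allowed for {table}")
    return (
        f"SELECT {dimension}, {definition['expression']} AS {metric} "
        f"FROM {table} GROUP BY {dimension}"
    )


print(build_query("net_revenue", "region"))
```

Because identifiers are only ever taken from the allow-lists, free-form user text never ends up inside the SQL string, and "Net Revenue" means the same thing in every query the agent produces.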

Security, Governance, and Custom Infrastructure

The integration of generative AI into highly sensitive corporate data environments naturally raises serious concerns regarding data privacy, security compliance, and AI hallucinations. A poorly implemented LLM might accidentally expose Personally Identifiable Information (PII) to an unauthorized user, or generate inefficient, runaway queries that consume massive amounts of expensive cloud compute resources.

To mitigate these severe risks, enterprise solutions must be built on custom-crafted, highly secure architectures. This is an area where Baytech Consulting’s Tailored Tech Advantage deeply influences how these systems are architected. Rather than relying entirely on generic public cloud LLMs that might ingest and compromise proprietary corporate data, enterprises require precise, controlled deployments.

A modern, secure AI deployment requires a symphony of cutting-edge technologies. Engineering teams utilize rigorous CI/CD pipelines managed through Azure DevOps On-Prem to ensure all code is strictly version-controlled and audited. Developers utilizing VS Code/VS 2022 build the agent logic. The underlying data often resides in battle-tested databases like Postgres (managed via pgAdmin) and Microsoft SQL Server.

To ensure the AI agents operate in a highly secure, scalable, and isolated manner, they are frequently containerized using Docker and orchestrated via Kubernetes. For organizations with strict compliance requirements, these Kubernetes clusters are deployed on hyperconverged infrastructure (HCI) such as Harvester, or are managed via Rancher, and run on high-performance OVHcloud servers. Network perimeters and data gateways are rigorously protected using firewalls like pfSense.

In these controlled, meticulously engineered environments, privacy is built-in from the ground up, not bolted on as an afterthought. PII and PHI detection, alongside real-time data redaction, ensure that sensitive information can be safely queried and analyzed by AI agents without ever violating strict compliance frameworks like HIPAA or GDPR.
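As a rough illustration of redaction before data reaches an agent, the sketch below masks two easy-to-recognize patterns. It is deliberately simplistic: the two regular expressions and the placeholder tokens are assumptions chosen for the example, and pattern matching alone is not sufficient to satisfy HIPAA or GDPR, where dedicated detection tooling and review are normally required.

```python
import re

# Illustrative patterns only: an email address and a US SSN-style number.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace recognizable identifiers with placeholder tokens."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return SSN_PATTERN.sub("[SSN]", text)


note = "Contact jane.doe@example.com, SSN 123-45-6789, about the overdue invoice."
print(redact(note))
```

Running redaction as a separate step in front of the agent means the model only ever sees the masked text, regardless of what the downstream prompt or query does.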

Furthermore, the multi-agent design itself acts as a native safeguard; the internal "Reviewer Agent" automatically audits the "SQL Agent's" code against strict corporate security policies before execution, substantially reducing the risk of malicious SQL injection or unauthorized data access. This kind of governance-first mindset mirrors the principles behind software development security and AI governance that keep long-term risk under control.
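A reviewer step of this kind can start as a handful of explicit policy checks. The sketch below is one hypothetical policy (read-only, single statement, allow-listed tables) implemented with simple pattern matching. It is a heuristic screen, not a SQL parser, and it complements rather than replaces least-privilege database roles and parameterized queries.

```python
import re
from typing import List, Set

FORBIDDEN_KEYWORDS = re.compile(
    r"\b(INSERT|UPDATE|DELETE|DROP|ALTER|TRUNCATE|GRANT|CREATE)\b", re.IGNORECASE
)
TABLE_REFERENCE = re.compile(r"\b(?:FROM|JOIN)\s+([A-Za-z_][\w.]*)", re.IGNORECASE)


def review_sql(sql: str, allowed_tables: Set[str]) -> List[str]:
    """Return a list of policy violations; an empty list means approved."""
    problems: List[str] = []
    statement = sql.strip().rstrip(";")
    if ";" in statement:
        problems.append("multiple statements")
    if not statement.upper().startswith("SELECT"):
        problems.append("not a SELECT statement")
    if FORBIDDEN_KEYWORDS.search(statement):
        problems.append("forbidden keyword")
    for table in TABLE_REFERENCE.findall(statement):
        if table.lower() not in allowed_tables:
            problems.append(f"table not allowed: {table}")
    return problems


allowed = {"sales"}
print(review_sql("SELECT region, SUM(revenue) FROM sales GROUP BY region;", allowed))
print(review_sql("SELECT * FROM users; DROP TABLE users", allowed))
```

Returning the full list of violations, rather than a bare pass or fail, gives the SQL-writing agent something specific to correct on its next attempt and leaves a readable entry in the audit log.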

Once the data is securely analyzed, the insights are delivered directly to the tools the enterprise already uses—whether that is via automated emails, direct messages in Microsoft 365 and Teams, or reports deposited into secure OneDrive or Google Drive folders. This Rapid Agile Deployment ensures that organizations achieve timely, adaptive, and highly transparent implementation of AI Reporting.

Industry Perspectives: Proactive Agents in Action

The implementation of proactive, agentic intelligence is not a theoretical white-paper concept or a distant future state; it is actively transforming operations right now across highly competitive, fast-paced sectors. It was estimated that by 2025, 90% of business leaders would rely heavily on AI-generated insights just to maintain a competitive baseline.

The specific applications vary widely by industry, but the core benefit remains universally the same: transitioning from reactive data discovery to proactive operational optimization. Let’s examine how different B2B personas are utilizing Data Analysis Agents today.

Advertising, Adtech, and Mobile Gaming

The digital advertising and mobile gaming sectors operate in hyper-dynamic, cutthroat environments where milliseconds matter. The global adtech market is expected to grow at a massive rate of 22.4% through 2030.

In mobile gaming alone, in-app purchases generate roughly $80 billion annually.

However, rising user acquisition (UA) costs, extreme ad saturation, and plummeting consumer attention spans put immense pressure on profit margins.

Traditional A/B testing and manual cohort analysis via dashboards are simply too slow to keep pace with rapidly shifting player behaviors and bidding wars.

AI agents are revolutionizing this space through dynamic creative optimization and proactive predictive modeling.

Generative AI agents rapidly analyze real-time campaign performance across thousands of variables, automate multi-variant testing, and continuously optimize ad placements without human input.

Furthermore, these agents proactively monitor player telemetry data to predict churn before it happens. In one notable case study, an AI-driven segmentation agent proactively identified high-lifetime-value dormant users, automatically triggering hyper-targeted retention offers that successfully boosted Average Revenue Per User (ARPU) by 33%.

These agents monitor campaign performance autonomously, reallocating marketing budgets across ad platforms instantly based on real-time ROI and conversion efficiency, completely bypassing the need for a Marketing Director to manually monitor a dashboard. For revenue leaders, this dovetails with broader predictive AI revenue strategies that turn data into a continuous growth engine.

Real Estate, Mortgage, and Finance

In highly complex financial environments, such as real estate-focused family offices, property management firms, and mortgage lenders, the reliance on reactive reporting creates severe operational friction. Financial teams often spend weeks manually consolidating spreadsheets, PDFs, and flat files across multiple regional property managers, frantically trying to reconcile disparate charts of accounts and track down hidden expenses.

This manual data triage exhausts the finance team, delays strategic decision-making, and severely obscures portfolio risks.

Proactive AI agents solve this intense data fragmentation. By connecting directly to underlying property management software and financial databases, agents autonomously track metrics in the background, applying a universal semantic layer to normalize the data.

If a specific property manager categorizes a major capital improvement as a routine operational repair, the AI agent instantly detects the anomaly. It flags the meaningful shift across dimensions, separates the noise from the signal based on business context, and emails an alert to the Chief Financial Officer before the monthly reporting cycle even begins.
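The misclassification check described here can begin as a plain rule before any learned model is involved. The sketch below flags entries booked as repairs that look like capital improvements. The 10,000 ceiling, the keyword list, and the ledger fields are hypothetical values picked for illustration; real capitalization thresholds depend on each firm's accounting policy.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical phrases that often indicate a capital improvement.
CAPEX_HINTS = ("roof replacement", "hvac replacement", "renovation")


@dataclass
class LedgerEntry:
    property_id: str
    category: str
    description: str
    amount: float


def flag_possible_capex(entries: List[LedgerEntry], repair_ceiling: float = 10_000.0) -> List[LedgerEntry]:
    """Return repair entries that exceed the ceiling or mention a capex-like phrase."""
    flagged = []
    for entry in entries:
        if entry.category != "repairs":
            continue
        mentions_capex = any(hint in entry.description.lower() for hint in CAPEX_HINTS)
        if entry.amount > repair_ceiling or mentions_capex:
            flagged.append(entry)
    return flagged


ledger = [
    LedgerEntry("P-101", "repairs", "Fix leaking faucet", 180.0),
    LedgerEntry("P-102", "repairs", "Full roof replacement", 48_500.0),
    LedgerEntry("P-103", "capital", "Lobby renovation", 75_000.0),
]
for entry in flag_possible_capex(ledger):
    print(f"Review {entry.property_id}: {entry.description} ({entry.amount:,.0f})")
```

The rule only produces candidates for review; the decision to reclassify still belongs to the finance team, which matches the human-validation role the article describes.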

By automating these highly repetitive, error-prone tasks, agentic AI allows human real estate professionals to step away from data compilation and focus entirely on high-value negotiations, relationship building, and strategic acquisitions. For CFOs, the same logic that applies to avoiding cheap AI code traps also applies here: speed is only valuable when it preserves long-term asset value.

Healthcare and Education (Learning Management Systems)

Healthcare and education are both strictly regulated, and their data challenges are remarkably similar. In the education sector, specifically for platforms providing Learning Management Systems (LMS), student engagement and retention are paramount. Traditional dashboards might show a generalized drop in course completion rates at the end of the semester.

Proactive AI reporting changes the paradigm entirely. By utilizing continuous anomaly detection, an AI agent monitoring an LMS can detect subtle behavioral shifts—such as a specific cohort of students taking 20% longer to complete a particular module, or logging in less frequently. The system immediately emails the academic administrators, pinpointing the exact curriculum module causing the friction, allowing educators to intervene and support the students weeks before they formally drop out.
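The "20% longer" signal can be expressed as a comparison of each cohort's median completion time against the overall median. The sketch below assumes completion times in minutes for a single module; the cohort labels, sample values, and the choice of the median as the baseline are illustrative assumptions.

```python
from statistics import median
from typing import Dict, List


def slow_cohorts(times_by_cohort: Dict[str, List[float]], threshold: float = 0.20) -> Dict[str, float]:
    """Return cohorts whose median time exceeds the overall median by more than threshold.

    Values in the result are the relative slowdown, e.g. 0.25 means 25% slower.
    """
    all_times = [t for times in times_by_cohort.values() for t in times]
    baseline = median(all_times)
    slow = {}
    for cohort, times in times_by_cohort.items():
        cohort_median = median(times)
        if cohort_median > baseline * (1 + threshold):
            slow[cohort] = cohort_median / baseline - 1
    return slow


# Minutes to complete one module, per cohort (illustrative data).
module_times = {
    "cohort_a": [50.0, 55.0, 60.0],
    "cohort_b": [52.0, 58.0, 61.0],
    "cohort_c": [70.0, 75.0, 80.0],
}
for cohort, slowdown in slow_cohorts(module_times).items():
    print(f"{cohort} is {slowdown:.0%} slower than the overall median")
```

Note that the baseline here includes the slow cohort itself, which makes the check slightly conservative; comparing against the remaining cohorts only would be a reasonable alternative.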

Similarly, in healthcare administration, proactive analytics is moving beyond simple historical reporting. Agents can analyze historical patient intake flows, correlate them with seasonal health data, and proactively alert hospital administrators to impending staffing shortages or supply chain bottlenecks, catching issues before they impact patient care.

Telecom and Software/High-Tech

Fast-growing tech startups, SaaS providers, and massive telecom operators manage astronomical volumes of streaming data. In these environments, addressing data quality and performance issues reactively is a recipe for disaster. If a telecom network experiences localized latency, or if a SaaS product suffers a micro-outage, waiting for a customer support ticket to be filed means trust is already broken.

Automated systems can now detect emerging issues in real time. Instead of waiting for monthly reports to flag a problem, organizations receive alerts within hours or even minutes of a trend developing.

AI agents are becoming early warning systems that identify churn signals before customer loss becomes significant.

In the telecom sector, the deployment of AI analytics to automate operational tasks and reduce errors has resulted in massive cost savings, with some operations saving upwards of $5 million through proactive downtime reduction.

For the Visionary CTO, the shift from "we discovered a problem" to "the system alerted us before it escalated" marks a monumental turning point in digital experience management—and reinforces the need for secure AI walled gardens and private AI stacks that keep this insight engine fully under your control.

Redefining the Role: Will AI Replace the Data Analyst?

As organizations increasingly recognize the profound power of AI to automate complex data pipelines, flawlessly execute complex SQL queries, and generate plain-language narrative reports, a critical, existential question emerges across the enterprise: Does this technology replace the human data analyst?

The short, emphatic answer is no. AI replaces the routine, tedious reporting tasks of the analyst, but it does not replace the analyst themselves.

The integration of agentic AI represents a necessary evolution of the business intelligence profession, rather than its extinction.

The Elimination of the "Report Factory"

Historically, many highly skilled analytics teams have been forced to function as internal "report factories." They spend the vast majority of their valuable time diagnosing past issues, manually cleaning messy data, fixing broken data pipelines, and fulfilling endless ad-hoc requests from executives asking for minor dashboard modifications.

This reactive workflow severely limits their ability to drive genuine, forward-looking business value.

AI eliminates the excruciating wait time for basic answers.

The automated systems happily take over the tedious, repetitive tasks of data preparation, continuous anomaly detection, and routine historical insight generation.

This is not a tragedy for the analyst; it is a liberation.

The Rise of the Strategic Business Partner

Freed from the crushing burden of building and maintaining thousands of unused dashboards, the role of the data analyst in 2026 and beyond shifts dramatically toward strategic partnership and high-level data stewardship.

The organizations that will excel in the coming years are the ones that successfully pair modern, AI-driven interaction methods with reliable, well-designed reporting architecture.

The future data analyst must focus heavily on business acumen, advanced data storytelling, and understanding the psychology of executive decision-making.

As AI generates a massive flood of proactive insights, analysts are desperately needed to curate these findings, align them with the overarching corporate strategy, and ensure broad organizational data literacy.

Furthermore, AI output must be rigorously validated by human experts. Business definitions must hold steady across disparate teams, and the visual outputs must reflect how human beings actually interpret market signals in the real world.

The data professional transitions from being a mere information gatekeeper to an advanced AI trainer, system architect, and strategic advisor. They become responsible for designing the complex semantic layers, setting the strict governance rules, and ensuring that the multi-agent systems have the correct business context to operate safely and effectively. In essence, the highest-impact analysts will be those who excel at blending deep technical data oversight with high-level business strategy—a mindset very similar to what finance leaders need to adopt in the vibe coding revolution where CFOs are building, not just buying.

The Tangible ROI of Proactive Intelligence

In a tightened economic climate where every single technological investment must deliver clear, measurable value, skeptical IT and business leaders rightfully demand proof of Return on Investment (ROI) before abandoning their legacy BI systems.

The data overwhelmingly indicates that the shift to AI-powered, proactive analytics is not merely a futuristic trend or industry hype, but a highly lucrative, foundational strategic enabler of growth.

Research demonstrates that AI analytics can deliver an astonishing average return of $3.50 for every $1 invested, provided the implementation is aligned closely with clear business objectives and integrated properly into existing systems.

The financial impact of agentic BI manifests powerfully across three primary pillars: cost reduction, decision velocity, and direct revenue growth.

| ROI Pillar | Business Impact & Examples | Traditional BI Constraint | Agentic BI Advantage |
|---|---|---|---|
| Cost Reduction & Efficiency | Automating time-intensive manual analysis reduces operational overhead. Robotic process automation slashes report preparation from days to under an hour. | High infrastructure costs for data warehouses; high labor costs for manual ETL pipelines. | Lower infrastructure costs; reduces data processing errors by up to 90%. |
| Decision Velocity (Speed) | Real-time, proactive insights cut decision-making delays by 50%. Supply chain forecasting errors are reduced instantly. | Slow time-to-insight; requires executives to manually log in and hunt for answers. | Instant, automated alerts delivered directly to Slack/Teams allow immediate course correction. |
| Revenue Growth | Utilizing AI for predictive analytics, personalized marketing optimization, and dynamic customer segmentation. | Focuses solely on historical reporting; cannot predict future outcomes. | Boosts campaign ROI by 20-30%; increases sales-ready leads by 25%. |

To evaluate the success of a transition to proactive BI, leadership teams track specific, hard ROI metrics that move far beyond basic software usage statistics. These metrics include:

- Time-to-Insight Ratio: Measuring exactly how rapidly users extract actionable information compared to legacy methods.

- Cost per Report: Calculating the hard financial expense of manual compilation by data analysts versus automated, machine-generated processes.

- Decision Velocity: Tracking the organizational speed at which data-driven decisions are executed.

- Error Reduction Percentage: Quantifying the decrease in costly mistakes caused by relying on stale, inaccurate, or misinterpreted historical data.
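The four metrics above can be sketched as simple ratios. The snippet below is an illustration, not a standard definition: the `PeriodStats` fields, the formulas chosen for each metric, and every sample number are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Hypothetical measurements for one reporting period (illustrative fields)."""
    avg_hours_to_insight: float   # mean time from question to usable answer
    reporting_cost: float         # total spend on producing reports
    reports_produced: int
    avg_hours_to_decision: float  # mean time from insight to executed decision
    data_errors: int              # mistakes traced to stale or misread data


def roi_metrics(legacy: PeriodStats, agentic: PeriodStats) -> dict:
    """Compare a legacy BI period with an agentic BI period."""
    return {
        # How many times faster insights arrive than under the legacy process.
        "time_to_insight_ratio": legacy.avg_hours_to_insight / agentic.avg_hours_to_insight,
        "cost_per_report_legacy": legacy.reporting_cost / legacy.reports_produced,
        "cost_per_report_agentic": agentic.reporting_cost / agentic.reports_produced,
        # How many times faster decisions are executed.
        "decision_velocity_gain": legacy.avg_hours_to_decision / agentic.avg_hours_to_decision,
        "error_reduction_percent": (legacy.data_errors - agentic.data_errors)
        / legacy.data_errors * 100,
    }


# Made-up sample data, for illustration only.
legacy = PeriodStats(48.0, 12_000.0, 40, 72.0, 20)
agentic = PeriodStats(2.0, 3_000.0, 60, 36.0, 5)
for name, value in roi_metrics(legacy, agentic).items():
    print(f"{name}: {value:.1f}")
```

Tracking each metric against a legacy baseline is a design choice: the baseline is what turns a raw number into evidence for or against the migration.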

Organizations that master these metrics and deploy AI agents at scale consistently achieve the strongest overall enterprise ROI. "Profitability masters" who balance these digital initiatives with rigorous KPI tracking frequently achieve high EBITDA margins and attribute up to 70% of their enterprise ROI directly to advanced digital and AI initiatives. Many of them also modernize their tech stack, adopting approaches like Full-Stack JavaScript for speed, so that their BI and AI layers don’t sit on top of brittle, slow-moving platforms.

Conclusion: Embracing the Insight-First Enterprise

The era of hunting through static, complex dashboards to glean basic business insights is rapidly drawing to a close. Traditional business intelligence, constrained by its inherently reactive nature, low user adoption, and frustrating user interfaces, has proven entirely insufficient for the velocity and complexity of modern commerce.

Today's executives do not need more data visualization; they need clear, immediate, actionable answers. They need to know exactly why their business is changing and what specific steps they must take to capitalize on those shifts.

Proactive intelligence, driven by multi-agent AI systems, represents a fundamental reinvention of how organizations interact with their data. By automating root cause analysis, monitoring databases for anomalies around the clock, and delivering plain-language narrative insights directly into daily workflows like Teams and email, agentic AI removes most of the friction between data discovery and strategic action. This technology does not render human analytical expertise obsolete; rather, it frees your data professionals to function as high-level strategic partners, ensuring that insights actually translate into measurable revenue growth and operational efficiency.

To remain competitive in 2026 and beyond, organizations must prioritize the modernization of their data architecture, bridging their structured databases with secure, enterprise-grade AI models. The monumental shift from "we discovered a problem on a dashboard three days late" to "the agentic system solved the problem before it escalated" is the definitive hallmark of the future-ready enterprise.

Are you ready to stop filtering and start acting? By partnering with experienced teams to leverage tailored, cutting-edge tech and rapid agile deployment, you can transform your data from a chaotic burden into your most powerful proactive asset—and avoid the kind of AI hangover that leaves CTOs cleaning up rushed, fragile systems instead of leading strategic change.

Further Reading

- https://www.forbes.com/councils/forbestechcouncil/2025/03/24/why-business-leaders-cant-wait-for-ai-to-kill-the-dashboard/

- https://www.tellius.com/resources/blog/ai-for-analytics-how-augmented-analytics-is-transforming-bi

- https://querio.ai/articles/the-roi-of-adopting-ai-powered-analytics-tools

Frequently Asked Questions

Why are traditional dashboards becoming obsolete?

Traditional dashboards are becoming obsolete because they are inherently passive, reactive, and require significant manual effort, resulting in a low organizational adoption rate of roughly 20%. They require busy executives to manually log in, navigate complex filters, and interpret raw data visualizations, leading to severe information overload. Dashboards provide a static snapshot of what happened in the past, but completely fail to provide the necessary context required to understand why it happened or what strategic actions should be taken next. As the speed of business increases, relying on delayed, manual reporting creates unacceptable competitive blind spots.

How can AI tell me why my data changed, not just what changed?

AI achieves this through advanced, automated Root Cause Analysis (RCA) powered by multi-agent systems. When a metric fluctuates, the AI does not just flag the change on a chart; it instantly runs complex, multi-step analytical workflows in the background. It performs continuous anomaly detection across billions of variables, conducts key driver analysis to mathematically determine the primary cause of the shift, and runs cohort comparisons to isolate the issue. The system then translates this incredibly complex mathematical investigation into a simple, plain-language narrative summary, emailing you the exact drivers behind the change and suggesting corrective actions.
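A heavily simplified sketch of that workflow follows: detect an anomaly, rank the segments driving it, and narrate the result. It assumes a plain z-score test and a segment-delta ranking as stand-ins for the far richer methods a production system would use; all function names and sample figures are hypothetical.

```python
from statistics import mean, stdev


def is_anomaly(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` standard deviations from the mean."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def key_drivers(before: dict[str, float], after: dict[str, float]) -> list[tuple[str, float]]:
    """Rank segments by their change in the metric, most negative first."""
    segments = set(before) | set(after)
    deltas = {s: after.get(s, 0.0) - before.get(s, 0.0) for s in segments}
    return sorted(deltas.items(), key=lambda item: item[1])


def narrate(metric: str, before: dict[str, float], after: dict[str, float]) -> str:
    """Summarize the overall change and the segment that dragged it down most."""
    total_before, total_after = sum(before.values()), sum(after.values())
    change_pct = (total_after - total_before) / total_before * 100
    segment, delta = key_drivers(before, after)[0]
    return f"{metric} changed {change_pct:+.1f}%; biggest drag: {segment} ({delta:+.0f})."


# Made-up weekly revenue figures, for illustration only.
history = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 103.0]
before = {"EMEA": 400.0, "NA": 500.0, "APAC": 100.0}
after = {"EMEA": 340.0, "NA": 505.0, "APAC": 105.0}

if is_anomaly(history, latest=80.0):
    print(narrate("Revenue", before, after))
```

In this toy data the overall metric falls 5% and the ranking points to EMEA, echoing the kind of plain-language message described earlier. A real agent would add cohort comparisons and statistical controls before recommending any action.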

Can AI replace my data analyst for routine reporting?

Yes, AI can and will replace the manual, routine aspects of data reporting. AI agents excel at autonomous data extraction, automated formatting, anomaly detection, and basic narrative generation, tasks that currently consume the majority of an analyst's time. However, AI does not replace the human analyst entirely. Instead, it significantly elevates the analyst's role. Freed from operating as an internal "report factory," data professionals evolve into highly valuable strategic business partners. They manage AI governance, ensure data quality, design complex semantic architectures, and align the AI's proactive insights with your high-level corporate strategy.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.