The Silent Enterprise: Giving Legacy Systems a Voice with AI

March 09, 2026 / Bryan Reynolds

The Silent Enterprise: Modernizing Brownfield Applications and Giving Legacy Systems a Voice with AI

The most pervasive challenge in modern enterprise architecture is not the adoption of emerging technologies, but the gravitational pull of the past. Across global industries—from high-tech and finance to healthcare, real estate, and logistics—organizations rely on established, deeply entrenched software systems to run core business operations. These existing environments, universally known in software engineering as "brownfield" deployments, often contain applications that are fifteen, twenty, or even thirty years old. They are reliable, heavily customized, and functionally critical, yet they represent a significant barrier to digital transformation.

For the visionary Chief Technology Officer, the strategic Chief Financial Officer, the driven Head of Sales, or the innovative Marketing Director, these legacy systems pose a relentless series of operational paradoxes. The software processes transactions flawlessly, but it isolates critical market data. The underlying databases are robust, but they completely lack the modern Application Programming Interfaces (APIs) required to communicate with cloud-native analytics, mobile applications, or contemporary customer relationship management (CRM) platforms. Consequently, vast reservoirs of enterprise data remain siloed behind monolithic architectures, AS/400 terminal green screens, and aging on-premises SQL servers. The software is functional, but it is entirely silent.

The traditional response to this dilemma has been the "rip and replace" migration—a strategy fraught with exorbitant costs, operational peril, and catastrophically high failure rates. However, a profound paradigm shift is currently occurring in enterprise technology. The integration of Artificial Intelligence (AI), specifically generative AI and intelligent autonomous agents, offers a novel, non-invasive approach to brownfield modernization. By overlaying sophisticated AI interfaces, constructing semantic data layers, and orchestrating workflows through Robotic Process Automation (RPA), organizations can effectively give their oldest, most silent software a modern voice and an array of new capabilities.

This comprehensive analysis explores the strategic, financial, and technical methodologies for implementing AI within legacy enterprise systems. It addresses the fundamental inquiries shared by enterprise leaders: the absolute feasibility of applying AI to software deployed over a decade ago, the complex engineering required to automate systems entirely lacking APIs, and the profound, often hidden risks associated with allowing large language models to interact with legacy codebases.

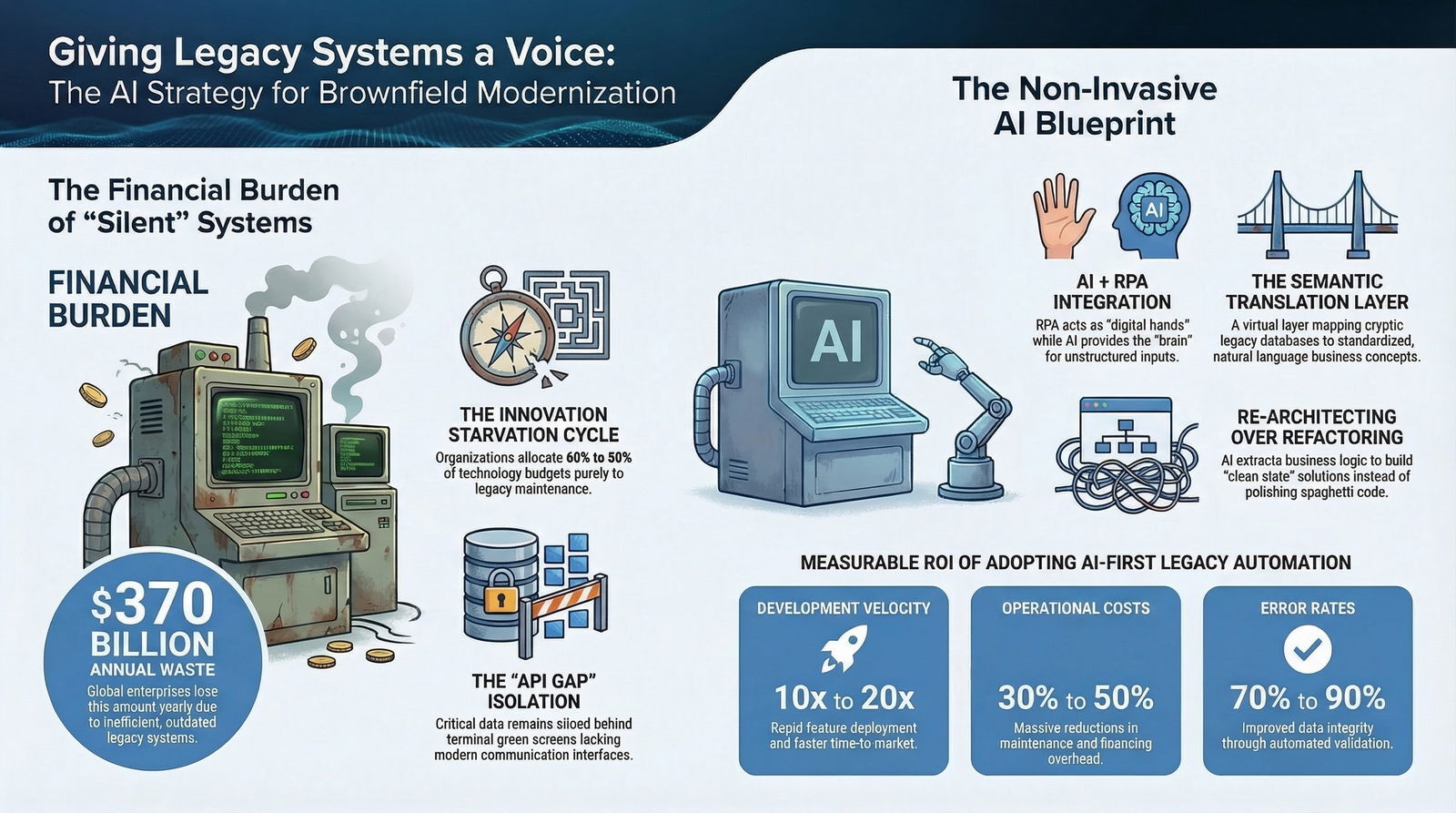

The Financial and Operational Gravity of the Brownfield Reality

To understand the urgency of brownfield modernization, it is necessary to examine the profound financial burden imposed by legacy technical debt. Legacy systems do not merely slow down operations; they actively consume capital that could otherwise fund strategic innovation, creating a cycle of stagnation that threatens competitive viability in a rapidly evolving digital marketplace.

The True Cost of Maintenance, Operations, and Technical Debt

The economic drain of outdated technology is staggering. Analyses indicate that the average global enterprise wastes approximately $370 million annually due to the inability to efficiently modernize outdated, inefficient legacy systems. The time invested in traditional, resource-heavy legacy transformation processes accounts for nearly $134 million of that waste, followed by $58 million annually on failed initiatives and $56 million dedicated solely to maintaining and updating obsolete applications.

Organizations currently allocate between 60% and 80% of their total technology budgets exclusively to the maintenance of legacy infrastructure. This disproportionate spending starves innovation and traps engineering talent in an endless cycle of "keeping the lights on." According to industry analyses, enterprises maintaining legacy systems spend up to 42% more on operational overhead compared to those that have modernized to supported cloud-native platforms. For a single, massive legacy system, the average cost to operate and maintain the architecture can reach $30 million annually, contributing to a global technical debt estimated at over $1.14 trillion. This is why many CFOs are rethinking the traditional build-versus-buy calculus and exploring strategies like AI-augmented custom development to turn software into a true balance-sheet asset instead of a cost sink.

Furthermore, IT teams spend an average of 17 hours per week—nearly half their working hours—simply maintaining these systems, with annual maintenance costs averaging $40,000 per legacy application. In sectors like manufacturing and utilities, this cost easily exceeds $53,000 per application.

Industry-Specific Vulnerabilities: Finance, Healthcare, and Beyond

The financial penalty of legacy software is uniquely amplified in highly regulated industries. In the banking and financial services sector, outdated core systems are projected to cost institutions up to $57 billion annually by 2028 due to outages, inefficiencies, and compliance failures. Financial institutions are finding that technical debt is not merely an IT concern, but a business imperative; the burden of outdated systems impairs the ability to innovate, slows down the adoption of crucial technologies like AI, and hinders the ability to leverage data for strategic decisions.

In healthcare, leaders face pressing imperatives to promote sustainable financial health, address patient affordability, and strengthen cybersecurity. Health systems have historically lagged behind other industries in technological maturity, leading to a fragmented patient financial experience and operational inefficiencies. A staggering 43% of health system executives state that their top priority for the coming years is investing in core business technology solutions, including enterprise resource planning (ERP) systems, electronic health records (EHR), and automation. The inability to easily extract and analyze patient data from legacy EHRs prevents the deployment of predictive AI that could otherwise reduce clinical errors and optimize care delivery.

Security, Downtime, and Compliance Risks

Beyond direct maintenance costs, legacy systems introduce severe vulnerabilities that can devastate an enterprise's reputation and bottom line. Outdated platforms often lack modern security protocols, such as native multi-factor authentication (MFA), advanced encryption, or real-time threat detection capabilities, rendering them prime targets for cyberattacks. Approximately 36% of businesses report increased vulnerabilities and an inability to handle advanced threats precisely because they are utilizing outdated technology.

Exploiting public-facing legacy applications is a primary breach method, and the consequences are costly. The average cost of a data breach for organizations with complex, legacy environments reaches $5.28 million, compared to $3.92 million for those with modernized, streamlined environments. In highly regulated sectors, the average cost of a breach spikes to $6.08 million.

When legacy systems fail under the strain of modern workloads, the financial impact is immediate. Legacy systems are four times more likely to experience downtime than modern architectures. Depending on the industry and the specific workload, this downtime costs enterprises between $100,000 and $540,000 per hour. Recovering data from these outdated systems can take three times longer than from modern cloud-native solutions, exacerbating the financial hemorrhage of every failure.

The following table illustrates the cascading priorities and challenges identified by IT professionals regarding legacy modernization in the current enterprise landscape:

| Modernization Driver | Percentage of IT Professionals Citing | Strategic Implication for Brownfield Environments |
|---|---|---|
| Improved Performance/Speed | 48% | Legacy latency is actively bottlenecking core business operations and real-time decision making. |
| Cloud-Based/Remote Access | 45% | Rigid on-premises boundaries prevent distributed workforce efficiency and modern collaboration. |
| Greater Scalability/Flexibility | 44% | Monolithic architectures cannot handle sudden market demands or horizontal scaling. |
| Integration with Modern Tools | 44% | The complete lack of APIs isolates legacy data from the broader organizational technology stack. |
| Security Vulnerabilities | 43% | Aging software lacks defenses against contemporary cyber threats and ransomware syndicates. |

The Paradigm of Non-Invasive Modernization: Feasibility on 15-Year-Old Software

A recurring and critical inquiry among business leaders is whether cutting-edge artificial intelligence can genuinely interface with software that was architected fifteen, twenty, or even thirty years ago. The answer is unequivocally affirmative, provided the organization abandons the traditional assumption that modernization absolutely requires altering or replacing the legacy source code.

The most successful approach to integrating AI with old software relies on the implementation of "non-invasive AI layers." Rather than engaging in a complex, multi-year re-platforming effort—which carries massive capital expenditure and perilously high risks of business disruption—enterprises are utilizing solutions that sit safely atop existing systems. This methodology allows organizations to extract information, orchestrate modern workflows, and apply generative AI capabilities without fundamentally altering the core legacy architecture, thereby drastically reducing the risk of system fragility and operational downtime.

CapEx vs. OpEx in Brownfield Development

Deciding between traditional legacy integration and modern AI-driven overlays is fundamentally a battle between Capital Expenditure (CapEx) and Operational Expenditure (OpEx).

Traditional legacy integration typically incurs low-to-medium upfront costs, primarily driven by middleware licensing and the manual development hours required to build rigid, point-to-point connections. However, the ongoing OpEx is phenomenally high due to the compounding "maintenance debt" of keeping aging servers alive, paying expensive older licensing fees, and retaining scarce specialists (such as COBOL, RPG, or legacy SQL administrators).

Conversely, non-invasive AI modernization shifts this financial paradigm. While implementing AI, robust data connectors, and cloud-native environments requires significant upfront investment for re-architecting the data flow and setting up infrastructure, the ongoing OpEx drops precipitously. Cloud-native environments reduce hardware dependencies and leverage pay-as-you-go models. Modern platforms eliminate the need for dedicated server maintenance and manual scaling processes, providing consumption-based pricing models that align costs with actual usage rather than peak capacity planning.
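The CapEx versus OpEx trade-off described above can be made concrete with a simple break-even calculation. The sketch below is illustrative only: the function names and the dollar figures are hypothetical and are not taken from any of the analyses cited in this article.

```python
def cumulative_cost(upfront: float, annual_opex: float, years: int) -> float:
    """Total spend after `years`: one-time upfront cost plus recurring OpEx."""
    return upfront + annual_opex * years


def break_even_year(legacy: tuple[float, float],
                    overlay: tuple[float, float],
                    horizon: int = 15):
    """Return the first whole year in which the overlay's cumulative cost is
    at or below the legacy option's, or None if that never happens within
    the horizon. Each option is an (upfront, annual_opex) pair."""
    for year in range(1, horizon + 1):
        if cumulative_cost(*overlay, year) <= cumulative_cost(*legacy, year):
            return year
    return None


# Hypothetical figures in $ millions, chosen only to show the shape of the curve.
legacy_integration = (1.0, 3.0)  # low upfront cost, high ongoing OpEx
ai_overlay = (5.0, 1.0)          # high upfront cost, low ongoing OpEx

print(break_even_year(legacy_integration, ai_overlay))
```

With these made-up inputs the overlay catches up in the second year; with real budgets the same comparison shows how quickly the higher upfront investment is recovered, if at all.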

The return on this investment is substantial and rapid. Financial services firms have reported an average 20% productivity gain across operations, including software development and customer service, simply by deploying generative AI to interface with their existing operational data. In broader manufacturing and B2B contexts, organizations deploying AI-first development and automation have reported ten to twenty times improvements in development velocity, accompanied by 30% to 50% reductions in operational costs. These outcomes line up closely with the emerging AI-native SDLC approach, which bakes AI into every stage of the lifecycle instead of treating it as an afterthought.

By targeting bounded, high-impact use cases—such as automating document classification, surfacing knowledge from legacy repositories, predicting maintenance schedules, or deploying autonomous agents for specific data-entry tasks—enterprises can achieve rapid agility without succumbing to the complexity of a total system overhaul.

The AI Layer as a Strategic Interface

For enterprises concerned with system fragility, the selected AI solution must support flexible deployment models. Intelligent platforms must be capable of running on-premises, in the cloud, or in hybrid setups to align with strict IT policies and infrastructure constraints. This allows the AI to function within the enterprise's existing security perimeter, effectively connecting to local, "messy," or siloed data repositories without exposing sensitive information to external networks.

The AI layer utilizes advanced Enterprise Search to extract insights from outdated content or proprietary formats. This technology provides precision and context-awareness, allowing the AI to "understand" and process information even when a single source of truth is absent in the fragmented legacy environment. Intelligent agents developed within these layers can detect issue patterns from historical support tickets, automate the classification of incoming vendor documents, and surface relevant knowledge directly to customer service representatives, all without disrupting the underlying core business operations. Architecturally, this aligns with the shift away from fragile "super-bots" toward orchestrated teams of specialized AI agents that handle narrow, well-governed tasks.

Giving a Voice to the API-less: Orchestrating the Unconnected

The fundamental technical hurdle of a brownfield application is isolation. Modern digital ecosystems communicate seamlessly via RESTful APIs, GraphQL, and event-driven webhooks. Legacy systems—such as the venerable IBM AS/400 (now IBM i), monolithic mainframes, or deeply customized, on-premises ERPs—were built in an era before these standards existed. They expect human operators to interact with command-line interfaces, rigid terminal screens, or direct, manual database queries.

To automate systems that lack modern APIs, organizations must synthesize Artificial Intelligence with Robotic Process Automation (RPA) and Event-Driven Architectures.

Bridging the Gap with RPA and AI Agents

Automation must be applied at the presentation or process layer, circumventing the need to rewrite or replace the core AS/400 or ERP system itself. Robotic Process Automation serves as the digital "hands" of the operation. Software bots are programmed to interact with the legacy system exactly as a human operator would—handling intricate screen navigation, executing keystrokes, extracting data directly from 5250 terminal emulation sessions (the classic "green screens" of the AS/400), and executing rule-based actions.

However, RPA alone is brittle; it relies on perfectly structured, unvarying inputs. If a terminal screen layout changes slightly, or if an incoming vendor invoice contains free-text communication rather than a structured table, a standard RPA bot will fail and require human intervention. This is precisely where Artificial Intelligence provides the essential "brain."

AI complements RPA by managing unstructured inputs and operational exceptions. Intelligent AI agents can read emails, classify complex PDF documents, utilize optical character recognition (OCR) combined with natural language understanding to extract the necessary variables, and format the data. The agent then hands this perfectly structured data to the RPA bot, which rapidly and flawlessly keys the information into the legacy interface. Together, AI and RPA allow a monolithic, isolated AS/400 system to operate as a seamless, high-speed node in a modern digital workflow, rather than acting as a continuous operational bottleneck.
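The handoff between the AI "brain" and the RPA "hands" can be sketched as two small functions. This is a minimal illustration under stated assumptions: a regular expression stands in for the OCR and language-model extraction step so that the example runs without a model, and the `Invoice` fields, the field order, and the `[TAB]`/`[ENTER]` tokens are hypothetical rather than any real bot's input format.

```python
import re
from dataclasses import dataclass


@dataclass
class Invoice:
    vendor_id: str
    invoice_no: str
    amount_cents: int


def extract_invoice(text: str) -> Invoice:
    """Stand-in for the AI extraction step. A real deployment would use
    OCR plus a language model; a regex is used here only so the sketch runs."""
    vendor = re.search(r"vendor\s*#\s*(\w+)", text, re.IGNORECASE)
    number = re.search(r"\b(INV-\d+)\b", text)
    amount = re.search(r"\$\s*([\d,]+)\.(\d{2})", text)
    if not (vendor and number and amount):
        # Exceptions go to a person instead of being keyed in half-complete.
        raise ValueError("missing required field; route to a human reviewer")
    cents = int(amount.group(1).replace(",", "")) * 100 + int(amount.group(2))
    return Invoice(vendor.group(1), number.group(1), cents)


def to_keystrokes(inv: Invoice) -> list[str]:
    """Render the structured record as the field-by-field input an RPA bot
    would type into the terminal screen (hypothetical field order)."""
    amount = f"{inv.amount_cents // 100}.{inv.amount_cents % 100:02d}"
    return [inv.vendor_id, "[TAB]", inv.invoice_no, "[TAB]", amount, "[ENTER]"]


email = "Hi, attached is INV-2207 from vendor #V1042, total due $1,250.00. Thanks!"
print(to_keystrokes(extract_invoice(email)))
```

Keeping extraction and keying separate means the brittle part (the screen layout) only ever receives validated, structured input, and anything the extractor cannot resolve is escalated rather than typed.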

Instead of staff manually running reports on the AS/400, exporting files, validating totals, and emailing results to stakeholders, AI-driven bots can schedule report runs, extract the data, perform complex validation checks, and distribute outputs automatically. RPA bridges the legacy system with cloud platforms such as Salesforce or modern ERPs by synchronizing data directly from green screens into modern applications, removing double entry and reducing data latency.

Generative AI Interfaces and Natural Language Translation

The integration goes beyond mere data entry automation. Advanced implementations overlay generative AI interfaces directly onto legacy applications. This innovation allows non-technical staff—such as sales representatives or marketing directors—to query complex, command-line-based ERPs using plain English.

When an executive asks a natural language question via a modern interface (like Slack or Microsoft Teams), the AI agent acts as a semantic translator. It interprets the human intent, translates the natural language into the rigid, archaic syntax or keystroke sequence required by the legacy system, executes the query through an RPA pipeline, and translates the terminal output back into a coherent, human-readable summary. Beyond simple queries, these autonomous agents can navigate legacy user interfaces to perform multi-step operations, such as updating a customer's address across multiple siloed databases simultaneously, effectively freeing human agents from mundane data entry.
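A rough sketch of this semantic-translator flow follows. It assumes a keyword matcher in place of the language model, and the command names (`ORDINQ`, `CRDINQ`) and the screen layout are invented for illustration; they do not correspond to any real system's commands.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    action: str
    customer_id: str


# Hypothetical allow-list: the agent can only emit commands defined here.
COMMAND_TEMPLATES = {
    "order_status": "ORDINQ CUST({customer_id})",
    "credit_limit": "CRDINQ CUST({customer_id})",
}


def interpret(question: str) -> Intent:
    """Stand-in for LLM intent classification (keyword matching only)."""
    q = question.lower()
    match = re.search(r"customer\s+(\d+)", q)
    if match is None:
        raise ValueError("no customer id found in the question")
    if "credit" in q:
        action = "credit_limit"
    elif "order" in q:
        action = "order_status"
    else:
        raise ValueError("unsupported request")
    return Intent(action, match.group(1))


def to_command(intent: Intent) -> str:
    """Translate the intent into the rigid syntax the legacy system expects."""
    return COMMAND_TEMPLATES[intent.action].format(customer_id=intent.customer_id)


def summarize(raw_screen: str) -> str:
    """Turn 'LABEL : value' terminal lines into one readable line."""
    pairs = (line.split(":", 1) for line in raw_screen.splitlines() if ":" in line)
    return "; ".join(f"{k.strip().lower()} = {v.strip()}" for k, v in pairs)


print(to_command(interpret("What is the order status for customer 4417?")))
print(summarize("CUST NO : 4417\nSTATUS  : SHIPPED\n"))
```

Routing every request through a fixed template table is one way to keep the model from issuing arbitrary commands: it chooses among known intents instead of writing terminal input freehand.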

Shifting from Batch Processing to Event-Driven Agility

Legacy systems are historically constrained by batch processing—large, resource-intensive jobs that run overnight to synchronize data or generate reports. In a modern B2B environment, data latency is unacceptable; decisions must be made in real-time.

To overcome this, engineers implement intelligent orchestration workflows (using platforms like n8n or proprietary integration hubs) and event-driven architectures. Instead of waiting for a nightly batch job, event listeners are deployed to monitor the legacy database or message queues for changes. When an event occurs—for instance, a new sales order is created in a 20-year-old SQL database—it instantly triggers a sophisticated workflow. In real-time, the automation can update a modern cloud CRM, trigger a Slack notification to the regional sales team, and generate a PDF invoice to be emailed to the client. This event-driven responsiveness effectively forces decades-old technology to operate at the speed of modern commerce, without changing the underlying legacy code.
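One simple way to approximate an event listener over a database that cannot emit events itself is to poll with a high-water mark. The sketch below uses an in-memory SQLite database as a stand-in for the legacy SQL database; the `sales_order` table, its columns, and the two handlers are hypothetical. Real deployments might prefer database triggers, change data capture, or a message queue where those are available.

```python
import sqlite3
from typing import Callable

Handler = Callable[[dict], None]


def poll_new_orders(conn: sqlite3.Connection, last_seen_id: int,
                    handlers: list[Handler]) -> int:
    """Fetch rows newer than the high-water mark, fan each one out to every
    handler, and return the new high-water mark."""
    rows = conn.execute(
        "SELECT id, customer, total FROM sales_order WHERE id > ? ORDER BY id",
        (last_seen_id,),
    ).fetchall()
    for order_id, customer, total in rows:
        event = {"id": order_id, "customer": customer, "total": total}
        for handler in handlers:
            handler(event)
        last_seen_id = order_id
    return last_seen_id


# Demo: an in-memory database standing in for the legacy system.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales_order (id INTEGER PRIMARY KEY, customer TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO sales_order (customer, total) VALUES (?, ?)",
    [("Acme", 1200.0), ("Globex", 450.0)],
)

crm_updates, notifications = [], []
handlers = [
    lambda e: crm_updates.append(e["id"]),  # stand-in for a CRM sync
    lambda e: notifications.append(f"New order from {e['customer']}"),  # stand-in for a chat alert
]

mark = poll_new_orders(conn, 0, handlers)
print(mark, notifications)
```

Because the listener only reads from the table, the legacy application itself is untouched; calling `poll_new_orders` again with the returned mark processes only rows inserted since the previous call.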

Giving Databases a Voice: Semantic Layers and Text-to-SQL

A significant portion of enterprise technical debt resides in massive, aging relational databases—specifically early iterations of Microsoft SQL Server, PostgreSQL, or proprietary legacy data stores. While these systems may not require terminal emulation, they are often heavily siloed, poorly documented, and riddled with complex schemas, bizarrely named columns (e.g., USR_FLG_01), and implicit relationships that have evolved through decades of patchwork updates.

The ultimate goal of brownfield modernization is to allow AI agents to read and write to these databases directly, enabling Text-to-SQL capabilities. In this scenario, an executive can type a natural language question (e.g., "What were our premium customer revenue trends last quarter?") and receive an instant, accurate data visualization without requiring a data analyst to write a single line of SQL.

However, connecting a Large Language Model (LLM) directly to a raw, undocumented legacy database is a recipe for catastrophic failure. Standard text-to-SQL technology often misses the business context buried in weirdly named columns or implicit relationships. The AI will fail to understand the proprietary business logic, resulting in syntactically correct but factually inaccurate SQL queries. Many teams discover the hard way that generic vector-search style RAG is not enough here; you need production-grade SQL agents built for numbers and relational logic to get safe, auditable answers from financial or operational data.

The Necessity and Construction of the Semantic Layer

To resolve this critical translation issue, engineers construct a "Semantic Layer." At its core, a semantic layer is a virtual translation layer that sits between the physical, legacy data warehouse and the data consumers (whether they are AI agents, Business Intelligence tools, or end-users).

Instead of forcing the AI to decipher a database containing thousands of cryptically named tables, the semantic layer abstracts the complexity. It maps raw tables, foreign keys, and complex joins to core, standardized business concepts. Building this layer requires a methodical, step-by-step approach that prioritizes business metrics over raw data models:

Assess Existing Architecture: Map the current data sources, legacy warehouses, and existing reporting tools.

Identify Core Metrics: Stakeholders from Sales, Finance, and Operations must identify and standardize core business metrics, agreeing on the exact mathematical calculation for metrics like "Revenue" or "Active Customer".

Define Dimensions and Relationships: Engineers map the dimensions (time, geography, product lines) and the complex relationships (joins, hierarchies) that support those core metrics.

Centralize Governance: Establish robust access controls to ensure the AI agent cannot access restricted tables or execute destructive write commands.

Dynamic Query Rewriting: When an AI agent requests a business metric (e.g., average_order_value), the semantic layer automatically translates the simple business request into the complex, optimized SQL required by the legacy database.

This ensures that the AI operates with a single, governed source of truth, guaranteeing consistent business definitions across all analytics tools and preventing the LLM from hallucinating data structures.
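The query-rewriting and governance steps above can be sketched in a few lines. This is a toy illustration, not a production semantic layer: the table name `TBL_ORD_HDR`, the column `ORD_TOT_AMT`, and the meaning given to `USR_FLG_01` are assumptions made for the example, and SQLite stands in for the legacy database.

```python
import sqlite3

# Governed metric definitions: business name -> vetted SQL.
# Physical names and the 'A' = active convention are hypothetical.
METRICS = {
    "average_order_value":
        "SELECT AVG(ORD_TOT_AMT) FROM TBL_ORD_HDR WHERE USR_FLG_01 = 'A'",
    "active_order_count":
        "SELECT COUNT(*) FROM TBL_ORD_HDR WHERE USR_FLG_01 = 'A'",
}


def compile_metric(name: str) -> str:
    """Translate a business metric name into its governed SQL."""
    if name not in METRICS:
        raise KeyError(f"unknown metric: {name!r}")
    return METRICS[name]


def assert_read_only(sql: str) -> None:
    """Coarse guard: allow a single SELECT statement and nothing else."""
    statement = sql.strip().rstrip(";")
    if ";" in statement or not statement.upper().startswith("SELECT "):
        raise PermissionError("only single SELECT statements are allowed")


def run_metric(conn: sqlite3.Connection, name: str):
    """What the AI agent calls: it names a metric and never writes SQL."""
    sql = compile_metric(name)
    assert_read_only(sql)
    return conn.execute(sql).fetchone()[0]


# Demo data standing in for the legacy order table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE TBL_ORD_HDR (ORD_TOT_AMT REAL, USR_FLG_01 TEXT)")
conn.executemany("INSERT INTO TBL_ORD_HDR VALUES (?, ?)",
                 [(100.0, "A"), (300.0, "A"), (999.0, "X")])

print(run_metric(conn, "average_order_value"))
```

The string check in `assert_read_only` is only a second line of defense. In practice the stronger control is a database account that has read-only permissions on the approved tables and nothing else.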

Knowledge Graphs for Deep Context

For highly complex legacy SQL databases lacking explicit foreign key constraints, advanced implementations utilize Knowledge Graphs (such as Neo4j) to map the database schema.

In this architecture, a framework builds a graph where nodes represent databases, schemas, tables, and columns. Generative AI is used to synthesize properties for these nodes, adding plain-English business descriptions and identifying implicit relationships. For example, the knowledge graph can recognize that users.email_address in an older table is semantically equivalent to employees.contact_email in a newer table, despite lacking a hard-coded constraint. The resulting knowledge graph serves as a human- and machine-readable map. Before attempting to write a complex SQL query, the AI agent first queries the knowledge graph to understand the exact context, relationships, and structure of the legacy data, ensuring accuracy.
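To make the idea tangible without a graph database, the sketch below models the schema map with plain Python dictionaries. It is a stand-in for what a product such as Neo4j would store: the `SchemaGraph` class and its method names are hypothetical, and the column descriptions are written by hand here rather than generated by a model.

```python
from collections import defaultdict, deque


class SchemaGraph:
    """Tiny in-memory stand-in for a schema knowledge graph."""

    def __init__(self) -> None:
        self.descriptions = {}           # column -> plain-English description
        self.same_as = defaultdict(set)  # column -> semantically equal columns

    def add_column(self, column: str, description: str) -> None:
        self.descriptions[column] = description

    def link_equivalent(self, a: str, b: str) -> None:
        """Record an implicit relationship the database does not enforce."""
        self.same_as[a].add(b)
        self.same_as[b].add(a)

    def equivalents(self, column: str) -> set:
        """All columns reachable through same-meaning links (breadth-first)."""
        seen, queue = {column}, deque([column])
        while queue:
            for neighbor in self.same_as[queue.popleft()]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        return seen - {column}

    def context_for(self, column: str) -> str:
        """Text an agent could read before it attempts to write SQL."""
        lines = [f"{column}: {self.descriptions.get(column, 'no description')}"]
        lines += [f"same meaning as {c}" for c in sorted(self.equivalents(column))]
        return "\n".join(lines)


graph = SchemaGraph()
graph.add_column("users.email_address", "Login email for a staff account")
graph.add_column("employees.contact_email", "Work email of an employee")
graph.link_equivalent("users.email_address", "employees.contact_email")

print(graph.context_for("users.email_address"))
```

The agent consults `context_for` first, so a join between the older and newer tables is based on a recorded relationship rather than a guess from column names.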

Orchestration: LangChain vs. LlamaIndex for Legacy SQL

When connecting these on-premises databases to modern AI applications, developers generally rely on advanced orchestration frameworks. The two dominant frameworks are LangChain and LlamaIndex, and the choice between them dictates the capabilities of the modernization solution.

| Framework Feature | LangChain | LlamaIndex |
|---|---|---|
| Primary Use Case | Building complex AI workflows, autonomous agents, and tool chaining. | Advanced search, precise data indexing, and Retrieval-Augmented Generation (RAG). |
| Data Structure Handling | Capable of handling complex data structures with a highly modular interface and multimodal support. | Highly efficient at semantic similarity and deep retrieval over large, static document collections. |
| Workflow Control | Excels at orchestration, allowing agents to query databases, evaluate results, and trigger external APIs. | Focuses heavily on the ingestion, structuring, and retrieval of information. |
| Application in Brownfield | Ideal for creating agents that must read from legacy SQL, calculate a result, and write back to a CRM system. | Ideal for creating an internal search engine to query thousands of legacy PDF manuals or unstructured reports. |

For a brownfield application where an AI agent must not only query an on-premises SQL Server but also evaluate the result, interact with a calculator tool, and then format a message for a modern SaaS platform, LangChain provides the necessary operational flexibility. Modern enterprise tools, such as Model Context Protocol (MCP) servers, can securely expose an on-premises SQL Server to LangChain workflows, removing the need for complex Extract, Transform, Load (ETL) pipelines and enabling real-time, bidirectional interaction with live data.
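The query, calculate, and format pattern can be illustrated without committing to any framework. The sketch below is deliberately framework-agnostic plain Python and does not use the LangChain or MCP APIs; the tool names, the hard-coded order amounts, and the fixed plan are all hypothetical. In an agent framework, the model would choose which tool to call next instead of following a fixed list.

```python
from typing import Any, Callable


def query_orders(_: Any) -> list[float]:
    """Stand-in for a read against the on-premises SQL Server."""
    return [120.0, 80.0, 100.0]


def calculate_total(amounts: list[float]) -> float:
    """Stand-in for the calculator tool."""
    return sum(amounts)


def format_message(total: float) -> str:
    """Stand-in for formatting a message for a SaaS platform."""
    return f"Open order total: ${total:,.2f}"


# Tool registry: the only operations the workflow is allowed to perform.
TOOLS: dict[str, Callable[[Any], Any]] = {
    "query_orders": query_orders,
    "calculate_total": calculate_total,
    "format_message": format_message,
}


def run_chain(plan: list[str], initial: Any = None) -> Any:
    """Run each named tool in order, feeding each output to the next tool."""
    value = initial
    for step in plan:
        value = TOOLS[step](value)
    return value


print(run_chain(["query_orders", "calculate_total", "format_message"]))
```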

The Architectural Trap: Risks of Touching Legacy Code with AI

While using AI as a non-invasive semantic layer or RPA orchestrator is highly effective, enterprise leaders often ask a more perilous question: What is the fundamental risk of using AI to directly touch, refactor, or rewrite the legacy codebase itself?

The temptation to paste a five-year-old, monolithic "spaghetti code" file into an AI coding assistant and demand it be "made clean" is immense. However, directly applying generative AI to legacy codebases introduces profound, systemic risks regarding code quality, organizational security, and intellectual property.

Hallucinations, Bias, and Security Vulnerabilities

AI models are not inherently factual engines; they are probabilistic text generators based on pattern recognition. When tasked with refactoring complex, poorly documented legacy code, models are highly prone to "hallucinations"—fabricating functions, variables, or logic paths entirely. Relying blindly on AI-generated code, without rigorous human oversight, invites critical bugs, architectural flaws, and performance degradation into production environments.

Furthermore, AI models are trained on vast public code repositories that contain historical vulnerabilities and deprecated practices. If unguided, generative AI tools can inadvertently introduce severe security flaws into the newly generated code, such as incorrect input validation, weak encryption protocols, or insecure access controls. In highly regulated sectors like finance or healthcare, using unsupported or flawed software presents a severe risk to national economic stability, patient privacy, and regulatory compliance. These are the kinds of risks that have led many boards to demand stronger AI governance and technical-debt oversight before greenlighting large-scale automation.

Additionally, the intellectual property (IP) and copyright implications remain incredibly murky. AI algorithms trained on public data raise unresolved questions about code ownership. If an organization feeds proprietary legacy algorithms into a public LLM without strict data governance and privacy controls, there is a severe risk of exposing proprietary trade secrets and customer data to the public domain, resulting in major compliance violations.

The Refactoring Fallacy: Polishing Spaghetti

The most insidious risk of using AI to refactor legacy code is the illusion of improvement. When an AI receives flawed, convoluted code, it is fundamentally biased by the technical structure it was provided. The model will attempt to preserve the original logic flow, the outdated variable naming conventions, and the structural architecture—even if that architecture was inherently defective or built on flawed assumptions.

The result is rarely a modernized, scalable system; it is merely a polished, syntax-updated version of the exact same bad architecture. The technical debt is not eliminated; it is simply translated into a newer programming language.

The Solution: Reverse Engineering and the "Clean Slate" Build

To safely and effectively utilize AI for legacy code modernization, engineering teams must abandon the concept of direct refactoring and embrace "Re-Architecting." This is achieved through a deliberate, two-step reverse engineering process that leverages the analytical reasoning power of the LLM without inheriting the flaws of the legacy code.

Step 1: Extract the Intent (The "What"): Instead of asking the AI to fix the code, developers instruct the AI to completely ignore the existing technical structure. The AI is tasked solely with analyzing the legacy file to extract the pure business logic, rules, and outcomes. The output of this step is a high-level Business Requirement Document (BRD). This methodology strips away the decades of technical debt, leaving only the technology-agnostic intent of the software.

Step 2: The "Clean Slate" Build (The "How"): The engineering team then takes the freshly generated, technology-agnostic BRD and feeds it into a new AI prompt acting as a "Master Architect". The AI is instructed to build a new solution from scratch, using modern best practices, scalable microservices architectures, and current security standards to satisfy the specific requirements of the BRD.

This reverse-engineering methodology ensures that the organization does not carry the "ghosts" and mistakes of previous developers into the new system. It allows for seamless migration (e.g., moving from legacy Java to a modern Node.js backend) while guaranteeing that the new system is built on a foundation of modern design principles.
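The two-step process can be expressed as a small pipeline in which the second prompt never sees the legacy source. This is a sketch under assumptions: `llm` is any callable that takes a prompt string and returns text (no particular vendor API is implied), the prompt wording is illustrative, and `fake_llm` exists only so the example runs offline.

```python
from typing import Callable

LLM = Callable[[str], str]


def extract_intent(legacy_source: str, llm: LLM) -> str:
    """Step 1: ask only for business rules, not for a code fix."""
    prompt = (
        "Ignore the technical structure of the code below. Describe only the "
        "business rules, inputs, and outcomes as a technology-agnostic "
        "Business Requirement Document.\n\n" + legacy_source
    )
    return llm(prompt)


def clean_slate_build(brd: str, target_stack: str, llm: LLM) -> str:
    """Step 2: build from the BRD alone; the legacy code is not passed in."""
    prompt = (
        f"Act as a master architect. Design and implement a new {target_stack} "
        "solution from scratch that satisfies these requirements, using "
        "current security and design practices.\n\n" + brd
    )
    return llm(prompt)


def modernize(legacy_source: str, target_stack: str, llm: LLM) -> str:
    brd = extract_intent(legacy_source, llm)
    # A human review of the BRD would normally happen here.
    return clean_slate_build(brd, target_stack, llm)


# Offline demo with a fake model that records what it was asked.
calls = []


def fake_llm(prompt: str) -> str:
    calls.append(prompt)
    if len(calls) == 1:
        return "BRD: orders over 1000 receive a 5% discount."
    return "// new implementation"


legacy = "IF AMT > 1000 THEN DISC = AMT * 0.05"
print(modernize(legacy, "Node.js", fake_llm))
```

Because `clean_slate_build` receives only the BRD, the old control flow and naming cannot leak into the new design; the gap between the two calls is also a natural point for engineers to review and correct the extracted requirements.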

Real-World ROI: Case Studies in B2B Modernization

The theoretical frameworks of semantic layers, RPA, AI agents, and reverse engineering translate into profound, measurable financial and operational impacts across global B2B sectors. When deployed correctly, these technologies shift IT departments from burdensome cost centers to dynamic innovation powerhouses.

In the retail and logistics sector, for instance, a global enterprise successfully integrated their highly isolated IBM i systems with their modern e-commerce platform using REST APIs and integration hubs, resulting in a staggering 40% increase in the speed of order processing. In healthcare, institutions have linked massive, legacy patient data repositories with advanced AI diagnostic tools, accelerating decision-making processes and significantly reducing clinical errors, while also addressing the systemic burnout associated with manual data entry.

Within B2B sales and marketing, the deployment of AI agents to interface with historical, on-premises CRM data is fundamentally altering the commercial landscape. Intelligent agents automate the extraction of insights from complex sales histories and orchestrate Go-To-Market strategies. This yields an additional 20% to 30% in new opportunities contacted and boosts conversion rates from outreach by up to 30%. Beyond the raw financial spreadsheet, the returns manifest in more predictable market forecasting based on real market signals, reduced employee burnout from wasted effort on dead-end prospects, and vastly improved cycle times.

In manufacturing contexts, automating these repetitive tasks has driven employee productivity gains of up to 40%, while error rates in compliance and data handling have plummeted by over 75%.

The following table summarizes the typical Return on Investment (ROI) metrics experienced by enterprises adopting AI-first development and legacy automation:

| Metric Category | Typical Improvement Range | Business Impact |
|---|---|---|
| Development Velocity | 10x to 20x improvement | Rapid deployment of new features and faster time-to-market. |
| Infrastructure Costs | 50% to 80% savings | Elimination of redundant servers, cooling costs, and expensive legacy licenses. |
| Bug Rate / Error Rate | 70% to 90% reduction | Improved data integrity and compliance through automated validation. |
| Productivity Gains | 20% to 40% increase | Employees focus on high-value strategic tasks rather than manual data entry. |

The ultimate economic potential of generative AI, particularly when used to bridge the gap between human operators and legacy data stores, is monumental. Macroeconomic analyses suggest that by accelerating coding processes, automating workflow integrations, and providing immediate access to structured insights, AI could increase global GDP by 1.5% by 2035, fundamentally altering the trajectory of enterprise efficiency over the next decade.

Strategic Execution and the Enterprise Blueprint

The transition from a siloed, high-cost brownfield environment to an agile, AI-integrated enterprise is not merely a technological upgrade; it is a complex strategic maneuver that requires meticulous execution. Organizations navigating this complexity require partners who understand both the rigid, uncompromising constraints of legacy systems and the expansive, rapidly evolving frontier of modern artificial intelligence.

Firms specializing in custom software development and sophisticated application management, such as Baytech Consulting, represent the ideal blueprint for this execution. Success in modernizing deeply entrenched systems relies heavily on core differentiators like tailored technological advantages and rapid, agile deployment methodologies. Rather than forcing a generic, one-size-fits-all product onto a fragile legacy architecture, specialized engineering teams custom-craft solutions that integrate seamlessly with the organization's specific operational realities. Highly skilled engineers ensure enterprise-grade quality, providing on-time delivery while maintaining clear communication throughout the transformation process.

The technical stack required to support these modern AI overlays is robust and versatile. Whether an organization is deploying entirely on-premises to satisfy strict data sovereignty requirements or utilizing hybrid cloud infrastructures, the architecture must be unassailable. Utilizing enterprise-grade tools—ranging from Azure DevOps On-Prem, VS Code/VS 2022, PostgreSQL, and SQL Server for data integrity, to Kubernetes and Docker for scalable containerization, managed via Harvester HCI and Rancher—ensures that the new AI capabilities are securely anchored. This level of highly skilled engineering ensures that intelligent layers can read and write to legacy databases without compromising the security or stability of the core business operations. Many enterprises are standardizing around a .NET- and Azure-first AI stack for exactly this reason: it fits their existing Microsoft ecosystem while adding modern agent frameworks and observability.

Ultimately, the goal is not to destroy the brownfield application, but to cultivate it. By strategically deploying non-invasive AI agents, RPA orchestration, and robust semantic layers, B2B enterprises can preserve the reliability of their decades-old software while granting it the voice, agility, and intelligence required to dominate a modern digital economy. This also means being realistic about how much work should be handed to machines. Architectures that lean on a 90% automation, 10% human-in-the-loop model help balance speed with oversight, so legacy modernization does not create new risks even as it removes old ones.
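One way to picture the split between automated and human-reviewed work is a simple confidence gate. The sketch below is illustrative only: `ProposedAction`, `REVIEW_THRESHOLD`, and `automation_share` are hypothetical names, and the 0.90 threshold is an assumption chosen for the example, not a value prescribed by any framework. The 90/10 mix is an outcome to measure, so the code reports the share that actually ran unattended.

```python
from dataclasses import dataclass
from typing import List

# Assumed cut-off for this sketch: below it, a person reviews the action.
REVIEW_THRESHOLD = 0.90


@dataclass
class ProposedAction:
    description: str
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


def route(action: ProposedAction) -> str:
    """Return 'auto' to run unattended or 'human' to queue for review."""
    return "auto" if action.confidence >= REVIEW_THRESHOLD else "human"


def automation_share(actions: List[ProposedAction]) -> float:
    """Fraction of actions that would run without human review."""
    if not actions:
        return 0.0
    automated = sum(1 for a in actions if route(a) == "auto")
    return automated / len(actions)


if __name__ == "__main__":
    batch = [ProposedAction(f"routine update {i}", 0.97) for i in range(9)]
    batch.append(ProposedAction("ambiguous invoice match", 0.60))
    for action in batch:
        print(route(action), "-", action.description)
    print("automation share:", automation_share(batch))
```

Tracking the measured share over time gives a team a concrete signal for whether the threshold is too strict (reviewers overloaded) or too loose (too little oversight).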

Frequently Asked Questions

Can AI be utilized if an enterprise software system is 15 years old?

Yes. The most effective strategy is not to rewrite the 15-year-old code, but to implement a non-invasive AI layer that sits on top of the existing infrastructure. By leveraging flexible deployment models (cloud, on-premises, or hybrid) and AI agents, organizations can extract insights, query data, and automate workflows without fundamentally altering the core legacy architecture or risking massive business disruption.

How do organizations automate legacy systems that completely lack modern APIs?

When APIs are absent, systems are automated by combining Artificial Intelligence with Robotic Process Automation (RPA). RPA acts as the digital hands, interacting directly with legacy user interfaces (such as AS/400 5250 terminal screens) exactly as a human would to input or extract data. The AI acts as the brain, processing unstructured inputs, interpreting natural language requests, and orchestrating complex logic to guide the RPA bots seamlessly, turning isolated systems into connected nodes.
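The brain-and-hands split described above can be sketched in a few lines. Everything here is a stand-in: `FakeGreenScreen` simulates a terminal session in memory (a real bot would drive a terminal emulator or an RPA tool), the `INQ` command and screen layout are invented for the example, and a regular expression plays the role a language model would play in extracting the order number from a request.

```python
import re
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class FakeGreenScreen:
    """In-memory stand-in for a legacy terminal session (hypothetical)."""
    orders: Dict[str, str]
    screen: str = "MAIN MENU"
    _buffer: str = ""

    def type_text(self, text: str) -> None:
        self._buffer += text

    def press_enter(self) -> None:
        # "INQ <order id>" mimics an order inquiry command on the screen.
        match = re.fullmatch(r"INQ (\w+)", self._buffer)
        self._buffer = ""
        if match and match.group(1) in self.orders:
            order_id = match.group(1)
            self.screen = f"ORDER {order_id} STATUS: {self.orders[order_id]}"
        else:
            self.screen = "ERROR: ORDER NOT FOUND"


def interpret_request(request: str) -> Optional[str]:
    """'Brain' step: pull an order id out of free text (regex as LLM stand-in)."""
    match = re.search(r"order\s+#?(\w+)", request, flags=re.IGNORECASE)
    return match.group(1).upper() if match else None


def lookup_order_status(request: str, terminal: FakeGreenScreen) -> str:
    order_id = interpret_request(request)
    if order_id is None:
        return "Could not find an order number in the request."
    # 'Hands' step: send keystrokes, then scrape the resulting screen text.
    terminal.type_text(f"INQ {order_id}")
    terminal.press_enter()
    scraped = re.fullmatch(r"ORDER (\w+) STATUS: (.+)", terminal.screen)
    if scraped is None:
        return f"The legacy system has no record of order {order_id}."
    return f"Order {scraped.group(1)} is {scraped.group(2).lower()}."


if __name__ == "__main__":
    terminal = FakeGreenScreen(orders={"A1001": "SHIPPED"})
    print(lookup_order_status("What is the status of order A1001?", terminal))
```

The legacy system is only ever touched through its existing screen, which is what makes the approach non-invasive. The trade-off is that screen scraping depends on the screen layout staying stable.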

What is the inherent risk of touching or refactoring legacy code with generative AI?

Directly applying AI to refactor legacy code is highly risky due to AI hallucinations, the introduction of security vulnerabilities, and intellectual property concerns. Furthermore, AI tends to simply translate bad architecture into newer languages, polishing the "spaghetti code" rather than fixing the underlying structural issues. The safer method is to use AI to reverse engineer the code, extracting only the pure business logic into a technology-agnostic requirements document, and then to use that document to architect a brand new, clean-slate solution.

How should we think about AI costs when adding these layers on top of legacy systems?

While AI can dramatically accelerate workflows, poorly designed deployments can generate runaway API bills. Enterprises modernizing brownfield systems should design for efficiency from day one, using tactics like model routing, semantic caching, and prompt compression, to avoid paying a "token tax" on every query. A structured approach, like the one outlined in Baytech's guide on optimizing LLM costs, helps keep modernization ROI-positive over the long term.
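The three cost tactics named above can be illustrated with a toy pipeline. This is a sketch under loud assumptions: the model names are placeholders, the 20-word routing rule and the filler-word list are arbitrary, and the "semantic" cache uses word-overlap (Jaccard) similarity as a stand-in for the embedding comparison a production system would use.

```python
import re
from typing import Callable, List, Optional, Set, Tuple

FILLER = {"please", "kindly", "just", "basically"}  # assumed filler words


def compress_prompt(prompt: str) -> str:
    """Crude prompt compression: collapse whitespace, drop filler words."""
    kept = [w for w in prompt.split() if w.lower().strip(",.") not in FILLER]
    return " ".join(kept)


def pick_model(prompt: str) -> str:
    """Model routing: short prompts go to a cheaper (hypothetical) tier."""
    return "small-model" if len(prompt.split()) <= 20 else "large-model"


def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard word overlap, standing in for embedding similarity."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold
        self._entries: List[Tuple[str, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        for cached_prompt, cached_answer in self._entries:
            if similarity(prompt, cached_prompt) >= self.threshold:
                return cached_answer
        return None

    def put(self, prompt: str, answer: str) -> None:
        self._entries.append((prompt, answer))


def answer(prompt: str, cache: SemanticCache,
           call_model: Callable[[str, str], str]) -> Tuple[str, str]:
    """Return (answer, source), where source is 'cache' or the model used."""
    compact = compress_prompt(prompt)
    cached = cache.get(compact)
    if cached is not None:
        return cached, "cache"
    model = pick_model(compact)
    result = call_model(model, compact)
    cache.put(compact, result)
    return result, model


if __name__ == "__main__":
    def fake_model(model: str, prompt: str) -> str:
        return f"[{model}] answer to: {prompt}"

    cache = SemanticCache()
    print(answer("Please show total sales for region west in 2024", cache, fake_model))
    print(answer("show total sales in 2024 for region west", cache, fake_model))
```

In the usage example the second question is a reworded repeat of the first, so it is served from the cache and no model call is billed.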

Is this type of AI modernization only for large enterprises, or can startups benefit too?

Startups that rely on a legacy vendor platform or inherited systems face similar constraints, just at a smaller scale. For them, AI-driven overlays can be a way to move faster than competitors while avoiding a risky full rewrite. The key is to treat AI as part of the product and moat strategy, not just a wrapper on top of someone else's data. Baytech's playbook on building durable AI moats is especially relevant for founders who need their modernization work to translate into long-term defensibility.

Supporting Articles

- https://www.allganize.ai/en/blog/guide-to-implementing-ai-in-legacy-systems-without-losing-your-mind

- https://www.itconvergence.com/blog/cost-of-maintaining-legacy-systems-vs-one-fully-supported/

- https://www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/unlocking-profitable-b2b-growth-through-gen-ai

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.