Secure AI Code: A 7-Stage Regulatory Compliance Framework

April 07, 2026 / Bryan Reynolds

A Practical Framework for Approving AI-Generated Code in Regulated Enterprises

The intersection of generative artificial intelligence and enterprise software development has triggered a profound operational shift. Engineering organizations across the globe are adopting large language models (LLMs) to automate boilerplate generation, accelerate complex refactoring, and dramatically reduce time-to-market. However, for organizations operating within the stringent confines of regulated industries—such as finance, healthcare, telecommunications, and high-tech—this acceleration introduces a critical tension. The demand for rapid innovation directly collides with the non-negotiable requirements of regulatory compliance, software governance, and audit readiness.

The core challenge facing B2B enterprises is no longer deciding whether to adopt AI-assisted coding, but rather determining how to govern it effectively. When developers engage in "vibe coding"—the practice of relying on an AI assistant to generate functional application logic without explicitly defining the underlying security and compliance requirements—they inadvertently shift critical architectural decisions to probabilistic models. This delegation creates an immense volume of security debt if left unchecked. Regulated enterprises require a structured, practical approval framework that enables the safe utilization of AI-generated code while satisfying the strict oversight demands of regulatory bodies, compliance officers, and internal audit teams.

Following the principles of absolute transparency and comprehensive analysis, this report provides a definitive blueprint for establishing an AI code approval process. It examines the empirical vulnerability rates of AI-generated code, explores the global regulatory landscape affecting specific industry sectors, defines the foundational governance frameworks, and outlines a rigorous seven-stage pipeline for securing the modern software development lifecycle.

The Empirical Reality: Analyzing AI-Generated Code Vulnerabilities

To architect a functional governance framework, an enterprise must first possess a clear, empirical understanding of the specific risk profile introduced by the technology. The prevalent assumption that syntactically correct code generated by an AI is inherently secure represents a dangerous fallacy in modern software engineering.

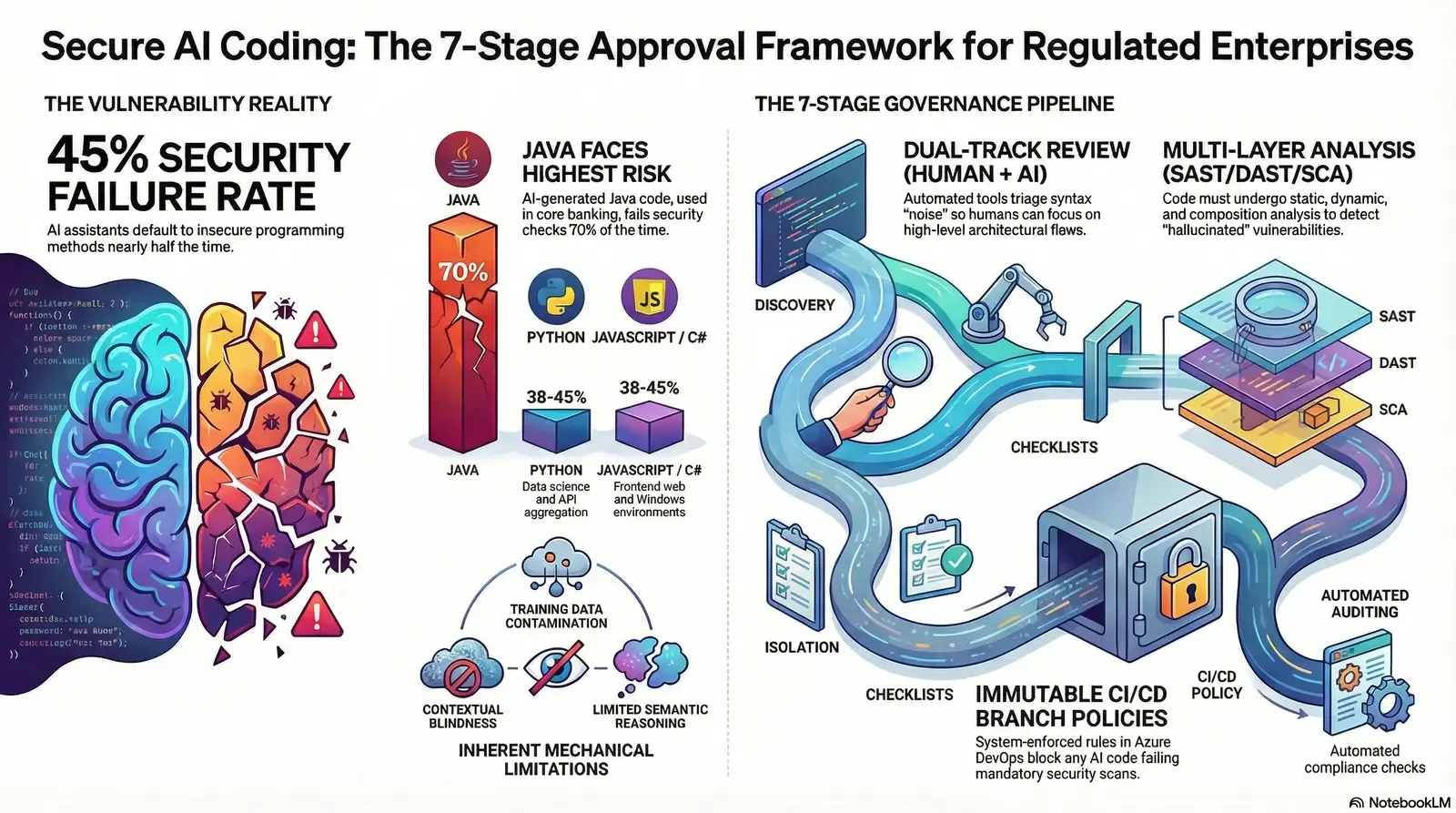

The Mathematics of AI Code Failure

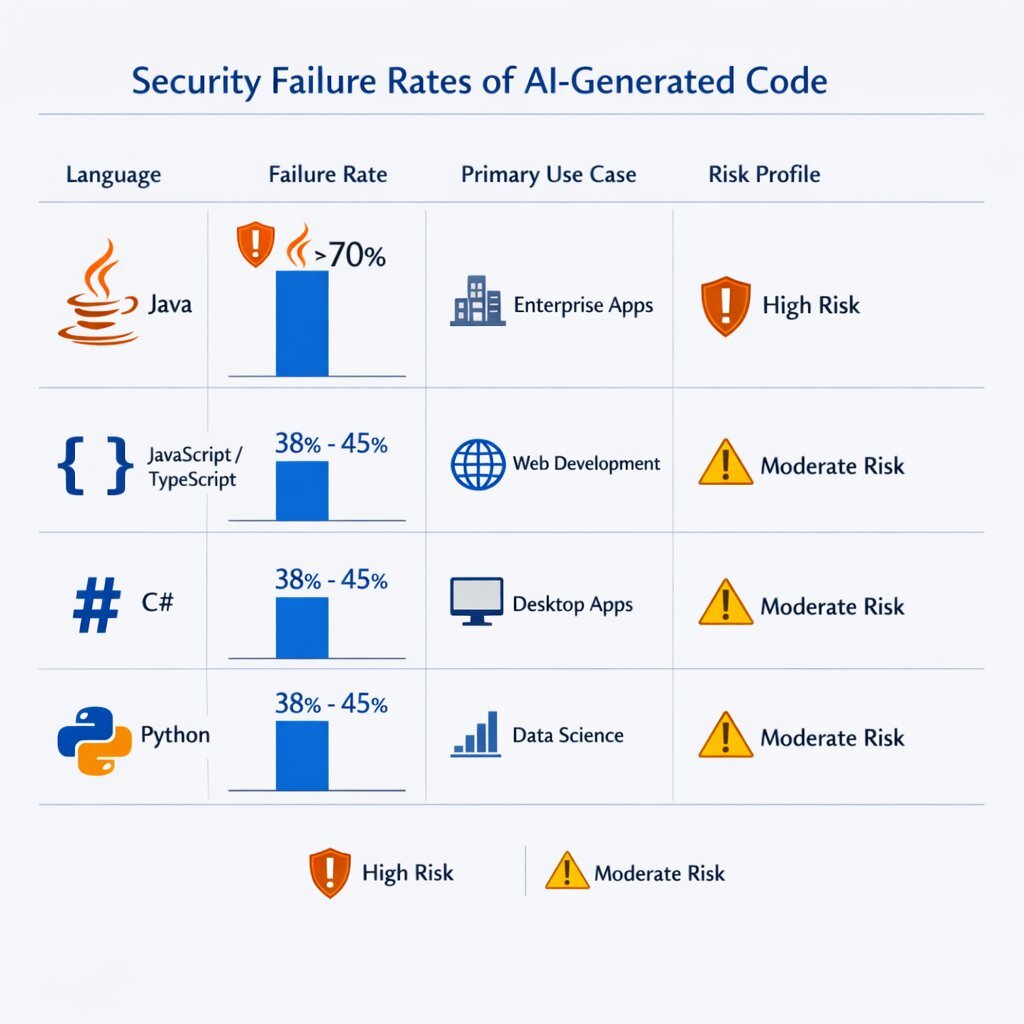

Extensive analysis of AI-generated code reveals a stark statistical reality regarding its security posture. According to the 2025 GenAI Code Security Report, which analyzed 80 curated coding tasks across more than 100 distinct large language models, AI produces functional code with a remarkably high success rate, but it introduces critical security vulnerabilities in 45% of cases. When these models are presented with a choice between utilizing a secure programming method versus an insecure method to solve a given logic problem, they default to the insecure option nearly half the time.

The risk profile is not uniform; it varies significantly depending on the programming language being utilized. Java, deeply entrenched in the backend systems of financial institutions, core banking infrastructure, and legacy enterprise applications, exhibits the highest risk profile, with a staggering security failure rate exceeding 70% in AI-generated outputs. Other widely adopted languages, including Python, C#, and JavaScript, demonstrate security failure rates ranging between 38% and 45%.

Table 1: AI-Generated Code Security Failure Rates by Programming Language

| Programming Language | Security Failure Rate | Primary Enterprise Use Cases | Corresponding Risk Severity Profile |
|---|---|---|---|
| Java | > 70% | Core banking, legacy enterprise applications, high-volume backend microservices | Critical: High potential for deep system compromise and regulated data exposure. |
| JavaScript / TypeScript | 38% - 45% | Frontend web interfaces, Single Page Applications (SPAs), Node.js serverless functions | High: Severe risk of client-side exploitation, Cross-Site Scripting (XSS), and DOM manipulation. |
| C# | 38% - 45% | Windows enterprise environments, Azure applications, .NET internal tooling | High: Risks related to Active Directory integration, insecure deserialization, and enterprise network traversal. |
| Python | 38% - 45% | Data science pipelines, MLOps, backend automation, API aggregation layers | High: Vulnerabilities surrounding data poisoning, insecure library imports, and remote code execution. |

Data sourced from extensive LLM performance testing and the 2025 GenAI Code Security Report.

The Mechanical Origins of Vulnerability

The high failure rate of AI models in producing secure code is not an arbitrary glitch; it is driven by three fundamental mechanical limitations inherent to current LLM architectures:

- Training Data Contamination: Large language models are trained on vast, unfiltered datasets drawn from public code repositories. These repositories contain decades of legacy code, deprecated libraries, and demonstrably insecure programming patterns. The models ingest these historical vulnerabilities and subsequently amplify them across thousands of new, enterprise implementations. Consequently, an AI coding assistant is statistically likely to reproduce a widely used, yet insecure, architectural pattern simply because of its overwhelming prevalence in the model's training data.

- Contextual Blindness: Generative models operate within a strictly limited context window. A model tasked with generating database connection logic cannot inherently "see" or comprehend an organization's centralized secrets management system, its complex network boundary configurations, or its broader architectural security policies. Without this critical architectural context, the AI might suggest hardcoding credentials directly into the application layer or bypassing established authentication gateways to achieve immediate functionality.

- Semantic Limitations: While models excel at recognizing syntax and mimicking common structural patterns, they struggle profoundly with novel security architectures, distributed system error handling, and sophisticated input validation for uncommon data types. They lack the abstract reasoning required to threat-model the code they produce.

These limitations consistently manifest in highly specific vulnerability clusters. Analysis indicates that LLMs fail to secure code against Cross-Site Scripting (CWE-79) in 86% of cases and fail to prevent Log Injection (CWE-117) in 88% of cases. SQL Injection (CWE-89) and the use of broken or risky cryptographic algorithms (CWE-327) also remain pervasive in AI outputs, because models tend to concatenate strings rather than use parameterized queries and continue to rely on deprecated algorithms such as MD5 or SHA-1. For numerical and reporting workloads, enterprises increasingly turn to production-ready SQL Agents instead of generic vector search so that natural-language questions still resolve into exact, auditable queries.
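To make the SQL injection failure mode concrete, the minimal sketch below contrasts the string-concatenation pattern with a parameterized query. It uses Python's built-in `sqlite3` module purely as a portable stand-in for Postgres or SQL Server (an assumption for illustration); the table, data, and function names are hypothetical, but the binding principle is the same across database drivers.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two users (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_concatenated(conn: sqlite3.Connection, name: str):
    # Insecure pattern (CWE-89): user input is spliced into the SQL text,
    # so a value such as "x' OR '1'='1" rewrites the query's logic.
    sql = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(sql).fetchall()


def find_user_parameterized(conn: sqlite3.Connection, name: str):
    # Secure pattern: the driver binds `name` strictly as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "x' OR '1'='1"
    print(find_user_concatenated(conn, payload))   # returns every row
    print(find_user_parameterized(conn, payload))  # returns []
```

With the payload `x' OR '1'='1`, the concatenated version returns every row in the table, while the parameterized version treats the whole payload as a literal name and matches nothing.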

The Regulatory Maelstrom: Industry-Specific Compliance Mandates

For regulated enterprises, the deployment of vulnerable code extends far beyond mere operational risk; it invites severe legal liability, devastating financial penalties, and catastrophic reputational damage. Global regulatory bodies and compliance frameworks have recognized the rapid integration of generative AI and are aggressively updating their enforcement postures. An enterprise's approval framework must directly address the distinct regulatory environments governing its specific industry vertical.

Finance, Real Estate, and Algorithmic Trading

In the financial sector, the Financial Industry Regulatory Authority (FINRA) and the Securities and Exchange Commission (SEC) have adopted an uncompromising stance on algorithmic accountability. FINRA's 2026 Annual Regulatory Oversight Report explicitly targets generative AI, signaling that AI is no longer viewed merely as an experimental innovation, but as a core, load-bearing component of a firm's operational and supervisory infrastructure.

FINRA maintains a technology-neutral posture, dictating that existing regulations—such as FINRA Rule 3110 regarding supervision—apply unequivocally to AI-driven processes. The regulatory expectation is clear: if an AI agent generates code that executes trades, summarizes financial data, or interacts with client portfolios, the firm must be capable of explaining the model's underlying logic, exhaustively documenting its interactions, and demonstrating continuous human supervisory control. The defense that "the AI made a coding error" is entirely invalid during a regulatory audit. Firms engaging in algorithmic trading or automated mortgage underwriting are required to subject all software and code development to rigorous, cross-disciplinary testing prior to production implementation, ensuring no AI-hallucinated logic creates systemic market risk.

Healthcare, Telemedicine, and Data Sovereignty

For organizations operating in healthcare, the processing of Protected Health Information (PHI) is governed by the Health Insurance Portability and Accountability Act (HIPAA) in the United States, alongside the FDA's strict guidelines for Artificial Intelligence/Machine Learning-based Software as a Medical Device (SaMD). AI code analysis introduces complex data sovereignty and privacy risks in these environments.

If a developer pastes proprietary code containing embedded credentials, API keys, or sample patient data into an unsecured, public AI chatbot for debugging purposes, the result is a serious security incident and, where PHI is exposed, potentially a reportable HIPAA breach. Standard commercial LLM services are frequently not HIPAA compliant, because their providers do not execute Business Associate Agreements (BAAs) with covered entities. Healthcare software engineering teams must strictly enforce environments where AI models are completely isolated from production PHI, and where the code generated by the AI undergoes stringent validation to ensure it does not bypass Electronic Health Record (EHR) access controls.

Advertising, Telecommunications, and High-Tech Startups

Enterprises in advertising, telecommunications, and the broader high-tech landscape operate under the heavy scrutiny of comprehensive privacy frameworks, notably the European Union's General Data Protection Regulation (GDPR) and the newly enforceable EU AI Act (Regulation (EU) 2024/1689).

The EU AI Act represents the world's first comprehensive legal framework governing artificial intelligence, enforcing a strict, risk-based approach. For enterprises processing the data of European citizens, the Act mandates stringent requirements for high-risk AI deployments, including automatic logging of events to ensure the traceability of results, detailed documentation providing transparency to authorities, and appropriate human oversight measures. Non-compliance carries severe financial implications: for the most serious violations, fines can reach EUR 35 million or 7% of an organization's total worldwide annual turnover, whichever is higher. For fast-growing SaaS startups, failure to maintain SOC 2 or ISO 27001 compliance due to AI-generated security flaws can abruptly end enterprise sales cycles and erode investor confidence. That is why high-growth teams increasingly treat secure automation as a growth enabler, investing in internal AI compliance bots that continuously scan code and content for violations before they land in production.
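The "whichever is higher" rule makes the exposure scale with company size. The sketch below is a simplified reading of the fine caps in Article 99 of Regulation (EU) 2024/1689: the top tier for prohibited practices (EUR 35M / 7%), the tier for other operator obligations including high-risk duties (EUR 15M / 3%), and the rule that SMEs and start-ups are capped at whichever amount is lower. The tier labels and function name are hypothetical, and actual fines are set case by case below these maxima.

```python
# Maximum fine caps per tier: (fixed amount in EUR, % of worldwide annual turnover).
# Tier labels are hypothetical shorthand for the categories in Article 99.
TIERS = {
    "prohibited_practice": (35_000_000, 7),   # most serious violations
    "operator_obligation": (15_000_000, 3),   # e.g. high-risk system obligations
}


def max_ai_act_fine_eur(turnover_eur: int, tier: str, is_sme: bool = False) -> int:
    """Upper bound of the fine for one tier, using integer euro arithmetic."""
    fixed, pct = TIERS[tier]
    share = turnover_eur * pct // 100
    # Larger undertakings face whichever cap is higher; SMEs and start-ups
    # face whichever is lower.
    return min(fixed, share) if is_sme else max(fixed, share)


if __name__ == "__main__":
    # A firm with EUR 2 billion turnover: 7% (EUR 140M) exceeds the EUR 35M floor.
    print(max_ai_act_fine_eur(2_000_000_000, "prohibited_practice"))
```

For a firm with EUR 200 million in turnover, 7% is only EUR 14 million, so the fixed EUR 35 million cap is the binding maximum; above EUR 500 million in turnover, the percentage dominates.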

Table 2: Global Compliance Matrix for AI-Generated Code

| Industry / Sector | Primary Regulatory Body & Frameworks | Core AI Code Compliance Requirements | Potential Penalties for Non-Compliance |
|---|---|---|---|
| Financial Services / Real Estate | FINRA, SEC, SOX, PCI-DSS | Strict adherence to FINRA Rule 3110; mandatory human supervision of AI outputs; comprehensive audit trails for algorithmic trading code. | License revocation, massive SEC fines, institutional trading bans, reputational destruction. |
| Healthcare / Biotech | HIPAA, FDA (SaMD), ISO 42001 | BAAs required for all AI tool vendors; strict separation of AI models from PHI; rigorous validation of logic impacting patient outcomes. | Severe HIPAA violation fines, FDA product recalls, class-action patient lawsuits. |
| Global High-Tech / Telecom / AdTech | EU AI Act, GDPR, CCPA/CPRA | Mandatory activity logging for traceability; adherence to data minimization principles; prevention of copyrighted code infringement. | Fines up to EUR 35M or 7% of global annual turnover; mandatory cessation of data processing. |
| Education (LMS) / Gaming | FERPA, COPPA, IP Law | Protection of minor data; securing proprietary game engine logic against leakage into public LLM training datasets. | Intellectual property loss, severe regulatory fines, loss of institutional contracts. |

Compliance data synthesized from global regulatory guidelines and AI legal frameworks.

The Strategic Imperative for C-Suite Personas

The implementation of a formal AI code approval process is not merely a technical exercise for the engineering department; it is a strategic imperative that directly impacts the core key performance indicators (KPIs) of the enterprise executive team.

- The Visionary CTO: The Chief Technology Officer is tasked with maximizing engineering velocity without compromising system integrity. They view AI as a mechanism to eliminate boilerplate coding and accelerate feature delivery. However, the CTO understands that an unmanaged AI deployment will lead to an insurmountable accumulation of technical and security debt. A structured approval framework provides the CTO with the assurance that the organization is achieving "Tailored Tech Advantage"—crafting cutting-edge solutions rapidly, but safely. Many CTOs now pair this with a portfolio of autonomous AI agents that handle repetitive workflows under tight guardrails rather than leaving every decision to a single chat-style assistant.

- The Strategic CFO: The Chief Financial Officer evaluates technology through the lens of risk versus return. While AI promises significant reductions in development costs, the CFO is acutely aware that a single regulatory fine (such as an EU AI Act penalty of up to 7% of global annual turnover, or a GDPR penalty of up to 4%) or a major data breach can erase those cost savings. The CFO requires an approval framework that demonstrates audit readiness and quantifies the return on investment for compliance tooling. That lens should also extend to AI infrastructure choices so the organization does not quietly bleed money on LLM usage; a disciplined cost strategy like the one outlined in The Token Tax: Stop Paying More Than You Should for LLMs belongs alongside security KPIs in any board report.

- The Driven Head of Sales: Enterprise sales cycles frequently hinge on the successful completion of exhaustive vendor security questionnaires. If a B2B firm cannot demonstrably prove to a prospective client that its AI-assisted development processes are secure, audited, and compliant with SOC 2 or ISO 27001, the deal will stall. The Head of Sales relies on a transparent code approval framework as a distinct competitive differentiator in the marketplace.

- The Innovative Marketing Director: The Marketing Director must protect and elevate the brand's reputation. In an era hyper-sensitive to "AI washing" and data privacy scandals, marketing leadership requires absolute confidence that the firm's core product is built on a foundation of trustworthy, compliant, and ethically governed software.

Establishing the Governance Foundation: NIST and OWASP

To navigate the complex web of vulnerabilities and industry-specific regulations, enterprises must anchor their approval frameworks to universally recognized, authoritative industry standards. Attempting to invent a proprietary governance model from scratch is both inefficient and legally perilous. Two critical frameworks form the bedrock of secure AI adoption: the NIST AI Risk Management Framework (AI RMF) and the OWASP AI Security and Privacy Guide.

Operationalizing the NIST AI RMF

The National Institute of Standards and Technology (NIST) released the AI Risk Management Framework 1.0 to assist organizations in systematically managing risks across the entire artificial intelligence lifecycle. The framework is structurally divided into four core functions that must be integrated directly into a regulated enterprise's operations:

- Govern: Establishing the cultural and structural accountability for AI risk. This involves creating cross-functional AI oversight committees (comprising legal, compliance, and senior engineering stakeholders) and drafting strict, unambiguous Acceptable Use Policies (AUPs) that define exactly which AI coding assistants are legally approved for enterprise use.

- Map: Identifying, categorizing, and inventorying the AI systems currently in use. Enterprises must aggressively hunt for "Shadow AI"—unapproved consumer tools covertly utilized by developers—and meticulously map the data flow of approved tools to ensure proprietary source code is not being inadvertently utilized to train third-party models.

- Measure: Implementing quantitative, objective metrics to evaluate AI output. This includes establishing strict baselines for vulnerability detection rates in AI-generated code versus human-written code, tracking the "security debt" introduced by AI tools over time, and monitoring the frequency of post-merge hotfixes required for AI-assisted logic.

- Manage: Deploying rigorous technical and procedural safeguards. This includes configuring strict Continuous Integration/Continuous Deployment (CI/CD) pipelines, enforcing dual-track code reviews, and configuring granular role-based access controls (RBAC) to limit the blast radius of any single failure.
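The Measure function calls for baselines that compare AI-generated code with human-written code. One simple, hedged way to express such a baseline is security findings per thousand added lines (KLOC) for each cohort. In the sketch below, the `CommitStats` record, its fields, and the idea of attributing scanner findings to individual commits are all hypothetical assumptions; in practice the AI flag would come from commit tagging and the findings from SAST/SCA results.

```python
from dataclasses import dataclass


@dataclass
class CommitStats:
    ai_assisted: bool   # hypothetical: derived from mandatory commit tags
    lines_added: int    # lines added by the commit
    findings: int       # hypothetical: SAST/SCA findings attributed to the commit


def findings_per_kloc(commits: list[CommitStats], ai_assisted: bool) -> float:
    """Security findings per 1,000 added lines for one cohort (AI or human)."""
    cohort = [c for c in commits if c.ai_assisted == ai_assisted]
    lines = sum(c.lines_added for c in cohort)
    if lines == 0:
        return 0.0  # no code in this cohort, so no measurable density
    return 1000 * sum(c.findings for c in cohort) / lines


if __name__ == "__main__":
    history = [
        CommitStats(True, 500, 3),
        CommitStats(True, 500, 1),
        CommitStats(False, 2000, 2),
    ]
    print("AI-assisted:", findings_per_kloc(history, True))
    print("Human-written:", findings_per_kloc(history, False))
```

Tracking this density over time, rather than raw finding counts, keeps the comparison fair when one cohort simply produces more code than the other.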

Integrating the OWASP AI Security Pillars

While NIST provides the overarching enterprise risk management structure, the OWASP AI Security and Privacy Guide provides the tactical, granular engineering controls required at the keyboard level. A secure code approval process must enforce OWASP's foundational pillars:

- Data Security and Privacy Protection: Ensuring through cryptographic controls and network boundaries that sensitive enterprise data, trade secrets, and API credentials are never leaked into the prompts transmitted to external AI coding assistants.

- Model Integrity and Authenticity: Guaranteeing that the AI plugins and extensions operating within the developer's Integrated Development Environment (IDE) are cryptographically signed, rigorously version-controlled, and safeguarded against supply chain poisoning attacks.

- Governance and Accountability: Establishing definitive, legally binding chains of custody for every single line of code committed to the central repository, ensuring human oversight is an immutable prerequisite prior to merging into production.
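The Data Security and Privacy pillar implies a control point that inspects prompts before they leave the enterprise boundary. The sketch below shows one way such a gateway step could redact obvious secrets. The regular expressions are illustrative assumptions only (an AWS-style access key ID prefix, PEM private key blocks, quoted credential assignments, and US Social Security number formats); the function name is hypothetical, and a production gateway would rely on a maintained secret and PII detection engine.

```python
import re

# Illustrative patterns only; real deployments need a maintained detector.
REDACTION_PATTERNS = [
    ("AWS_ACCESS_KEY", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),
    ("PRIVATE_KEY", re.compile(
        r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----",
        re.S,
    )),
    ("CREDENTIAL", re.compile(
        r"(?i)\b(password|passwd|secret|api[_-]?key|token)\s*[:=]\s*['\"][^'\"]+['\"]"
    )),
    ("US_SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
]


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders; report which rules fired."""
    hits = []
    for label, pattern in REDACTION_PATTERNS:
        prompt, count = pattern.subn(f"[REDACTED:{label}]", prompt)
        if count:
            hits.append(label)
    return prompt, hits


if __name__ == "__main__":
    text = 'password = "hunter2"\nkey_id = AKIAABCDEFGHIJKLMNOP'
    cleaned, fired = redact_prompt(text)
    print(cleaned)
    print(fired)
```

Returning the list of fired rules alongside the cleaned prompt lets the gateway log the event for audit purposes without ever logging the secret itself.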

Table 3: Mapping Foundational Frameworks to Enterprise SDLC

| Development Phase | NIST AI RMF Function | OWASP Implementation Pillar | Actionable SDLC Control |
|---|---|---|---|
| Planning & Policy | Govern | Governance and Accountability | Establish AI Acceptable Use Policy; define approved IDE extensions; assign AI risk owners. |
| Development & Coding | Map | Data Security and Privacy | Isolate development environments; implement IDE pre-commit hooks to block sensitive data leakage in prompts. |
| Code Review & Testing | Measure | Adversarial Resilience | Execute automated SAST/DAST against AI code; track vulnerability rates; perform bias and logic testing. |
| Deployment & Audit | Manage | Model Integrity and Authenticity | Enforce immutable CI/CD branch policies; generate automated audit evidence packages; continuous runtime monitoring. |

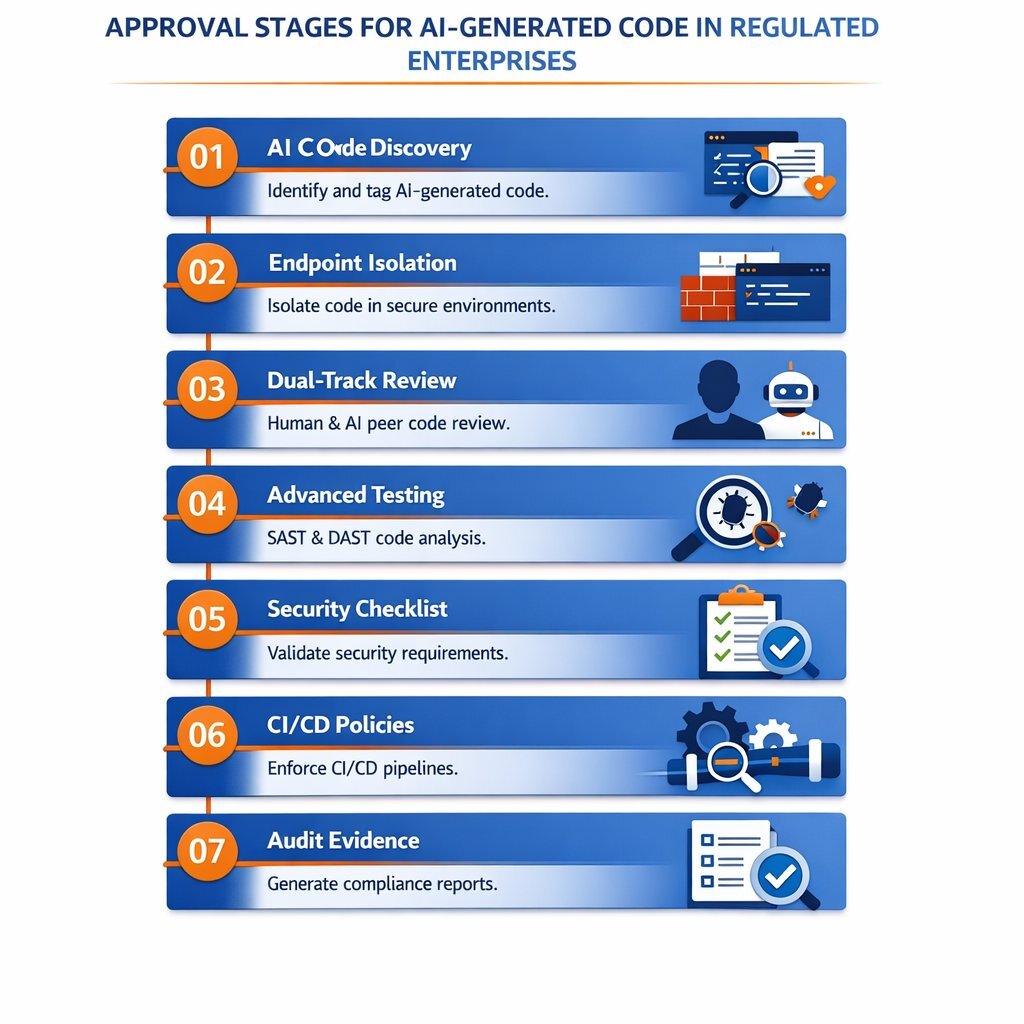

The 7-Stage Practical Approval Framework

Translating theoretical regulations and broad risk management frameworks into daily, friction-free engineering operations requires a highly structured, automated, and enforceable pipeline. Organizations that successfully leverage architectures designed for enterprise-grade quality—such as those adopting the Tailored Tech Advantage methodologies championed by specialized technology partners like Baytech Consulting—engineer their pipelines to support Rapid Agile Deployment without sacrificing a single degree of security. Utilizing robust, enterprise-controlled infrastructure, such as Azure DevOps On-Prem, Visual Studio Code, and deeply structured database environments (Postgres, SQL Server), provides the ideal ecosystem to enforce these strict governance controls. For many teams, this also means modernizing brownfield systems with safer patterns—like sidecar services and non-invasive AI overlays—rather than risky rewrites, as discussed in the AI Sidecar pattern for legacy applications.

The following seven-stage framework provides an exhaustive, practical mechanism for reviewing, auditing, and approving AI-generated code in the most highly regulated enterprise environments.

Stage 1: AI Code Discovery, Inventory, and Traceability

The absolute prerequisite for any approval process is total visibility. An enterprise cannot effectively govern what it cannot see. The first stage demands the implementation of mandatory traceability mechanisms that explicitly differentiate AI-generated logic from human-authored logic.

- Comprehensive AI Inventory: Organizations must conduct a forensic audit to identify all AI usage across the engineering department. This includes cataloging approved enterprise tools, uncovering "Shadow AI" instances, and documenting custom models in development.

- Interaction Logging and Telemetry: Development teams must exclusively utilize enterprise-tier AI assistants that are contractually bound not to train on customer data, and which inherently log prompts, the AI's generated outputs, and the developer's subsequent manual modifications.

- Mandatory Commit Tagging: Version control systems must be systematically configured to require specific metadata tags on commits that contain AI-assisted logic. By parsing commit messages for mandatory keywords (e.g., `[AI-Generated]`, `[Copilot-Assisted]`), security teams can isolate, query, and precisely quantify the concentration of AI code within specific, high-risk repositories.
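Quantifying AI concentration from commit tags can be as simple as reading the log and counting tagged messages. The sketch below assumes the two example tags above are the organization's convention and that `git` is available on the path; the function names are hypothetical. It uses `git log --format=%B%x00`, which prints each commit's raw message followed by a NUL byte so multi-line messages split cleanly.

```python
import re
import subprocess

# Assumed tag convention, taken from the examples above.
AI_TAG = re.compile(r"\[(AI-Generated|Copilot-Assisted)\]")


def ai_commit_share(messages: list[str]) -> float:
    """Fraction of commit messages that carry an AI-assistance tag."""
    if not messages:
        return 0.0
    tagged = sum(1 for message in messages if AI_TAG.search(message))
    return tagged / len(messages)


def commit_messages(repo_path: str = ".") -> list[str]:
    # %B is the raw message body; %x00 emits a NUL byte as a record separator.
    out = subprocess.run(
        ["git", "-C", repo_path, "log", "--format=%B%x00"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [m.strip() for m in out.split("\x00") if m.strip()]


if __name__ == "__main__":
    share = ai_commit_share(commit_messages())
    print(f"AI-tagged commits: {share:.1%}")
```

Running this per repository gives the concentration figure that security teams can use to prioritize the highest-risk codebases for deeper review.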

Stage 2: Endpoint Isolation and IDE Controls

Vulnerabilities must be intercepted and neutralized as close to their point of origin as possible—a philosophy commonly referred to as "shifting left." In the context of AI-generated code, the developer's Integrated Development Environment (such as VS Code or Visual Studio 2022) serves as the primary battleground.

- Sanctioned Tooling Enforcement: Network administrators must utilize advanced firewall configurations (e.g., pfSense) to aggressively block unauthorized, consumer-grade LLMs on corporate networks, preventing developers from pasting proprietary code into unsecured web interfaces. Only sanctioned, enterprise-licensed AI tools—configured to operate within Virtual Private Clouds (VPCs) or air-gapped installations—should be accessible via approved IDE extensions. Many enterprises pair this perimeter with a dedicated AI firewall middleware layer that sits between applications and LLMs to stop prompt injection and data exfiltration before it happens.

- Local Pre-Commit Hooks: Engineering teams must configure local pre-commit hooks that run lightweight, rapid static analysis on the developer's machine at commit time, before the code is ever pushed. If the AI assistant generates code containing hardcoded secrets, database credentials, or deprecated cryptographic algorithms, the pre-commit hook must immediately reject the commit locally. This forces the developer to remediate the vulnerability before the code ever reaches the centralized, audited repository.
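A pre-commit hook of this kind can be a short script. The sketch below is a hypothetical hook, assuming Python 3 on the developer machine and installation as an executable `.git/hooks/pre-commit` file. It inspects only the lines added in the staged diff (`git diff --cached --unified=0`) and exits non-zero, which makes Git abort the commit, when an illustrative rule fires. The rule set is an assumption and is not a substitute for a dedicated secret scanner.

```python
#!/usr/bin/env python3
"""Hypothetical pre-commit hook: block staged lines that add hardcoded
credentials or deprecated cryptography. Rules are illustrative only."""
import re
import subprocess
import sys

RULES = [
    ("hardcoded credential",
     re.compile(r"(?i)\b(password|secret|api_?key)\s*[:=]\s*['\"][^'\"]{4,}['\"]")),
    ("deprecated hash (MD5/SHA-1)", re.compile(r"(?i)\b(md5|sha1)\s*\(")),
    ("deprecated cipher (DES/RC4)", re.compile(r"\b(DES|RC4|ARC4)\b")),
]


def scan_added_lines(diff_text: str) -> list[str]:
    """Return one finding per (rule, added line) match in a unified diff."""
    findings = []
    for line in diff_text.splitlines():
        # Inspect only lines the commit adds; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for label, pattern in RULES:
            if pattern.search(line):
                findings.append(f"{label}: {line[1:].strip()}")
    return findings


def main() -> int:
    diff = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=True,
    ).stdout
    findings = scan_added_lines(diff)
    for finding in findings:
        print(f"pre-commit: blocked {finding}", file=sys.stderr)
    # A non-zero exit status makes Git abort the commit.
    return 1 if findings else 0


if __name__ == "__main__":
    sys.exit(main())
```

Scanning only added lines keeps the hook fast and avoids blocking a commit that actually removes a legacy MD5 call.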

Stage 3: The Dual-Track Review Process (Human + AI)

Historically, code review relied entirely on manual, line-by-line human inspection. Today, attempting to manually review the sheer, overwhelming volume of code generated by high-velocity AI assistants creates an unsustainable engineering bottleneck. Conversely, relying solely on automated AI reviewers invites catastrophic context failures and security blind spots. The optimal solution is a hybrid, dual-track review process.

The workflow must operate sequentially to maximize efficiency and security:

- The Automated Baseline Triage: Upon the submission of a Pull Request (PR), automated AI review agents and traditional static analysis tools immediately scan the code diff. These systems handle the repetitive, high-volume checks: identifying syntax errors, enforcing style guide adherence, checking dependency hygiene, and flagging known CVE patterns. This automated triage strips away the "noise," allowing the PR to be processed rapidly.

- The Human Contextual Escalation: Once the code passes the automated baseline, it is routed to a senior human reviewer. Because the human engineer is no longer wasting cognitive energy hunting for missing semicolons, obvious SQL injections, or formatting errors, they can focus entirely on high-level, complex evaluations: Does this AI-generated logic align precisely with the specific business requirements? Does it expose the system to logical state bypasses? Are there compliance implications regarding how this PII is stored and transmitted?

Table 4: The Dual-Track Code Review Strategy

| Review Dimension | Automated / AI-Assisted Review | Manual / Human Peer Review |
|---|---|---|
| Primary Engineering Strength | Speed, massive scalability, perfect consistency, pattern-based vulnerability detection. | Deep context awareness, business logic validation, architectural oversight, complex threat modeling. |
| Execution Latency | Real-time (Seconds to Minutes) | High latency (Hours to Days, depending on PR size) |
| Ideal Target Vulnerabilities | SQL injection signatures, XSS checks, dependency hygiene, linting, secret scanning. | Complex state management flaws, race conditions, PII handling nuances, third-party integrations. |
| Inherent Limitations | Total context blindness; high false-positive rates; completely unable to understand business intent. | Prone to reviewer fatigue; inconsistent application of rules; difficult to scale with growing repository sizes. |

Data synthesized from software engineering performance metrics and review tool comparisons.

Stage 4: Advanced Static, Dynamic, and Composition Analysis

Because generative AI models are highly prone to "hallucinations" and suffer from severe architectural blindness, standard linting and basic testing are wholly insufficient. Regulated enterprises must employ a comprehensive suite of security testing methodologies specifically tuned to catch the four major AI flaw categories (CWE-89, CWE-79, CWE-327, CWE-117).

- Static Application Security Testing (SAST): SAST tools analyze the source code at rest, looking for structural flaws before the application is compiled or executed. Enterprises must create dedicated SAST profiles optimized specifically for AI-generated code. For example, the SAST tool must aggressively flag any Postgres or SQL Server database query that utilizes string concatenation instead of prepared statements, or any network call lacking proper Transport Layer Security (TLS) configuration.

- Dynamic Application Security Testing (DAST): Because AI models cannot comprehend the runtime environment or how disparate services interact, DAST is critical. DAST tools interact with the running application in an ephemeral environment, simulating external attacks to identify runtime vulnerabilities—such as broken authentication or application state manipulation—that static analysis cannot possibly observe.

- Software Composition Analysis (SCA): AI models frequently hallucinate non-existent libraries or confidently suggest the inclusion of highly vulnerable, outdated open-source dependencies. SCA tools must automatically scan the project's dependency manifest to ensure no malicious, outdated, or inappropriately licensed packages (e.g., restrictive GPL licenses) are merged into the enterprise codebase.

Stage 5: OWASP-Aligned Security Checklists and Domain Scrutiny

Prior to finalizing a review, human reviewers must validate the AI-generated code against an explicit, OWASP-aligned secure code checklist. Relying on intuition is entirely unacceptable in audited environments. The checklist must mandate the verification of over 120 individual security controls, prioritizing the most frequently failed categories:

- Authentication and Authorization Validation: The reviewer must forensically verify that every database query generated by the AI includes strict record ownership verification (e.g., ensuring that a Postgres query takes the form `SELECT * FROM users WHERE id = ? AND owner_id = ?`) to prevent horizontal privilege escalation (CWE-639). Reviewers must actively hunt for commented-out security logic or unauthorized bypass annotations (e.g., ASP.NET Core's `[AllowAnonymous]` attribute) hallucinated by the model. When those queries power customer dashboards or internal analytics, many enterprises now combine this checklist with deterministic Text-to-SQL layers, like the data-readiness and SQL-governance practices used for production AI reporting.

- Session Management and Cryptographic Integrity: AI-generated authentication flows must be verified for cryptographic strength. Reviewers must confirm the implementation of secure random generators for session tokens, forced token regeneration post-login (mitigating session fixation, CWE-384), and the strict application of `Secure`, `HttpOnly`, and `SameSite=Strict` cookie flags. Furthermore, reviewers must ensure that no deprecated algorithms (DES, MD5, RC4, SHA-1) are present in the final code, enforcing modern standards (AES-256, RSA ≥ 2048 bits) consistent with NIST SP 800-57 guidelines.

- Infrastructure and Container Security: When utilizing infrastructure-as-code or reviewing Kubernetes manifests and Dockerfiles destined for deployment on environments like Harvester HCI, Rancher, or OVHCloud servers, reviewers must verify critical constraints. AI frequently generates overly permissive container configurations. Reviewers must ensure `runAsNonRoot: true` is enforced, Linux capabilities are dropped (`capabilities.drop: [ALL]`), and the root filesystem is set to read-only.

- Input Validation and Injection Prevention: Reviewers must manually trace the flow of user input through the AI-generated logic. Output encoding must be context-specific (HTML, JS, CSS) to mitigate the massive XSS risks prevalent in AI code, and the use of shell interpreters or dynamic execution commands (e.g., `system()`, `eval()`) must be strictly prohibited or subjected to extreme scrutiny to prevent OS command injection (CWE-78) and eval injection (CWE-95).
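The container constraints in this checklist are mechanical enough to pre-check before a human looks at the manifest. The sketch below assumes the manifest has already been parsed from YAML into a Python dict (for example with PyYAML) and that it receives a Pod spec (for a Deployment, that is `spec.template.spec`); the function name is hypothetical. It honors Kubernetes' rule that a container-level `securityContext` setting overrides the Pod-level one for `runAsNonRoot`, while `capabilities` and `readOnlyRootFilesystem` exist only at container level.

```python
def container_security_violations(pod_spec: dict) -> list[str]:
    """Check every container in a Pod spec against the hardening checklist."""
    violations = []
    pod_ctx = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        # A container-level runAsNonRoot overrides the Pod-level default.
        if not ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False)):
            violations.append(f"{name}: runAsNonRoot is not true")
        if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
            violations.append(f"{name}: capabilities.drop does not include ALL")
        if not ctx.get("readOnlyRootFilesystem", False):
            violations.append(f"{name}: readOnlyRootFilesystem is not true")
    return violations


if __name__ == "__main__":
    # A typical AI-generated spec: runs as non-root at Pod level but
    # leaves capabilities and the root filesystem at their defaults.
    generated = {
        "securityContext": {"runAsNonRoot": True},
        "containers": [{"name": "web", "image": "example/web:1.0"}],
    }
    for violation in container_security_violations(generated):
        print(violation)
```

Treating a missing field as a violation, rather than trusting cluster defaults, matches the checklist's intent that hardening be explicit in the reviewed code.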

Stage 6: Enforcing Governance via CI/CD Branch Policies

The theoretical policies established by the Governance phase and the meticulous checklists of the Review phase are meaningless if they can be bypassed by an engineer rushing a deployment. The rules must be physically enforced by the infrastructure itself. In robust environments managed via platforms like Azure DevOps On-Prem, security controls can be hardcoded into the repository's branch policies, creating an immutable, technological barrier between vulnerable AI code and production deployment. This same philosophy applies when integrating AI copilots into SaaS products or internal tools: following a structured playbook like the one in From Chat Widgets to Copilots helps teams treat every AI touchpoint as part of a governed system, not a side experiment.

To guarantee compliance, the main or release branches must be protected by mandatory rules that cannot be disabled or circumvented by individual developers:

Table 5: Recommended Azure DevOps Branch Policies for AI Governance

| Azure DevOps Policy Setting | Required Configuration | Compliance and Security Rationale |
|---|---|---|
| Require a minimum number of reviewers | Enabled (minimum: 2) | Enforces the critical "four-eyes principle." An automated AI agent plus one human is insufficient for high-risk modules; a second human validation is typically expected by regulators and auditors. |
| Check for linked work items | Enabled (required) | Ensures traceability. Every AI-generated PR must be tied to a documented business requirement, proving the code was authorized, intentional, and scoped. |
| Build validation | Enabled (blocking) | The pull request cannot be completed unless the code compiles and passes all automated unit tests in an ephemeral pipeline. |
| Status checks (SAST/DAST/SCA) | Enabled (blocking) | Third-party security scanners must execute and post a "succeeded" status to the PR. Any Critical or High finding, including a known CVE in a dependency, automatically blocks the merge. |
| Automatically included reviewers | Enabled (Security/Architecture teams, with path filters) | If the AI code modifies sensitive paths (e.g., /auth, /crypto, azure-pipelines.yml, or database schema definitions), the policy automatically requires sign-off from specialized security personnel. |

Configurations modeled on Azure DevOps Git repository settings and recommended governance practices.
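The policies in Table 5 combine into a single merge decision. The following minimal sketch models that decision locally so the logic is easy to inspect or unit-test. It is not the Azure DevOps API: the PullRequest shape, the check names, the team name "security", and the path patterns (including db/schema/) are hypothetical stand-ins for whatever a real repository uses.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical values mirroring Table 5; real branch policies are configured
# in Azure DevOps itself, not in code like this.
SENSITIVE_PATTERNS = ["auth/*", "crypto/*", "azure-pipelines.yml", "db/schema/*"]
REQUIRED_CHECKS = ["sast", "dast", "sca"]
SECURITY_TEAM = "security"


@dataclass
class PullRequest:
    approvers: dict[str, str]       # human reviewer -> team
    work_items: list[int]           # linked work item IDs
    build_passed: bool              # result of build validation
    status_checks: dict[str, str]   # check name -> state, e.g. "succeeded"
    changed_paths: list[str]        # repository-relative paths


def merge_blockers(pr: PullRequest, min_reviewers: int = 2) -> list[str]:
    """Return every reason the PR may not merge; an empty list means it may."""
    blockers = []
    if len(pr.approvers) < min_reviewers:
        blockers.append(f"needs {min_reviewers} human approvals")
    if not pr.work_items:
        blockers.append("no linked work item")
    if not pr.build_passed:
        blockers.append("build validation failed")
    for check in REQUIRED_CHECKS:
        if pr.status_checks.get(check) != "succeeded":
            blockers.append(f"status check '{check}' has not succeeded")
    # fnmatch's "*" also matches "/", so "auth/*" covers nested files too.
    touches_sensitive = any(
        fnmatch(path, pattern) for path in pr.changed_paths for pattern in SENSITIVE_PATTERNS
    )
    if touches_sensitive and SECURITY_TEAM not in pr.approvers.values():
        blockers.append("sensitive path requires security team sign-off")
    return blockers
```

The function returns every blocker rather than stopping at the first one. This mirrors how a pull request page lists all unmet policies at once, and it gives the audit trail a complete record of why a merge was refused.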

Stage 7: Automated Evidence Collection and Audit Readiness

The final stage of the approval framework bridges the critical gap between technical engineering operations and legal compliance. Regulated environments are subject to rigorous, exhausting internal and external audits to maintain SOC 2 attestations, ISO 27001 certification, and HIPAA or PCI-DSS compliance. Historically, compliance teams spent weeks manually capturing screenshots of configurations and sampling code reviews to prove adherence to policies.

In the high-velocity era of AI-assisted coding, manual sampling is obsolete and dangerous. The speed of AI development requires continuous, automated compliance monitoring.

- Full Population Testing: Instead of auditing a mere 5% to 10% sample of pull requests, AI-powered compliance tools can continuously monitor 100% of repository transactions. This ensures that every single instance of AI-generated code was subjected to the required branch policies, SAST scans, and human reviews.

- Automated Evidence Packaging: Modern compliance workflows utilize browser automation and AI agents to systematically navigate through DevOps consoles, executing tests, capturing timestamped visual evidence, and organizing the data into secure, immutable storage buckets. These systems can compile comprehensive HTML reports linking the AI-generated code, the SAST scan results, and the human reviewer's approval signature into a single, tamper-evident audit artifact, dramatically reducing the cost and disruption of regulatory audits.

- Model Documentation and Data Cards: For enterprises deploying custom, internally fine-tuned models, governance requires maintaining extensive model documentation. This includes clearly documenting the model's intended purpose, the exact dataset used for fine-tuning, known limitations, and rigorous bias and fairness assessments.
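Full population testing and tamper-evident evidence can be illustrated together in a short sketch. It assumes each merged pull request has already been exported as a record with hypothetical fields (pr, ai_generated, sast_result, approver, work_item). The sketch flags every AI-tagged record that lacks evidence, then links all records into a SHA-256 hash chain so that later edits become detectable. A hash chain alone cannot reveal entries truncated from the end, so the latest hash would also need to be stored somewhere independent, such as the immutable bucket described above.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
REQUIRED_EVIDENCE = ("sast_result", "approver", "work_item")  # hypothetical fields


def coverage_gaps(records: list[dict]) -> list[int]:
    """Full-population check: PR numbers of AI-tagged PRs missing any evidence."""
    return [
        r["pr"]
        for r in records
        if r.get("ai_generated") and any(not r.get(k) for k in REQUIRED_EVIDENCE)
    ]


def _digest(prev: str, record: dict) -> str:
    # sort_keys gives a stable serialization, so equal records hash equally.
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()


def build_chain(records: list[dict]) -> list[dict]:
    """Link each evidence record to its predecessor's SHA-256 hash."""
    chain, prev = [], GENESIS
    for record in records:
        digest = _digest(prev, record)
        chain.append({"record": record, "prev": prev, "hash": digest})
        prev = digest
    return chain


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; an edited, reordered, or mid-chain removed entry fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or _digest(prev, entry["record"]) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Because the check iterates over every record rather than a sample, a single AI-generated pull request that skipped review appears in the output of coverage_gaps.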

Defining Accountability: Organizational Roles in the AI Lifecycle

A technological framework is only as effective as the personnel enforcing it. The organizational ambiguity regarding "who ultimately owns the AI's output" must be aggressively eliminated. The OWASP AI Security Guidance emphasizes the absolute necessity of establishing clear, documented organizational accountability.

- The Chief Information Security Officer (CISO): The CISO holds ultimate enterprise accountability. They must establish the enterprise-wide AI security policy, align it with NIST, FINRA, and EU AI Act requirements, and secure the necessary budget for advanced testing and monitoring infrastructure. Executive sponsorship from the CEO is also critical to drive the cultural shift required for compliance.

- AI Governance Officers and Compliance Teams: These individuals act as the crucial bridge between dense legal mandates and technical execution. They translate complex regulations (such as HIPAA rules or FINRA Rule 3110) into actionable pipeline logic, maintain the overarching AI Risk Matrix, and continuously monitor the enterprise's exposure to data privacy and intellectual property risks.

- MLOps and Engineering Infrastructure Teams: This team is responsible for the technical implementation of the governance framework. They configure the complex Azure DevOps branch policies, maintain the API integrations with SAST/DAST/SCA tools, and ensure that the enterprise AI models are securely deployed, operating strictly within Virtual Private Clouds (VPCs) or air-gapped environments to prevent sensitive data exfiltration.

- The Individual Developer: The developer acts as the vital first line of defense. They are legally, operationally, and ethically responsible for the code they commit to the repository, regardless of whether a generative AI produced it. They must strictly adhere to the Acceptable Use Policy, rigorously validate inputs, and proactively reject insecure or hallucinated AI suggestions. For many teams, this responsibility now includes collaborating with an internal AI automation stack that codifies routine checks and approvals so developers can focus on higher-level design instead of repetitive busywork.

Conclusion

The deployment of AI-generated code presents a profoundly transformative opportunity for B2B enterprises, offering unparalleled advantages in development speed, cost reduction, and market agility. However, the empirical data unequivocally demonstrates that generative AI models are not inherently secure; they are highly susceptible to reproducing critical vulnerabilities, hallucinating logic, and violating strict architectural boundaries. For regulated enterprises operating in finance, healthcare, telecommunications, and high-tech, the unmanaged deployment of this technology poses unacceptable legal, financial, and reputational risks.

To harness the immense power of AI safely, organizations must abandon ad-hoc oversight and immediately implement a rigorous, systemic approval framework. By anchoring their processes to the authoritative NIST AI RMF and OWASP security guidelines, establishing strict dual-track review workflows, and enforcing immutable CI/CD branch policies within robust environments like Azure DevOps, enterprises can successfully mitigate the risks of AI generation.

The future of enterprise software engineering is undeniably AI-assisted. However, the organizations that ultimately dominate their markets will not be those that simply generate code the fastest, but those that architect the most resilient, automated frameworks to govern, audit, and secure that code at scale. By embedding compliance directly into the deployment pipeline and applying the principles of Rapid Agile Deployment, technical leaders can empower their engineering teams to innovate aggressively while maintaining the ironclad security posture demanded by global regulators.

Frequently Asked Questions

What approval process should regulated enterprises use for AI-generated code?

Regulated enterprises must utilize a rigorous, multi-stage, hybrid approval pipeline. This process begins with strict environment isolation and local pre-commit hooks that catch obvious errors and data leaks before code leaves the developer's machine. It then moves to a dual-track review system where automated SAST, DAST, and SCA tools scan for known CVEs and structural flaws (like SQL injection or weak cryptography), followed by mandatory human peer review focused on business logic. Finally, the code must pass through enforced CI/CD branch policies (e.g., Azure DevOps build validation and status checks) that block the merge of any code failing compliance or security benchmarks.
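A local pre-commit hook for data leaks can be as simple as a pattern scan over the files being committed. The sketch below is a minimal illustration and not a replacement for a dedicated secret scanner. The three rules are examples only, and the function names are hypothetical. Hook frameworks such as pre-commit typically pass the staged file names as arguments, which is what main() assumes.

```python
import re

# A few illustrative rules; real secret scanners ship far larger, tuned rule sets.
RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded-credential": re.compile(
        r"(?i)(password|passwd|secret|api[_-]?key|token)\s*[:=]\s*[\"'][^\"']{4,}[\"']"
    ),
}


def scan_for_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every line matching a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in RULES.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


def main(paths: list[str]) -> int:
    """Scan the given files; a non-zero return value should abort the commit."""
    failed = False
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as handle:
            for lineno, rule in scan_for_secrets(handle.read()):
                print(f"{path}:{lineno}: possible secret ({rule})")
                failed = True
    return 1 if failed else 0
```

Wired up as a hook, a small entry point would call sys.exit(main(sys.argv[1:])). Blocking locally keeps the secret out of the repository history entirely, which is far cheaper than rotating it after a push.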

Who should be responsible for reviewing AI-assisted output?

Accountability is hierarchical but functionally rests firmly on the human developer. The developer who initiates the pull request is fundamentally responsible for the AI's output. A secondary human peer reviewer is required to validate business logic, architectural alignment, and complex security threats. At the enterprise level, the CISO is accountable for the overarching policy, while MLOps and AI Governance Officers are responsible for ensuring the automated review tools, infrastructure, and branch policies are functioning correctly and logging evidence.

What checks should be required before merging code?

Before merging into a main branch, AI-generated code must successfully pass:

- Automated syntax and dependency linting checks.

- Static Application Security Testing (SAST) specifically tuned for AI hallucination patterns (e.g., identifying hardcoded secrets and SQL queries built by string concatenation).

- Dynamic Application Security Testing (DAST) for runtime evaluation in ephemeral environments.

- Software Composition Analysis (SCA) to verify no malicious or unlicensed packages were introduced by the model.

- An OWASP-aligned manual checklist verifying authentication, authorization, session management, and strict input validation.

How do compliance and audit teams fit into the process?

Compliance and audit teams shift their operational model from periodic, disruptive manual sampling to continuous, real-time monitoring. They utilize AI-enhanced audit tools and browser automation to automatically capture timestamped evidence of branch policy adherence, SAST scan results, and human approval sign-offs for 100% of repository transactions. They are responsible for mapping the engineering workflows to specific regulatory requirements (like the EU AI Act, FINRA Rule 3110, or HIPAA) and maintaining the organization's overarching AI Risk Matrix.

What documentation should be maintained?

Enterprises must maintain comprehensive, easily accessible documentation, including an inventory of all approved and restricted AI tools (combating the risk of "Shadow AI"). For every pull request, version control logs must explicitly indicate the origin of the code (AI vs. Human) via commit tags. Organizations must also retain detailed interaction logs (prompts and outputs), evidence of passing security scans, explicit human approval signatures, and Model Data Cards detailing the parameters, training data, and known limitations of any internal custom models.

How can enterprises ensure traceability and accountability?

Traceability is ensured by configuring version control systems (like Git within Azure DevOps) to require specific metadata tags identifying AI-generated commits. Branch policies must link every pull request to a documented work item or Jira ticket, proving the code fulfills a specific, authorized business requirement. Accountability is enforced through strict Role-Based Access Control (RBAC), ensuring that only authorized senior engineers can approve and merge high-risk modifications, leaving an immutable digital paper trail for auditors.
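One way to implement metadata tags on AI-generated commits is a Git trailer convention enforced by a commit-msg hook. The sketch below assumes two hypothetical trailers, "AI-Assisted: yes|no" and "Work-Item: <numeric id>". It uses a simplified parser that reads only the final paragraph of the message and ignores the continuation-line rules that git interpret-trailers supports.

```python
import re

# Hypothetical trailer convention: "AI-Assisted: yes|no" and "Work-Item: <id>".
TRAILER_LINE = re.compile(r"^([A-Za-z][A-Za-z0-9-]*):\s*(.+)$")


def parse_trailers(message: str) -> dict[str, str]:
    """Parse 'Key: value' lines in the final paragraph of a commit message."""
    paragraphs = [p for p in message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return {}  # a subject line alone cannot carry a trailer block
    trailers = {}
    for line in paragraphs[-1].splitlines():
        match = TRAILER_LINE.match(line.strip())
        if match is None:
            return {}  # the last paragraph is ordinary body text
        trailers[match.group(1).lower()] = match.group(2).strip()
    return trailers


def commit_problems(message: str) -> list[str]:
    """Return traceability problems with a commit message (empty means OK)."""
    trailers = parse_trailers(message)
    problems = []
    if trailers.get("ai-assisted") not in ("yes", "no"):
        problems.append("missing or invalid AI-Assisted trailer")
    if not re.fullmatch(r"\d+", trailers.get("work-item", "")):
        problems.append("missing or invalid Work-Item trailer")
    return problems
```

Git passes a commit-msg hook the path of the file holding the proposed message, so the hook reads that file and exits non-zero when commit_problems returns anything. Local hooks can be skipped with git commit --no-verify, so the same check belongs in a server-side status check as well.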

Which code categories should require stricter review?

Stricter, multi-layered human review is absolutely mandatory for any code interfacing with highly sensitive domains. This includes authentication and authorization modules, cryptographic implementations, secrets management, and payment processing or financial transaction logic (heavily governed by SOX or PCI-DSS). Additionally, any code handling Protected Health Information (PHI), Personally Identifiable Information (PII), or architectural changes to the CI/CD pipeline and Kubernetes manifests themselves demands extreme scrutiny and mandatory sign-off from specialized security personnel to prevent systemic compromise. Where those systems are also being augmented with AI copilots or autonomous workers, many organizations follow the build-vs-buy thinking in When to Move Off Zapier to keep their most sensitive automations under a tightly controlled, custom architecture.

Supporting Articles

- https://owasp.org/www-project-ai-security-and-privacy-guide/

- Navigating compliance risks in AI code analysis

- https://www.nist.gov/itl/ai-risk-management-framework

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.