Catch Violations Before They Happen: AI Compliance Bots

March 20, 2026 / Bryan Reynolds

How Do We Catch Compliance Violations Before They Happen? The Ultimate Guide to Internal AI Compliance Bots

The enterprise technology landscape has crossed a critical and irreversible threshold. The rapid acceleration of digital transformation, coupled with the exponential proliferation of generative artificial intelligence, has fundamentally altered the attack surface and regulatory risk profile of modern businesses. Executives at B2B firms—spanning finance, healthcare, real estate, gaming, education, telecom, and high-tech software—are facing a profound operational dilemma. As business units enthusiastically leverage AI to generate code, draft marketing copy, and process documents at unprecedented speeds, the traditional, manual mechanisms for compliance and risk management are failing to keep pace.

The prevailing questions echoing through boardrooms and executive strategy sessions today reflect this acute anxiety: “How can AI help us stay compliant? Can we automate PII redaction? How do we catch compliance violations before they happen?”

The answer to these questions requires an architectural shift from reactive, post-incident auditing to proactive, automated interdiction. This is the emerging domain of the internal compliance bot—a localized, highly specialized artificial intelligence agent designed to scan, evaluate, and sanitize code commits, marketing assets, and outbound communications in real-time, long before they ever leave the corporate network.

Following the transparent, comprehensive approach to solving complex business problems, this report explores the mechanics, the economics, and the practical implementation of AI-driven internal risk management. It provides actionable intelligence for visionary CTOs engineering secure pipelines, strategic CFOs managing risk and vendor budgets, innovative Marketing Directors navigating strict advertising regulations, and driven Heads of Sales seeking to close enterprise deals without compliance bottlenecks.

The High Stakes of Non-Compliance in the Generative Era

To truly grasp the necessity of automated internal risk management, one must first examine the current economic realities of data breaches, regulatory enforcement, and the hidden costs of shadow IT. The financial penalties for non-compliance and the collateral damage of data exfiltration have reached historic, business-threatening highs.

The IBM 2025 Cost of a Data Breach Analysis

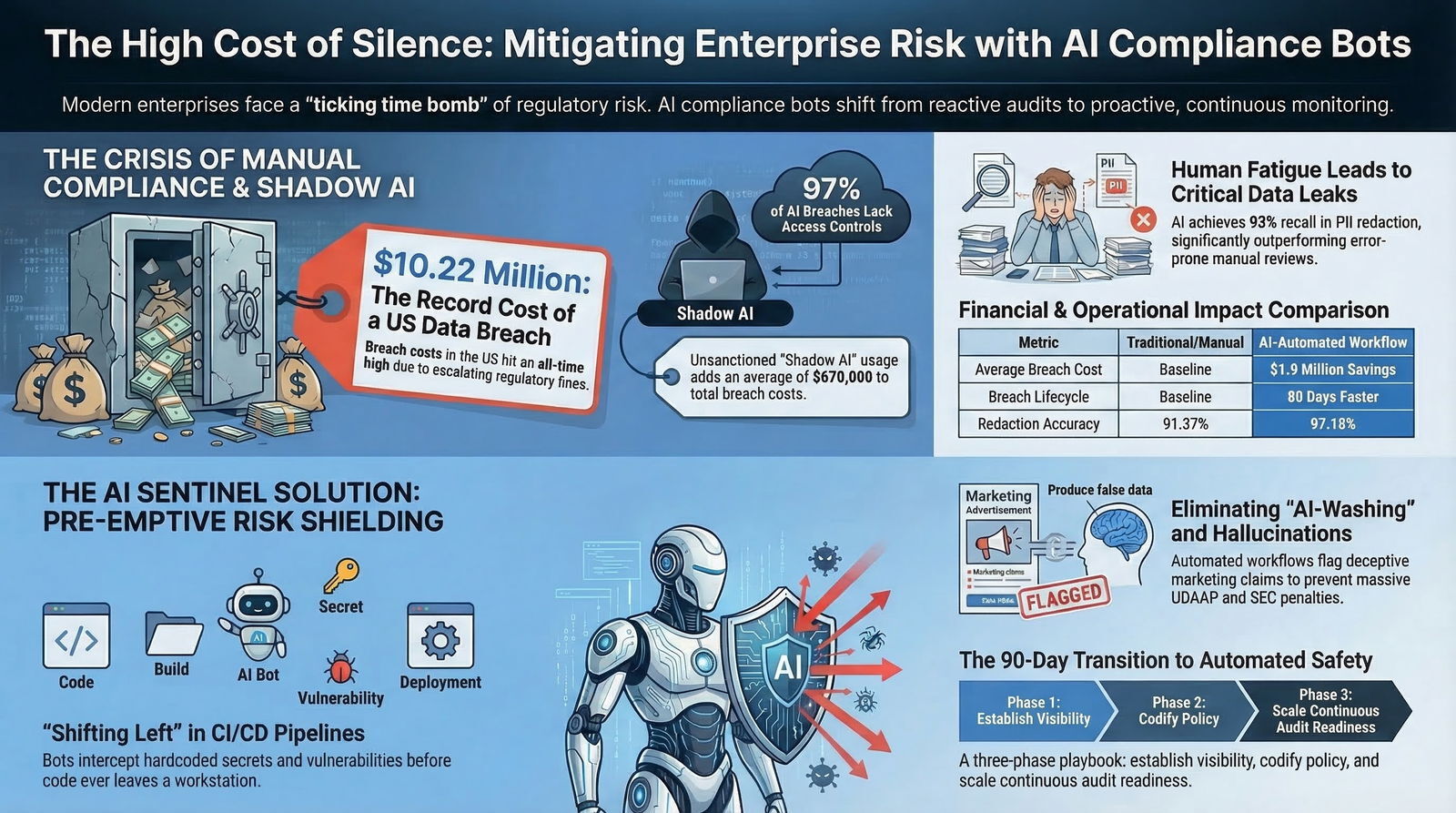

According to the exhaustive 2025 Cost of a Data Breach Report published by IBM, while the global average cost of a data breach saw a slight reduction to $4.44 million (a 9% decrease from 2024), the financial impact within the United States surged in the opposite direction.

The average cost of a U.S. data breach increased by 9.2% to an all-time high of $10.22 million.

This stark geographic disparity is largely attributed to a highly aggressive regulatory environment in the U.S., marked by escalating fines from federal agencies and inherently higher costs associated with incident detection, legal escalation, and consumer remediation.

The healthcare sector remains the most severely impacted industry, suffering the highest average breach costs of any vertical for 14 consecutive years. In 2025, the average healthcare data breach in the U.S. cost $7.42 million. Furthermore, these breaches required an average of 279 days to identify and contain—more than five weeks longer than the global average breach lifecycle across other industries. The financial services sector followed closely, bearing average costs of $5.9 million per incident.

However, the 2025 data also reveals a highly promising counter-trend that serves as the business case for AI compliance investments: organizations that extensively deployed security AI and automation tools cut their breach lifecycle by 80 days and saved an average of $1.9 million per incident compared to organizations relying on legacy, manual security postures.

The Proliferation of "Shadow AI"

The introduction of generative AI into the corporate workforce has exacerbated these baseline risks. "Shadow AI"—the unsanctioned, unmonitored use of public consumer AI tools (like public ChatGPT or Claude interfaces) by employees attempting to boost their productivity—was cited as a direct contributing factor in 20% of all data breaches analyzed by IBM.

When an employee pastes a proprietary customer list or a block of proprietary code into a public Large Language Model (LLM) to "format it quickly," they are effectively causing a data exfiltration event. Shadow AI incidents added an average of $670,000 to breach costs and frequently exposed massive volumes of personally identifiable information (PII) to public models. Astonishingly, 97% of AI-related security incidents occurred in organizations that lacked proper AI access controls, and 63% operated without established AI governance policies. For a deeper look at how to govern AI-assisted development itself and avoid this kind of untracked usage, see our analysis of vibe coding risks and AI governance for 2026 and beyond.

This aggressive threat landscape dictates that passive, periodic compliance testing is entirely obsolete. What modern B2B organizations require is an active, persistent layer of automated governance operating directly within the digital workflows of the enterprise.

The Anatomy of Internal Compliance Bots

Internal compliance bots are highly specialized, often agentic AI systems integrated directly into enterprise technology stacks. Unlike generative LLMs designed to creatively write text or code, compliance bots operate as analytical sentries and strict gatekeepers. Their primary function is to ingest structured and unstructured data streams—ranging from incoming PDF documents and internal chat messages to live code commits and outbound marketing assets—and evaluate that data against a strict ruleset derived from regulatory frameworks (e.g., HIPAA, SOC2, GDPR, CCPA) or internal corporate playbooks.

These tools represent a fundamental shift from reactive risk management to proactive operational readiness. According to the 2025 Global Compliance Risk Benchmarking Survey, 57% of compliance officers view AI and predictive analytics as top compliance priorities, reflecting a broader market move toward connected risk data. Efficiency and cost savings are the primary motivators for this adoption, with larger organizations reporting overwhelmingly high satisfaction levels due to the automation of repetitive, manual governance tasks.

Core Mechanisms of Operation

When properly configured, these autonomous or semi-autonomous agents execute three primary functions:

- Continuous Integration Scanning: Monitoring systems of record, communication channels, and code repositories in real time, serving as an invisible layer of friction that only triggers when a policy is violated.

- Contextual Semantic Analysis: Utilizing Natural Language Processing (NLP) to understand the actual semantic meaning of data. This drastically reduces the "alert fatigue" and false positives caused by legacy keyword-matching tools that lacked contextual awareness.

- Automated Interdiction and Routing: Redacting sensitive information on the fly, blocking non-compliant code from merging into the main branch, or routing problematic marketing assets to human legal counsel for a final decision before publication.

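| Dimension | Legacy Risk Management | AI Compliance Bot Automation |
|---|---|---|
| Audit Frequency | Periodic (Quarterly/Annually) | Continuous, real-time monitoring |
| Detection Method | Manual review, static keyword matching | Contextual semantic analysis (NLP) |
| Action Taken | Post-incident remediation and fines | Automated redaction, blocking, or routing to human review before release |
| Resource Dependency | High (Requires large teams of human auditors) | Lower (repetitive manual governance tasks are automated) |
| Primary Challenge | Fatigue, human error, slow velocity | Requires guardrails and human oversight |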

Use Case 1: Automating PII Redaction at Scale

The safeguarding of Personally Identifiable Information (PII) and Protected Health Information (PHI) is a foundational, non-negotiable requirement across all regulated industries. Traditional redaction methods demand large teams of human reviewers manually reading documents, watching videos, or listening to audio to draw digital black boxes over sensitive data.

This process is inherently slow, highly expensive, and profoundly susceptible to human error—a tired reviewer missing a single Social Security Number in a 400-page PDF can trigger a multi-million dollar GDPR or HIPAA violation. As more organizations move their document handling and back-office workflows into AI-powered software environments, the pressure to modernize redaction with automation only increases.

With the advent of advanced NLP and LLM technologies, automated PII redaction has transitioned from a theoretical concept to a highly reliable operational reality. AI-driven redaction bots do not merely look for standard regex patterns (like Social Security Numbers structured as XXX-XX-XXXX); they analyze the surrounding syntax to identify unstructured PII, such as names, obscure geographical identifiers, or contextual health references.
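The difference between pattern matching and context-aware detection can be illustrated with a minimal Python sketch. It is not how any particular product works: the cue words (`patient`, `customer`, `borrower`) and the `[REDACTED:...]` labels are hypothetical, and the context rule is a crude stand-in for the trained Named Entity Recognition model a production system would use.

```python
import re

# Pattern-based detectors for structured identifiers (illustrative, not exhaustive).
STRUCTURED_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

# Hypothetical context rule standing in for an NER model: a capitalized
# multi-word name that follows one of these cue words is treated as PII.
# Only the cue is case-insensitive; the name itself must be capitalized.
CONTEXT_NAME = re.compile(
    r"(?P<cue>\b(?i:patient|customer|borrower)(?: name)?:?\s+)"
    r"(?P<name>[A-Z][a-z]+(?: [A-Z][a-z]+)+)"
)


def redact(text: str) -> tuple[str, list[str]]:
    """Return the redacted text and the list of PII types that were found."""
    findings: list[str] = []

    def _replace_name(match: re.Match) -> str:
        findings.append("NAME")
        return f"{match.group('cue')}[REDACTED:NAME]"

    # Context-aware pass first, then the structured patterns.
    text = CONTEXT_NAME.sub(_replace_name, text)
    for label, pattern in STRUCTURED_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{label}]", text)
        findings.extend([label] * count)
    return text, findings


if __name__ == "__main__":
    sample = "Patient: Maria Lopez, SSN 123-45-6789, email maria.lopez@example.com."
    print(redact(sample)[0])
```

A regex alone would catch the SSN and email address but miss the name; the context rule is what picks up "Maria Lopez" while leaving a sentence like "The patient was admitted on Monday." untouched.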

Performance and Efficacy Metrics

Empirical data overwhelmingly supports the superiority of AI redaction over manual processes. In rigorous testing, AI-driven redaction tools have demonstrated profound improvements in both accuracy and operational velocity:

- Accuracy Advancements: A recent academic evaluation of specialized redaction software (iDox.ai) demonstrated that AI intervention increased redaction accuracy from a manual human baseline of 91.37% to an AI-assisted rate of 97.10%. This improvement was statistically significant (p-value = 0.00004), suggesting that AI assistance helps reviewers sustain accuracy across large document sets where fatigue would otherwise set in. Another study involving Imprima LLM-driven technology showed an average recall rate of 93%, meaning the tool located the vast majority of terms requiring redaction. Recall alone does not measure false positives, so it is best read alongside precision.

- Time and Cost Compression: In a real-world enterprise application by TCDI, process-driven AI was utilized to redact PII across 439,000 documents. The project was completed in just five weeks—compressing the timeline by two entire months compared to manual projections—and yielding a 38% direct cost savings for the client. Similarly, Kanini reported a 50% reduction in overall redaction time and a 70% decrease in manual human effort, achieving 90-95% accuracy on highly diverse, unstructured PDF formats through AI-driven matching.
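For readers who want to sanity-check figures like these, the sketch below shows how recall and precision are conventionally computed for a redaction run, and what the reported accuracy change implies for missed items. It assumes that "accuracy" can be read as the complement of an error rate, which may not match how the cited study defined it; the function names are illustrative.

```python
def redaction_metrics(expected: set[str], redacted: set[str]) -> dict[str, float]:
    """Recall: share of required redactions that were made.
    Precision: share of redactions made that were actually required."""
    true_positives = len(expected & redacted)
    recall = true_positives / len(expected) if expected else 1.0
    precision = true_positives / len(redacted) if redacted else 1.0
    return {"recall": recall, "precision": precision}


def relative_error_reduction(accuracy_before: float, accuracy_after: float) -> float:
    """Fraction by which the error rate shrinks; accuracies are percentages."""
    error_before = 100.0 - accuracy_before
    error_after = 100.0 - accuracy_after
    return (error_before - error_after) / error_before


if __name__ == "__main__":
    # Accuracy figures quoted above: 91.37% manual, 97.10% AI-assisted.
    print(f"{relative_error_reduction(91.37, 97.10):.1%}")
```

Under that assumption, moving from 91.37% to 97.10% cuts the error rate from 8.63% to 2.90%, roughly a two-thirds reduction.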

In enterprise healthcare environments, the financial impact of deploying AI for data sanitization, coding, and billing is immense. Case studies reveal that healthcare organizations have slashed annual coding costs by $1.3 million, boosted charge capture by 10%, and cut denied and unbilled claims in half. Another provider reported a 64% reduction in manual coding, trimmed coding delays by 3.6 days, and automated 71% of their processes, fundamentally accelerating cash flow and ensuring strict HIPAA compliance prior to data transmission.

Use Case 2: Securing the Codebase via CI/CD Pipeline Scanning

For B2B software engineering teams, the primary vector of compliance risk often originates directly from the developers' keyboards. Inadvertently committing hardcoded API keys, database connection strings, or unencrypted PII to a source code repository can put an organization in breach of SOC 2, ISO 27001, and GDPR requirements.

The modern approach to neutralizing this risk relies on "shift-left" security methodologies. This involves embedding automated compliance bots directly into Continuous Integration and Continuous Deployment (CI/CD) pipelines. By integrating scanning tasks into the build pipeline, every single pull request and code commit is autonomously evaluated before the code ever reaches production environments.
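As a rough illustration of what such a pipeline gate does, here is a minimal Python sketch that inspects only the added lines of a unified diff and returns a failing exit code when a rule matches. The rule names and regular expressions are simplified assumptions, not the detection logic of any commercial scanner.

```python
import re

# Illustrative rules only; real scanners ship far larger, vendor-specific rule sets.
SECRET_PATTERNS = {
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "password_assignment": re.compile(
        r"(?i)\b(?:password|passwd|pwd)\s*[:=]\s*['\"][^'\"]{6,}['\"]"
    ),
    "postgres_url_with_password": re.compile(r"postgres(?:ql)?://[^\s:/@]+:[^\s@]+@"),
}


def scan_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (diff line number, rule name) for each added line that matches a rule."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Inspect added lines only; skip removals, context, and the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings


def gate(diff_text: str) -> int:
    """Exit code for a CI step: 0 lets the build continue, 1 fails it."""
    return 1 if scan_diff(diff_text) else 0
```

A pipeline step would pass the pull request diff to `gate` and exit with its return value, so a single match stops the build before the code is merged.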

The Mechanics of Infrastructure Scanning

Enterprise-grade custom software development relies on robust, complex infrastructure. Code is frequently written in local environments like VS Code or VS 2022, managed through platforms like Azure DevOps On-Prem, containerized via Docker, and orchestrated on Kubernetes clusters managed by Rancher, running on Harvester HCI servers. Data is stored in Postgres and SQL Server instances, with Postgres typically administered through pgAdmin. Within this vast ecosystem, compliance bots act as automated, unyielding gateways.

For organizations seeking to bridge the gap between rapid development and strict compliance, custom software development and application management partners provide a critical lifeline. Firms like Baytech Consulting specialize in delivering a Tailored Tech Advantage—solutions custom-crafted with cutting-edge tech—while maintaining rapid, agile, and secure deployments. Integrating compliance bots into this stack ensures that enterprise-grade quality is never compromised for speed. This is especially true when teams embrace an AI-native SDLC that bakes security and compliance checks into every phase of design, build, and deployment.

When integrated into such an environment, the bots perform several critical functions:

- Secret and PII Detection: Tools such as GitHub Advanced Security for Azure DevOps, Microsoft Defender for Cloud CLI, and Datadog Secret Scanning utilize advanced pattern recognition and NLP to scan code repositories for exposed credentials and PII. If a developer attempts to commit code containing a secure Microsoft 365 administrative token or a Postgres database password, the bot intercepts the commit, fails the pipeline immediately, and issues a pull-request annotation requiring immediate developer remediation before the build can proceed.

- Dependency and Vulnerability Analysis: Open-source libraries frequently harbor known vulnerabilities. Dependency scanning bots cross-reference the libraries a commit pulls in against public vulnerability databases (such as the CVE list). If a developer attempts to pull in a compromised open-source component, the bot flags the alert in the build log, blocks the integration, and offers straightforward remediation guidance on which version of the library is secure.

- Infrastructure as Code (IaC) and Container Scanning: In modern containerized environments, misconfigurations in the infrastructure setup can lead to massive data leaks. In Kubernetes environments managed by Rancher, tools like kube-bench autonomously verify that clusters are deployed in strict adherence to Center for Internet Security (CIS) benchmarks. Advanced Cloud Native Application Protection Platforms (CNAPP), such as AccuKnox, utilize cutting-edge eBPF (Extended Berkeley Packet Filter) technology to provide deep runtime visibility, enforcing zero-trust policies and micro-segmentation across Azure VMs and containers without slowing down the CI/CD workflow.
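The dependency check described in the list above can be sketched in a few lines of Python. The advisory table, the package name `examplelib`, and the advisory ID are all hypothetical; a real bot would query a maintained vulnerability feed instead of an in-memory dictionary, and would need a fuller version parser than the simple dotted-integer one assumed here.

```python
# Hypothetical advisory data; a real check would pull this from a vulnerability feed.
ADVISORIES = {
    "examplelib": {"fixed_in": "2.4.1", "advisory": "EXAMPLE-2026-0001"},
}


def parse_version(version: str) -> tuple[int, ...]:
    """Parse a plain dotted version such as '2.4.1' (no pre-release tags)."""
    return tuple(int(part) for part in version.split("."))


def check_requirements(lines: list[str]) -> list[str]:
    """Return one remediation message per pinned package below its fixed version."""
    findings = []
    for line in lines:
        line = line.strip()
        # Only exact pins of the form name==version are evaluated in this sketch.
        if not line or line.startswith("#") or "==" not in line:
            continue
        name, version = (part.strip() for part in line.split("==", 1))
        advisory = ADVISORIES.get(name.lower())
        if advisory and parse_version(version) < parse_version(advisory["fixed_in"]):
            findings.append(
                f"{name} {version}: {advisory['advisory']}, "
                f"upgrade to >= {advisory['fixed_in']}"
            )
    return findings
```

An empty result lets the build proceed; any finding would be written to the build log and used to block the merge.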

This level of integrated automation ensures that enterprise-grade quality is maintained consistently. By automating the auditing of underlying code structures and infrastructure, organizations sharply reduce their reliance on manual peer code reviews for security compliance, which frequently fail to spot deeply obfuscated vulnerabilities. For many teams, adopting a modern .NET, Docker, and Kubernetes stack provides the right foundation for plugging in these compliance agents with minimal friction.

Use Case 3: Marketing Copy and the AI-Washing Trap

The marketing and sales functions of B2B enterprises have aggressively adopted generative AI to scale content production. Chatbots generate localized website copy, LLMs draft email sequences in tools like Microsoft Copilot, and AI agents schedule multi-channel social media posts.

However, this proliferation of synthetic content exponentially increases the surface area for severe regulatory violations.

The Risks of Synthetic Content and "AI-Washing"

In marketing compliance, the volume of AI-generated content vastly outpaces the capacity of human review teams to read it. In the first quarter of 2024 alone, automated monitoring platforms flagged 1 in 5 marketing assets for potential compliance issues, underscoring the urgent need for scaled monitoring. At the same time, investors are pushing back on shallow “AI-washing” across the industry, as outlined in our playbook on why generic AI startups are dead and how to build real moats.

The primary risks for marketing directors and sales leaders include:

- AI-Washing: This involves overstating the extent to which a product actually "uses AI" or making unproven, exaggerated claims about AI performance. The Securities and Exchange Commission (SEC) has actively penalized firms for making false and misleading statements regarding their AI capabilities, including settled charges against investment advisers totaling $400,000 in penalties in early 2024.

- Hallucinated Claims and Offer Inflation: LLMs frequently fabricate specific details to make marketing copy sound more persuasive—inventing phrases like "instant approval," "guaranteed lowest rate," "100% secure," or "no hidden fees." In heavily regulated industries like mortgage lending, banking, and finance, these fabrications map directly to Unfair, Deceptive, or Abusive Acts or Practices (UDAAP) violations.

- Chatbot Misrepresentation: Consumer-facing chatbots that hallucinate policy details, pricing, or refund eligibility expose companies to severe legal liability. In the landmark 2024 case Moffatt v. Air Canada, a tribunal found the airline liable for negligent misrepresentation after its customer service chatbot provided a passenger with inaccurate bereavement fare information. Crucially, the tribunal ruled that standard "terms and conditions" disclaimers did not shift responsibility away from the company; businesses are legally bound by the representations made by their automated bots.

Building a Defensible AI Marketing Compliance Workflow

To counteract these massive risks without killing marketing growth, forward-thinking organizations deploy compliance bots upstream in the content creation process. The operational architecture for a defensible marketing compliance program relies on strict automation workflows rather than post-publication takedowns.

A robust AI compliance automation workflow functions as an assembly line: Intake → Map → Pull → Draft → Verify → Review → Export.

- Claim Inventory and Negative Libraries: The compliance bot is grounded in an authorized internal knowledge base consisting of every approved factual, comparative, and pricing claim the brand is legally permitted to make. Simultaneously, it is fed a "negative claim library"—a database of prohibited phrases and regulatory red flags specific to the industry (e.g., "guaranteed approval" in finance).

- Automated Pre-Screening: As marketing copy is drafted by human creatives or generative AI tools, the internal compliance bot scans the text in real-time. If it detects a prohibited phrase, an unsubstantiated claim, or an unapproved use of copyrighted material, it immediately flags the text and prevents the asset from progressing to the next stage of approval.

- Human-in-the-Loop Escalation: Routine content that passes the scan is routed for standard publication. However, complex claims or high-risk assets are automatically routed by the bot to designated compliance officers or legal counsel. The software documents all revisions, approvals, and the exact prompts utilized, creating an immutable audit trail required by regulators to prove ongoing oversight. Architecturally, this mirrors the 90/10 Human-in-the-Loop “trust architecture” described in our report on why 90% automation and 10% humanity wins in 2026.

| Marketing Workflow Step | Traditional Manual Process | AI Compliance Bot Process |
|---|---|---|
| Drafting | Copywriters draft text in silos. | Humans or LLMs draft text within monitored platform. |
| Fact-Checking | Legal teams manually verify claims against static PDF brand guidelines. | Bot instantly verifies claims against a live, digital Claim Inventory database. |
| Risk Detection | Relies on human memory to spot prohibited UDAAP phrases. | Bot automatically flags any phrase matching the Negative Claim Library. |
| Approval Routing | Messy email chains, lost attachments, version control issues in Google Drive. | Automated, rules-based routing directly to the correct stakeholder. |
| Audit Trail | Fragmented documentation, difficult to produce during regulatory audits. | Immutable log of all prompts, edits, timestamps, and approvals. |
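The pre-screening and escalation steps can be sketched as a small Python function. The negative claim library below reuses the phrases mentioned earlier in this section; the approved claim (`rates from 6.1% apr`) and the rule that any sentence containing a number counts as a factual claim are hypothetical simplifications of what a real claim inventory would do.

```python
import re
from dataclasses import dataclass, field

# Prohibited phrases taken from the examples in this section.
NEGATIVE_CLAIMS = [
    "guaranteed approval", "instant approval", "guaranteed lowest rate",
    "100% secure", "no hidden fees",
]
# Hypothetical claim inventory: normalized sentences legal has already approved.
APPROVED_CLAIMS = {"rates from 6.1% apr"}


@dataclass
class ScreenResult:
    decision: str  # "blocked", "legal_review", or "publish"
    reasons: list[str] = field(default_factory=list)


def screen_copy(text: str) -> ScreenResult:
    lowered = text.lower()
    hits = [phrase for phrase in NEGATIVE_CLAIMS if phrase in lowered]
    if hits:
        return ScreenResult("blocked", [f"prohibited phrase: {h}" for h in hits])

    # Assumption: a sentence containing a digit is a factual claim to verify.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    unverified = [
        s for s in sentences
        if re.search(r"\d", s) and s.lower().rstrip(".!?") not in APPROVED_CLAIMS
    ]
    if unverified:
        return ScreenResult("legal_review", [f"unverified claim: {s}" for s in unverified])
    return ScreenResult("publish")
```

Prohibited phrases block the asset outright, unverified numeric claims are escalated to a human reviewer, and everything else proceeds to publication. The returned `reasons` list is the kind of record an audit trail would store.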

Sector-Specific Compliance Nuances

The implementation of internal compliance bots is not a one-size-fits-all endeavor. The architecture must vary significantly depending on the regulatory frameworks governing specific industries. B2B software architectures must be deeply tailored to accommodate these divergent, highly strict requirements.

Real Estate, Mortgage, and Finance

In the real estate and mortgage sectors, predictive analytics and agentic AI are rapidly transforming operations. AI agents are utilized to extract relevant data from complex financial documents to assess default risk, summarize closing documents, and even automate property management workflows like vendor coordination, payment processing, and maintenance scheduling.

However, these applications carry profound Fair Housing Act and anti-discrimination risks. Real estate compliance bots must be explicitly programmed to monitor for algorithmic discrimination. Emerging state regulations, such as the Colorado Artificial Intelligence Act (CAIA)—set to take effect in June 2026—require deployers of high-risk AI systems to disclose how they manage foreseeable risks of algorithmic discrimination and to prominently notify consumers when they are interacting with an AI system rather than a human. Compliance bots in this sector must continuously audit internal algorithms to ensure that pricing models, loan originations, and property recommendations do not inadvertently rely on protected demographic characteristics, preventing systemic redlining.
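One simple signal such an audit might compute is the ratio between the lowest and highest approval rates across groups. The Python sketch below is illustrative only: the 0.8 threshold borrows the "four-fifths" rule of thumb from U.S. employment-selection guidance and is an assumption here, not a legal test under the Fair Housing Act or the CAIA, and the group labels are placeholders.

```python
from collections import defaultdict

# Assumed review threshold, borrowed from the four-fifths rule of thumb.
REVIEW_THRESHOLD = 0.8


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}


def adverse_impact_ratio(decisions: list[tuple[str, bool]]) -> float:
    """Lowest group approval rate divided by the highest."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


def needs_review(decisions: list[tuple[str, bool]]) -> bool:
    return adverse_impact_ratio(decisions) < REVIEW_THRESHOLD
```

A ratio below the threshold does not prove discrimination; it is a trigger for a human fair-lending review of the model and its inputs.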

Education (LMS) and EdTech

For organizations developing Learning Management Systems (LMS) or EdTech platforms, data privacy regarding student and employee records is paramount.

Federal agencies adopting LMS platforms must adhere to stringent frameworks such as FISMA (Federal Information Security Management Act), FedRAMP, and NIST 800-53. Compliance bots in an LMS environment continuously scan system logs and audit access controls, ensuring that PII related to student performance, disability accommodations, and onboarding is encrypted. They also verify that role-based access controls (RBAC) are strictly enforced to prevent unauthorized data exposure among different administrative tiers. Without automated compliance tracking, weak authentication protocols and unencrypted PII vastly increase breach risks and jeopardize federal contracts. For these public-sector and education builds, leaning on a mature enterprise application architecture is essential to keep AI features aligned with security baselines.
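The two checks described above, role-based access and encryption of PII fields, reduce to simple set and flag comparisons once the data is available. The roles, resources, and record shapes in this Python sketch are hypothetical; an actual LMS would supply them from its own access logs and schema metadata.

```python
# Hypothetical role-to-resource grants for an LMS.
ROLE_PERMISSIONS = {
    "instructor": {"grades"},
    "accommodations_officer": {"grades", "disability_accommodations"},
}


def rbac_violations(access_log: list[dict]) -> list[dict]:
    """Return log entries where a role touched a resource it is not granted."""
    return [
        entry for entry in access_log
        if entry["resource"] not in ROLE_PERMISSIONS.get(entry["role"], set())
    ]


def unencrypted_pii_fields(schema: list[dict]) -> list[str]:
    """Return names of fields marked as PII that are not encrypted at rest."""
    return [f["name"] for f in schema if f["contains_pii"] and not f["encrypted"]]
```

Unknown roles are treated as having no permissions, so any access they make is reported rather than silently allowed.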

The Gaming Industry (iGaming)

In the highly regulated iGaming sector, AI bots operate as real-time, transparent auditors. They automatically verify payout algorithms, analyze player behavior for signs of problem gambling, and monitor random number generators (RNG) to guarantee systemic fairness and adherence to localized jurisdictional regulations. This concept of "Compliance-as-a-Service" utilizes transparent, automated reporting to build player trust and accelerate market entry across diverse regulatory borders. In 2026, compliance in gaming is shifting from a cost center to a core competitive advantage that sustains brand credibility.
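As one example of what RNG monitoring can mean in practice, the Python sketch below applies a chi-square goodness-of-fit test to a batch of six-sided outcomes. It is a deliberately small illustration: certified RNG testing uses much larger statistical batteries, and the choice of a 5% significance level is an assumption.

```python
from collections import Counter

# Chi-square critical value for 5 degrees of freedom at the 5% significance level.
CRITICAL_DF5_P05 = 11.070


def chi_square_uniform(outcomes: list[int], faces: int = 6) -> float:
    """Chi-square statistic against a uniform distribution over 1..faces.
    Outcomes are assumed to lie in that range."""
    counts = Counter(outcomes)
    expected = len(outcomes) / faces
    return sum(
        (counts.get(face, 0) - expected) ** 2 / expected
        for face in range(1, faces + 1)
    )


def looks_biased(outcomes: list[int]) -> bool:
    """True when a six-faced sample deviates from uniform at the 5% level."""
    return chi_square_uniform(outcomes, faces=6) > CRITICAL_DF5_P05
```

A statistic above the critical value flags the batch for investigation; because a fair generator will occasionally exceed it by chance, a single flag is a prompt for review, not proof of tampering.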

The Build vs. Buy Economic Dilemma

For engineering leaders, visionary CTOs, and strategic CFOs, the decision to implement internal compliance bots inevitably leads to a critical architectural and financial decision: Should the organization build a custom AI compliance layer in-house, or purchase a pre-built commercial Software-as-a-Service (SaaS) solution?

The economic realities of AI maintenance often shock organizations that default to building in-house, assuming it will be a simple wrapper around an OpenAI API. A custom-built enterprise AI governance layer is not a static software project; it is a permanent software commitment requiring continuous tuning, model updates, and infrastructure scaling. This is where many organizations run into the “velocity paradox” of fast AI builds creating long-term overhead—a trap we explore in depth in our research on Agentic Engineering vs. Vibe Coding.

The True Cost of Building In-House

- Upfront Capital Expenditure (CapEx): Developing a custom AI compliance solution typically requires a massive upfront investment ranging between $500,000 and $1.2 million. This budget is largely consumed by hiring specialized Machine Learning engineers ($150k-$250k each), establishing complex infrastructure (vector databases, compute clusters), and managing heavy LLM API usage fees.

- Ongoing Operational Expenditure (OpEx): The ongoing maintenance costs are severely underestimated, averaging between $300,000 and $600,000 annually. This includes the labor of 2-4 full-time compliance engineers and data scientists who must constantly update the model's rulesets, prompts, and validations to align with shifting global regulations (such as the EU AI Act or the upcoming AI regulations in the U.S.). In highly regulated sectors like healthcare and finance, these maintenance costs can inflate by an additional 20% to 40% due to strict data security needs.

- The Hidden Cost of Engineering Attention: The most punitive cost of building in-house is the diversion of core engineering talent. Every sprint dedicated to debugging retrieval-augmented generation (RAG) pipelines for an internal compliance bot, or implementing Single Sign-On (SSO) for an internal tool, is a sprint not spent iterating on the company's primary, revenue-generating product.

- The Compliance Audit Burden: When pursuing enterprise contracts, B2B organizations must present extensive security audits. Explaining the bespoke nuances of a homegrown system to a prospect's security team—answering a 200-question vendor due diligence questionnaire—is vastly more difficult and time-consuming than simply handing over established SOC2 Type II and GDPR certifications provided by a mature commercial platform.
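To see how the CapEx and OpEx ranges quoted above compound, the following Python sketch adds them up over a planning horizon. It assumes maintenance costs are incurred in every year of the horizon, including the first, and applies the regulated-sector uplift to maintenance only; both are modeling assumptions rather than figures from the source.

```python
def build_cost_range(
    years: int,
    capex: tuple[int, int] = (500_000, 1_200_000),
    opex: tuple[int, int] = (300_000, 600_000),
    regulated_uplift: tuple[float, float] = (0.0, 0.0),
) -> tuple[int, int]:
    """Low and high total cost of an in-house build over the given horizon."""
    low = capex[0] + years * opex[0] * (1 + regulated_uplift[0])
    high = capex[1] + years * opex[1] * (1 + regulated_uplift[1])
    return round(low), round(high)


if __name__ == "__main__":
    print(build_cost_range(3))                                 # (1400000, 3000000)
    print(build_cost_range(3, regulated_uplift=(0.20, 0.40)))  # (1580000, 3720000)
```

Over three years the in-house path lands between roughly 1.4 and 3.0 million, and between roughly 1.58 and 3.72 million once the 20% to 40% uplift for healthcare or finance is applied, before counting the diverted engineering attention described above.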

The Strategic Value of Buying (or the Hybrid Approach)

The prevailing consensus among system architects and financial modelers heavily favors buying commercial solutions, or utilizing a hybrid approach. Organizations should buy the core platform—leveraging decades of built-in edge cases, guaranteed uptime, established audit trails, and pre-certified compliance frameworks—and then build custom integrations and workflows on top of that robust foundation.

This hybrid approach aligns seamlessly with the operational philosophy of elite custom software development firms. By expertly integrating robust, pre-existing infrastructure—such as Microsoft 365 environments, OVHCloud servers, pfSense firewalls, and specialized commercial compliance APIs (like Drata, Vanta, or Datadog)—engineering teams avoid reinventing the cryptographic wheel. Instead, they focus entirely on delivering a customized workflow that maps perfectly to the client's unique business processes, ensuring rapid deployment and enterprise-grade security without the bloated overhead of maintaining proprietary machine learning models. For legacy environments, it is often safer to add AI as an external “sidecar” than to rewrite core systems; our guide on minimizing risk and maximizing ROI with AI sidecars explains how to do this incrementally.

Limitations, Guardrails, and Legal Realities

While the capabilities of internal compliance bots are formidable, treating them as infallible, magical systems introduces a new taxonomy of enterprise risk. AI systems operate autonomously and at massive scale; when they fail, their failures propagate at that same scale. Executive leadership must understand these limitations and enforce strict guardrails.

- The Privacy Paradox (Data Ingestion Risks): The fundamental paradox of AI compliance bots is that to detect PII, they must ingest and process PII. If an organization routes its sensitive internal documents through a public, multi-tenant LLM via an unsecured API, it is actively causing the data breach it is attempting to prevent. To mitigate this, organizations must enforce strict data residency controls, utilize private, single-tenant enterprise models, and ensure API contracts guarantee zero data retention by the vendor (no-retention mode). In practice, that often means selecting a secure, enterprise-grade .NET AI tech stack with strong Azure and governance integration rather than ad hoc consumer tools.

- Multimodal Metadata Expansion: As bots evolve to scan audio, images, and video, the definition of what constitutes "identifiable" information expands rapidly. Metadata, such as cursor trails, login times, movement patterns in a video file, or voice prints, can triangulate identity just as effectively as a written name. Redaction strategies and compliance rules must scale to cover these new multimodal vectors, treating metadata with the same strict governance as traditional PII.

- The Autonomy Threat and Code Execution: Agentic AI bots that possess the ability to execute code, change infrastructure configurations, or access the live internet present significant security vulnerabilities. The recent case of the "Hackerbot-claw"—an autonomous AI agent that achieved remote code execution and compromised multiple popular GitHub repositories (including projects from Microsoft and DataDog) by scanning for misconfigured CI/CD workflows—demonstrates the severe danger of unconstrained agentic behavior. Internal bots must be strictly bounded. A bot should be permitted to flag a non-compliant commit or draft a remediation report, but it should never possess the administrative credentials to unilaterally alter production infrastructure without human approval.
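The bounding principle in the last item above can be expressed as an explicit allowlist. In this Python sketch the action names are hypothetical; the point is only that low-risk actions are permitted autonomously, while anything else requires a named human approver.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    FLAG_COMMIT = "flag_commit"
    DRAFT_REPORT = "draft_report"
    MODIFY_PRODUCTION = "modify_production"


# Actions the bot may take on its own; everything else needs a human.
AUTONOMOUS_ACTIONS = {Action.FLAG_COMMIT, Action.DRAFT_REPORT}


def authorize(action: Action, human_approver: Optional[str] = None) -> bool:
    """Allow allowlisted actions; require a named approver for all others."""
    if action in AUTONOMOUS_ACTIONS:
        return True
    return bool(human_approver)
```

Because the default for any unlisted action is denial, adding a new capability to the bot does not silently widen its authority.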

Implementing Policy-as-Code

To mitigate these limitations and secure the enterprise, organizations are transitioning to a "Policy-as-Code" methodology. This involves translating complex legal and regulatory requirements into deterministic, machine-readable rules integrated directly into the deployment pipeline. The AI handles the semantic interpretation (e.g., reading a complex legal document to understand its intent), but the deterministic, hard-coded rules execute the final enforcement (e.g., blocking the network transfer if the AI flags the document). This hybrid approach marries the nuanced, flexible understanding of Large Language Models with the absolute, rigid reliability of traditional software engineering.
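A minimal Python sketch of that split is shown below. The `Assessment` type stands in for whatever a model returns, and the confidence threshold and decision labels are assumptions chosen for illustration; only the `enforce` function, which is plain deterministic code, decides what happens to the transfer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    """Hypothetical output of the AI classifier for one document."""
    contains_pii: bool
    confidence: float  # 0.0 to 1.0


def enforce(
    assessment: Assessment,
    destination_is_external: bool,
    min_confidence: float = 0.8,
) -> str:
    """Deterministic policy: returns 'allow', 'block', or 'hold_for_review'."""
    if not destination_is_external:
        return "allow"
    # Low-confidence assessments are never trusted to release data on their own.
    if assessment.confidence < min_confidence:
        return "hold_for_review"
    return "block" if assessment.contains_pii else "allow"
```

The model can be swapped or retrained without touching the enforcement rule, and the rule itself can be version-controlled, reviewed, and tested like any other code.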

Summary and Next Steps

The transition from manual risk auditing to automated AI compliance is not a speculative future trend; it is a current, urgent operational necessity driven by the severe financial realities of data breaches and the accelerating speed of digital business. Internal compliance bots offer a highly reliable, economically advantageous mechanism to automate PII redaction, secure code repositories at the point of commit, and prevent hallucinated marketing claims from reaching the public domain.

By integrating these specialized agents deep within the corporate technology stack, B2B organizations can successfully decouple their operational velocity from their regulatory risk. The organizations that thrive in this generative era will be those that view AI compliance not as a defensive tax or a bottleneck, but as a strategic enabler—a localized sentry that allows the broader enterprise to innovate, code, and market with absolute confidence.

For executives ready to transition from reactive auditing to proactive automation, the next step is a comprehensive stack assessment. Evaluate your current CI/CD pipelines, document management systems, and content creation workflows to identify integration points for automated governance. By partnering with experts in custom software development and application management, your organization can architect a secure, compliant, and highly efficient digital ecosystem. If you are starting from older systems, consider layering these capabilities on top of existing apps using non-invasive AI overlays for legacy systems rather than risky rip-and-replace projects.

Frequently Asked Questions

How can AI help us stay compliant?

Artificial intelligence helps maintain compliance by shifting the operational paradigm from reactive, post-incident audits to continuous, real-time monitoring. Instead of waiting for a quarterly review or an external audit to find critical errors, specialized AI agents act as internal, always-on sentries. They integrate directly into existing workflows—scanning outgoing emails, analyzing marketing drafts against approved legal claim libraries, and monitoring infrastructure configurations. By processing vast amounts of unstructured data using Natural Language Processing, these bots understand semantic context, allowing them to instantly flag regulatory violations, policy breaches, or discriminatory language, thereby preventing non-compliant actions before they materialize and leave the corporate network.

Can we automate PII redaction?

Yes. Advancements in Large Language Models and Named Entity Recognition have made automated PII redaction highly effective, accurate, and economically viable. Unlike legacy systems that relied on rigid keyword searches or specific numerical formats (which frequently missed contextually embedded sensitive data, like a name hidden in a paragraph), modern AI can read and understand the surrounding text. Empirical testing has shown that AI-assisted redaction tools can achieve accuracy rates exceeding 97% while simultaneously cutting processing time by up to 50%. This technology is actively used to sanitize millions of documents in the healthcare, legal, and financial sectors, significantly reducing human error and the massive labor costs associated with manual redaction teams.
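The difference between format-based and context-aware redaction can be shown with a short sketch. The patterns below cover only two structured identifiers, and the `extra_spans` argument stands in for the output of a Named Entity Recognition model. That model is assumed here and not implemented, and the placeholder labels are illustrative.

```python
import re

# Format-based detectors: reliable for structured identifiers only.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pattern_spans(text):
    """Return (start, end, label) tuples for every pattern match."""
    spans = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            spans.append((match.start(), match.end(), label))
    return spans


def redact(text, extra_spans=()):
    """Replace detected spans with [LABEL] placeholders.

    extra_spans is where spans from an NER model (assumed, not shown)
    would be supplied, e.g. a person's name embedded in a sentence.
    """
    spans = sorted(find_pattern_spans(text) + list(extra_spans))
    pieces = []
    cursor = 0
    for start, end, label in spans:
        if start >= cursor:
            pieces.append(text[cursor:start])
            pieces.append(f"[{label}]")
        # Overlapping spans are absorbed into the previous placeholder.
        cursor = max(cursor, end)
    pieces.append(text[cursor:])
    return "".join(pieces)
```

Run with patterns alone, a sentence such as "Contact Jane Doe at jane.doe@example.com" loses the email address but keeps the name. Passing the name's character span through `extra_spans` closes that gap, which is the role the contextual model plays in a production system.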

How do we catch compliance violations before they happen?

Catching violations pre-emptively requires embedding compliance checks directly into the creation and deployment pipelines, a practice known in software engineering as "shifting left." In custom software development, this means integrating security bots into pre-commit hooks and CI/CD pipelines (such as on-premises Azure DevOps or GitHub) so that every time a developer commits or pushes code, the bot automatically scans for hardcoded secrets, PII, or vulnerable open-source dependencies. If an issue is found, the commit or merge is blocked before the change reaches the shared repository. In marketing and sales, it involves routing generated copy through an automated review gate where an AI agent verifies the text against a "negative claim library" and approved facts. Only content that passes this semantic verification is allowed to be published, while risky assets are routed to human legal teams for review.
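As a rough illustration of the commit-time scan, the sketch below inspects the added lines of a unified diff for a few common secret formats. The pattern set is deliberately small, the function names are hypothetical, and a real deployment would more likely rely on a dedicated secret scanner. The diff text is assumed to come from something like `git diff --cached` in a pre-commit hook or from the pull request diff in a pipeline step.

```python
import re

# A small, illustrative pattern set; production scanners ship many more.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?:password|secret|api_key|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def scan_diff(diff_text):
    """Return (file_path, pattern_name) for each suspicious added line."""
    findings = []
    current_file = None
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            # File header of a unified diff, e.g. "+++ b/app/config.py".
            path = line[4:]
            current_file = path[2:] if path.startswith("b/") else path
            continue
        if not line.startswith("+"):
            continue  # only newly added lines can introduce a secret
        added = line[1:]
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(added):
                findings.append((current_file, name))
    return findings


def gate(diff_text):
    """Return an exit code: 0 lets the commit proceed, 1 blocks it."""
    return 1 if scan_diff(diff_text) else 0
```

A hook script would pass the staged diff to `gate` and exit with its return value, since Git aborts a commit when the pre-commit hook exits non-zero. The same function can run again in the pipeline as a second line of defense, because local hooks can be bypassed.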

Recommended Reading

- https://www.ibm.com/reports/data-breach

- https://performline.com/blog-post/ai-marketing-compliance-risks-real-world-violations/

- https://www.credo.ai/blog/the-build-vs-buy-math-why-custom-ai-governance-tools-often-fail

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.