Busywork to Brilliance: AI Automation That Actually Works

April 03, 2026 / Bryan Reynolds

AI Automation for Operations: Turning Repetitive Work Into Reliable Workflows

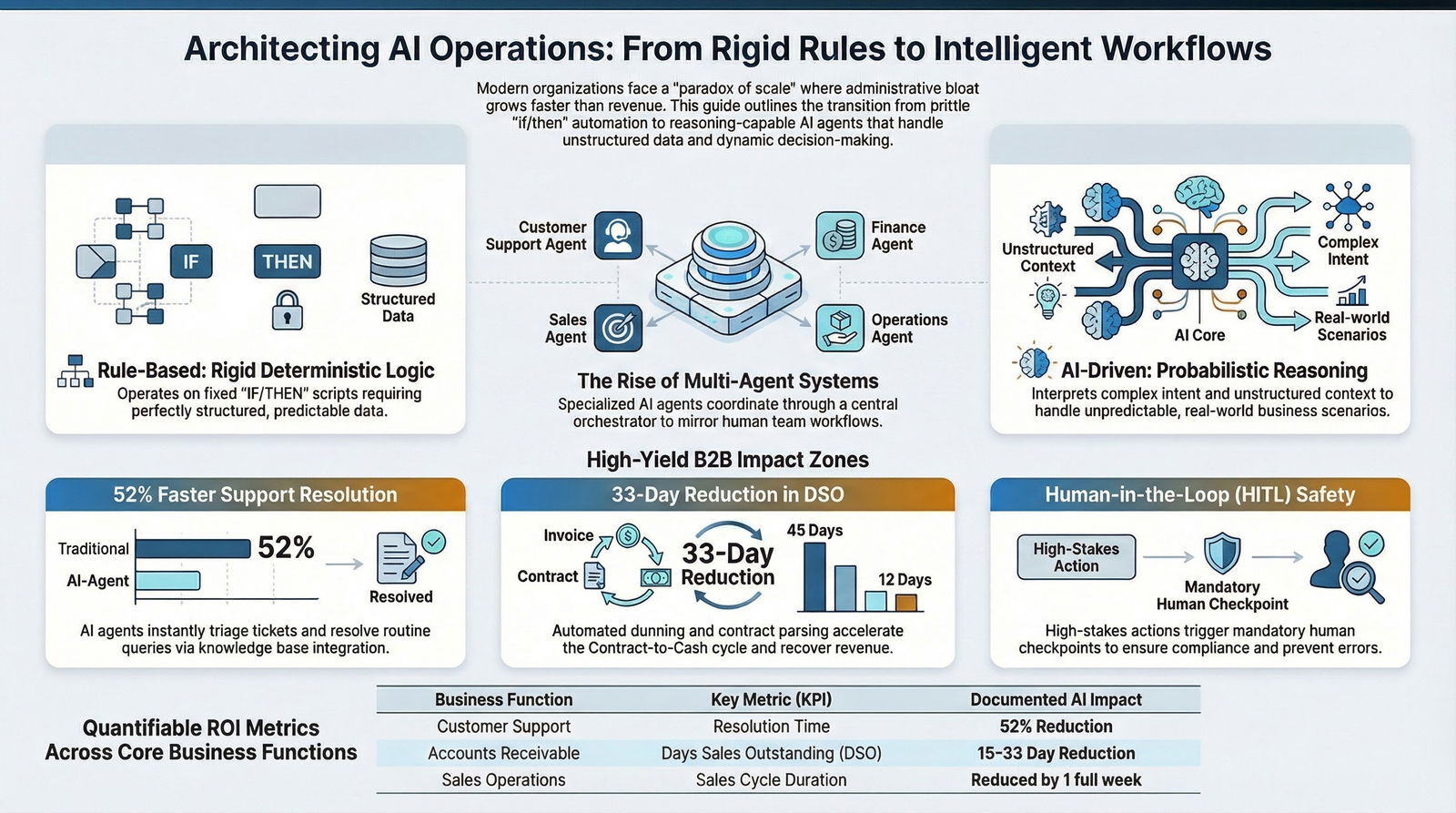

The relentless pursuit of operational efficiency has driven enterprise technology strategies for decades. Executive leadership—from visionary Chief Technology Officers and strategic Chief Financial Officers to driven Heads of Sales and innovative Marketing Directors—is constantly seeking ways to optimize the business engine. However, the modern business landscape presents a unique and frustrating paradox: as organizations successfully scale their revenue and client base, the volume of repetitive, administrative, and back-office work scales disproportionately. This administrative bloat threatens to overwhelm the very teams tasked with driving strategic growth.

For many B2B firms operating in fast-paced sectors such as software, telecom, healthcare, real estate, and digital advertising, the solution to this bottleneck is no longer found in simply hiring more personnel. Expanding headcount linearly with revenue growth erodes profit margins and introduces immense logistical complexity. Similarly, traditional business process outsourcing (BPO) often introduces friction regarding data security, brand voice, and agility. Instead, the paradigm has decisively shifted toward a more profound structural transformation: implementing artificial intelligence to convert fragile, manual processes into resilient, intelligent, and highly automated workflows.

The core question echoing through boardrooms and strategic planning sessions today is precise: How can an organization leverage AI automation to turn repetitive work into reliable workflows without breaking existing processes?

Generative AI (GenAI) and agentic automation frameworks are currently reshaping B2B operations, effectively leveling the playing field for agile organizations. These technologies offer enterprise-grade capabilities to mid-market and fast-growing businesses, allowing lean teams to operate with the capacity of massive enterprise departments. By transitioning from traditional, brittle automation to dynamic, reasoning-capable AI systems, businesses can achieve a state of continuous operational fluidity.

This comprehensive research report explores the strategic implementation of AI automation across core business functions. It delineates the critical technical and philosophical differences between legacy rule-based systems and modern AI agents. Furthermore, it identifies the highest-yield operational targets for automation, establishes rigorous best practices for maintaining human oversight, and outlines the complex, enterprise-grade architectural requirements necessary to integrate these advanced systems securely into existing corporate infrastructure.

The Automation Paradigm Shift: Rule-Based Systems vs. AI-Driven Agents

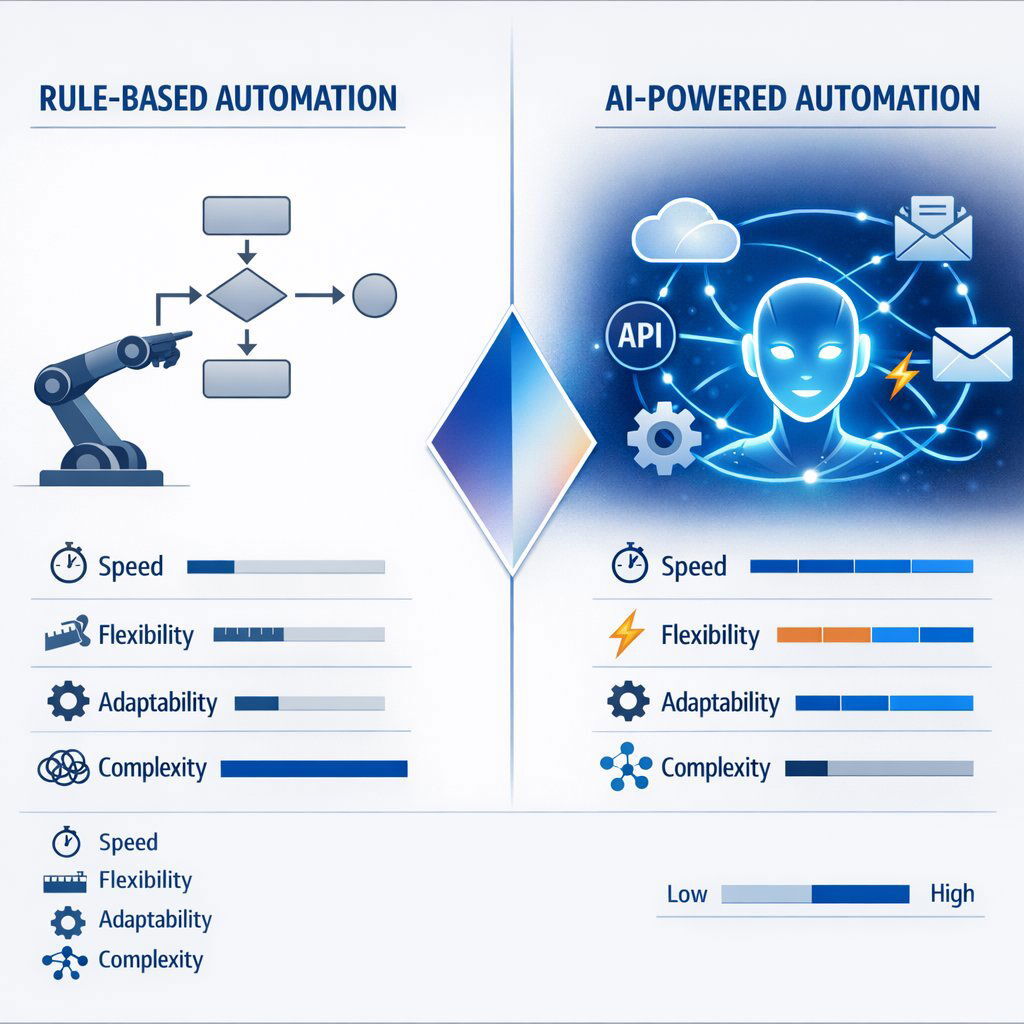

To understand the current trajectory of operational technology, it is essential to distinguish between the automation paradigms of the past decade and the AI-driven automation of the present. While both methodologies fundamentally seek to reduce manual labor and accelerate digital processes, their underlying mechanics, capabilities, and scalability differ at a foundational level. Understanding this divergence is the first step for any CTO or operations leader looking to modernize their technology stack.

The Architecture of Rule-Based Automation

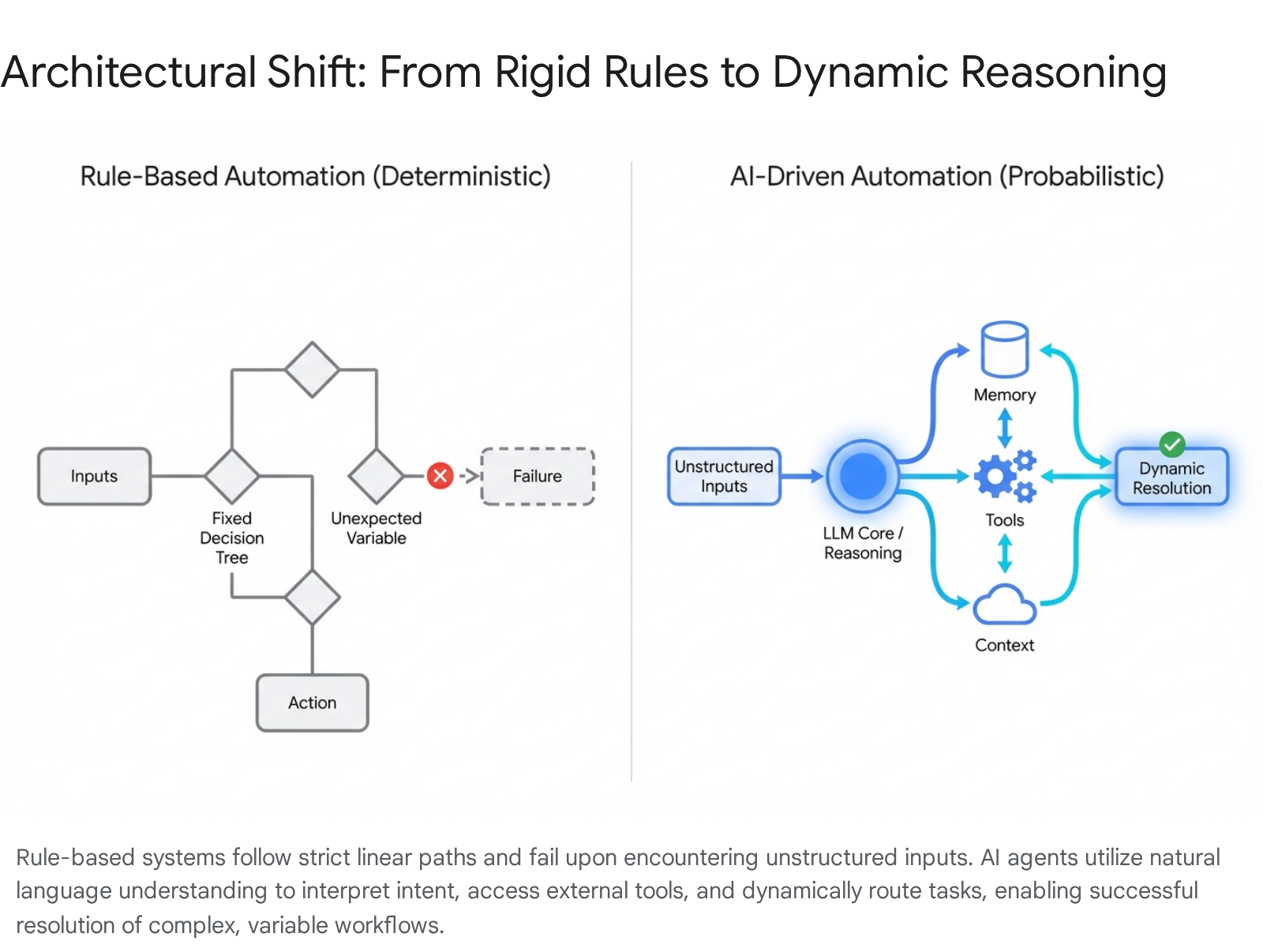

Rule-based automation, often synonymous with Robotic Process Automation (RPA) and standard digital workflow tools, operates strictly on predefined, deterministic logic. These systems function essentially as complex, high-speed flowcharts, executing a rigid sequence of commands based on the premise: “IF condition A is met, THEN trigger action B.”

The operational mechanism of rule-based bots relies on static, pre-programmed scripts. They require exact matches to specific keywords, predetermined data structures, or fixed user interface coordinates to trigger an action. For example, a rule-based system can flawlessly extract data from a highly standardized vendor invoice if the “Total Amount” field is always in the exact same pixel location or XML tag.

The primary strengths of rule-based workflows lie in their high reliability for highly repetitive, perfectly predictable tasks. Because the logic is entirely transparent and human-authored, they are easy to audit, explain, and troubleshoot. When applied to simple, static data synchronization tasks—such as copying a newly entered lead from a marketing platform directly into a static spreadsheet—they perform adequately.

However, the limitations of rule-based automation are severe in a dynamic business environment. The primary flaw is its extreme rigidity. Rule-based systems cannot adapt to unexpected queries, unstructured data, conversational nuances, or slight variations in input outside their programmed logic. If a customer submits a support ticket using colloquial language or typos rather than specific keywords, the rule-based bot fails immediately and must route the ticket to a human agent. Furthermore, scaling these systems requires relentless manual rule updates. Every new operational scenario, product launch, or minor change in a vendor's invoice format demands a new line of code or logic, eventually creating a brittle, unmanageable web of interdependent rules that become a nightmare to maintain.
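To make the rigidity concrete, here is a minimal sketch of a keyword-driven ticket router. The `RULES` table, the queue names, and the `route_ticket` function are all hypothetical, invented for illustration rather than taken from any specific RPA product.

```python
# Hypothetical keyword rules: an exact substring maps to a queue name.
RULES = {
    "refund": "billing",
    "password reset": "account_access",
    "invoice": "billing",
}


def route_ticket(text: str) -> str:
    """Return the queue for the first keyword that appears verbatim in the ticket."""
    lowered = text.lower()
    for keyword, queue in RULES.items():
        if keyword in lowered:
            return queue
    # No rule matched: a deterministic bot can only hand the ticket to a person.
    return "human_review"


print(route_ticket("I need a refund for my last order"))
print(route_ticket("cant log in, forgot my pasword"))
```

The second ticket is clearly an account-access problem, yet the typo and the informal phrasing match no rule, so it falls through to a human. Covering it would mean adding another rule, which is the maintenance burden described above.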

The Emergence of Agentic AI Automation

AI-driven automation, powered by Large Language Models (LLMs) and agentic programming frameworks, represents a massive technological leap from deterministic execution to probabilistic reasoning. These systems do not merely follow rigid instructions; they possess “agency,” meaning they can interpret complex intent, formulate multi-step plans, and execute processes dynamically based on the context of the situation.

The operational mechanism of AI agents utilizes advanced natural language processing (NLP) and machine learning algorithms to understand unstructured context, process raw data, and make context-based decisions in real-time. Crucially, modern AI agents are capable of tool use. They can interact with external APIs, query relational databases, search internal knowledge bases, and adapt their responses and actions based on the specific nuances of a developing situation.
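As a rough illustration of tool use, the sketch below wires two tools into an allow-list and lets a decision step pick one. Everything here is an assumption made for the example: `stub_model` stands in for a real LLM call, and the tool names, the invoice data, and the `run_agent` function are hypothetical.

```python
import re
from typing import Callable, Dict


# Hypothetical tools the agent is allowed to call.
def lookup_invoice(invoice_id: str) -> dict:
    invoices = {"INV-1001": {"amount_due": 1200.0, "status": "overdue"}}
    return invoices.get(invoice_id, {"error": "not found"})


def search_knowledge_base(query: str) -> dict:
    return {"answer": f"Policy article matching '{query}'"}


TOOLS: Dict[str, Callable[[str], dict]] = {
    "lookup_invoice": lookup_invoice,
    "search_knowledge_base": search_knowledge_base,
}


def stub_model(request: str) -> dict:
    """Stand-in for an LLM call: returns the tool it 'decides' to use."""
    match = re.search(r"INV-\d+", request)
    if match:
        return {"tool": "lookup_invoice", "argument": match.group(0)}
    return {"tool": "search_knowledge_base", "argument": request}


def run_agent(request: str) -> dict:
    decision = stub_model(request)
    tool = TOOLS.get(decision["tool"])
    if tool is None:
        # Never execute a tool name that is not on the allow-list.
        return {"error": f"unknown tool {decision['tool']}"}
    return {"tool": decision["tool"], "result": tool(decision["argument"])}


print(run_agent("What is the status of INV-1001?"))
```

In a real deployment the decision step would be a model response, which is why the allow-list check matters: the agent can only invoke functions the engineering team has explicitly registered.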

AI-driven systems can handle a wide, unpredictable range of inquiries with considerable flexibility. When feedback loops are built in, their accuracy and efficiency can improve over time without a developer hand-editing scripts for every new scenario. For instance, in Contract Lifecycle Management (CLM), an AI-native orchestration system can recognize nuanced language variations across millions of disparate vendor contracts. It can automatically flag unusual indemnification terms and route approvals based on a real-time risk assessment of the text, rather than relying on rigid hierarchical rules based merely on dollar amounts.

Furthermore, AI agents scale well. Their capabilities expand as new enterprise tools are integrated and as models support larger context windows. They can manage dynamic, multi-step processes that require reasoning, making them well suited to complex B2B environments where market conditions and customer demands change rapidly.

Strategic Application: When to Deploy Each Architecture

The choice between legacy rule-based automation and modern AI-driven automation is not a binary decision. Optimal enterprise architectures utilize both paradigms in a complementary, layered manner to maximize efficiency and cost-effectiveness.

Rule-based automation remains the superior, cost-effective choice for high-volume, low-complexity tasks where variance is absolutely non-existent and strict auditability of every computational step is paramount. Examples include simple, scheduled data synchronization between two highly structured databases, transferring files between secure servers, or triggering a standard, unpersonalized email receipt upon a payment gateway confirmation. In these scenarios, the computational overhead of an LLM is unnecessary.

Conversely, AI-driven automation becomes necessary when operational processes involve unstructured data, natural language interpretation, or dynamic decision-making. If a workflow requires reading and interpreting a vendor's email, extracting specific payment terms from an attached, non-standard PDF contract, checking those terms against historical agreements in a database, and drafting a contextual, polite reply based on company policy, an AI agent is the practical choice, because no fixed rule set can anticipate every variation in the input. In modern B2B operations, many high-value tasks fall into this latter category.

The Pivot to Multi-Agent Systems

As generative AI moves from proof-of-concept pilots to mission-critical workloads, enterprise implementations are advancing rapidly. The initial wave of corporate AI projects typically involved standing up a single, generalized “do-everything” agent—a single large language model wrapped with basic prompt engineering and a handful of API connectors. While this pattern is excellent for narrow internal FAQ bots, it fundamentally collapses under the weight of real-world enterprise constraints. A single agent struggles to possess domain expertise across dozens of diverse business lines while adhering to strict data-sovereignty and complex model-access policies.

To counteract this, organizations are pivoting toward multi-agent systems. These are architectures consisting of collections of autonomous, highly task-specialized AI agents that coordinate their efforts through a central orchestrator. This design mirrors how cross-functional human teams tackle complex, multi-disciplinary work. In a multi-agent system, one agent might be specialized entirely in querying a SQL database, another in drafting legal language, and a third in analyzing financial risk. The breakthrough lies not in the isolated intelligence of one massive model, but in the emergent, highly accurate behavior that surfaces when many specialized agents share context, divide labor efficiently, and merge their distinct outputs into a cohesive, reliable workflow.
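The orchestrator pattern described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: each "agent" is a plain function standing in for a specialized model, and the names `sql_agent`, `risk_agent`, `drafting_agent`, the 100,000 threshold, and the shared-context dictionary are hypothetical.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]


def sql_agent(ctx: Context) -> Context:
    # Stub: a real agent would query the contract value from a database.
    return {"contract_value": 250_000}


def risk_agent(ctx: Context) -> Context:
    # Assumed policy: contracts above 100,000 count as high risk.
    return {"risk": "high" if ctx["contract_value"] > 100_000 else "low"}


def drafting_agent(ctx: Context) -> Context:
    return {"draft": f"Contract flagged as {ctx['risk']} risk; routing for review."}


AGENTS: Dict[str, Callable[[Context], Context]] = {
    "sql": sql_agent,
    "risk": risk_agent,
    "drafting": drafting_agent,
}


def orchestrate(plan: List[str]) -> Context:
    """Run each specialized agent in order, merging its output into shared context."""
    ctx: Context = {}
    for name in plan:
        ctx.update(AGENTS[name](ctx))
    return ctx


result = orchestrate(["sql", "risk", "drafting"])
print(result["draft"])
```

Each agent sees only the shared context and returns only its own contribution, which keeps responsibilities narrow and makes it possible to swap or audit one specialist without touching the others.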

For leaders mapping this evolution, it often helps to think about it as moving from a single “Super-Bot” to a coordinated team of AI agents that mirrors how your best people already work together.

Prime Candidates for AI Automation in B2B Operations

Identifying the correct workflows for AI automation is the most critical strategic step toward successful operational transformation. Attempting to automate the wrong processes can lead to wasted engineering cycles and employee frustration. The highest-yield candidates are typically processes that are highly data-intensive, require significant manual cognitive effort to parse information, yet ultimately follow broadly predictable strategic or business patterns.

Across various B2B sectors—from SaaS and Finance to Real Estate, Healthcare, and Education—specific workflows are emerging as the undeniable prime targets for profound AI disruption.

Customer Support Triage and Resolution

Lean B2B customer support teams are frequently overwhelmed by high volumes of routine, repetitive inquiries. This constant barrage of simple questions delays responses to critical, high-value technical issues that threaten major accounts. AI transforms this tier-one support layer from a traditional cost center into a seamless, high-velocity resolution engine.

Data spanning over 14,000 digital merchants indicates that deploying AI automation in support environments yields profound operational metrics. AI agents, equipped with advanced natural language processing and securely connected to internal knowledge bases, product documentation, and CRM data, can resolve incoming tickets almost instantaneously. This implementation leads to a staggering 37% reduction in first-response times and a 52% reduction in overall resolution times.

Beyond mere FAQ deflection, these intelligent systems execute nuanced triage. When the AI's confidence in its answer falls below a set threshold, or when the system detects elevated negative sentiment requiring sensitive intervention, the workflow does not simply drop the ticket. Instead, the AI agent gathers all relevant context, summarizes the issue, and routes the ticket to the appropriate, specialized human engineer. This ensures the human agent begins the interaction fully informed, so the customer does not have to repeat themselves.
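A minimal sketch of this escalation logic follows. The threshold values, the `Assessment` fields, and the `triage` function are assumptions chosen for illustration; a real system would obtain the confidence and sentiment scores from its models.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.80  # assumed: below this, the AI should not answer alone
SENTIMENT_FLOOR = -0.50      # assumed: scores range from -1 (angry) to +1 (happy)


@dataclass
class Assessment:
    confidence: float  # model's confidence in its drafted answer
    sentiment: float   # detected customer sentiment
    summary: str       # context gathered for a possible handoff


def triage(assessment: Assessment) -> dict:
    """Auto-resolve confident, calm tickets; escalate everything else with context."""
    if assessment.confidence < CONFIDENCE_THRESHOLD:
        reason = "low_confidence"
    elif assessment.sentiment < SENTIMENT_FLOOR:
        reason = "negative_sentiment"
    else:
        return {"action": "auto_resolve"}
    return {
        "action": "escalate",
        "reason": reason,
        "handoff_summary": assessment.summary,
    }


print(triage(Assessment(0.95, 0.2, "Asked how to export a report")))
print(triage(Assessment(0.55, 0.0, "Intermittent API 500 errors on bulk import")))
```

The important design choice is that escalation always carries the summary with it, so the human engineer starts with the context rather than a bare ticket number.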

The result of this automation is not a degradation of service quality. Organizations deploying these systems report a 1% increase in Customer Satisfaction (CSAT) scores, as clients receive immediate, accurate answers for routine queries and focused, empathetic human attention for complex technical issues.

Billing, Collections, and Accounts Receivable (AR)

The Contract-to-Cash cycle in B2B environments—particularly in SaaS, telecommunications, and professional services—is historically fraught with friction and manual intervention. Median Days Sales Outstanding (DSO) typically hovers around 56 days, tying up crucial working capital. Furthermore, B2B SaaS companies often lose between 5% and 7% of their total Monthly Recurring Revenue (MRR) to failed payments, accidental churn, and manual process gaps. AI automation is fundamentally restructuring this critical financial workflow.

Modern AI-powered collections platforms operate at remarkable automation levels, often exceeding 90% autonomy. These systems ingest complex billing data and execute highly nuanced dunning strategies that rule-based systems cannot match. Advanced platforms are capable of ingesting unstructured contracts—such as processing massive legal documents via DocuSign or PandaDoc integrations. The AI reads the natural language to automatically extract precise payment terms, complex ramp schedules, and custom discount structures negotiated by sales teams. This ensures that the generated invoices match the signed legal agreements perfectly, drastically reducing client disputes by up to 40%.

Furthermore, AI algorithms optimize payment retry logic. Instead of executing simplistic, rule-based daily retries that often trigger fraud alerts or permanent card blocks, predictive AI analyzes vast datasets of historical success patterns, card network types, and geographic data. The system then attempts to process charges at the exact moment of highest probable success, recovering significant revenue that standard rule-based billing systems simply abandon as uncollectible. Organizations implementing comprehensive AI-driven AR automation report an average DSO reduction of up to 33 days, radically accelerating cash flow and improving the corporate balance sheet.
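The idea behind optimized retry timing can be illustrated with a deliberately simple stand-in. The sketch below is not a predictive model: it only estimates, from hypothetical historical attempts, which hour of day has had the best success rate. The `best_retry_hour` function, the `min_attempts` cutoff, and the sample data are assumptions for the example.

```python
from collections import defaultdict
from typing import List, Optional, Tuple


def best_retry_hour(
    history: List[Tuple[int, bool]], min_attempts: int = 3
) -> Optional[int]:
    """Pick the hour of day (0-23) with the highest historical success rate.

    Hours with fewer than `min_attempts` observations are ignored so that a
    single lucky charge does not dominate the estimate.
    """
    attempts = defaultdict(int)
    successes = defaultdict(int)
    for hour, succeeded in history:
        attempts[hour] += 1
        if succeeded:
            successes[hour] += 1
    rates = {h: successes[h] / n for h, n in attempts.items() if n >= min_attempts}
    if not rates:
        return None  # not enough data: fall back to a default schedule
    return max(rates, key=rates.get)


# Hypothetical history: (hour of attempt, whether the charge succeeded).
history = [
    (9, True), (9, True), (9, False),
    (14, True), (14, False), (14, False),
    (22, True),
]
print(best_retry_hour(history))
```

A production system would condition on more signals, such as the card network and geography mentioned above, but the principle is the same: schedule the retry where past data suggests it is most likely to succeed, instead of retrying blindly every day.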

B2B Client Onboarding and Human Resources

Onboarding—whether it is provisioning new internal enterprise talent or verifying new B2B corporate clients in heavily regulated sectors like finance and healthcare—is traditionally a manual, document-heavy process that is highly vulnerable to human error and compliance breaches.

In financial and institutional B2B onboarding, AI tools dramatically accelerate complex data processing workflows. Computer vision models combined with natural language processing extract, verify, and standardize critical data from complex corporate incorporation documents, tax forms, and beneficial ownership structures in mere seconds. Crucially, these systems continuously monitor vast regulatory databases, conduct dynamic risk assessments, and flag potential Anti-Money Laundering (AML) or Know Your Customer (KYC) compliance issues instantly. By automating the extraction of unstructured client data and standardizing it into the core ERP and CRM systems, organizations can handle rapidly growing client volumes without requiring linear, expensive increases in compliance staffing, ensuring faster time-to-revenue for new institutional clients.

Similarly, in Human Resources operations, AI automation handles the intricate, multi-departmental logistics of employee onboarding. Systems automatically provision IT accounts, configure software access based on role-based access controls (RBAC), deliver customized, role-specific training schedules, and orchestrate the signing of compliance paperwork. This intelligent automation allows HR professionals to focus their valuable time on the cultural and strategic integration of new hires, coaching, and talent retention, rather than functioning as glorified administrative task managers.

Real Estate Operations, Mortgages, and Valuations

The commercial real estate, property management, and mortgage sectors are incredibly data-dense industries highly dependent on analyzing vast datasets, historical property valuations, and complex, localized legal agreements. Research indicates that AI has the profound potential to automate up to 37% of total operational tasks in the real estate sector, paving the way for an estimated $34 billion in operating efficiencies by the year 2030.

AI automation thrives in property management through predictive maintenance and advanced analytics. By ingesting decades of historical repair data combined with real-time IoT sensor outputs from building HVAC and plumbing systems, AI models forecast critical equipment failures weeks before they actually occur. This allows maintenance teams to fix issues proactively, dramatically reducing emergency repair costs and preventing operational downtime that frustrates high-value commercial tenants.

Furthermore, in lease management and asset optimization, AI algorithms analyze vast arrays of leasing history, hyper-local seasonal trends, and broader market demand indicators to dynamically recommend pricing structures. These AI pricing models maximize portfolio revenue while maintaining optimal building occupancy rates, adapting to market shifts faster than a human analyst could calculate.

In the appraisal and mortgage underwriting workflows, AI agents analyze immense property datasets—including tax records, satellite imagery, and localized economic data—to identify subtle valuation patterns that human appraisers might easily miss. By accelerating the tedious data collection and complex computation phases, loan origination cycles close significantly faster. This allows financial firms and brokerages to manage far greater transaction volumes while outputting superior, defensible, and highly evidence-based valuations.

Learning Management Systems (LMS) and Education Administration

In both corporate training environments and massive educational technology deployments, administrative overhead frequently stifles program innovation and content quality. Education directors spend more time managing spreadsheets, tracking compliance, and assigning courses than they do developing impactful curriculum. AI-enabled Learning Management Systems (LMS) resolve this friction by entirely automating content delivery mechanisms and administrative tracking workflows.

Modern intelligent systems leverage AI to analyze an individual learner's historical behavior, performance data, skill assessments, and specific job roles to construct highly dynamic, personalized learning paths. Instead of presenting a static, overwhelming course catalog, the AI-driven LMS functions similarly to a modern media streaming platform. It proactively recommends precise content to address specific organizational skills gaps or compliance requirements for that exact user.

On the administrative backend, AI automates massive logistical operations. It handles complex enrollment protocols across thousands of users, executes automated deadline tracking with personalized reminders, performs initial grading of assessments, and manages complex, multi-state certification compliance reporting. By processing this massive administrative workload autonomously, enterprise education teams can easily scale their continuing education and corporate training programs globally without a proportional, costly scaling of manual administrative effort.

B2B Demand Generation and Marketing Automation

The top of the funnel is also undergoing massive AI-driven operational shifts. Modern B2B marketing relies heavily on complex data analysis to identify target accounts. AI automation is highly effective in predictive lead scoring and demand generation.

By analyzing massive datasets of web activity, demographic data, and firmographics, AI models can accurately predict which prospects are most likely to convert into Sales Qualified Leads (SQLs). This allows marketing teams to focus their budget and effort purely on high-propensity accounts. Organizations leveraging AI for sales research and personalized outreach report incredible gains. Data shows that 38% of B2B sellers using AI to research leads save over 1.5 hours per week of manual data gathering, while those utilizing AI-driven personalized messaging see an average 28% lift in response rates. The automation of content creation, social media scheduling, and A/B testing further streamlines marketing operations, allowing lean teams to execute highly sophisticated, multi-channel campaigns at scale.

For go-to-market teams, this is also where a specialized AI sales engineer or proposal agent can plug directly into your RevOps stack to auto-generate RFP responses and security questionnaires, turning slow, manual work into a fast, repeatable workflow.

| B2B Operational Workflow | Traditional Manual Process | AI-Automated Workflow Enhancement | Primary Business Benefit |
|---|---|---|---|
| Accounts Receivable | Manual spreadsheet tracking, static 30-day follow-up emails, human dispute resolution. | Predictive payment retries, automated contract parsing for exact terms, dynamic dunning based on client health. | Reduces DSO by 15-33 days; recovers 3-7% of lost revenue. |
| Customer Support | Humans read every ticket, categorize manually, search static KBs, and type responses. | AI instantly reads intent, fetches relevant data via RAG, drafts accurate response, and routes edge cases instantly. | 52% reduction in resolution time; 36% increase in repeat purchases. |
| HR/Client Onboarding | Manual data entry from PDFs into CRM/HRIS, manual compliance checks against databases. | Computer vision extracts data, standardizes it, and cross-references regulatory databases in seconds. | Near-instant compliance scaling; zero data-entry errors; rapid time-to-value. |
| Real Estate Management | Static pricing based on annual reviews; reactive maintenance when equipment breaks. | Dynamic pricing based on real-time market data; predictive maintenance alerts via IoT sensor analysis. | Maximized portfolio revenue; significant reduction in emergency repair capital expenditures. |
| B2B Demand Generation | Manual lead scoring based on simple rules; generic mass-email blasts. | Predictive lead scoring based on complex behavioral data; hyper-personalized AI-drafted outreach. | 28% higher response rates; massive reduction in Customer Acquisition Cost (CAC). |

The Architecture of Integration: Building the Core Engine

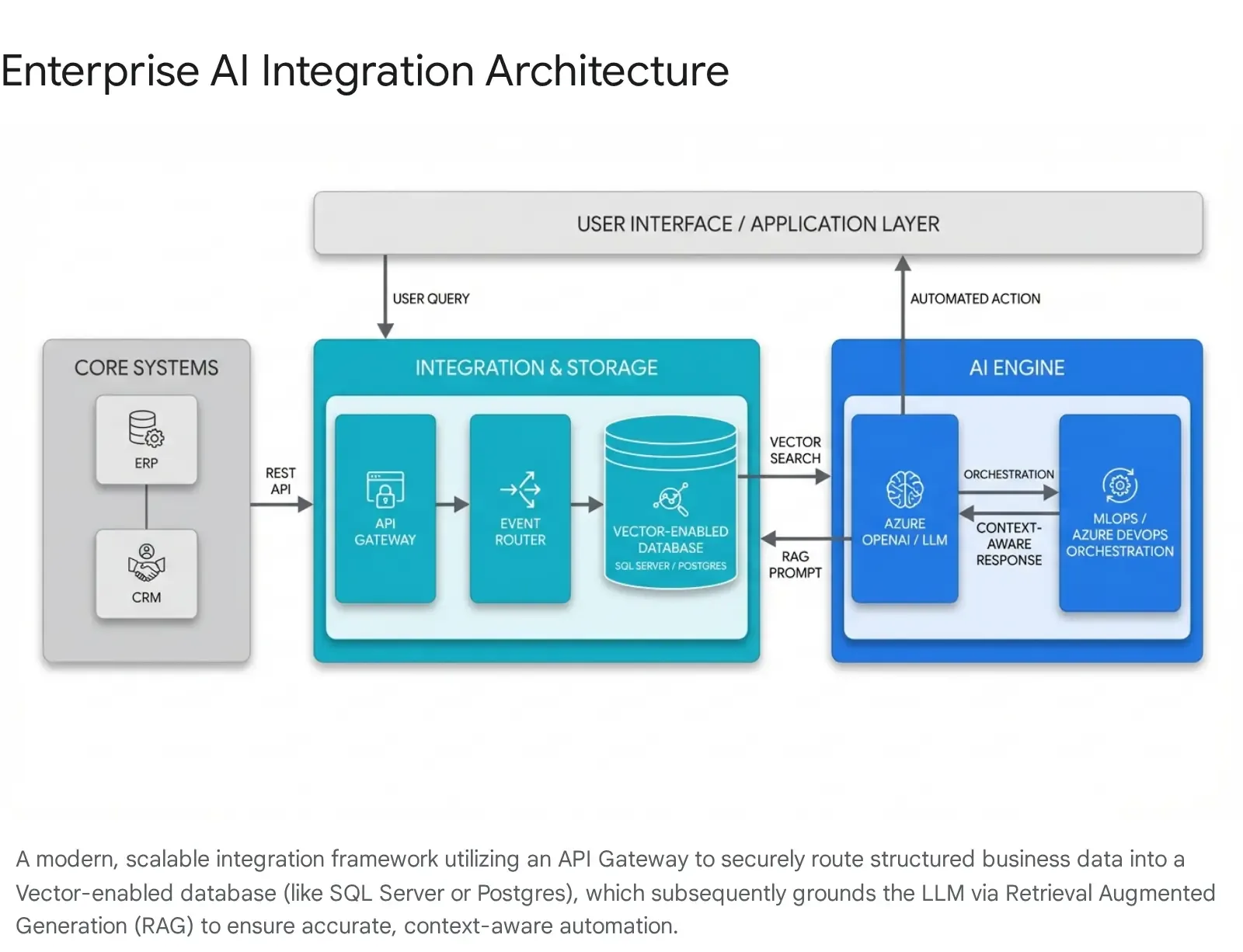

Deploying an AI agent in isolation—such as asking an employee to copy and paste data into a standalone web interface—yields only marginal utility and introduces immense data security risks. The true, transformational value of AI automation is unlocked only when these sophisticated intelligence layers are deeply, securely, and natively integrated into the organization's core data ecosystems. Specifically, AI must communicate seamlessly with Enterprise Resource Planning (ERP) platforms, Customer Relationship Management (CRM) systems, and central database architectures.

Creating this interconnected, high-performance ecosystem is not a simple configuration task. It requires robust custom software development, sophisticated on-premise or hybrid cloud infrastructure, and enterprise-grade engineering practices. Organizations seeking a tailored tech advantage must leverage expert development teams and modern, highly resilient tech stacks. Utilizing comprehensive toolsets—such as Azure DevOps On-Prem for secure lifecycle management, PostgreSQL and Microsoft SQL Server for advanced data handling, and Kubernetes for scalable orchestration—provides a distinct structural advantage in deploying these AI solutions safely, rapidly, and reliably. Many enterprises are now standardizing on a secure .NET-first AI stack to keep this integration maintainable and aligned with existing Microsoft infrastructure.

Architecting the Integration Layer and Middleware

The integration layer serves as the central nervous system of the automated enterprise, securely connecting traditional, often legacy business software with advanced AI models. Best practices for 2025 and 2026 dictate moving decisively away from brittle, point-to-point API connections toward modular, scalable frameworks.

Modern integration architecture relies heavily on robust middleware, containerized microservices, and specialized API gateways. Integration Platform as a Service (iPaaS) offerings provide the infrastructure to route data securely between a CRM (like Salesforce) and an ERP (like SAP, Oracle, or Microsoft Dynamics) while embedding AI processing nodes directly within the data flow.

Furthermore, to ensure that AI agents react instantaneously to fluid business events, organizations are increasingly adopting event-driven architectures. Rather than waiting for inefficient, hourly batch syncs to update a database, event-driven systems respond to live changes on the network. For example, the exact millisecond a user signs a contract or a supply chain tracking API reports a disruption, the event router triggers immediate AI analysis and automated downstream actions across all connected platforms.
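The event-driven pattern can be sketched as a small in-process publish/subscribe router. This is a toy illustration only: real deployments would typically use a message broker, and the `EventRouter` class, the `contract.signed` event name, and the handler functions are hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

Handler = Callable[[Dict[str, Any]], Any]


class EventRouter:
    """Toy in-process router: handlers run as soon as an event is published."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: Dict[str, Any]) -> List[Any]:
        # Use .get so publishing an unknown event type has no side effects.
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


def analyze_contract(payload: Dict[str, Any]) -> str:
    # Stub for the AI analysis step triggered by the event.
    return f"queued AI analysis for contract {payload['contract_id']}"


def notify_billing(payload: Dict[str, Any]) -> str:
    return f"billing notified for contract {payload['contract_id']}"


router = EventRouter()
router.subscribe("contract.signed", analyze_contract)
router.subscribe("contract.signed", notify_billing)
print(router.publish("contract.signed", {"contract_id": "C-42"}))
```

Compared with an hourly batch job, nothing here polls for changes. Downstream work starts when the event arrives, and new consumers can be added by subscribing without modifying the publisher.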

This level of integration demands strict adherence to enterprise security protocols. Custom integrations must enforce TLS 1.3 encryption in transit, AES-256 encryption at rest, and robust OAuth 2.0 authorization. Furthermore, API compatibility must be verified across legacy and modern systems, and comprehensive error handling and rate-limiting protocols must be established. This ensures that a sudden spike in AI queries does not overwhelm the company's own core ERP database, in effect a self-inflicted Denial of Service (DoS). High-performance firewalls and routing solutions, such as pfSense, are critical components in securing the network perimeter and managing internal microservice traffic flows.
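One common way to implement the rate limiting mentioned above is a token bucket, sketched here. The class name, its parameters, and the injectable `clock` argument are assumptions for this example; in practice the limit is usually enforced at an API gateway rather than in application code.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_sec` tokens/second."""

    def __init__(
        self,
        rate_per_sec: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller should back off or queue the request


# Hypothetical limit: AI agents may send 5 ERP queries/second, bursts of 10.
erp_limiter = TokenBucket(rate_per_sec=5, capacity=10)
allowed = sum(erp_limiter.allow() for _ in range(25))
print(f"{allowed} of 25 burst requests allowed")
```

Requests that are refused can be queued or retried with backoff, which keeps a burst of agent activity from saturating the ERP database.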

Leveraging Advanced Database Capabilities: SQL Server and Postgres

The effectiveness, accuracy, and intelligence of any generative AI model are strictly bounded by the quality, structure, and accessibility of the data it can reference. An AI without data is simply a conversational engine; an AI securely connected to enterprise data becomes a powerful operational agent. Modern relational databases have evolved rapidly to support massive AI workloads directly at the data tier.

Microsoft SQL Server and RAG Patterns: For enterprises operating heavily on Microsoft technology stacks, SQL Server 2025 and Azure SQL Database introduce powerful, native AI capabilities that simplify architecture. The dominant, most effective design pattern for business AI is Retrieval Augmented Generation (RAG). RAG grounds a Large Language Model's responses by retrieving highly specific, domain-relevant data from the enterprise database just before generating an answer. This ensures that the AI's actions and responses are contextual, informed, and far less likely to hallucinate.

SQL Server facilitates RAG natively through the introduction of the new VECTOR data type and the highly optimized VECTOR_DISTANCE function. Rather than the complex, risky process of constantly moving sensitive corporate data out of the secure database environment to a separate, external vector store, engineering teams can handle everything internally. Using system stored procedures like sp_invoke_external_rest_endpoint, SQL Server can call Azure OpenAI services directly to generate embeddings (complex mathematical vector representations of text, contracts, or unstructured data). These vectors are stored directly alongside traditional relational data. When a user or an automated agent asks a question, SQL Server executes a lightning-fast similarity search using cosine similarity mathematics natively via T-SQL. This allows an AI agent to query the highly secure ERP database using natural language, executing highly complex analytical workflows securely and efficiently. Furthermore, integrations with Microsoft 365, Teams, and OneDrive allow the RAG pattern to search secure corporate documents, emails, and chats simultaneously, providing the AI with a complete 360-degree view of corporate knowledge.
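To show the arithmetic behind a cosine-based similarity search, here is a small Python sketch of the retrieval step. It is an illustration of the math only, not T-SQL and not the SQL Server implementation. The three-dimensional "embeddings" in `DOCS` are made-up toy values; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math
from typing import Dict, List, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity: 0 means same direction, larger means less similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


# Toy embeddings (hypothetical values) stored next to the text they represent.
DOCS: Dict[str, List[float]] = {
    "net-30 payment terms": [0.9, 0.1, 0.0],
    "indemnification clause": [0.1, 0.9, 0.1],
    "office holiday schedule": [0.0, 0.1, 0.9],
}


def top_k(query_vec: Sequence[float], k: int = 2) -> List[str]:
    """Return the k documents whose embeddings are closest to the query."""
    ranked = sorted(DOCS, key=lambda name: cosine_distance(query_vec, DOCS[name]))
    return ranked[:k]


# A query embedding that points mostly along the "payment" direction.
print(top_k([0.8, 0.2, 0.0]))
```

In a RAG workflow, the text of the top-ranked rows is then placed into the model's prompt, so the generated answer is grounded in retrieved enterprise data rather than the model's general training.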

PostgreSQL and Kubernetes Orchestration: For highly scalable, cloud-native, or hybrid deployments—perhaps hosted on flexible OVHCloud servers—the combination of PostgreSQL and Kubernetes provides unparalleled, enterprise-grade flexibility for AI automation workflows. Deploying agentic AI requires not just the language model itself, but a highly resilient, fault-tolerant environment to execute complex, multi-agent operations simultaneously.

Kubernetes provides the orchestration engine for containerized AI microservices built in Docker. When paired with PostgreSQL functioning as the secure transactional memory and state-management store for the AI agents, the architecture becomes highly reliable. Advanced enterprise implementations use Kubernetes Operators, such as the CloudNativePG operator, to automate the lifecycle of the Postgres database directly within the cluster. This operator automation handles deployment, continuous automated backups, Point-In-Time Recovery (PITR), and high-availability failover with minimal manual intervention from a database administrator. To manage these complex Kubernetes clusters across bare-metal and virtualized environments, operations teams frequently rely on hyperconverged infrastructure solutions like Harvester HCI and management planes like Rancher. By leveraging these highly automated technology stacks, custom software development teams ensure that the foundational infrastructure supporting the AI is as resilient, scalable, and automated as the business processes the AI is designed to execute.

Orchestrating the ML Lifecycle with Azure DevOps

Deploying a custom AI model or agent into a production environment is never a singular, finalized event. Models degrade over time as real-world data shifts, requiring continuous monitoring, rapid retraining, and strict governance—a specialized engineering discipline known as Machine Learning Operations (MLOps).

Enterprise implementations heavily utilize robust platforms like Azure DevOps On-Prem to automate the entire machine learning lifecycle securely. Developers utilizing advanced IDEs like VS Code and VS 2022 write the code that defines the AI agents. An Azure DevOps pipeline then orchestrates the entire deployment sequence. It handles the extraction and transformation of vast ERP datasets, triggers the intensive training of custom models on scalable compute clusters, and deploys the resulting intelligent agents as highly secure, private web services.

Crucially, this DevOps pipeline continuously monitors the deployed AI models in production. It scans relentlessly for “data drift”—scenarios where the current incoming business data deviates significantly from the data the model was originally trained on. If the AI's prediction accuracy or confidence scores fall below strictly established operational thresholds, the DevOps pipeline automatically triggers a complete retraining cycle. This advanced MLOps automation ensures that the automated business workflows remain highly reliable and accurate even as underlying corporate data, market conditions, and customer behaviors evolve.
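A retraining trigger of this kind can be sketched with a very simple drift measure. The example below compares the mean of recent production values against the training baseline, in units of the baseline's standard deviation. This is one basic check chosen for illustration; production pipelines often use other tests, such as the Population Stability Index or a Kolmogorov-Smirnov test. The function names and both thresholds are assumptions.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_score(baseline: Sequence[float], live: Sequence[float]) -> float:
    """Shift of the live mean from the baseline mean, in baseline standard deviations."""
    sigma = stdev(baseline)
    if sigma == 0:
        return 0.0 if mean(live) == mean(baseline) else float("inf")
    return abs(mean(live) - mean(baseline)) / sigma


def should_retrain(
    baseline: Sequence[float],
    live: Sequence[float],
    accuracy: float,
    max_drift: float = 2.0,      # assumed threshold
    min_accuracy: float = 0.90,  # assumed threshold
) -> bool:
    """Trigger retraining when inputs have drifted or accuracy has degraded."""
    return drift_score(baseline, live) > max_drift or accuracy < min_accuracy


# Hypothetical feature: average invoice amount seen in training vs. this week.
training_amounts = [100, 102, 98, 101, 99]
this_week = [110, 112, 108]
print(should_retrain(training_amounts, this_week, accuracy=0.94))
```

A scheduled pipeline stage could run a check like this against recent production data and start the retraining job only when it returns true, which avoids retraining on a fixed calendar regardless of need.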

Safeguarding Operations: The "Human in the Loop" (HITL) Framework

Introducing powerful autonomous agents into mission-critical business processes carries significant operational risk. To keep AI systems from breaking existing workflows, making irreversible errors, hallucinating incorrect data, or violating compliance regulations, organizations must deliberately architect robust “Human in the Loop” (HITL) frameworks.

It is vital to understand that an HITL requirement is not a failure of the automation initiative. Rather, it is a formally engineered, highly intentional control layer that elegantly balances the high-speed execution capabilities of AI with the essential, nuanced judgment of a human expert.

If you zoom out, this is part of a broader “trust architecture” trend where the winning model is often 90% automation and 10% human oversight—enough human review to stay safe, without giving up the benefits of scale.

Identifying the Absolute Necessity for Human Intervention

The core principle of HITL is to let the AI agent run autonomously at machine speed until it reaches a high-stakes decision point, a sensitive action, or an ambiguous edge case it cannot resolve with high confidence. Key operational scenarios requiring strict human oversight include:

- Irreversible or Highly Sensitive Actions: Any automated action that could result in accidental data loss, significant financial transactions, or permanent modifications to core CRM customer records requires a hard human checkpoint. For example, an AI agent managing AR collections can autonomously draft emails, answer billing queries, and even negotiate standard, pre-approved payment plans. However, executing a command to overwrite a critical account status, delete a client record, or issue a massive financial refund must be automatically paused and routed to a human financial controller for authorization.

- Regulatory and Compliance Gating: In heavily regulated industries such as pharmaceutical manufacturing, fintech, or healthcare, AI-enabled systems require highly structured human governance. In environments adhering to Digital Good Manufacturing Practices (Digital GMP) or FDA regulations, human operators must provide formal, auditable decision gating and exception handling. This keeps the autonomous agents within validated, legally defensible, and ethically bounded production parameters.

- Ambiguity, Nuance, and Low Confidence: When an AI model processes highly unstructured or deeply ambiguous input—such as a heavily nuanced, custom legal contract from a new vendor, or a multi-threaded, irate customer complaint—and its internal confidence score falls below a predetermined safety threshold, the workflow must fail gracefully. It must instantly pause, summarize the context, and escalate the entire thread to a human subject matter expert.
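The three scenarios above can be expressed as a small routing function that decides whether an agent's proposed action runs unattended or pauses for a person. This is a minimal sketch under stated assumptions: the action names, the `ProposedAction` fields, the 0.85 confidence floor, and the 1,000 amount limit are all hypothetical placeholders, not values from a real policy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"


# Hypothetical action names; a real system would load these from policy config.
ALWAYS_REVIEW = {"delete_client_record", "overwrite_account_status"}


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float        # model-reported confidence between 0.0 and 1.0
    amount: float = 0.0      # monetary value involved, if any
    regulated: bool = False  # True when the step sits behind a compliance gate


def route_action(
    action: ProposedAction,
    confidence_floor: float = 0.85,  # assumed safety threshold
    amount_limit: float = 1_000.0,   # assumed cap for unattended payments
) -> Tuple[Route, str]:
    """Return the route for an action plus a short reason for the audit trail."""
    if action.name in ALWAYS_REVIEW:
        return Route.HUMAN_REVIEW, "irreversible or sensitive action"
    if action.amount > amount_limit:
        return Route.HUMAN_REVIEW, "amount exceeds unattended limit"
    if action.regulated:
        return Route.HUMAN_REVIEW, "regulatory decision gate"
    if action.confidence < confidence_floor:
        return Route.HUMAN_REVIEW, "confidence below threshold"
    return Route.AUTO_EXECUTE, "within autonomous limits"
```

Ordering the checks from hard rules to soft signals means a highly confident model still cannot bypass an irreversible-action or compliance gate. Confidence is only consulted once the action is known to be safe in principle.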

Technical Implementation of HITL in Production Workflows

Integrating human approvals into an automated, high-speed pipeline presents a difficult engineering challenge: the system must pause execution gracefully without timing out, crashing, or losing the conversational context it holds in memory.

- Long-Term State Persistence: Human review operates on unpredictable timelines. A busy CFO might take three minutes, three days, or three weeks to review and click “Approve” on a large transaction. The architecture must keep the workflow state consistent throughout this waiting period. Technologies such as “Durable Objects” or long-running, resilient workflow orchestrators (such as Temporal) persist the original request, the intermediate AI reasoning, and all temporary operational state in durable storage until the human finally acts.

- Timeouts and Escalation Logic: Despite state persistence, critical business workflows cannot wait indefinitely in a queue. Intelligent HITL systems utilize complex scheduling parameters to manage delays. They can automatically send pings or reminders via Microsoft Teams or email after a set period of inactivity. If a primary manager is on vacation, the system can automatically escalate the approval to alternate directors, or even execute a safe, default “auto-rejection” based on strict corporate business rules to prevent bottlenecks.

- Immutable Audit Trails: Every time a human intervenes in, overrides, or approves an AI action, the system must record the interaction in a secure, immutable SQL database log. Capturing who made the decision, the precise timestamp, the justification provided, and all relevant metadata supports regulatory defensibility and operational transparency.

- The Continuous Improvement Loop: HITL is not merely a static safety net; it is also a primary mechanism for refining the AI model. When human experts correct an AI error, reject an action, or approve an edge case, that interaction data is fed back into the Azure DevOps MLOps pipeline. This structured feedback loop is used to fine-tune the underlying model, improving its accuracy and progressively reducing the need for human intervention on that type of task.
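The persistence, timeout, and audit-trail points above can be illustrated with a small, self-contained sketch. It uses Python's built-in `sqlite3` module purely as a stand-in for a production store; it is not how Temporal or Durable Objects work internally. The table layout, function names, and the 24-hour and 7-day windows are assumptions chosen for illustration. Triggers make the audit table append-only, which approximates an immutable log at the database level.

```python
import json
import sqlite3
from datetime import datetime, timedelta

SCHEMA = """
CREATE TABLE IF NOT EXISTS approvals (
    id INTEGER PRIMARY KEY,
    payload TEXT NOT NULL,   -- serialized request plus intermediate AI reasoning
    approver TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'pending',
    created_at TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS audit_log (
    id INTEGER PRIMARY KEY,
    approval_id INTEGER NOT NULL,
    actor TEXT NOT NULL,
    decision TEXT NOT NULL,
    justification TEXT NOT NULL,
    decided_at TEXT NOT NULL
);
CREATE TRIGGER IF NOT EXISTS audit_no_update BEFORE UPDATE ON audit_log
BEGIN SELECT RAISE(ABORT, 'audit_log is append-only'); END;
CREATE TRIGGER IF NOT EXISTS audit_no_delete BEFORE DELETE ON audit_log
BEGIN SELECT RAISE(ABORT, 'audit_log is append-only'); END;
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the store; pass a file path to survive process restarts."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def request_approval(conn, payload: dict, approver: str, now: datetime) -> int:
    """Persist the paused workflow state and return its approval id."""
    cur = conn.execute(
        "INSERT INTO approvals (payload, approver, created_at) VALUES (?, ?, ?)",
        (json.dumps(payload), approver, now.isoformat()),
    )
    conn.commit()
    return cur.lastrowid


def record_decision(conn, approval_id, actor, decision, justification, now) -> None:
    """Close a pending approval and append who, what, why, and when to the log."""
    cur = conn.execute(
        "UPDATE approvals SET status = ? WHERE id = ? AND status = 'pending'",
        (decision, approval_id),
    )
    if cur.rowcount == 0:
        raise ValueError(f"approval {approval_id} is not pending")
    conn.execute(
        "INSERT INTO audit_log (approval_id, actor, decision, justification, decided_at)"
        " VALUES (?, ?, ?, ?, ?)",
        (approval_id, actor, decision, justification, now.isoformat()),
    )
    conn.commit()


def sweep_timeouts(
    conn,
    now: datetime,
    remind_after: timedelta = timedelta(hours=24),  # assumed reminder window
    reject_after: timedelta = timedelta(days=7),    # assumed hard deadline
) -> list:
    """Auto-reject stale requests; return (id, approver) pairs that need a reminder."""
    reminders = []
    rows = conn.execute(
        "SELECT id, approver, created_at FROM approvals"
        " WHERE status = 'pending' ORDER BY id"
    ).fetchall()
    for approval_id, approver, created_at in rows:
        age = now - datetime.fromisoformat(created_at)
        if age >= reject_after:
            record_decision(conn, approval_id, "system", "auto_rejected",
                            "no response before deadline", now)
        elif age >= remind_after:
            reminders.append((approval_id, approver))
    return reminders
```

A scheduler would call `sweep_timeouts` periodically and hand the returned pairs to whatever sends Teams or email reminders. Escalation to an alternate approver could be added as another age band between the reminder and the auto-rejection.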

Measuring Success and ROI in AI Automation

The transition to AI-driven operations requires a substantial commitment of capital, infrastructure upgrades, custom software development, and organizational change management. Consequently, corporate leadership demands rigorous, highly quantifiable metrics to evaluate the Return on Investment (ROI) of these initiatives. The hard data emerging from enterprise deployments in 2024, 2025, and early 2026 demonstrates definitively that AI automation delivers profound, measurable impacts on both the top and bottom lines.

Macro Adoption Rates and Strategic Impact

AI is rapidly transitioning from an experimental competitive advantage to a mandatory, baseline operational requirement for survival in the B2B space. According to recent McKinsey and industry reports, a staggering 78% of B2B companies are currently utilizing AI across at least one major business function. This represents a massive shift into mainstream adoption.

In the highly competitive B2B sales domain, adoption is deeply entrenched. Over 56% of sales professionals now report utilizing AI tools on a daily basis to execute their roles. The macroeconomic impact of this shift is staggering; analysts project an aggregate $1 trillion global productivity increase driven directly by AI applications and automation within B2B environments. Organizations that fail to adopt these workflows are actively choosing to operate at a massive efficiency deficit compared to their peers.

Quantifiable Operational Metrics and ROI

Evaluating the true success of AI integration requires tracking highly specific Key Performance Indicators (KPIs) tailored directly to the automated workflow. The data proves that the ROI is rarely subtle.

| Operational Business Function | Key Performance Indicator (KPI) Monitored | Documented AI Automation Impact | Primary Strategic Business Value |
|---|---|---|---|
| B2B Sales Operations | Overall Sales Cycle Duration | 69% of sellers actively using AI report cutting their sales cycles by an average of one full week. | Radically accelerates revenue recognition; increases overall sales pipeline throughput and velocity. |
| Sales & Lead Generation | Prospect Outreach Response Rate | 28% average lift in prospect response rates utilizing AI-driven, hyper-personalized outreach at scale. | Generates significantly higher quality leads, lowering the Cost Per Lead (CPL) and boosting ROI. |
| Customer Support / CX | Average Time to Resolution (TTR) | 52% reduction in overall support ticket resolution times. | Deflects immense routine volume, allowing tier-two staff to proactively manage complex, high-value retention efforts. |
| Accounts Receivable (AR) | Days Sales Outstanding (DSO) | Average reduction of 15 to 33 days through intelligent, AI-powered collections automation. | Instantly unlocks trapped working capital; dramatically reduces bad debt write-offs and cash flow gaps. |
| Employee Productivity | Weekly Time Saved on Manual Tasks | 38% of B2B sellers save over 1.5 hours per week previously wasted on manual corporate research. | Shifts highly expensive human capital away from administrative execution directly into strategic revenue generation. |
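The DSO row can be translated into a rough cash figure with standard working-capital arithmetic: average daily credit sales multiplied by the number of days removed from DSO. The sketch below assumes a hypothetical firm with $36.5 million in annual credit sales. That figure and the function name are illustrative only and do not come from the data in the table.

```python
def working_capital_released(
    annual_credit_sales: float, dso_days_reduced: float, days_in_year: int = 365
) -> float:
    """Approximate cash freed when receivables are collected sooner."""
    average_daily_sales = annual_credit_sales / days_in_year
    return average_daily_sales * dso_days_reduced


# Hypothetical firm: $36.5M in annual credit sales, i.e. $100,000 per day.
low_estimate = working_capital_released(36_500_000, 15)   # 15-day DSO reduction
high_estimate = working_capital_released(36_500_000, 33)  # 33-day DSO reduction
```

Under these assumptions, the 15-to-33-day range corresponds to roughly $1.5 million to $3.3 million of receivables converted to cash earlier. This is a one-time release of working capital, not recurring revenue.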

Driving Revenue Growth and Amplifying Customer Satisfaction

Beyond the obvious benefits of operational efficiency and dramatic cost reduction, AI automation acts as a direct, highly potent catalyst for top-line revenue growth and deep customer loyalty.

In the realm of B2B demand generation, organizations deploying mature, AI-driven marketing automation strategies benefit from a 61% lower cost per lead compared to legacy competitors relying on manual targeting processes. Furthermore, predictive AI models utilized in dynamic pricing strategies and sales engagement platforms have been shown to improve final conversion rates by 20% to 30%. This efficiency translates into direct, bottom-line corporate profit margin lifts, sometimes averaging as high as 12%. Data also shows that B2B sales professionals utilizing AI daily are twice as likely to exceed their rigorous sales targets.

Crucially, removing the friction, delays, and errors from customer interactions yields measurable, long-term loyalty. B2B merchants successfully deploying advanced customer service automation report a remarkable 36% overall increase in repeat purchase rates. By ensuring that all corporate systems are instantly responsive, highly accurate, and deeply personalized, organizations simultaneously reduce their internal operational expenditures while maximizing the long-term lifetime value (LTV) of their entire client base.

Strategic Conclusion and Next Steps

The deep integration of artificial intelligence into B2B operational workflows is no longer an experimental venture or a futuristic concept; it is an imperative, immediate structural upgrade required for competitive survival. As the data definitively demonstrates, transitioning from rigid, legacy rule-based systems to dynamic, reasoning-capable AI-driven agents empowers organizations to radically reduce administrative overhead, massively accelerate financial and sales cycles, and deliver unprecedented, frictionless levels of customer service.

However, realizing this immense operational potential requires significantly more effort than simply purchasing off-the-shelf SaaS software and providing logins to staff. It demands a deliberate, highly secure, and deeply customized enterprise architectural strategy. Connecting sophisticated large language models to highly sensitive ERP data, orchestrating resilient, fault-tolerant Kubernetes clusters, executing RAG patterns via SQL Server, and establishing foolproof Human-in-the-Loop governance pipelines require specialized, elite engineering expertise. Organizations achieving enterprise-grade quality in their automation initiatives rely heavily on a tailored tech advantage, utilizing robust, secure stacks to build solutions perfectly fitted to their unique operational bottlenecks. For many mid-market firms, this starts with a realistic AI adoption roadmap that aligns use cases, budget, and risk.

To initiate this profound transformation and guarantee a rapid, agile deployment, organizations should adopt a strict, phased approach:

- Conduct a Rigorous Data Readiness Assessment: Carefully evaluate the current state of CRM, ERP, and internal database hygiene. AI models require clean, structured, and accessible foundational data to operate effectively and avoid hallucinations. A structured data readiness assessment often uncovers hidden blockers and quick wins.

- Identify High-Friction Workflows for Piloting: Target one specific, highly measurable workflow—such as accounts receivable collections, tier-one support triage, or HR compliance document parsing—for an initial, tightly controlled pilot program. Where legacy systems are involved, consider an incremental AI sidecar pattern so you can add “chat with data” and automation without risky rewrites.

- Partner with Elite Engineering Experts: Engage with specialized custom software development firms capable of building secure, API-driven middleware, orchestrating complex container deployments, and utilizing advanced database features to enable private, deeply grounded AI applications within your secure corporate network. For many buyers, shortlisting the right software development partner is just as important as choosing the right models and cloud services.

By thoughtfully and securely applying AI to repetitive, high-volume operations, enterprise leaders can permanently eliminate the friction of scale, unlock the true strategic potential of their human workforce, and transform administrative operational drag into a sustainable, highly automated competitive advantage.

Frequently Asked Question

What is the difference between simple rule-based automation and AI-driven automation, and when do I need each?

Rule-based automation (commonly known as traditional RPA) operates entirely on strict, developer-coded “IF/THEN” deterministic logic. It requires highly structured, predictable data and fails completely if inputs deviate even slightly from its rigidly programmed script. It is best utilized as a cost-effective solution for perfectly predictable, high-volume, low-complexity tasks, such as automatically syncing standard data fields between two databases at midnight.

In contrast, AI-driven automation uses Large Language Models (LLMs) and agentic frameworks to understand broad context, reason through ambiguity, and make probabilistic decisions in real time. It can process highly unstructured data, such as reading a complex vendor contract, analyzing the sentiment of a conversational customer email, or deciding which software tools to use to solve a novel problem. You need AI-driven automation when a business workflow requires adaptability, natural language comprehension, or dynamic decision-making, or when a task would otherwise require active human cognitive effort to parse and resolve. As you design these workflows, you should also plan for secure AI integration so models can safely access the systems and data they need.

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.