SMB AI Adoption Guide: Use Cases, Costs, Roadmap

March 30, 2026 / Bryan Reynolds

Practical AI for Small & Mid‑Sized Businesses in 2026: Where to Start

The strategic question echoing through the boardrooms of companies with 20 to 200 employees is no longer whether artificial intelligence is relevant to their sector, but rather: “Practical AI for Small & Mid‑Sized Businesses in 2026: Where to Start?”

For the past three years, the commercial market has been heavily saturated with generative artificial intelligence hype, leaving many mid-market executives—from visionary Chief Technology Officers to strategic Chief Financial Officers—struggling to separate experimental novelties from genuine business utility. However, the corporate landscape in 2026 has shifted dramatically from initial exploration to rigorous, outcome-driven execution. Artificial intelligence has transitioned from a specialized tool to a fundamental strategic asset and a profound equalizer for small and mid-sized businesses (SMBs) aiming to stay resilient, reduce operational overhead, and accelerate data-driven decision-making.

The historical technology gap between massive, heavily funded global enterprises and agile mid-sized firms is shrinking at an unprecedented rate. In early 2024, large enterprises utilized artificial intelligence at nearly double the rate of small businesses.

By the latter half of 2025, that adoption gap had virtually closed. Small business artificial intelligence usage reached 8.8% of daily core operations, while large business adoption slightly contracted to 10.5% as massive corporations wrestled with compounding legacy technical debt and complex bureaucratic governance.

Today, 58% of small businesses report using generative artificial intelligence in some capacity—more than double the adoption rate seen in 2023.

Furthermore, the implementation of these advanced systems is not resulting in the mass workforce reductions that early critics feared; rather, 82% of artificial intelligence-using SMBs reported increasing their workforce over the past year, indicating that the technology acts as a growth multiplier rather than a simple replacement for human capital.

Despite this aggressive momentum, a stark operational reality remains: 80% of artificial intelligence projects fail to scale properly, and 51% of business-to-business (B2B) organizations implement the technology without achieving their expected financial outcomes.

The organizations succeeding in 2026 are those treating artificial intelligence not as a magic panacea, but as an infrastructure enhancement that requires highly structured data, strategic organizational alignment, and rigid security governance.

This comprehensive report is engineered to answer the critical questions facing mid-market executives today. It defines artificial intelligence in plain business language, isolates the lowest-risk and highest-return use cases that can be deployed in under 90 days, provides a blueprint for data readiness, breaks down realistic investment costs, and outlines a phased adoption roadmap for immediate execution.

Demystifying Artificial Intelligence: Plain Business Language for 2026

To successfully implement artificial intelligence, cross-functional leadership—from the software engineering floor to the executive C-suite—must share a common, demystified vocabulary. The U.S. Small Business Administration (SBA) emphasizes that understanding these foundational concepts is absolutely critical for making informed purchasing, deployment, and risk-management decisions.

At its most fundamental core, Artificial Intelligence refers to computer systems programmed to solve problems and execute tasks by imitating human cognitive functions.

It is not a monolithic, sentient entity; rather, it is a broad mathematical and computational discipline encompassing various technologies designed to process massive amounts of data, recognize subtle patterns, and automate complex decisions. For many mid-sized firms, that starts with choosing the right platform and integrating AI safely into the systems they already rely on.

For a 20- to 200-person company, artificial intelligence acts as a sophisticated orchestration engine. It translates raw, unstructured data—such as thousands of customer emails, dense financial spreadsheets, and complex website traffic logs—into actionable workflows and predictive intelligence.

The Key Technologies Powering SMB Operations

The modern technological ecosystem relies on several interconnected layers of computational architecture. Understanding these distinctions helps executives procure the correct tools for specific business problems:

Machine Learning (ML): This represents the process of using historical data to produce computational models that can perform complex tasks without requiring explicit, rigid, rule-based programming for every conceivable scenario.

Instead of writing a manual line of code that dictates "if an invoice states the words 'past due', flag it," machine learning analyzes thousands of past invoices to independently learn what a high-risk financial document looks like based on myriad contextual clues.

- Algorithms: These are the specific, step-by-step mathematical rules or instructions that guide the computer on how to perform a task, sort information, or analyze the data within a broader machine learning model.

Large Language Models (LLMs): These are complex statistical models that utilize advanced mathematics, specifically neural networks, to recognize, translate, and predict language patterns.

LLMs are the underlying conversational engines powering modern chatbots, semantic search tools, and automated translation services.

- Generative AI (GenAI): A specific, highly popular subset of LLMs and machine learning that is capable of creating net-new content. While traditional artificial intelligence might analyze an existing dataset to predict next quarter's sales volume, Generative AI can autonomously draft the accompanying sales presentation, write the outbound marketing copy, and generate the necessary product imagery.

- Agentic AI: This represents the defining technological breakthrough of 2026. While early generative models required constant human prompting to function (acting merely as a digital "co-pilot"), Agentic AI refers to highly autonomous systems trained to understand context, utilize external software tools, follow complex multi-step instructions, and complete entire workflows from start to finish without ongoing human intervention. For a deeper dive into why teams of specialized agents now outperform a single “Super-Bot,” see why teams of AI agents win.

Within the generative space, mid-sized businesses are also increasingly leveraging specialized model architectures:

- Generative Adversarial Networks (GANs): These systems produce photorealistic images and media by training two separate neural networks against each other to continuously refine and perfect outputs.

- Variational Autoencoders (VAEs): These models compress complex data into smaller representations, making them highly ideal for product design and simulation variations.

- Diffusion Models: These models remove mathematical "noise" from data to produce high-quality visual outputs, commonly used for generating marketing artwork, product visuals, and branding assets.

- Transformers: The architecture that powers natural language processing systems, enabling conversational chatbots and dynamic code generation.

What Artificial Intelligence Can Actually Do for a Mid-Sized Company Today

For mid-sized B2B firms, the true commercial value of artificial intelligence is not found in replacing human intelligence or eliminating entire departments. Instead, its primary value lies in removing administrative bottlenecks and operational friction. Artificial intelligence serves as a profound equalizer, allowing a 50-person advertising agency or a 150-person software firm to deliver the deeply personalized customer experiences and operational efficiencies historically reserved for massive global enterprises.

By adopting these systems, businesses can streamline daily operations, drastically reduce fixed overhead costs, and accelerate decision-making cycles. This technological shift creates vital space for human employees to focus their energy on high-value innovation, strategic relationship-building, and complex problem resolution.

It effectively bridges the talent gap; for example, generating functional application code to assist resource-strapped IT teams or allowing junior marketing coordinators to execute comprehensive campaigns with the sophistication and targeting capabilities of senior strategists. This shift from isolated tools to coordinated systems mirrors the move toward the agentic enterprise, where AI works like a team instead of a single assistant.

Ultimately, artificial intelligence in 2026 represents a paradigm shift from static software to dynamic, intelligent workflows. It enables business systems that do not just passively store data, but actively read, interpret, analyze, and act upon it.

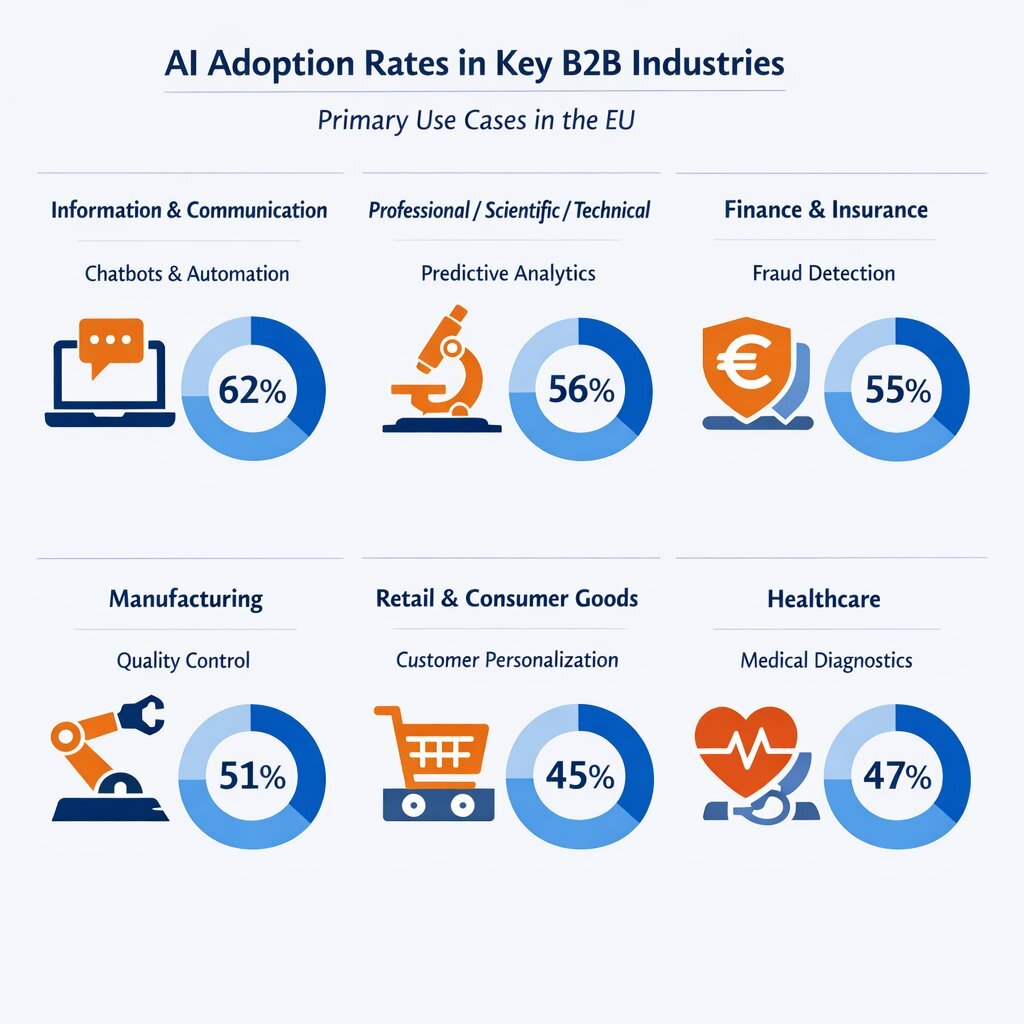

The State of AI Adoption Across Key B2B Industries

The penetration of artificial intelligence is not uniform; different sectors are adopting the technology at varying velocities based on regulatory pressures, data availability, and competitive necessity. Understanding the industry-specific adoption rates and applications provides critical context for executives mapping their own digital transformation strategies.

| Industry Sector | European Union AI Adoption Rate (2025 Baseline) | Primary Operational Use Cases |

|---|---|---|

| Information & Communication | 62.52% | Automated content curation, natural language processing for support, code generation. |

| Professional/Scientific/Technical | 40.43% | Predictive analytics, research automation, report generation. |

| Finance & Insurance | 36.11% | Credit risk modeling, fraud detection, predictive liquidity forecasting. |

| Manufacturing | 24.41% | Predictive maintenance, supply chain optimization, autonomous sensor monitoring. |

| Retail & Consumer Goods | 23.18% | Personalized recommendations, dynamic demand forecasting, 3D product modeling. |

Advertising, Marketing, and Media

The marketing sector has been fundamentally rewired by generative models. Currently, 80% of marketers use artificial intelligence for content creation, and 73% rely on generative models to craft personalized customer experiences.

The primary driver for this adoption is raw efficiency; 45% of respondents cite operational speed as the main benefit, with automated workflows reducing marketing operational costs by an average of 12.2%.

Global artificial intelligence marketing revenue, which stood at roughly $47 billion in 2025, is actively pacing toward $107 billion by 2028.

Marketing teams are utilizing AI to manage social media posting schedules automatically, conduct massive A/B testing on ad copy to optimize conversion rates, and map complex buying groups by combining LinkedIn data with website engagement metrics. For B2B teams that need more than generic content, architecting an AI sales engineer for instant RFPs can turn this marketing intelligence directly into revenue-driving proposals.

However, this saturation of synthetic content has created new challenges. With so much automated content flooding digital channels, 61% of marketers believe the industry is experiencing its biggest disruption in twenty years.

As generative text becomes ubiquitous, B2B brands are realizing that a strong, unique brand point-of-view and authentic human-led marketing are becoming the ultimate differentiators.

Gaming and Entertainment Development

The video game industry, despite some initial creative resistance, has heavily integrated artificial intelligence into its core development pipelines. Approximately 50% of development studios are now utilizing these tools.

The applications extend far beyond generating conceptual art. Major studios are utilizing machine learning-powered bots to stress-test multiplayer server updates, automating thousands of hours of quality assurance testing that would previously require massive human labor.

Furthermore, developers are using generative models to create intelligent non-player characters (NPCs) imbued with memory, unique personalities, and adaptive behaviors, effectively ending the era of repetitive, scripted dialogue.

Game-generating tools are accelerating development cycles by up to 90%, allowing smaller, independent studios to produce expansive titles that rival established AAA developers.

Real Estate, Mortgage, and Property Management

The real estate and property management sectors have transitioned from static spreadsheets to dynamic, predictive analytics. Failing to leverage these models for portfolio analytics now leaves property managers blind to emerging market trends.

Artificial intelligence analyzes historical leasing data, local market demand, and macroeconomic seasonal trends to recommend dynamic unit pricing that maximizes revenue while ensuring high occupancy rates.

Furthermore, 83% of renters now value digital-first experiences, prompting property managers to deploy AI-powered virtual property tours that feature chatbots capable of answering real-time questions about square footage, amenities, and lease terms.

In the high-risk area of tenant screening, artificial intelligence analyzes behavioral signals, document authenticity, and employment stability to flag sophisticated application fraud—such as manipulated PDF bank statements and fake pay stubs—that human leasing agents frequently miss.

Financial Services and Accounts Payable

The financial services sector shows some of the strongest adoption and return on investment (ROI) metrics globally.

In corporate finance departments, 65% of organizations utilize artificial intelligence, with an overwhelming 97% of financial leaders reporting tangible, measurable benefits.

Machine learning excels in highly structured, rule-based financial environments. AI-enhanced Accounts Payable (AP) automation systems seamlessly extract data from incoming PDF invoices, auto-populate enterprise resource planning (ERP) fields based on historical patterns, and execute complex two-way and three-way purchase order matching.

These systems provide strategic oversight by validating payment amounts, flagging potential duplicate invoices, and identifying vendors who consistently deliver late.

By predicting future liquidity needs based on cash flow patterns, finance teams can optimize their capital deployment strategies.

Healthcare Administration and Clinical Support

Healthcare presents a unique dichotomy: it offers massive potential for operational optimization but is heavily constrained by complex regulatory frameworks. Despite these hurdles, artificial intelligence is rapidly penetrating hospital administration and clinical support systems.

Ambient listening technologies are currently deployed to monitor physician-patient interactions, automatically transcribing conversations and drafting clinical documentation into electronic health records (EHR) without requiring the physician to manually type notes.

Agentic systems are managing complex scheduling tasks, patient triaging, and navigating dense insurance billing verifications.

However, the regulatory environment in 2026 demands extreme caution. States have enacted stringent laws; for instance, California's AB 489 strictly prohibits developers of AI systems from using design elements, terms, or phrases that imply the artificial intelligence possesses a medical license.

Organizations must navigate the Health Insurance Portability and Accountability Act (HIPAA) with extreme precision, ensuring that no Protected Health Information (PHI) is inadvertently exposed to public training models. For healthcare and other regulated sectors, deploying an AI firewall against prompt injection and data leakage is quickly becoming table stakes.

Software, High-Tech, and Telecommunications

For software and high-tech companies, artificial intelligence is accelerating product development cycles and fundamentally altering DevOps procedures. Generative models can produce highly functional code, making development accessible to non-developers and allowing resource-strapped SMBs to manage acute talent shortages.

Within Site Reliability Engineering (SRE) and DevOps, agentic systems are deployed to execute auto-remediation protocols. When a server failure or application crash is detected, these autonomous agents can diagnose the root cause, restart necessary services, and apply patches without human intervention, effectively resolving 20% to 40% of standard incidents autonomously.

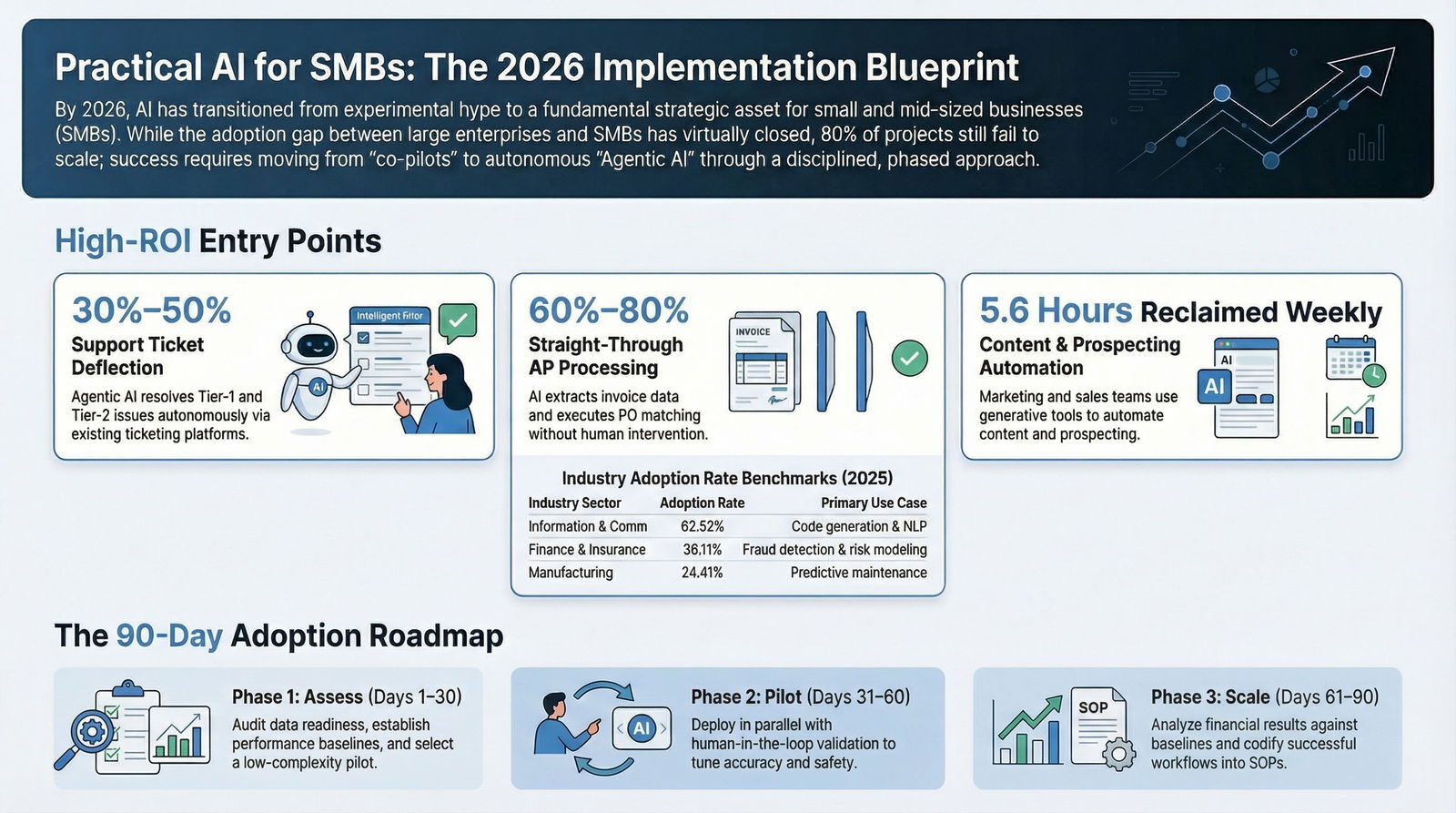

The 5 Lowest-Risk, Highest-ROI Use Cases

When evaluating where to begin the integration process, the most common and costly trap is attempting to deploy artificial intelligence across the entire enterprise simultaneously. Success requires surgical precision: selecting a few highly targeted operational nodes where the technology can deliver immediate, measurable transformation, and executing with steady, relentless discipline.

For a typical mid-sized B2B firm seeking to implement a pilot project within a strict 90-day window, the focus must remain squarely on low-risk, high-reward applications. These specific use cases deliberately avoid high-stakes regulatory environments and instead target internal bureaucratic bottlenecks, repetitive data entry tasks, and customer response latency.

1. Customer Support and CX Triage (Agentic AI)

Customer service operations represent the most mature, heavily tested, and easily measurable entry point for mid-market implementation. Traditional, first-generation chatbots relied on frustrating, hard-coded decision trees that frequently trapped customers in endless loops. In 2026, those legacy systems have been replaced. Agentic artificial intelligence systems now instantly respond to routine customer interactions using advanced natural language processing.

The Implementation: AI agents integrate directly into the company's existing support middleware and ticketing platforms (such as Zendesk, Intercom, or Salesforce Service Cloud) and actively read the company's internal knowledge base.

They do not merely deflect incoming tickets to a human queue; they actively resolve Tier-1 and Tier-2 issues autonomously. These agents can track complex shipping orders, execute secure password resets, process financial refunds within strictly preset managerial limits, and intelligently schedule human callbacks for highly complex escalations.

The ROI: Businesses implementing Agentic AI for customer support workflows frequently experience a 30% to 50% deflection in basic service tickets, alongside a 5- to 10-point improvement in overall Customer Satisfaction (CSAT) scores for the intents handled by the agent.

This automation prevents human service representatives from experiencing burnout, ensuring drastically faster response times and perfectly consistent support 24/7, without requiring the company to scale headcount aggressively during peak seasons.

2. Marketing Content and Sales Enablement

Marketing and sales teams are under relentless, constant pressure to generate highly personalized, context-aware outreach to prospects. Artificial intelligence allows small businesses to achieve scalable personalization—building brand loyalty with the exact same level of messaging sophistication as large, well-resourced global enterprises.

The Implementation: Generative tools (incorporating diffusion models for visuals and transformers for text) can craft and personalize outbound emails, dynamically generate sales pitch decks, draft highly technical blog posts, and create commercial ad mockups in seconds.

Furthermore, these systems simplify social media management by automating posting schedules at optimal times and conducting rapid A/B testing on ad copy to evaluate performance metrics.

In the B2B sales funnel, predictive systems score inbound leads based on buying intent signals and instantly trigger automated, multi-threaded nurture campaigns.

The ROI: Approximately 75% of active marketers utilize these systems to reduce manual busywork.

On average, a small business worker reclaims 5.6 hours per week using these optimization tools.

For Sales Development Representatives (SDRs), autonomous prospecting agents can effectively double the number of meetings booked within targeted segments by automating tedious account research and seamlessly executing CRM data entry.

3. Accounts Payable and Financial Operations

The corporate finance department represents a massive, highly lucrative opportunity for automation, primarily because financial data is inherently structured, standardized, and rule-based.

The Implementation: AI-enhanced Accounts Payable automation software extracts granular data from incoming invoices, auto-populates complex ERP fields based on historical categorization patterns, and executes rigorous two-way and three-way purchase order (PO) matching.

It continuously analyzes cash flow patterns to predict future liquidity needs, flags potential duplicate payments, and identifies vendor fraud anomalies with precision that human auditors lack.

The ROI: Target operational outcomes include 60% to 80% straight-through processing for standard "happy path" invoices without any human intervention required.

Finance teams eliminate hundreds of hours of manual data entry, drastically reduce the incidence of human keystroke error, and achieve a highly audit-ready operational posture that satisfies regulatory requirements. For organizations that rely heavily on analytics and reporting, this is also the place to stop trusting vector search with your numbers and move to robust, SQL-first AI reporting.

4. Meeting Documentation and Internal Knowledge Capture

Institutional knowledge is frequently lost in unrecorded virtual meetings, fragmented chat channels, and hastily written emails. Artificial intelligence now serves as a seamless, omnipresent organizational memory bank.

The Implementation: Advanced transcription and ambient listening tools (such as Fathom, Fireflies, or Otter) silently join video conferences to record, transcribe, and intelligently summarize complex multi-party discussions.

These linguistic tools isolate specific action items, identify key strategic decisions, and can automatically push these tasks directly into project management software (like Jira or Asana) or log meeting notes directly into an account record in a CRM platform.

The ROI: Immediate, compounding productivity gains. Employees completely reclaim the time previously spent writing up post-meeting reports and formatting notes. Furthermore, senior management gains complete, searchable visibility into sales pitches, client webinars, and internal strategy sessions without having to physically attend every single call.

5. Automated Data Enrichment and Signal Aggregation (Discoverability)

Because 61% of B2B buyer research occurs in the "dark funnel" before any direct vendor contact is made, achieving early discoverability and understanding anonymous buyer intent is critical for mid-sized firms.

The Implementation: Organizations use automated workflows to aggregate intent signals from install-base platforms, review websites (like G2 or TrustRadius), and professional networks (LinkedIn).

Generative systems automatically enrich raw contact records with deep firmographic and technographic data, while simultaneously developing complex buying group maps to execute multi-threaded engagement strategies across an entire target enterprise.

The ROI: This drastically improves the signal-to-opportunity conversion rate. It ensures that sales teams prioritize outreach based on mathematical correlation with closed-won deals rather than gut instinct, ultimately reducing the average duration of the consideration stage in the sales cycle.

Is Your Business Ready? The AI Data and Infrastructure Foundation

The single greatest point of failure for mid-sized businesses adopting these technologies is a profound lack of foundational readiness. According to comprehensive industry data, poor data quality is the leading cause of artificial intelligence project failure, responsible for stalling 43% of initiatives in European and global SMBs before they ever reach a production environment.

An artificial intelligence model is only as intelligent, accurate, and secure as the data it is fed. If an organization's data is heavily siloed, poorly labeled, or unsecured, the technology will simply scale and accelerate those underlying dysfunctions.

Organizations must assess their digital maturity with brutal honesty before initiating an integration project.

A scalable program connects strategy, data governance, operating practices, security, and infrastructure so initiatives can move from concept to production without creating unmanaged commercial risk.

This requires a rigorous readiness assessment.

A successful architectural flow begins with raw, siloed data residing in traditional repositories such as Customer Relationship Management (CRM) platforms, Enterprise Resource Planning (ERP) systems, and internal knowledge bases. Before this data reaches the artificial intelligence engine, it must pass through a strict Governance and Security Layer. This intermediary layer acts as a critical filtration system, enforcing Multi-Factor Authentication (MFA), strict Role-Based Access Controls (RBAC), API gateways, and automated data scrubbing protocols. Only after passing these rigorous checks does the sanitized data interact with the Large Language Model or Agentic AI engine, which then outputs actionable intelligence—such as resolved support tickets or compiled financial reports—back into the business applications.

Technology leaders must thoroughly address three critical pillars before deploying any models:

1. Data Governance, Quality, and Provenance

The very first question an organization must answer is whether it actually knows what is being fed into its systems.

This practice prevents data poisoning and algorithmic bias by providing an immutable record of a dataset’s origin and subsequent transformations. If your existing systems are fragmented or aging, it may be safer to wrap them with an AI sidecar for legacy systems instead of attempting a risky full rebuild.

- Inventory and Silos: Is there a complete, centralized inventory of all enterprise data sources? Organizations must focus on reducing data silos to improve overall quality and accessibility.

- Documentation and Metadata: Does every database table and column have a human-readable description, not just a technical naming convention? Implement strict standards for metadata tagging.

- Lineage and Tracking: Are the relationships between different data sources meticulously mapped and documented? Do teams know which data assets are actively used versus those that exist purely out of inertia? Automated lineage tracking via MLOps tools is required to log every transformation.

- Historical Curation: Do teams possess at least 6 to 12 months of exceptionally clean, labeled, and reliable historical data to properly train or fine-tune models?

2. Infrastructure and Systems Integration

Artificial intelligence systems do not exist in a vacuum; they must communicate seamlessly and rapidly with existing CRM, ERP, and Human Resource Information Systems (HRIS).

- Middleware Scalability: Is the organization's middleware and API infrastructure capable of scaling effectively with the automated, high-frequency API calls generated by autonomous agents?

- Compute Workload Placement: Does the organization have the right cloud or hybrid infrastructure to handle the computational spikes required by machine learning workloads? Planners must determine where workloads should reside based on data gravity, latency constraints, compliance rules, and infrastructure cost.

- Performance Monitoring: Organizations must establish precise methods to observe and measure model behavior in production, specifically tracking output accuracy, mathematical drift, network latency, system usage, and cost per generated outcome.

3. Cybersecurity and Risk Management

A poorly secured implementation can lead to catastrophic data breaches, the loss of proprietary intellectual property, and severe compliance violations that cripple a mid-sized business.

Disturbingly, 83% of SMBs report that the technology has actively increased their overall cyber threat level.

Moving from reactive defense to strategic risk management requires a formal model risk governance framework.

- Identity and Access Controls: Are strict Role-Based Access Controls (RBAC) rigidly enforced? An agent should never have access to sensitive financial data or employee HR records unless explicitly authorized for that specific operational workflow.

- Endpoint Protection and Immutability: Basic cybersecurity hygiene must be absolutely flawless. Organizations must enforce phishing-resistant Multi-Factor Authentication (MFA), deploy active Endpoint Detection and Response (EDR) platforms across all endpoints, and maintain immutable data backups to recover from potential ransomware or system-wide algorithmic failures.

Vendor Accountability and Supply Chain: If using third-party software, has the vendor's underlying security posture been aggressively audited? Organizations must ensure that sensitive customer data fed into a third-party tool is legally shielded from being used to train the vendor's public models.

This requires treating models like critical software and applying DevSecOps principles, including container image scanning, to the entire supply chain.

- Adversarial Attack Testing: Traditional security testing often misses unique vectors like prompt injection and model evasion. Organizations must embed automated adversarial testing to simulate attacks and discover how models fail before malicious actors exploit them.

The True Cost of AI: Investment and Realistic Payback Periods

One of the most pressing questions from Chief Financial Officers is calculating the total cost of ownership (TCO) for these implementations. By 2026, corporate strategy conversations have evolved dramatically; financial leaders are no longer asking what is technologically possible in a laboratory, they are demanding to know exactly what pays off on the balance sheet.

The total cost of development and deployment is determined by a highly complex array of factors, including use-case complexity, data cleanliness, model type selection, and legacy integration requirements.

However, for planning purposes, enterprise costs can generally be categorized into distinct investment tiers based on the scope and depth of the project.

Investment Tiers for Mid-Sized Businesses

| Investment Tier | Typical Cost Range | Scope of Implementation | Hidden Cost Drivers |

|---|---|---|---|

| Small AI Projects | 25,000 – 75,000 | Early-stage initiatives leveraging existing platform capabilities. Examples include internal knowledge chatbots, basic predictive forecasting, or single-system CRM wrappers. Software subscriptions typically run 20–50 per user per month. | Unaccounted manual data cleanup, employee training (minimum 4-8 hours per person), and disjointed pilot management. |

| Mid-Level AI Applications | 75,000 – 250,000 | Custom solutions connected directly to operational systems. Involves developing Extract, Transform, Load (ETL) data pipelines, multi-model logic, and distinct business-facing user interfaces. | Integration patches for highly fragmented legacy systems and unpredictable cloud usage spikes during initial model training phases. |

| Enterprise-Grade AI Systems | 250,000 – 1,000,000+ | Production-grade ecosystems utilizing fine-tuned Large Language Models, Retrieval-Augmented Generation (RAG) architectures, and complex real-time decision orchestration. | Rigorous security hardening, intense governance compliance controls, and massive, ongoing GPU compute spend. |

Hidden Financial Liabilities and Ongoing Maintenance

A critical, often fatal financial error many businesses make is treating artificial intelligence as a one-time capital expenditure (CapEx). It is a living, continuously evolving system. Organizations should budget approximately 15% to 25% of the initial build cost annually strictly for ongoing maintenance.

This operational expenditure (OpEx) covers necessary Machine Learning Operations (MLOps). Models inevitably experience "drift" over time as underlying market conditions, language trends, or customer behaviors shift, requiring continuous technical monitoring and periodic retraining cycles with fresh data.

Furthermore, there are inescapable ongoing cloud infrastructure costs for raw compute power (GPUs) and transactional API token usage.

Change management represents another significant hidden financial burden. Employees require dedicated, paid time to effectively learn new workflows, draft Standard Operating Procedures, and physically restructure their daily tasks. Initiatives frequently fail because enterprises drastically underestimate the preparatory work required to make a system reliable and compliant before the actual computational training even begins.

Calculating the Payback Period and ROI

Measuring the Return on Investment (ROI) requires looking far beyond simple top-line revenue increases to properly factor in operational efficiency, time reclamation, and risk mitigation.

A recent executive survey found that only 29% of leaders can measure this ROI confidently, highlighting the difficulty of translating productivity into hard financial data.

- Hard Cost Reduction: If a $75,000 implementation in the customer support department permanently reduces the need to hire three additional seasonal representatives (saving approximately $15,000 per month in payroll and benefits), the payback period is a highly favorable five months. After that exact point, the operational savings accumulate as pure financial return.

Bandwidth Expansion (Time Savings): Data indicates that 58% of employees utilizing these tools save roughly 52 minutes a day, or nearly five hours a week.

If a 50-person marketing and sales team collectively reclaims 250 hours a week, that massive bandwidth can be redirected toward outbound revenue-generating prospecting. This results in a much higher volume of closed-won deals without increasing the fixed payroll budget.

- Cost Avoidance (Risk Mitigation): Often grossly underreported, the technology delivers massive financial value by reducing failure, waste, and systemic risk. For example, anomaly detection in the finance department prevents thousands of dollars in duplicate invoice payments, while predictive maintenance models in commercial real estate avoid catastrophic, highly expensive HVAC or elevator equipment failures.

Organizations that focus their initial deployments on highly pragmatic, tightly scoped use cases generally see measurable financial impact and clear payback within a 6 to 12-month window.

Conversely, companies that chase technically impressive models to solve problems nobody actually possesses will burn through budgets rapidly, delivering zero measurable impact on the profit and loss statement.

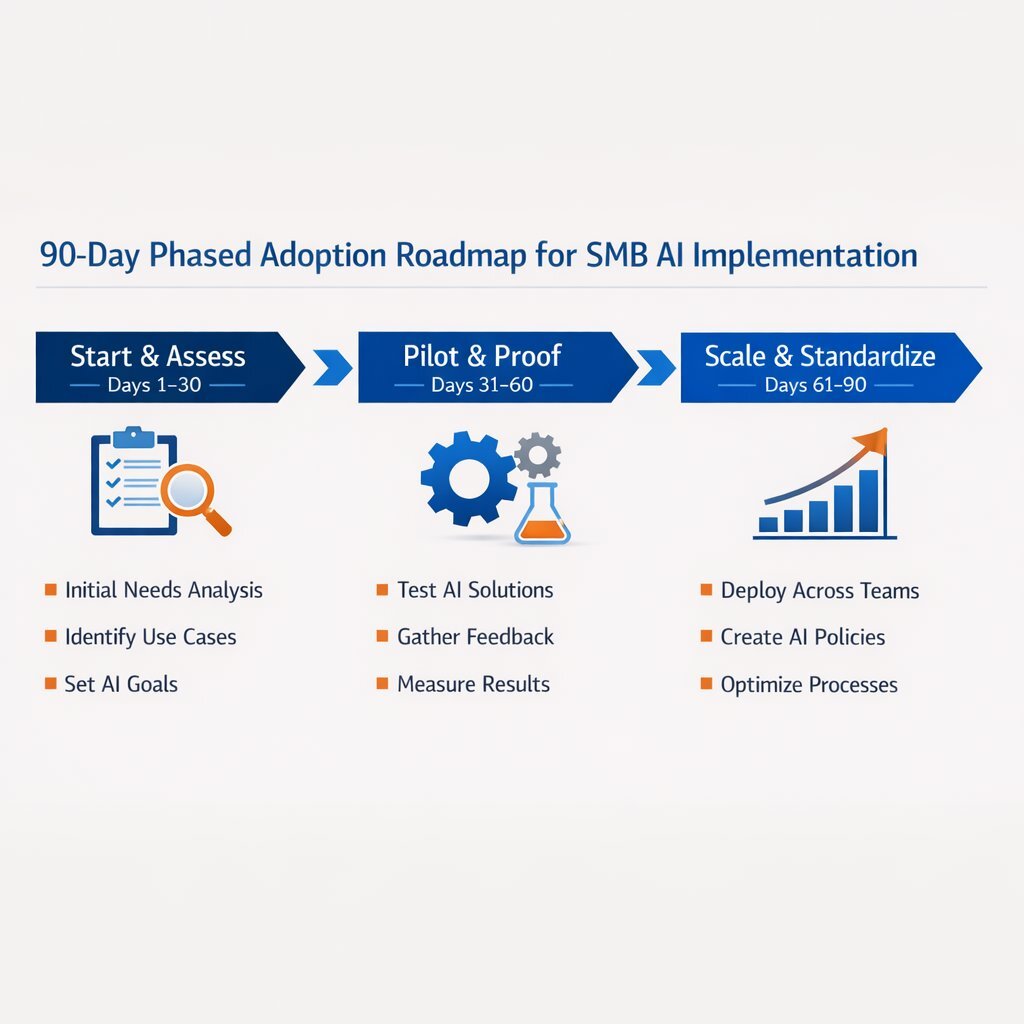

The 90-Day Phased Adoption Roadmap

Transitioning from theoretical board-level exploration to a live, value-generating implementation requires a highly structured, chronological approach. Attempting a massive, company-wide digital transformation overnight invariably disrupts core daily operations and breeds severe employee resistance.

A successful SMB pilot must be executed over a rigid 90-day timeline. The overarching goal is not broad, immediate deployment, but rather designing a small, meaningful pilot that builds internal organizational capability, generates early learning, and proves undeniable financial value.

Phase 1: Start and Assess (Days 1 – 30)

The first month is exclusively dedicated to establishing the technical foundation, selecting the appropriate target, and preparing the workforce psychologically.

- Select a High-Impact, Low-Complexity Pilot: Do not start with a mission-critical financial ledger or highly regulated healthcare records. Choose an internal, low-risk workflow that is currently a known operational bottleneck. Excellent starting points include automated meeting summarization, initial marketing content drafting, or tier-1 support email triage.

- Establish Baselines and Strict Metrics: Leadership must clearly define what success looks like numerically. Document the exact current metrics before the new tool is introduced. What is the current average time to resolve a technical ticket? How many labor hours are currently spent formatting weekly reports? You cannot mathematically measure ROI without an established baseline.

- Ensure Data and Security Readiness: Conduct a swift, comprehensive audit of the data required for the specific pilot. Ensure that strict access controls are established and verify that no sensitive Personally Identifiable Information (PII) or proprietary trade secrets will be inadvertently exposed to open-source public models.

Build Psychological Safety: Executive leadership must communicate clearly to the entire workforce that the technology is being implemented to augment their professional judgment and reduce tedious administrative burdens, not to replace their roles or initiate layoffs.

Assign an influential executive sponsor who will publicly champion the effort.

Phase 2: Pilot and Proof (Days 31 – 60)

The second month shifts focus to technical deployment, parallel testing, and rapid, daily iteration within a highly controlled operational sandbox.

Deploy in Parallel: Launch the solution inside existing operational systems, but initially mandate that it runs in parallel with traditional human methods.

For example, configure the system to draft responses to incoming customer emails, but require a human service agent to physically review, edit, and manually approve every single message before it is transmitted.

- Monitor and Tune: Hold mandatory weekly review sessions to critically analyze the generated outputs. Engineering teams must tune the linguistic prompts, adjust data access parameters, and carefully refine escalation thresholds based on real-world friction.

- Human-in-the-Loop Validation: Enforce strict, unyielding quality assurance. Implement checks for factual accuracy and demographic biases, ensuring the outputs perfectly reflect the company's specific culture, legal principles, and branding tone. This 90/10 balance between automation and review echoes the 90/10 Human-in-the-Loop trust architecture many enterprises now follow.

Phase 3: Scale and Standardize (Days 61 – 90)

The final month manages the critical transition of the pilot from a controlled experiment to standard, company-wide operating procedure.

- Analyze Financial Results: Compare the pilot's performance directly against the hard baselines established in Phase 1. Did the system actually save the projected labor hours? Did output accuracy improve or degrade?

- Codify Playbooks: Meticulously document the exact processes, system configurations, and prompt engineering techniques that led to the successful outcome. Create clear, written Standard Operating Procedures (SOPs) for all future employees utilizing the tool.

Expand Methodically: Once mathematically validated and legally cleared, begin carefully rolling out the successful pilot architecture to adjacent teams, secondary departments, or new geographical regions.

Build management dashboards that track long-term business KPIs (like revenue influenced or churn reduced) rather than just monitoring superficial software activity metrics.

Avoiding the Trap: Why Projects Fail and How to Succeed

Despite the intense optimism surrounding the technology, the reality of implementation is fraught with peril. It is imperative to understand why 80% of projects fail at twice the failure rate of traditional IT initiatives.

Companies are abandoning initiatives at alarming rates, driven by mounting costs and lack of measurable utility.

One of the most destructive phenomena is the "hallucination"—when a language model confidently generates completely false or fabricated information. In 2024 alone, LLM hallucinations cost global businesses over $67 billion in associated losses.

These are rarely spectacular failures that make international headlines; rather, they are the quiet, insidious accumulation of slightly wrong answers, degraded customer trust, and ultimately abandoned internal projects that nobody noticed were failing until the budget was exhausted.

Failures are rarely caused by the core algorithms themselves; they are caused by human organizational failures. Poor data quality is the culprit in 43% of failed initiatives.

If a company attempts to train a model on highly fragmented, outdated, or contradictory CRM records, the model will output contradictory advice. Another primary failure point is the lack of executive sponsorship; if the CEO or CFO is not directly involved in driving the agenda and enforcing adoption, the project will invariably stall at the proof-of-concept stage.

To avoid these costly traps, organizations must adhere to strict legal and technical best practices. From a legal standpoint, businesses must use vendor contracts to define absolute accountability, include specific usage policies in employee guidelines, and rigorously protect their intellectual property from being ingested by public systems.

Technically, organizations must validate outputs with secondary systems, provide curated contextual data to the model, and implement automated checks for high-stakes outputs before they are published.

How Tailored Technology Accelerates the Journey

Implementing these systems effectively requires significantly more than just purchasing off-the-shelf software subscriptions; it demands a resilient, integrated technical foundation. For mid-sized businesses, attempting to build this infrastructure entirely in-house is often a recipe for failure. Vendor-led implementations and strategic partnerships in the SMB space succeed twice as often as internal builds alone, primarily because external partners bring deep, specialized architectural knowledge that internal IT teams typically lack.

Organizations specializing in custom software development and application management, such as Baytech Consulting, create a tailored technological advantage. By focusing on enterprise-grade quality and rapid agile deployment, external engineering teams ensure that deployments are deeply, natively integrated with a business’s unique workflows rather than functioning as clunky, disconnected bolt-on tools. If you’re evaluating potential partners, comparing the top software development companies in California can help you benchmark experience, tech stack, and real-world results.

The Critical Role of Containerization and Robust Architecture

A highly common and incredibly frustrating failure point in machine learning development is the "it works on my machine" problem. A model may perform flawlessly in a local testing sandbox, but immediately break down when deployed to a live, high-traffic production server due to subtle differences in software dependencies or operating system configurations.

Modern infrastructure relies heavily on advanced containerization technologies and orchestration systems to eradicate this problem entirely.

Docker Containerization: Docker packages a model alongside all of its necessary code, libraries, settings, and structural dependencies into a single, standardized, immutable unit (a container).

This technological standard guarantees that the model will behave identically regardless of where it is deployed—whether running on a developer's local instance of VS Code/VS 2022, an on-premise server, or scalable OVHCloud servers.

Kubernetes Orchestration: Kubernetes then manages these deployed containers at massive scale. If an application experiences a sudden, massive surge in traffic—such as a Black Friday spike in customer support queries or an end-of-quarter influx of financial data processing—Kubernetes automatically scales up the number of active containers to handle the heavy workload (a process known as Horizontal Pod Autoscaling).

Once the traffic subsides, Kubernetes automatically reduces the container count, optimizing CPU and memory resources to prevent unnecessary cloud expenditures.

To manage these complex Kubernetes clusters securely and efficiently, modern engineering teams utilize solutions like Harvester HCI (Hyperconverged Infrastructure) and Rancher, ensuring seamless deployment and centralized management across multiple environments.

Furthermore, data cannot simply exist in a vacuum; it requires robust, enterprise-grade structuring. By utilizing powerful relational database management systems like Postgres (managed via pgAdmin) and Microsoft SQL Server, engineers ensure that the historical data fed into the models is highly structured, relational, and instantly accessible.

When this sophisticated backend architecture is combined with secure network perimeters (managed by tools like pfSense) and integrated seamlessly into the daily platforms employees already use (such as Microsoft 365, Teams, OneDrive, and Google Drive), the result is a highly secure, frictionless environment. This comprehensive technology stack, managed via Azure DevOps On-Prem for continuous integration and delivery, is what ultimately transforms an experimental, fragile algorithm into a resilient, production-ready enterprise product that drives undeniable commercial value.

Conclusion and Next Steps

The landscape of artificial intelligence in 2026 is no longer a futuristic, theoretical concept reserved solely for massive, multi-billion-dollar tech conglomerates; it is a highly accessible, intensely pragmatic tool for achieving rapid operational efficiency. For a 20- to 200-person company, it acts as a profound equalizer, capable of deflecting massive volumes of support tickets, automating complex financial workflows, and generating deeply personalized marketing collateral at an unprecedented scale.

However, commercial success is absolutely not guaranteed simply by purchasing a new software license. Executive leaders must embrace a highly disciplined, data-first approach that prioritizes foundational readiness over superficial technological hype. To move forward intelligently, organizations should take the following immediate steps:

- Conduct a Rigorous Data Readiness Audit: Map out all internal data sources, actively eliminate operational silos, and enforce strict, non-negotiable security protocols, including Multi-Factor Authentication (MFA) and Role-Based Access Controls (RBAC). If your data landscape is messy or siloed, investing in data readiness for enterprise AI will often deliver the highest return on your next AI dollar.

- Select One High-Impact, Low-Risk Pilot: Identify a single, highly frustrating operational bottleneck that can be addressed within a 90-day window, ensuring that clear, mathematical baseline metrics are established for future ROI calculation.

- Engage Expert Engineering Partnerships: Consider partnering with custom software and engineering specialists who deeply understand and utilize scalable backend technologies—such as Docker, Kubernetes, and enterprise SQL databases—to ensure the new solution integrates flawlessly with your existing enterprise systems.

By grounding ambitious technological goals in cold operational reality and executing a tightly managed, 90-day roadmap, small and mid-sized businesses can permanently transform their workflows, empower their human workforce, and unlock sustainable, long-term commercial growth.

Supporting Resources for Further Reading

- AI-Powered Growth Engines

- (https://www.sba.gov/business-guide/manage-your-business/ai-small-business)

- (https://www.cio.com/article/3530814/level-the-playing-field-with-genai-top-smb-use-cases.html)

Frequently Asked Question

If our company uses a free or low-cost public AI tool, who is legally responsible for the content it generates or the data it processes?

According to official guidance from the U.S. Small Business Administration (SBA), if your business utilizes free, open-source, or public artificial intelligence tools, your organization assumes the primary legal and commercial risk for the outputs.

Unlike enterprise-grade, paid software solutions where the software creator may assume certain contractual liabilities or offer copyright indemnification, free public tools lack those vital protections.

Therefore, you must establish strict, written internal governance requiring a "human in the loop" to physically review all generated products for factual accuracy, cybersecurity, and ethical alignment before they are published or utilized in any client communications.

Furthermore, sensitive proprietary company data or customer Personally Identifiable Information (PII) should never, under any circumstances, be fed into free public models. Doing so means that information may be permanently incorporated into the model's public training data pool, leading to severe data security breaches and compliance violations.

Engaging competent legal counsel to ensure your internal policies comply with local intellectual property and data privacy laws is an essential, non-negotiable best practice for any mid-sized business in 2026.

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.