Ready, Set, Scale: CTO Checklist for Enterprise AI

April 13, 2026 / Bryan Reynolds

AI Readiness Checklist for CTOs and Engineering Leaders

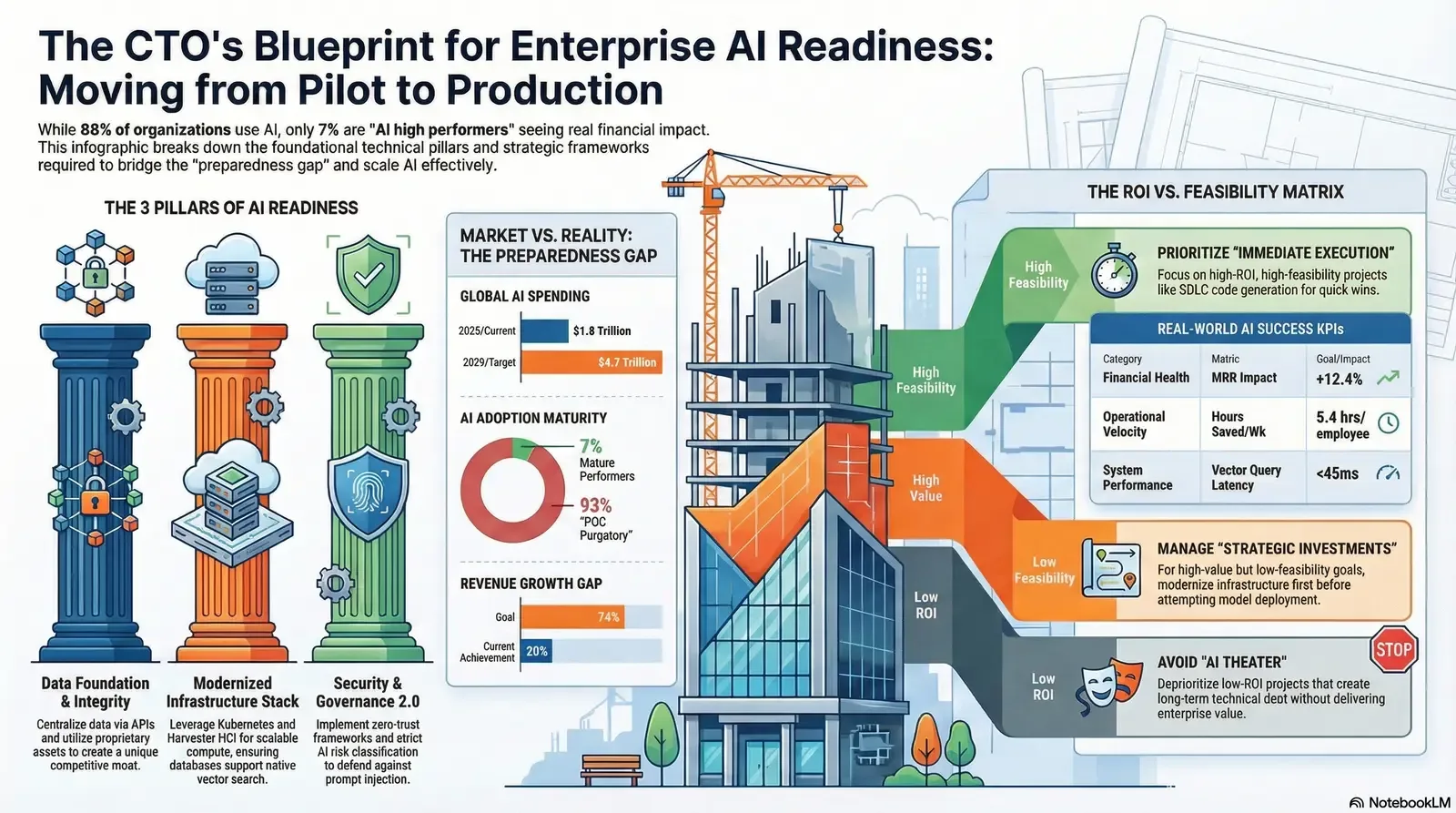

The enterprise artificial intelligence landscape has reached a definitive, irreversible tipping point. As organizations navigate the complexities of digital transformation heading into 2026, the global mandate has crystallized: the transition from experimental, siloed AI pilots to fully scaled, production-grade deployments is no longer a luxury, but a fundamental requirement for market survival. According to comprehensive market forecasts, global AI spending is projected to reach $1.8 trillion in 2025 and skyrocket to an unprecedented $4.7 trillion by 2029, growing at a compound annual growth rate (CAGR) of 33%.

Yet behind these staggering financial projections lies a sobering operational reality. While 88% of organizations report utilizing AI in at least one business function, a mere 6% to 7% qualify as mature "AI high performers" capable of capturing meaningful, enterprise-wide financial impact. The vast majority of enterprises remain trapped in a perpetual cycle of proof-of-concept (POC) purgatory. This stark maturity gap inevitably forces executive boards, visionary Chief Technology Officers (CTOs), strategic Chief Financial Officers (CFOs), and innovative department heads to confront the exact question that dictates future competitiveness: Is the organization culturally and technically ready for AI adoption?

Answering this question requires moving far beyond the surface-level implementation of generic, off-the-shelf chatbots. It demands an exhaustive evaluation of an organization's foundational technical capabilities and cultural resilience. Engineering leaders must meticulously assess whether their underlying data pipelines are structured, sanitized, and secure. They must determine whether the technology infrastructure can handle explosive compute demands and whether the workforce possesses the technical fluency to operate in an AI-augmented ecosystem.

Furthermore, navigating the complexities of modern software development requires an ecosystem built on enterprise-grade quality and agile deployment. Firms specializing in custom software development and application management, such as Baytech Consulting, illustrate that achieving a tailored tech advantage—custom-crafted with cutting-edge tools—relies on a highly integrated and mature technology stack. Whether utilizing Azure DevOps On-Prem and VS Code/VS 2022 for seamless development, managing complex relational and vector data stores via Postgres, pgAdmin, and SQL Server, or orchestrating bare-metal hybrid cloud environments with Harvester HCI, Rancher, Kubernetes, and Docker on OVHCloud servers secured by pfSense, the infrastructure must be uncompromisingly robust. Seamless internal workflows powered by Microsoft 365, Teams, OneDrive, and Google Drive further exemplify the operational maturity required before an enterprise can safely scale autonomous AI agents.

This comprehensive report delivers an exhaustive, industry-defining AI readiness checklist for CTOs and engineering leaders. By systematically dissecting infrastructure needs, data governance, security protocols, use case evaluation, engineering upskilling, and ROI measurement, this analysis provides the definitive blueprint for enterprise AI transformation in 2026 and beyond.

The State of Enterprise AI Adoption and the Preparedness Gap

To accurately gauge organizational readiness, technology executives must first comprehend the macroeconomic and technological forces driving AI adoption. The years 2024 and 2025 were characterized by aggressive, often chaotic experimentation, with generative AI adoption nearly tripling in a span of twenty-four months. By 2026, the focus has shifted entirely toward infrastructural resilience, data readiness, and the deployment of agentic AI systems—autonomous algorithms capable of executing complex, multi-step workflows without continuous human intervention.

The spending catalysts within this massive $4.7 trillion market reveal precisely where engineering leaders are directing their capital. Absolute spending is heavily dominated by physical hardware and infrastructure, but the most explosive growth rates are found in data readiness and AI cybersecurity.

- Data illustrates that while Infrastructure commands the highest absolute dollar spend, Enterprise Data Readiness is the fastest accelerating priority.

This data underscores a critical insight for CTOs: while purchasing AI software subscriptions is highly accessible, the true competitive differentiator lies in securing the underlying data and the operational environment. The staggering 155.4% CAGR in AI Data spending signals that enterprises have finally realized that AI-ready data is a non-negotiable prerequisite for scaling any intelligent system. Organizations that attempt to bypass this foundational step find themselves among the 60% of enterprises forced to abandon their AI initiatives.

The Preparedness Gap

A paradox has emerged in the corporate landscape, defined by industry analysts as the "preparedness gap." According to extensive surveys of enterprise leaders, while 42% of companies believe their overarching corporate strategy is highly prepared for AI adoption, they report feeling significantly less prepared regarding the actual operational mechanics: infrastructure, data architecture, risk management, and talent acquisition.

This disconnect between strategic ambition and operational reality is the primary reason why 74% of organizations hope to grow revenue through AI, yet only 20% are currently achieving top-line growth. Most organizations are currently capturing basic productivity and efficiency gains (66%) by using AI at a surface level, rather than undertaking the difficult work of redesigning key processes or deeply transforming their business models.

- Organizations are currently achieving benefits in productivity and efficiency (66%) and decision-making (53%) rather than top-line growth (20%).

To bridge this gap, engineering leaders must shift their focus from the technology itself to the cultural and infrastructural readiness of the enterprise. True digital readiness requires an agile organizational culture where cross-functional collaboration between developers, security teams, and business units is standard practice, supported by proven agile methodology in day-to-day delivery.

Evaluating Use Cases and Priorities Across B2B Industries

A primary barrier to achieving measurable Return on Investment (ROI) is the misalignment of AI initiatives with core business objectives. Many organizations fall into the trap of crowdsourcing AI ideas from the bottom up, resulting in fragmented, disconnected experiments that fail to move the financial needle. CTOs must evaluate use cases based on strategic priority, data availability, and technical feasibility.

The most successful deployments occur when a visionary CTO collaborates intimately with a strategic CFO, a driven Head of Sales, and an innovative Marketing Director to target workflows characterized by high-volume, repetitive tasks that are currently bottlenecked by human capacity. Increasingly, this looks like moving beyond chat widgets and designing true AI copilots that embed directly into core workflows.

Industry-Specific AI Applications and Readiness

The readiness requirements and high-ROI use cases vary significantly across B2B sectors. Engineering leaders must tailor their approach based on the specific operational and regulatory demands of their industry ecosystem.

Finance and Banking

The finance sector views AI not merely as an operational tool, but as a critical infrastructure upgrade. High-ROI use cases include real-time fraud detection in payment gateways, Anti-Money Laundering (AML) pattern detection, and hyper-personalized financial planning algorithms. AI systems in this domain must seamlessly ingest massive, continuous transactional datasets and adapt to emerging threat tactics in milliseconds. This requires exceptionally low-latency infrastructure and stringent regulatory compliance audit trails. The readiness checklist for finance CTOs must heavily index on model explainability, ensuring that every automated credit decision or risk assessment can be transparently justified to financial regulators.

Commercial Real Estate and Mortgage

The commercial real estate (CRE) and mortgage sector is moving aggressively beyond basic property listings toward intelligent contract analysis and due diligence acceleration. AI-powered lease abstraction tools can extract critical terms—such as rent schedules, escalation clauses, tenant names, and renewal options—from hundreds of pages of unstructured legal documents in a matter of minutes. This capability drastically reduces the due diligence timeline for property acquisitions, providing a massive competitive advantage in fast-moving markets. For real estate engineering leaders, readiness hinges on the ability to digitize, OCR (Optical Character Recognition), and structure decades of historical, paper-based portfolio data.

Advertising and Marketing

Heading into 2026, the advertising and ad-tech sector is prioritizing performance outcomes over platform vanity metrics. With the deprecation of third-party cookies and shifting privacy landscapes, marketing directors rely heavily on AI for real-time, identity-powered marketing and predictive customer lifetime value (LTV) modeling. AI agents optimize automated bidding ecosystems and cross-screen engagement, dynamically shifting media budgets to maximize impact based on real-time attention signals. Readiness in ad-tech requires highly resilient data clean rooms and low-latency decision engines capable of evaluating millions of programmatic bids per second, often supported by proactive AI rather than passive dashboards.

Gaming and High-Tech Software

In the gaming industry, AI-native development is revolutionizing traditional production workflows. Generative AI systems are used to procedurally design levels, animate characters, compose adaptive music, and dynamically tune difficulty curves in real time to match player skill. For B2B software and high-tech providers, AI is embedded directly into the Software Development Life Cycle (SDLC), reducing software development time by up to 55% through automated code generation, regression testing, and continuous integration. Engineering readiness here is defined by the seamless integration of AI coding assistants into the developer's Integrated Development Environment (IDE).

Education and Learning Management Systems (LMS)

Modern LMS platforms have shifted entirely from static course distribution to AI-driven, highly personalized learning at scale. AI agents integrated into educational platforms monitor learner progress in real-time, curate targeted supplementary resources, and proactively address individual skill gaps through automated content generation and adaptive delivery engines. Readiness for an LMS provider requires a robust data taxonomy capable of mapping granular skills to dynamic content modules instantly.

Healthcare

Healthcare networks and life sciences organizations prioritize risk management, utilizing AI for clinical decision support, medical imaging analysis, and administrative workflow automation. Due to the highly sensitive nature of protected health information (PHI), engineering leaders must prioritize interoperability across fragmented Electronic Health Records (EHRs). Furthermore, they should consider federated learning, which trains AI models on distributed data without centralizing patient records, as one way to protect patient privacy and support strict HIPAA compliance. Readiness is heavily dependent on the generation and utilization of synthetic datasets for safe model training.

Telecom

The telecommunications sector is highly focused on utilizing AI for cybersecurity and network optimization. AI is deployed to predict network outages, optimize routing protocols, and defend against sophisticated cyber-attacks. Telecom CTOs must ensure their infrastructure is capable of handling massive telemetry data streaming from edge devices and cell towers in real-time.

The ROI vs. Feasibility Framework

To determine which of these use cases to pursue, CTOs must utilize a rigorous evaluation framework. Initiatives should be mapped on a strategic matrix plotting Business Value (ROI) against Technical Feasibility (Data readiness, security, and infrastructure capabilities).

| Strategic Category | Defining Characteristics | CTO Execution Strategy |
|---|---|---|
| High ROI, High Feasibility | Well-structured data already exists, clear KPIs are established, and regulatory risk is low (e.g., internal IT support ticket routing, standard SDLC code generation). | Immediate Execution: Prioritize these initiatives as immediate quick wins. Demonstrating fast, measurable value builds executive trust and secures future enterprise funding. |
| High ROI, Low Feasibility | Massive potential business value, but underlying data is siloed, or the required compute infrastructure is currently lacking (e.g., predictive global supply chain modeling, autonomous financial trading algorithms). | Strategic Investment: Do not deploy AI models yet. Initiate intensive data engineering projects to clean pipelines and modernize the infrastructure stack, making this feasible in 12-18 months. |
| Low ROI, High Feasibility | Simple to build and deploy, but solves a minor or non-existent business problem (e.g., a basic internal FAQ chatbot with historically low employee usage). | Deprioritize: Avoid wasting valuable engineering cycles on "AI theater." These projects create long-term technical debt and maintenance burdens without delivering true enterprise value. |
| Low ROI, Low Feasibility | Highly complex, poorly defined business problems paired with inaccessible or corrupted data. | Discard: Completely avoid. These are the exact projects that contribute to the 60% POC abandonment rate plaguing the industry. |
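The matrix above can be sketched as a small scoring helper. This is an illustration only: the 0-10 scales, the `THRESHOLD` cutoff, and the `Initiative` type are assumptions introduced here, not part of any published framework, and a real organization would calibrate its own scoring.

```python
from dataclasses import dataclass

# Hypothetical cutoff on a 0-10 scale; calibrate to your own portfolio.
THRESHOLD = 5.0

# Maps (high ROI?, high feasibility?) to the execution strategy from the table.
STRATEGIES = {
    (True, True): "Immediate Execution",
    (True, False): "Strategic Investment",
    (False, True): "Deprioritize",
    (False, False): "Discard",
}


@dataclass(frozen=True)
class Initiative:
    name: str
    roi_score: float          # estimated business value, 0-10
    feasibility_score: float  # data, security, and infrastructure readiness, 0-10


def classify(initiative: Initiative) -> str:
    """Return the quadrant strategy for one proposed AI initiative."""
    high_roi = initiative.roi_score >= THRESHOLD
    high_feasibility = initiative.feasibility_score >= THRESHOLD
    return STRATEGIES[(high_roi, high_feasibility)]


if __name__ == "__main__":
    backlog = [
        Initiative("IT ticket routing", roi_score=7, feasibility_score=8),
        Initiative("Supply chain forecasting", roi_score=9, feasibility_score=3),
        Initiative("Internal FAQ chatbot", roi_score=2, feasibility_score=9),
    ]
    for item in backlog:
        print(f"{item.name}: {classify(item)}")
```

Scoring every proposal the same way makes the prioritization discussion with the CFO and business heads about the inputs rather than about individual pet projects.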

Data, Security, and Governance: The Immutable Foundation

An enterprise cannot, under any circumstances, be considered ready for AI if its data architecture is fragmented and its governance policies are reactive. As McKinsey analysis emphatically indicates, organizations frequently spend 60% to 70% of their total AI project timelines solely on data preparation and sanitization.

Building an AI-Ready Data Foundation

AI models, whether generative large language models (LLMs) or predictive machine learning algorithms, are entirely dependent on the quality, accuracy, and formatting of their inputs. CTOs must evaluate data readiness across several critical dimensions:

- Data Centralization and Interoperability: Is the enterprise data siloed across legacy on-premises servers, disparate cloud environments, and isolated SaaS applications? AI requires a unified, accessible data fabric. Data must be made available via stable, well-documented APIs or centralized in modernized, highly governed data lakes and warehouses.

- Data Quality and Integrity: AI models trained on flawed, inconsistent, or duplicated data will inevitably produce hallucinations and inaccurate insights, directly undermining executive and consumer confidence. Data engineering teams must implement robust, automated pipelines that clean, standardize, and enrich data continuously. If the data is garbage, the AI will simply act as a highly efficient garbage amplifier.

- The Proprietary Data Advantage: Generic AI models trained on publicly available internet data yield generic, easily replicable business results. The true, transformative ROI of enterprise AI comes from grounding foundation models in unique, proprietary data assets—such as decades of historical customer interactions, proprietary codebase repositories, or highly specialized operational telemetry. For many B2B companies, this is also the core of their moat, and aligns directly with how modern AI moats are built.

- Synthetic Data Utilization: By 2029, industry analysts project that an astonishing 77% of the data used to train LLMs will be synthetic. Organizations must develop internal capabilities to generate highly accurate synthetic datasets. This allows data science teams to train models on realistic, edge-case scenarios without ever exposing sensitive Personally Identifiable Information (PII) or risking a data breach.
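To make the data quality point concrete, here is a minimal sketch of a cleaning step that standardizes and deduplicates records before they reach a model. The record shape (`email`, `name`) and the validity rule are assumptions chosen for illustration; production pipelines typically run on a data platform rather than in plain Python.

```python
def normalize(record: dict) -> dict:
    """Trim whitespace, lowercase the email, and collapse repeated spaces in the name."""
    return {
        "email": record.get("email", "").strip().lower(),
        "name": " ".join(record.get("name", "").split()),
    }


def clean(records: list[dict]) -> list[dict]:
    """Standardize records, drop invalid rows, and remove duplicates."""
    seen = set()
    cleaned = []
    for raw in records:
        rec = normalize(raw)
        if "@" not in rec["email"]:
            continue  # fails a deliberately basic validity check
        if rec["email"] in seen:
            continue  # duplicate, keyed on the normalized email
        seen.add(rec["email"])
        cleaned.append(rec)
    return cleaned


if __name__ == "__main__":
    rows = [
        {"email": " Ann@Example.com ", "name": "Ann   Lee"},
        {"email": "ann@example.com", "name": "Ann Lee"},
        {"email": "not-an-email", "name": "Bob"},
    ]
    print(clean(rows))
```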

AI Security and Advanced Threat Modeling

The deployment of AI, particularly autonomous agentic systems that can execute code or authorize transactions, vastly expands the corporate attack surface. As highlighted earlier, AI cybersecurity spending is surging at a 73.9% CAGR precisely because organizations recognize the severity of these unprecedented threat vectors.

Engineering leaders must implement strict zero-trust security frameworks specifically tailored for artificial intelligence. This involves implementing rigorous access controls, enforcing the principle of least privilege across all service accounts, and ensuring that sensitive data fields are tokenized, anonymized, or fully encrypted both at rest and in transit.
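As one illustration of field-level tokenization, the sketch below replaces sensitive values with keyed HMAC-SHA256 tokens using only the Python standard library. Note that this is keyed pseudonymization, not encryption: tokens are stable (so joins still work) but cannot be reversed. The field names and the key handling are assumptions; in practice the key would come from a secrets manager.

```python
import hashlib
import hmac


def tokenize(value: str, key: bytes) -> str:
    """Return a stable, non-reversible token for a sensitive value."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def protect(record: dict, sensitive_fields: set, key: bytes) -> dict:
    """Tokenize only the listed fields and leave everything else untouched."""
    return {
        field: tokenize(str(value), key) if field in sensitive_fields else value
        for field, value in record.items()
    }


if __name__ == "__main__":
    demo_key = b"demo-only-key"  # hypothetical; load from a secrets manager in practice
    row = {"customer_id": 42, "email": "jane@example.com", "plan": "enterprise"}
    print(protect(row, {"email"}, demo_key))
```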

CTOs must conduct rigorous, ongoing threat modeling against AI-specific vulnerabilities. These include:

- Prompt Injection Attacks: Malicious actors manipulating the input to force an LLM to execute unauthorized commands or reveal underlying system prompts, which is why many enterprises now deploy an AI firewall layer between users and models.

- Model Inversion and Data Extraction: Attackers reverse-engineering the model to extract the sensitive proprietary data it was trained on.

- Training Data Poisoning: The intentional introduction of corrupted data into the training pipeline to degrade the model's accuracy or introduce intentional backdoors.

Furthermore, organizations must rigorously guard against "Shadow AI": the unsanctioned use of public, consumer-grade generative AI tools by well-meaning employees. Shadow AI routinely leads to the accidental, irreversible leakage of proprietary intellectual property and sensitive client data into public model training sets.
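One common mitigation is to scrub outbound prompts before they leave the corporate boundary. The sketch below is a deliberately simple, regex-based redactor; the three patterns (and especially the `SECRET` key format) are hypothetical examples, and a production filter would need far broader coverage than this.

```python
import re

# Illustrative patterns only; real deployments need a much larger, tested set.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "SECRET": re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{16,}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and report which kinds were found."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


if __name__ == "__main__":
    text = "Mail jane.doe@example.com, key sk-ABCDEFGHIJKLMNOP1234"
    print(redact(text))
```

Returning the list of findings alongside the scrubbed text lets a gateway log policy violations without storing the sensitive values themselves.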

AI Governance, Ethics, and Regulatory Compliance

Governance can no longer be viewed as a post-deployment afterthought or a secondary compliance checklist; it must be embedded directly into the software development lifecycle from day one. With the enforcement of comprehensive regulations like the EU AI Act and the growing adoption of frameworks such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework, organizations face immense legal, financial, and reputational risks if their AI systems act in biased, opaque, or harmful ways.

A robust, enterprise-grade AI governance framework requires several operational pillars:

- Stringent Risk Classification: Organizations must categorize all proposed AI initiatives by risk level (low, medium, high, critical). High-risk systems—such as those making automated financial credit decisions, filtering employment resumes, or delivering medical diagnoses—require mandatory, stringent human-in-the-loop oversight and highly rigorous audit trails.

- Transparency and Explainability: Stakeholders, users, and regulatory bodies must be able to understand precisely how an AI model arrived at a specific decision. Decision logs must be entirely immutable, and the specific archiving parameters (including which version of the model and what exact data was used at the time of inference) must be strictly maintained for forensic auditing.

- Bias Detection and Fairness Testing: AI models must be continuously tested against diverse, highly representative datasets to ensure they do not produce discriminatory outcomes based on race, gender, age, or other protected classes. If a model exhibits drift, engineers must have automated pipelines ready for rapid retraining.

- Incident Response and Rollback Plans: Organizations must possess a documented, heavily tested AI incident response protocol. Security teams must be prepared to rapidly contain and roll back models that exhibit severe performance drift, dangerous hallucinations, or active security breaches while running in production.
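The decision-log requirement above can be approximated with a hash-chained, append-only log. This is a minimal sketch, not a compliance-grade implementation: it makes tampering detectable rather than impossible, and the field names (`model_version`, `input_digest`, `risk_level`) are assumptions. A production system would add write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    """Hash a log entry deterministically by serializing with sorted keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, model_version: str, input_digest: str, decision: str, risk_level: str) -> dict:
        entry = {
            "model_version": model_version,
            "input_digest": input_digest,
            "decision": decision,
            "risk_level": risk_level,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)  # digest is computed before the hash key exists
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain and return False if any entry was altered."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Recording the model version and a digest of the inputs with every decision is what makes later forensic reconstruction possible.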

Infrastructure Requirements for Scaling Enterprise AI

While business strategy, ethical alignment, and data quality guide the theoretical AI roadmap, the physical and digital infrastructure dictates whether the organization can actually execute it in reality. The profound computational intensity of AI training and real-time inference places unprecedented strain on traditional corporate IT environments. Engineering leaders must architect a technology stack that flawlessly balances compute performance, cost efficiency, data security, and elastic scalability.

Firms that require a tailored tech advantage and rapid agile deployment, such as Baytech Consulting, demonstrate that delivering enterprise-grade quality in custom software development and application management hinges entirely on a deeply integrated, highly optimized stack. The architectural choices made at the infrastructure layer will unconditionally dictate the success or failure of the entire AI initiative.

The Rise of Edge AI and Bare-Metal Orchestration

While public cloud providers (hyperscalers) absolutely dominate the massive compute clusters required for foundation model training, enterprise inference—the actual day-to-day execution and querying of AI models—is increasingly shifting toward hybrid and edge deployments. Executing AI processing close to the data source drastically reduces network latency, significantly lowers bandwidth transmission costs, and ensures strict compliance with data sovereignty and security regulations.

To achieve this level of operational agility, engineering teams require highly efficient virtualization and advanced container orchestration. Kubernetes has firmly established itself as the de facto platform standard for scaling MLOps (Machine Learning Operations). It allows development teams to scale machine learning workloads horizontally across nodes, manage highly coveted GPU resources dynamically, and encapsulate the immense complexity of distributed data storage through native constructs like PersistentVolumes and StorageClasses.
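As a rough illustration of how GPU scheduling and persistent storage appear in a workload definition, the sketch below builds a Pod manifest as a Python dictionary and serializes it to JSON, which Kubernetes accepts alongside YAML. It assumes the NVIDIA device plugin is installed so that the `nvidia.com/gpu` resource is schedulable; the pod name, image, mount path, and claim name are all hypothetical.

```python
import json


def inference_pod(name: str, image: str, gpus: int, claim_name: str) -> dict:
    """Build a Pod manifest that requests GPUs and mounts a PersistentVolumeClaim."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": "inference",
                    "image": image,
                    # GPUs are requested as an extended resource under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                    "volumeMounts": [{"name": "models", "mountPath": "/models"}],
                }
            ],
            "volumes": [
                {
                    "name": "models",
                    "persistentVolumeClaim": {"claimName": claim_name},
                }
            ],
        },
    }


if __name__ == "__main__":
    manifest = inference_pod(
        name="llm-inference",                           # hypothetical
        image="registry.example.com/llm-server:1.0",    # hypothetical
        gpus=1,
        claim_name="model-weights",                     # hypothetical PVC
    )
    print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and applied with `kubectl apply -f`, provided the referenced claim already exists in the namespace.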

However, running Kubernetes efficiently on bare-metal infrastructure has traditionally been a highly complex endeavor. This is precisely where solutions like Harvester HCI and Rancher become critical enterprise differentiators. Harvester is an open-source, hyperconverged infrastructure (HCI) solution built natively on Kubernetes. It provides IT operators with a unified single pane of glass to manage both legacy Virtual Machines (VMs) alongside modern, containerized AI workloads. By utilizing local, direct-attached storage (via Longhorn) and completely eliminating the need for expensive, complex proprietary Storage Area Networks (SANs), Harvester significantly reduces the Total Cost of Ownership (TCO) for on-premises AI deployments.

When this agile orchestration is combined with secure network routing, load balancing, and firewall capabilities provided by pfSense on robust bare-metal environments like OVHCloud servers, CTOs can confidently deploy a highly secure, high-performance, and cost-effective foundation for running sensitive, proprietary AI models on dedicated, self-managed hardware, isolated from the shared tenancy of public cloud platforms.

The Database Evolution: Native Vector Search Capabilities

To enable advanced, high-value AI capabilities such as Retrieval-Augmented Generation (RAG), a technique where the application retrieves relevant proprietary corporate documents and supplies them to the LLM so that its answers are grounded in factual sources and hallucinations are reduced, the underlying database architecture must support high-dimensional vector embeddings. In many cases, this is implemented as an AI sidecar pattern that wraps your existing systems rather than replacing them.

Historically, achieving this required engineering teams to spin up separate, specialized vector databases. This architectural choice introduced highly complex ETL (Extract, Transform, Load) pipelines, severe data synchronization and consistency issues, and massive operational overhead. In 2026, the paradigm has shifted decisively toward integrating vector search directly into the core relational database management systems that enterprises already trust and operate.
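The retrieval step at the heart of RAG can be sketched in a few lines. The example below uses hand-written three-dimensional vectors in place of real embeddings and a plain in-memory list in place of a database, purely to show the mechanics; the corpus, the vectors, and the prompt wording are all made up for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: list[float], corpus: list[dict], k: int = 2) -> list[str]:
    """Return the text of the k documents most similar to the query vector."""
    ranked = sorted(
        corpus,
        key=lambda doc: cosine_similarity(query_vec, doc["embedding"]),
        reverse=True,
    )
    return [doc["text"] for doc in ranked[:k]]


def build_prompt(question: str, passages: list[str]) -> str:
    """Assemble a grounded prompt that restricts the model to retrieved context."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Toy corpus with hand-made embeddings; a real system would call an embedding model.
CORPUS = [
    {"text": "Refund policy: 30 days", "embedding": [0.9, 0.1, 0.0]},
    {"text": "Office hours: 9 to 5", "embedding": [0.0, 1.0, 0.0]},
    {"text": "Returns need a receipt", "embedding": [0.8, 0.0, 0.6]},
]

if __name__ == "__main__":
    passages = retrieve([1.0, 0.0, 0.0], CORPUS)
    print(build_prompt("What is the refund window?", passages))
```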

Postgres and pgvector

For environments heavily invested in robust open-source stacks, Postgres remains an absolute powerhouse. The introduction of the pgvector extension, further augmented by advanced technologies like pgvectorscale, entirely transforms Postgres into a high-performance vector database. It supports sophisticated algorithms like StreamingDiskANN (implemented efficiently in Rust) and Statistical Binary Quantization (SBQ). This allows Postgres to handle tens of millions of high-dimensional embeddings with sub-100 millisecond query latency and massive request throughput. Most importantly, this architecture allows the original text documents and their mathematical vector embeddings to live in the exact same table, ensuring complete transactional ACID compliance without the nightmare of maintaining a secondary, disjointed database.
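A minimal sketch of what the single-table pattern looks like with pgvector follows, with the SQL held in Python strings. The table and column names are hypothetical, the three-dimensional vector is a toy size, and the `%s` placeholders assume a psycopg-style driver. The index shown is pgvector's own HNSW type, used here as a stand-in rather than the pgvectorscale index described above. `<=>` is pgvector's cosine distance operator.

```python
# Schema: source text and its embedding live in the same row.
SCHEMA_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS documents (
    id bigserial PRIMARY KEY,
    body text NOT NULL,
    embedding vector(3)  -- toy dimension; real models use hundreds or thousands
);
CREATE INDEX IF NOT EXISTS documents_embedding_idx
    ON documents USING hnsw (embedding vector_cosine_ops);
"""

# Nearest-neighbor search ordered by cosine distance (smaller is more similar).
SEARCH_SQL = """
SELECT id, body, embedding <=> %s::vector AS cosine_distance
FROM documents
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def to_vector_literal(values) -> str:
    """Format a sequence of numbers as pgvector's text form, e.g. '[1.0,0.5,0.0]'."""
    return "[" + ",".join(repr(float(v)) for v in values) + "]"


def search_params(query_embedding, limit: int = 5) -> tuple:
    """Build the parameter tuple for SEARCH_SQL (the vector is passed twice)."""
    literal = to_vector_literal(query_embedding)
    return (literal, literal, limit)


if __name__ == "__main__":
    print(SEARCH_SQL.strip())
    print(search_params([1, 0.5, 0]))
```

With a psycopg connection this would be run as `cursor.execute(SEARCH_SQL, search_params(embedding))`; because the text and vectors share a table, inserts and updates to both happen in one transaction.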

SQL Server 2025 Native Vector Support

For large enterprises deeply integrated into the Microsoft technology ecosystem, the release of SQL Server 2025 represents a revolutionary leap forward. Moving far beyond the clunky external machine learning services of older versions, the 2025 engine introduces a native VECTOR data type and built-in vector distance search functions utilizing DiskANN indexing.

Using standard, familiar T-SQL commands, database developers can now call functions like AI_GENERATE_EMBEDDINGS to securely pass structured or unstructured data to internal or external models (such as Azure OpenAI services or locally hosted, private Ollama instances) and store the resulting vectors directly alongside traditional business data. This allows a single T-SQL query to perform complex semantic searches, finding concepts that share meaning rather than just exact keyword matches. The result is a drastically simpler enterprise architecture with far less data movement between systems, while maintaining the enterprise-grade security, auditing, and role-based access controls inherent to SQL Server.

Engineering Skills, Training, and AI-Augmented Workflows

The most sophisticated infrastructure and pristine data lakes in the world cannot compensate for an engineering workforce that lacks fundamental AI fluency. The comprehensive Deloitte 2026 State of AI report explicitly identifies the AI skills gap as the single biggest barrier to successful enterprise integration, outranking even technical limitations. Furthermore, survey data reveals that in only 14% of low-maturity organizations do business units actually trust new AI solutions and feel technically ready to use them in their daily workflows.

CTOs must drive a profound cultural shift that views AI not as a threat to job security, but as an indispensable augmentation of human capability. This requires comprehensive organizational change management and a highly structured, rigorous approach to engineering upskilling.

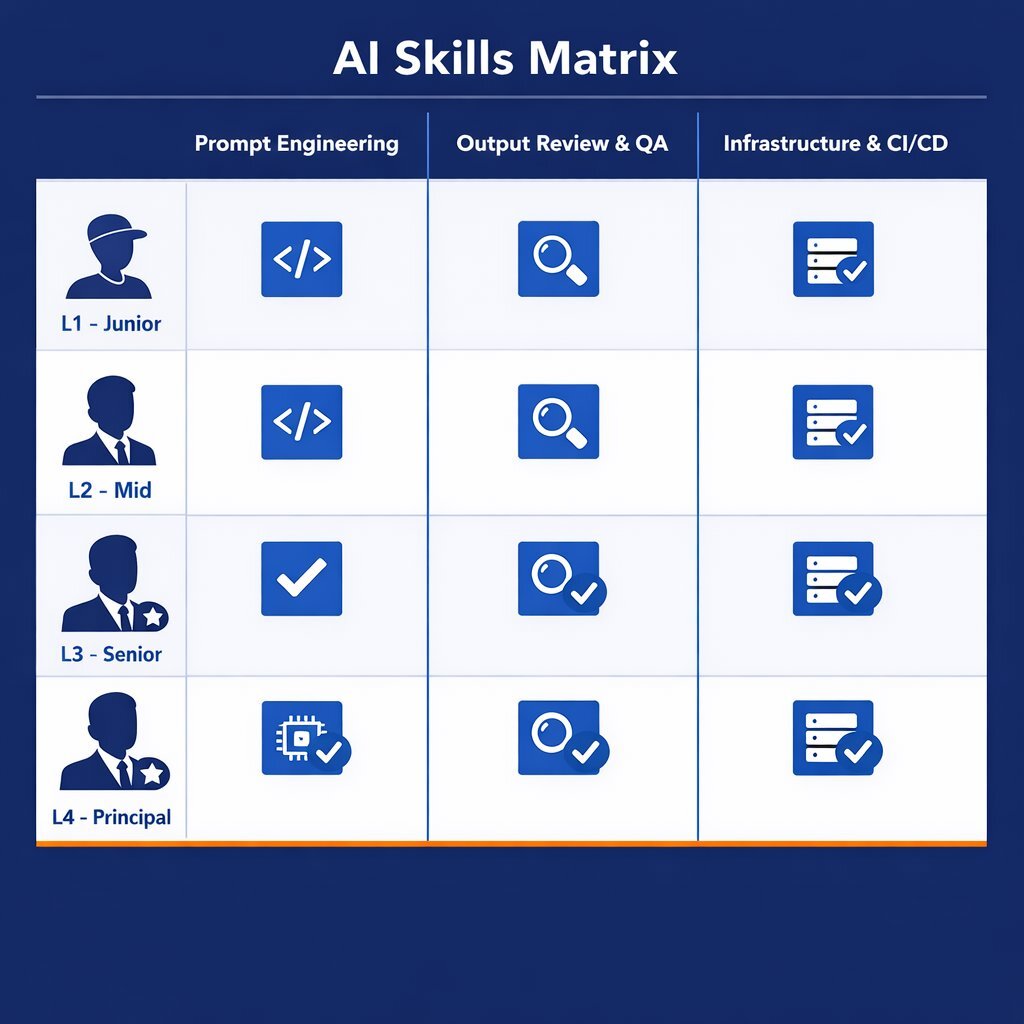

The Engineering AI Skills Matrix

To effectively operationalize this training, engineering leaders should map their development and operations teams against a structured AI Skills Matrix. This ensures that every software developer, technical writer, QA tester, and DevOps engineer explicitly understands the expectations and required competencies at their respective career levels:

| Competency Level | Prompt Engineering & Development | Output Review & QA Validation | Infrastructure & CI/CD Workflows |
|---|---|---|---|
| L1 (Junior/Associate) | Uses approved AI coding assistants for basic drafting and code completion. Writes clear, single-shot prompts with explicitly defined constraints. | Detects obvious model hallucinations, missing context, and syntax errors. Validates all outputs against core documentation and source truth. | Understands Git basics and PR workflows. Ensures no sensitive PII, passwords, or API keys are ever placed into public AI prompts. |
| L2 (Mid-Level) | Utilizes advanced few-shot prompting techniques and builds reusable, version-controlled prompt libraries. Integrates AI to improve cycle time without quality loss. | Builds comprehensive editing checklists and automated verification workflows to catch subtle logic errors or security flaws in AI-generated code. | Owns CI/CD documentation workflows. Implements automated linting and security checks specifically designed to scan AI outputs. |
| L3 (Senior) | Builds complex prompt frameworks for entire engineering teams. Measures prompt effectiveness using structured evaluation sets and regression testing. | Creates team-wide QA frameworks, anomaly detection pipelines, and sophisticated regression testing suites for AI-assisted features and dynamic flows. | Defines the core documentation infrastructure. Integrates AI directly into deployment pipelines and manages vector database schemas. |
| L4 (Principal/Lead) | Drives the overarching AI tooling strategy across the entire product and engineering organization, ensuring alignment with enterprise architecture. | Owns enterprise-wide evaluation scorecards, quality thresholds, and risk mitigation policies, acting as the final gatekeeper for production deployment. | Architects autonomous Agentic DevOps workflows, rigorously evaluating cloud vs. bare-metal compute tradeoffs and long-term scaling strategies. |
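The L3 expectation of measuring prompts with "structured evaluation sets and regression testing" can be sketched as a small harness. Everything here is illustrative: `fake_model` is a stand-in for a real model call, and the keyword-containment check is a deliberately simple scoring rule that real teams would replace with richer graders.

```python
def run_eval(generate, eval_set: list[dict]) -> tuple[float, list[dict]]:
    """Run every case through `generate` and report the pass rate plus failures.

    generate: callable taking a prompt string and returning the model's text.
    eval_set: cases with a 'prompt' and a list of 'must_contain' terms.
    """
    failures = []
    for case in eval_set:
        output = generate(case["prompt"]).lower()
        missing = [term for term in case["must_contain"] if term.lower() not in output]
        if missing:
            failures.append({"prompt": case["prompt"], "missing": missing})
    pass_rate = 1 - len(failures) / len(eval_set) if eval_set else 0.0
    return pass_rate, failures


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; returns canned text for illustration only."""
    canned = {"Summarize the refund policy.": "Refunds are accepted within 30 days."}
    return canned.get(prompt, "")


# Hypothetical evaluation set, versioned alongside the prompts it guards.
EVAL_SET = [
    {"prompt": "Summarize the refund policy.", "must_contain": ["30 days"]},
    {"prompt": "List supported regions.", "must_contain": ["EU"]},
]

if __name__ == "__main__":
    rate, failed = run_eval(fake_model, EVAL_SET)
    print(f"pass rate: {rate:.0%}")
    for failure in failed:
        print("FAILED:", failure)
```

Running the same evaluation set in CI whenever a prompt or model version changes turns prompt quality into a regression signal rather than a matter of opinion.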

Beyond technical upskilling, executive leadership must explicitly train managers on the ethical use of AI in day-to-day operations and team leadership. For instance, when utilizing AI tools to draft performance reviews or analyze one-on-one coaching notes, leaders must be trained to meticulously separate factual performance data from AI-generated assumptions or interpretations, ensuring that human managers maintain ultimate accountability and empathy for all personnel decisions.

AI-Augmented Development and "Agentic DevOps"

An AI-ready infrastructure also comprehensively encompasses the specific tools used by the software engineers building the applications. Development environments must be modernized to incorporate AI coding assistants securely and natively.

The deep integration of GitHub Copilot with Azure DevOps On-Prem and industry-standard Integrated Development Environments (IDEs) like VS Code and VS 2022 is fundamentally transforming the Software Development Life Cycle (SDLC). In 2026, this integration has evolved beyond simple autocomplete into the era of "Agentic DevOps." Engineers can now utilize natural language conversational interfaces directly inside VS Code to interact with the Azure DevOps MCP (Model Context Protocol) server. This allows AI agents to autonomously read user stories, write the corresponding code, automatically generate pull requests (PRs), provision necessary Azure resources, and update Kanban boards.

This level of integration reduces developer context switching by up to 70% and speeds up agile deployment by automating tedious boilerplate coding and preliminary testing. Furthermore, secure enterprise collaboration platforms (such as Microsoft 365, Teams, OneDrive, and Google Drive) help the cross-functional teams managing these complex AI lifecycles stay aligned, secure, and communicative regardless of their geographic location.

Defining Success: Metrics, KPIs, and the ROI Dashboard

The era of funding speculative AI science projects simply for the sake of technological innovation is definitively over. In 2026, executive boards and shareholders demand strict financial accountability and demonstrable returns. A primary reason organizations struggle to scale AI effectively is the inability to correlate AI maturity with tangible, irrefutable business impact.

Defining success requires establishing robust, ungameable metrics before a single line of code is written or a compute cluster is provisioned. Engineering leaders must differentiate between, and relentlessly track, three distinct layers of Return on Investment:

- Hard ROI (Direct Financial Impact): These are direct, measurable changes to the bottom line that the CFO cares about. This includes metrics like Customer Acquisition Cost (CAC) reduction, Monthly Recurring Revenue (MRR) growth, tangible reductions in cloud computing storage costs, and the precise percentage of customer support tickets resolved entirely without human intervention.

- Soft ROI (Operational Efficiency): These represent improvements in workflow velocity, employee productivity, and risk mitigation. Key Performance Indicators (KPIs) here include the reduction in software development cycle times, increased pull request merge rates, the aggregate number of hours saved per employee weekly, and the measured reduction in manual data entry errors.

- Strategic ROI (Systemic Agility): This defines the long-term value of building a scalable, future-proof architecture. This is measured by the organization's ability to launch new AI features rapidly, the flexibility and reusability of data pipelines, and the degree to which modern AI infrastructure reduces legacy technical debt over time.
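The first two layers can be reduced to simple arithmetic once the inputs are agreed upon. The following is a minimal sketch, not a prescribed method: the `MonthlyRoiInputs` fields, the function names, and the sample figures are all illustrative assumptions. It treats hard ROI as net gain divided by total cost (compute plus human oversight) and prices soft ROI by multiplying hours saved by a loaded hourly rate. Strategic ROI is left out because it rarely collapses into a single number.

```python
from dataclasses import dataclass


@dataclass
class MonthlyRoiInputs:
    """Hypothetical monthly inputs; every field name is an assumption."""
    revenue_gain: float        # hard ROI: added revenue attributed to AI
    cost_savings: float        # hard ROI: e.g. reduced support or storage spend
    hours_saved: float         # soft ROI: total employee hours saved
    loaded_hourly_rate: float  # cost of one employee hour
    compute_spend: float       # inference, training, and API spend
    oversight_cost: float      # human-in-the-loop review cost


def total_cost(x: MonthlyRoiInputs) -> float:
    """Total monthly cost of running the AI initiative."""
    return x.compute_spend + x.oversight_cost


def hard_roi(x: MonthlyRoiInputs) -> float:
    """Net financial gain divided by total cost, as a fraction."""
    cost = total_cost(x)
    if cost <= 0:
        raise ValueError("total cost must be positive")
    return (x.revenue_gain + x.cost_savings - cost) / cost


def soft_roi_value(x: MonthlyRoiInputs) -> float:
    """Monetary value of hours saved; an estimate, not realized cash."""
    return x.hours_saved * x.loaded_hourly_rate


# Illustrative figures only, not measured results.
example = MonthlyRoiInputs(
    revenue_gain=60_000,
    cost_savings=20_000,
    hours_saved=500,
    loaded_hourly_rate=80,
    compute_spend=42_000,
    oversight_cost=8_000,
)
print(f"Hard ROI: {hard_roi(example):.0%}")
print(f"Soft ROI value: ${soft_roi_value(example):,.0f}/mo")
```

Keeping the soft figure separate from the hard one avoids presenting estimated time savings to the CFO as if they were realized cash.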

| Financial Health | Operational Velocity | System Performance |
|---|---|---|
| MRR Impact: +12.4% | PR Merge Rate: +15.0% | Vector Query: 45ms |
| Support Cost: -22.1% | Code Authored: 41.0% | Uptime: 99.9% |
| Compute Spend: $42k/mo | Hours Saved/Wk: 5.4 hrs | API Latency: 112ms |
| Token Usage: 1.2B/mo | Anomaly Alerts: 0 Active | Drift Score: 0.02 |

To make these metrics actionable rather than theoretical, organizations must deploy real-time KPI dashboards. Modern Business Intelligence (BI) dashboards must utilize live, secure data connections to data warehouses and operational databases (like Postgres or SQL Server) to visualize metrics dynamically. Integrating AI-driven anomaly detection directly into these dashboards allows engineering leaders to instantly identify sudden, unexpected drops in conversion rates, massive spikes in infrastructure token costs, or gradual model drift, enabling proactive course correction before minor issues escalate into catastrophic failures. For many teams, cutting runaway compute and API bills also means deliberately addressing the token tax baked into large-scale LLM usage.
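One simple way to flag a sudden spike in a dashboard metric is a trailing-window z-score. The sketch below is only one possible approach, not the method any particular BI product uses: the function name `find_anomalies`, the 7-point window, the threshold of 3 standard deviations, and the sample cost series are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_anomalies(values, window=7, threshold=3.0):
    """Return indices whose value deviates from the mean of the
    preceding `window` points by more than `threshold` sample
    standard deviations."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily token spend in dollars; day 8 contains a spike.
daily_token_cost = [1400, 1420, 1390, 1410, 1405, 1395, 1415, 1400, 2900, 1410]
print(find_anomalies(daily_token_cost))  # -> [8]
```

A trailing window catches abrupt spikes but adapts to slow trends, so gradual model drift typically needs a separate check against a fixed baseline.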

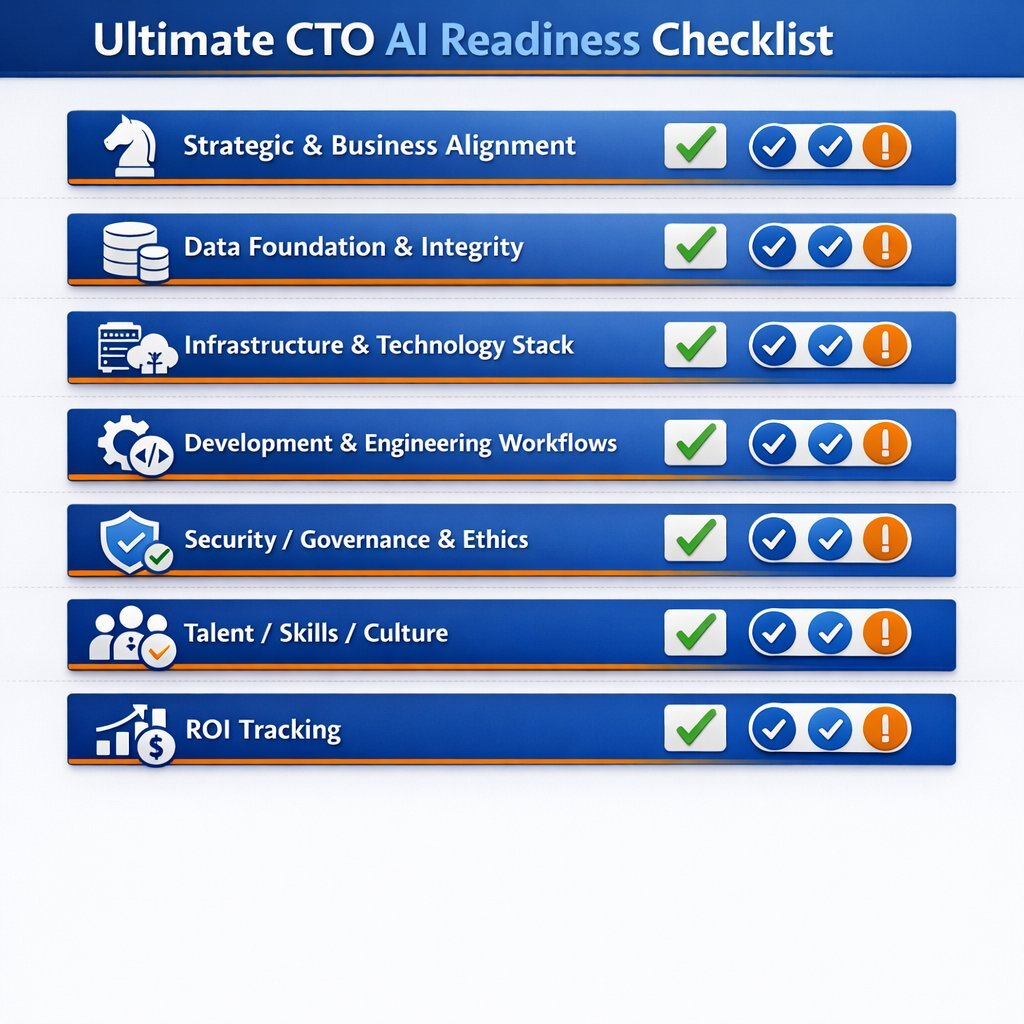

The Ultimate CTO AI Readiness Checklist

Synthesizing the strategic, technical, infrastructural, and cultural requirements outlined throughout this report, the following master checklist provides a definitive, unyielding framework for CTOs to evaluate their enterprise's true AI readiness.

| Readiness Dimension | Critical Evaluation Checkpoints | Status / Required Action |
|---|---|---|
| 1. Strategic & Business Alignment | - Executive alignment exists on specific, mathematically measurable business problems AI will solve. - High-ROI, highly feasible use cases have been prioritized over generic, low-value chatbots. - A dedicated, empowered cross-functional AI task force (IT, Legal, Finance, Operations) is actively governing initiatives. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 2. Data Foundation & Integrity | - Enterprise data is centralized, scrubbed, and accessible via secure, documented APIs. - Proprietary data assets are clearly identified to train models for unique competitive advantage. - Data lineage, strict retention schedules, and synthetic data generation strategies are operationalized. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 3. Infrastructure & Technology Stack | - Bare-metal or hybrid infrastructure (e.g., Harvester HCI, Kubernetes, Docker) is deployed to manage scalable AI compute locally. - Relational databases are modernized to support native vector embeddings (e.g., Postgres with pgvector, SQL Server 2025). - Edge security (pfSense on OVHCloud) and network routing are robust enough for high-volume, sensitive token transit. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 4. Development & Engineering Workflows | - AI coding assistants (e.g., GitHub Copilot) are securely integrated into primary IDEs (VS Code/VS 2022). - CI/CD pipelines (Azure DevOps On-Prem) are configured for automated linting and testing of AI-generated code. - Collaboration platforms (M365, Teams, Google Drive) are utilized to ensure seamless communication across distributed agile teams. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 5. Security, Governance, & Ethics | - AI risk classification frameworks are enforced (strictly aligning with EU AI Act and NIST guidelines). - Zero-trust security, strict Role-Based Access Control (RBAC), and encryption protect against prompt injection and data exfiltration. - A rapid AI incident response plan and model rollback protocol is fully documented, tested, and ready for production emergencies. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 6. Talent, Skills, & Corporate Culture | - A comprehensive engineering AI skills matrix is implemented to guide training from junior developers to principal architects. - "Shadow AI" policies are explicitly clear, ensuring all employees know exactly which tools are safe for proprietary corporate data. - Change management plans are highly active to reduce employee anxiety regarding job displacement and foster a culture of AI augmentation. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
| 7. ROI Tracking & Performance Measurement | - Hard (Financial), Soft (Operational), and Strategic ROI metrics are defined and agreed upon prior to project launch. - Real-time KPI dashboards featuring anomaly detection are live to track model performance, uptime, and compute costs. - Human-in-the-loop oversight operational costs are accurately factored into the total project ROI calculation. | [ ] Fully Defined [ ] In Progress [ ] Critical Gap |
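Teams that want to track the checklist over time could record each dimension's status in a small structure and summarize it programmatically. The sketch below is one hypothetical way to do that. The `Status` enum mirrors the three status options in the table, but the `summarize` function and its rule that the organization counts as ready only when every dimension is Fully Defined are assumptions made here, not criteria stated by the checklist.

```python
from enum import Enum


class Status(Enum):
    FULLY_DEFINED = "Fully Defined"
    IN_PROGRESS = "In Progress"
    CRITICAL_GAP = "Critical Gap"


# The seven readiness dimensions from the checklist table.
DIMENSIONS = [
    "Strategic & Business Alignment",
    "Data Foundation & Integrity",
    "Infrastructure & Technology Stack",
    "Development & Engineering Workflows",
    "Security, Governance, & Ethics",
    "Talent, Skills, & Corporate Culture",
    "ROI Tracking & Performance Measurement",
]


def summarize(assessment: dict[str, Status]) -> dict:
    """Group dimensions by status. Assumed rule: the organization is
    'ready' only when every dimension is Fully Defined."""
    missing = [d for d in DIMENSIONS if d not in assessment]
    if missing:
        raise ValueError(f"unassessed dimensions: {missing}")
    gaps = [d for d in DIMENSIONS if assessment[d] is Status.CRITICAL_GAP]
    in_progress = [d for d in DIMENSIONS if assessment[d] is Status.IN_PROGRESS]
    return {
        "ready": not gaps and not in_progress,
        "critical_gaps": gaps,
        "in_progress": in_progress,
    }


# Hypothetical self-assessment for illustration.
assessment = {d: Status.FULLY_DEFINED for d in DIMENSIONS}
assessment["Data Foundation & Integrity"] = Status.CRITICAL_GAP
assessment["ROI Tracking & Performance Measurement"] = Status.IN_PROGRESS
print(summarize(assessment))
```

Raising an error for unassessed dimensions is deliberate: a dimension nobody evaluated should not silently count as ready.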

Conclusion

The frantic, gold-rush mentality to adopt artificial intelligence over the past two years has left countless organizations burdened with fragmented pilot programs, exorbitantly bloated cloud computing bills, and unacceptable security vulnerabilities. By 2026, the enterprises that are successfully dominating their respective markets are those that possessed the discipline to pause, evaluate, and ensure their foundational architecture was unequivocally solid.

True enterprise AI readiness is not achieved by simply purchasing an API key. It requires a holistic, uncompromising approach: an unwavering commitment to data hygiene, a modernized, containerized infrastructure capable of handling localized vector search and heavy inference workloads, a deeply secure and meticulously governed software development lifecycle, and a workforce comprehensively trained to collaborate alongside autonomous systems. For many teams, this also means moving from scattered scripts and SaaS tools to a coherent agentic enterprise strategy built around a coordinated “team” of AI agents.

Engineering leaders and CTOs who rigorously apply this readiness checklist will successfully transform artificial intelligence from a highly risky, speculative cost center into a sustainable, scalable catalyst for unprecedented enterprise value. By obsessively prioritizing resilient infrastructure, ethical governance, and mathematically measurable ROI, technology executives can confidently navigate the complex $4.7 trillion AI landscape, ensuring their organizations are not merely participating as spectators in the AI revolution, but actively leading it.

Frequently Asked Question

Q: How long does it actually take to transition an organization from isolated AI pilots to a fully scaled, enterprise-wide production deployment?

A: According to extensive market data and proven implementation frameworks, moving from a successful pilot to a fully scaled production environment typically requires a highly structured 12- to 18-month roadmap, assuming the foundational data readiness and infrastructure are already in place. This timeline lines up well with modern AI automation roadmaps that start with a few focused workflows and expand as trust and ROI grow.

The first 1 to 3 months must focus exclusively on organizational readiness, establishing governance frameworks, and conducting exhaustive data auditing. Months 4 through 6 involve launching 2 to 3 tightly scoped, highly feasible pilot projects with clear, measurable success metrics. Months 7 through 12 are dedicated to expanding those successful pilots, implementing robust MLOps (Machine Learning Operations) for continuous monitoring, and establishing a centralized Center of Excellence to guide further development. Beyond month 12, the organization enters the true transformational phase, deeply integrating AI into core proprietary workflows and finally realizing compounded, enterprise-wide ROI.

Further Insights

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- https://softwarestrategiesblog.com/2025/12/22/data-readiness-security-driving-ai-4-7-trillion/

- https://medium.com/@quocboy159/harnessing-vector-search-in-sql-server-2025-c59351c9241b

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.