Enterprise AI Implementation Plan: A 90-Day Roadmap for Leaders

April 20, 2026 / Bryan Reynolds

The Enterprise AI Implementation Plan: A 90-Day Digital Transformation Roadmap

In boardrooms across the globe, business leaders are grappling with a profound shift in technology. The enterprise artificial intelligence market is growing rapidly, from an estimated 24 billion dollars in 2024 to a projected 150 billion to 200 billion dollars by 2030, a compound annual growth rate exceeding 30%.

As this technology transitions from a consumer-facing novelty to mission-critical business infrastructure, executives consistently raise three critical questions: How long does it take to build an artificial intelligence solution? What are the precise phases of an AI project? And what should stakeholders expect in the foundational first month of engagement?

Despite the reality that 78% of global companies now utilize AI in their daily operations—a significant jump from 55% the previous year—a stark divide remains between successful deployments and costly, stalled experiments.

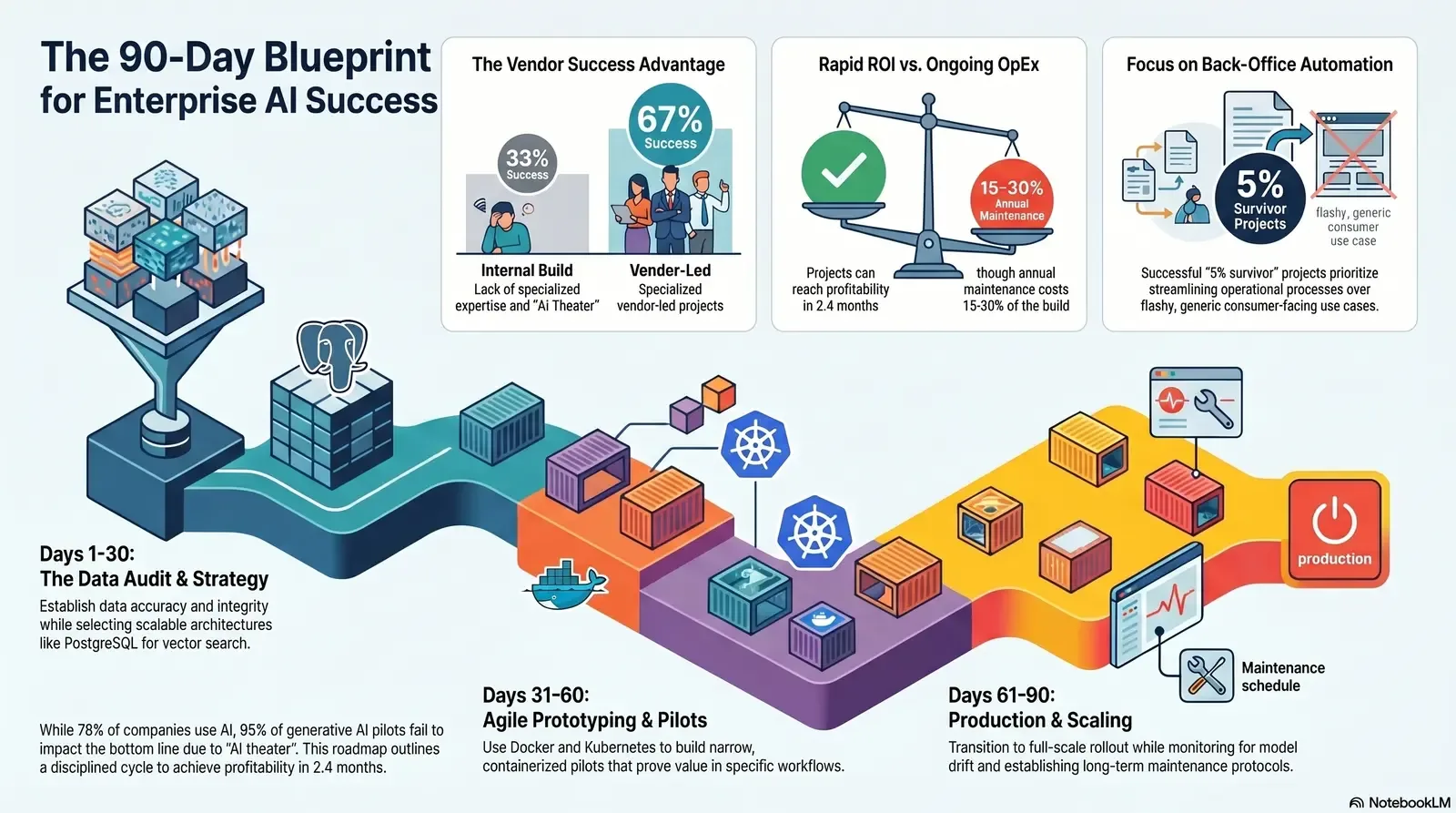

To navigate this complex landscape, organizations require a realistic, structured AI Implementation Plan. This exhaustive digital transformation roadmap details the complete software development lifecycle for artificial intelligence, breaking down the timeline into three critical phases: the Data Audit (Days 1-30), the Prototype and Pilot Program (Days 31-60), and Production and Refinement (Days 61-90). For leaders who want to pressure-test their readiness in more detail, the Ready, Set, Scale: CTO Checklist for Enterprise AI offers a deeper, step-by-step readiness framework.

By adopting a structured methodology, organizations can build trust among stakeholders and set transparent, achievable expectations. Firms specializing in custom software development and application management, such as Baytech Consulting, demonstrate that leveraging a tailored tech advantage alongside rapid Agile deployment is the definitive path to achieving enterprise-grade quality and on-time delivery.

The State of Enterprise AI: Economics, Adoption, and the "GenAI Divide"

Before exploring the technical roadmap, it is crucial to understand the macroeconomic realities and historical performance metrics of enterprise AI adoption. The integration of large language models (LLMs) and predictive algorithms is no longer theoretical; it is actively reshaping global commerce. For much of the past three years, the visible impact of AI was confined to consumer applications. The history of general-purpose technologies suggests, however, that massive economic value is created when firms translate underlying capabilities into scaled, specialized use cases.

The Illusion of Instant ROI and the 95% Failure Rate

The marketing hype surrounding artificial intelligence often leads strategic Chief Financial Officers and visionary Chief Technology Officers to expect instantaneous returns on investment. This expectation is fundamentally flawed. A comprehensive study released by MIT researchers revealed a sobering statistic: 95% of generative AI pilot programs fail to deliver a measurable impact on the profit and loss (P&L) statement.

This high failure rate does not indicate that the technology is ineffective; rather, it highlights a fundamental misalignment in execution. The vast majority of failed pilots suffer from an overemphasis on "AI theater"—flashy, generic use cases that lack workflow integration, rely on poor data quality, and fail to address specific, domain-level business problems. These projects are often launched without a clear software development lifecycle strategy, treating AI as a plug-and-play installation rather than a complex software engineering endeavor. Leaders who want to avoid these traps often start by reassessing data quality and governance, as outlined in Garbage In, Gold Out: How Data Readiness Unlocks Enterprise AI.

The 5% Survival Pattern: Workflow Integration and Vendor Partnerships

Conversely, the 5% of projects that survive and successfully scale reveal a distinct, repeatable pattern. These initiatives focus heavily on back-office automation, streamlining complex operational processes, reducing outsourcing dependencies, and directly cutting overhead costs.

Furthermore, the data indicates that deployment strategy is a massive determinant of success. Internal builds—where organizations attempt to develop an AI solution entirely from scratch using in-house resources—succeed only about 33% of the time.

Organizations that lack deep, in-house AI expertise frequently struggle with the specialized skills required in machine learning, deep learning, and MLOps (Machine Learning Operations). In stark contrast, specialized vendor-led projects boast a success rate of approximately 67%. For many SaaS and internal product teams, this is why they’re moving from one-off chat widgets to fully integrated copilots, as described in From Chat Widgets to Copilots: The SaaS AI Revolution.

Partnering with established custom software development firms provides access to highly skilled engineers who are already versed in managing complex application lifecycles. By leveraging an external firm's expertise, B2B executives mitigate risk, ensuring that the integration aligns deeply with existing day-to-day operations and core enterprise platforms. Mid-market companies leveraging these partnerships are noted for moving swiftly and decisively, with top performers reporting an average timeline of 90 days from the initial pilot to full production implementation.

Understanding the True Total Cost of Ownership

To build trust and set realistic expectations, the financial narrative surrounding the enterprise rollout must be entirely transparent. Deploying artificial intelligence involves significant upfront capital expenditures (CapEx) for infrastructure, software licensing, data architecture, and staff training.

However, the most frequently overlooked element of an AI implementation plan is the ongoing operational expenditure (OpEx).

Artificial intelligence systems require persistent maintenance, monitoring, and computational resources. The cost of computing, particularly cloud-based inference and training, is a critical issue that 70% of surveyed executives cite as a barrier to scaling successfully.

Across most implementations, the annual maintenance costs for an AI system typically equal 15% to 30% of the original build cost. For instance, a system requiring a 100,000 dollar initial development investment will likely demand an ongoing budget of 15,000 to 30,000 dollars annually. For cost-conscious teams, techniques like model routing, caching, and prompt optimization—covered in The Token Tax: Stop Paying More Than You Should for LLMs—are now part of standard financial planning.
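The arithmetic above can be sketched in a few lines of Python. Only the 15% to 30% range and the 100,000 dollar example come from the text; the function names and the flat multi-year projection (no inflation or discounting) are illustrative assumptions.

```python
def annual_maintenance_range(build_cost, low_rate=0.15, high_rate=0.30):
    """Return the (low, high) annual maintenance estimate for a given build cost."""
    return build_cost * low_rate, build_cost * high_rate


def total_cost_of_ownership(build_cost, years, rate):
    """Build cost plus a flat annual maintenance charge over `years`.

    Simplifying assumption: no inflation, discounting, or usage growth.
    """
    return build_cost + build_cost * rate * years


low, high = annual_maintenance_range(100_000)
print(f"Annual maintenance: {low:,.0f} to {high:,.0f} dollars")
print(f"3-year TCO at 15%: {total_cost_of_ownership(100_000, 3, 0.15):,.0f} dollars")
print(f"3-year TCO at 30%: {total_cost_of_ownership(100_000, 3, 0.30):,.0f} dollars")
```

Running the projection at both ends of the range gives finance teams a bracket rather than a single point estimate, which is usually the more honest way to present a forecast this early.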

When building the business case, strategic CFOs must balance these long-term costs against the demonstrable efficiencies gained. Fortunately, organizations that adhere to a disciplined implementation roadmap report substantial benefits: 66% achieve productivity and efficiency gains, 53% realize enhanced decision-making insights, and 40% report hard cost reductions. A well-structured business case—calculating current operational costs and multiplying them by volume and error rates to find the projected savings delta—frequently reveals a rapid time to positive ROI, sometimes reaching profitability within just 2.4 months after launch.

| Deployment Strategy | Success Rate | Primary Focus | Key Failure Risks |
|---|---|---|---|
| Internal Builds | ~33% | Flashy, generic use cases; "AI Theater" | Lack of in-house expertise; poor workflow integration; high infrastructure costs. |
| Vendor-Led Projects | ~67% | Back-office automation; domain-specific workflows | Overlooking ongoing maintenance costs; ignoring data governance. |

Phase 1: The Data Audit and Strategic Foundation (Days 1-30)

The foundation of any successful AI implementation plan is laid in the first thirty days. A common misconception among B2B buyers is that artificial intelligence can instantly ingest and organize chaotic, siloed enterprise data. In reality, machine learning models are entirely dependent on the quality, structure, and integrity of the underlying datasets. The adage "garbage in, garbage out" remains the defining rule of artificial intelligence; weak data quality and rigid legacy processes will derail an AI initiative long before the performance of the actual model comes into play.

Establishing Rigorous Data Quality Metrics

During the initial month of engagement, the primary objective is to execute an exhaustive data audit. Technology leaders, data architects, and engineers must collaborate to establish a clear data strategy that cuts across various modalities, including public, synthetic, licensed, and internal enterprise datasets. This is where a dedicated practice covering all major database systems is invaluable, because it ensures every critical source (CRM, ERP, line-of-business apps) is profiled and understood.

The audit process involves evaluating data sources against strict quality dimensions:

- Accuracy: Assessing the historical correctness of data values. Mislabeled data or incorrect historical records fundamentally degrade model training and lead to unreliable predictions.

- Completeness: Evaluating the percentage of data fields that are fully populated. Sparse datasets restrict the model's ability to generalize findings and draw accurate correlations across the enterprise.

- Consistency: Standardizing disparate data formats. For example, date strings, currency nomenclatures, and categorical tags must be standardized across all aggregated systems, particularly when pulling from disparate CRM and ERP modules.

- Integrity: Ensuring that data elements make logical sense when combined. The relationships between different data points must be coherent to allow the AI to extract valid insights.
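Two of the dimensions above, completeness and consistency, are straightforward to quantify. The following is a minimal sketch using only the Python standard library; the sample records, field names, and the choice of `YYYY-MM-DD` as the target date format are hypothetical and stand in for whatever a real audit would profile.

```python
from datetime import datetime

# Hypothetical sample records from a CRM export; field names are illustrative.
records = [
    {"customer_id": "C001", "signup_date": "2024-01-15", "region": "EMEA"},
    {"customer_id": "C002", "signup_date": "15/02/2024", "region": None},
    {"customer_id": "C003", "signup_date": "2024-03-01", "region": "APAC"},
    {"customer_id": None, "signup_date": "", "region": "EMEA"},
]


def completeness(rows, field):
    """Share of rows where `field` is populated (not None and not an empty string)."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def matches_date_format(value, fmt="%Y-%m-%d"):
    """True if `value` parses with the given strptime pattern."""
    try:
        datetime.strptime(value, fmt)
        return True
    except (TypeError, ValueError):
        return False


def date_consistency(rows, field):
    """Share of populated values in `field` that follow the target date format."""
    populated = [row[field] for row in rows if row.get(field) not in (None, "")]
    if not populated:
        return 0.0
    return sum(matches_date_format(v) for v in populated) / len(populated)


for field in ("customer_id", "signup_date", "region"):
    print(f"{field}: completeness = {completeness(records, field):.0%}")
print(f"signup_date: format consistency = {date_consistency(records, 'signup_date'):.0%}")
```

Accuracy and integrity are harder to automate because they need a trusted reference or business rules to compare against, so they typically involve domain experts as well as scripts.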

This phase requires an intense focus on identifying missing information and auditing data transformation pipelines (ETL/ELT). Teams utilize advanced profiling techniques to spot outliers and anomalies. If the data audit reveals severe deficiencies, the timeline must accommodate a comprehensive data cleansing effort before any model training can commence.

Architectural Decisions: The Case for PostgreSQL in AI Workloads

Simultaneous with the data quality assessment, the engineering team must finalize the core data infrastructure. The architecture must be capable of handling the massive scale and unique data types associated with machine learning. A critical decision frequently faced by visionary CTOs is whether to build upon traditional, proprietary relational databases like Microsoft SQL Server or pivot to robust, open-source object-relational databases like PostgreSQL.

When architecting a tailored tech advantage for AI, PostgreSQL offers highly compelling benefits over SQL Server. While SQL Server is a powerful RDBMS well-suited for traditional transactional processing, its rigid schemas and commercial licensing models can present bottlenecks for Agile AI development.

PostgreSQL acts as an Object-Relational Database Management System (ORDBMS), providing the versatility necessary for modern AI applications. Three specific advantages make it ideal for the enterprise rollout:

- JSONB Support for Semi-Structured Data: Artificial intelligence systems frequently ingest diverse, unstructured, or semi-structured data, such as API responses, log files, and rich text. PostgreSQL’s advanced JSONB support allows developers to store, index, and query this JSON data natively and efficiently, bypassing the rigid row-and-column constraints of traditional databases.

- Vector Search Capabilities: The rise of Retrieval-Augmented Generation (RAG) models, which allow LLMs to query a company's proprietary documents securely, relies on vector embeddings. PostgreSQL’s thriving extension ecosystem includes tools like pgvector, which enables the database to store and perform similarity searches on high-dimensional vectors natively. SQL Server generally requires separate, bolted-on systems to achieve comparable vector processing capabilities. Patterns like the AI Sidecar Pattern build directly on these capabilities to add “chat with data” safely around existing systems.

- Horizontal Scalability and Favorable Economics: Scalability is paramount for growing datasets. PostgreSQL scales horizontally with ease, utilizing techniques like sharding and partitioning without incurring additional licensing fees. Conversely, SQL Server is licensed commercially, often costing thousands of dollars per core (for example, up to 13,748 dollars for the Enterprise Edition for two cores). For fast-growing startups and enterprises looking to control the total cost of ownership, PostgreSQL, managed via robust tools like pgAdmin, provides enterprise-grade reliability without budget-breaking commercial constraints.
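To make the idea of similarity search concrete, here is a minimal pure-Python sketch of cosine-similarity ranking over embeddings. It illustrates the concept only: it does not use pgvector or PostgreSQL, and the three-dimensional vectors and document names are made-up toy data (real embeddings typically have hundreds or thousands of dimensions).

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the k (name, score) pairs most similar to the query, best first."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in documents.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy embeddings (hypothetical values for illustration).
documents = {
    "refund_policy": [0.9, 0.1, 0.0],
    "onboarding_guide": [0.1, 0.8, 0.3],
    "pricing_sheet": [0.7, 0.2, 0.1],
}

query_embedding = [0.8, 0.15, 0.05]
for name, score in top_k(query_embedding, documents):
    print(f"{name}: {score:.3f}")
```

In a database-backed deployment the ranking would normally run inside the database, usually with an index, rather than by scanning every vector in application code as this sketch does.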

| Feature / Attribute | Microsoft SQL Server | PostgreSQL |
|---|---|---|
| Database Architecture | Relational Database Management System (RDBMS) | Object-Relational Database Management System (ORDBMS) |
| Licensing Model | Commercial (Per-core or Server/CAL model); high enterprise costs | Open Source; globally free for commercial use |
| Unstructured Data Handling | Traditional relational constraints; robust but rigid | Superior JSONB support for native querying of semi-structured data |
| AI Vector Capabilities | Requires complex external integrations or newer, specific cloud tiers | Native extension ecosystem (for example, pgvector) for advanced similarity search |
| Scalability Economics | Vertical scaling is expensive; horizontal scaling incurs heavy licensing fees | Excellent horizontal scalability (sharding, partitioning) with zero added licensing cost |

Security, Governance, and Setting Executive Expectations

By the close of the first month, the foundation must also include stringent governance and security protocols. The cybersecurity conundrum in artificial intelligence includes risks such as adversarial attacks and data poisoning, where bad actors manipulate training datasets to corrupt the model's outputs.

Implementing AI-specific security measures and establishing clear data boundaries to prevent the mixing of proprietary client information are non-negotiable requirements. A well-architected network utilizing enterprise firewalls like pfSense ensures secure perimeters during this sensitive data-ingestion phase. Many enterprises now add an AI firewall between user interfaces and LLMs to block prompt injection, prevent data leakage, and enforce governance rules.

Equally important during Days 1 to 30 is the alignment of executive expectations. Technology leaders must educate the C‑suite on the fundamental difference between deterministic software and probabilistic AI systems. Traditional code produces identical outputs every time it runs on the same inputs. Generative AI models and predictive algorithms are probabilistic; they calculate the most likely correct response, which introduces a degree of inherent variance. Acknowledging this reality early builds a resilient culture prepared for the iterative nature of the upcoming phases.
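The contrast is easy to demonstrate for a non-technical audience. The sketch below places a fixed calculation next to a weighted random choice; the candidate answers and their probabilities are hypothetical and merely stand in for the scores a model might assign, and no real model is called.

```python
import random


def deterministic_tax(amount, rate=0.2):
    """Traditional code: the same input always yields the same output."""
    return amount * rate


def sample_response(candidates, rng):
    """Probabilistic sketch: pick one response according to its probability weight."""
    responses = list(candidates.keys())
    weights = list(candidates.values())
    return rng.choices(responses, weights=weights, k=1)[0]


# Hypothetical probabilities a model might assign to candidate answers.
candidates = {"Approve": 0.7, "Escalate": 0.2, "Reject": 0.1}

# Identical values on every call.
print([deterministic_tax(100) for _ in range(3)])

# Unseeded generator: the sequence can differ from run to run.
rng = random.Random()
print([sample_response(candidates, rng) for _ in range(5)])
```

The most likely answer still appears most often, which is why probabilistic systems are evaluated over many cases with acceptance thresholds rather than by a single pass or fail check.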

Phase 2: The Prototype and Pilot Program (Days 31-60)

With a pristine data architecture established, the project transitions into the Prototype and Pilot phase. Days 31 to 60 represent the core engineering effort within the software development lifecycle. The objective during this month is not to deploy a massive, company-wide artificial intelligence oracle. Rather, the goal is to develop a narrow, highly specific pilot program that targets a defined business workflow and proves undeniable value.

The Imperative of Rapid Agile Deployment

Traditional "Waterfall" software development methodologies—characterized by rigid, linear sequential phases—are fundamentally incompatible with the fluid, experimental nature of machine learning. Because models require constant iteration, feedback loops, and hyperparameter tuning, Agile methodologies are mandatory. Recent industry analyses indicate that a commanding 95% of technology professionals affirm Agile's critical relevance to modern operations.

An effective AI Pilot Program utilizes rapid Agile deployment. Highly skilled engineering teams leverage this approach to compress development cycles and deliver functional prototypes quickly. The benefits of Agile in AI engineering are multi-faceted:

- Iterative Quality and Proactive Testing: Models are tested early and often, which prevents the accumulation of technical debt. Engineers can instantly refine prompt parameters or adjust algorithms based on edge cases discovered during short sprint cycles.

- Stakeholder Visibility: Business stakeholders, particularly the driven head of sales or innovative marketing director, receive frequent, functional demonstrations of the prototype. This ongoing visibility builds confidence, aligns the product with actual end-user needs, and prevents the development team from working in a silo.

- Adaptability: If a selected machine learning technique fails to achieve the necessary confidence scores or hallucinates excessively, Agile teams can pivot to alternative frameworks immediately without derailing the entire project timeline.

For teams new to this way of working, adopting a proven Agile methodology playbook helps keep sprints, ceremonies, and stakeholder feedback loops tight and predictable.

Modernizing the CI/CD Pipeline with Azure DevOps

To facilitate rapid Agile deployment, the underlying development operations must evolve into MLOps. Integrating AI into an application requires automating the pipeline that handles data preparation, model training, validation, and deployment.

Azure DevOps On-Prem serves as an exceptional centralized platform for managing this complex lifecycle. Developers writing code in powerful environments like VS Code or Visual Studio 2022 can push their commits directly into Azure Repos. From there, Azure Pipelines coordinate Continuous Integration and Continuous Delivery (CI/CD).

This pipeline automation is critical for enterprise-grade quality. When a developer submits a pull request, the CI/CD pipeline instantly runs unit tests and code analysis. Advanced teams even use AI-powered tools within the pipeline to predict which parts of the codebase are most likely to fail based on historical patterns, making quality control a proactive, automated safeguard rather than a manual bottleneck. Once the code passes review, the pipeline automatically builds the software artifacts required for deployment.

Containerization and Orchestration: Docker and Kubernetes

One of the most notorious challenges in artificial intelligence development is the "it works on my machine" problem. A model trained successfully on a data scientist's local workstation frequently breaks when pushed to a production server due to differing operating systems, missing library dependencies, or conflicting software versions.

To guarantee on-time delivery and flawless execution, modern AI architectures rely on Docker and Kubernetes. Docker introduces containerization. Put simply, Docker packages the machine learning model, its runtime environment, the precise versions of required libraries, and all configuration files into a single, isolated container.

Because each container carries its own isolated file system and dependencies, the model behaves consistently across any host with a compatible container runtime.

However, running a few isolated containers is insufficient for processing enterprise-scale data. This is where Kubernetes becomes the critical orchestration engine. During the AI Pilot Program, Kubernetes manages these Docker containers across clusters of servers, providing massive operational advantages:

- Distributed Training and High Availability: Training complex models requires vast computational power, often spread across multiple GPUs. Kubernetes orchestrates multi-node clusters, utilizing features like "gang scheduling" to ensure that distributed workloads launch, process, and terminate synchronously, optimizing resource allocation.

- Continuous Delivery with Zero Downtime: When the Agile team pushes a refined, smarter version of the AI model, Kubernetes handles the deployment via rolling updates. It gradually routes traffic to the new containers while simultaneously spinning down the old ones, ensuring that the end-user experiences zero application downtime.

- Swift Rollbacks: If a new model version begins to hallucinate or underperform in the live environment, Kubernetes allows administrators to execute a swift rollback to the previous stable state instantly, mitigating operational risk.

- Infrastructure Independence: Kubernetes abstracts the underlying hardware infrastructure. This reduces vendor lock-in, allowing enterprises to run their AI workloads smoothly across on-premise servers, Azure environments, or bare-metal hosting such as OVHCloud servers. Teams that specialize in .NET, Docker & Kubernetes can standardize these patterns across many projects, which shortens delivery time and improves reliability.
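The rolling update and rollback behavior described in the list above can be illustrated with a small simulation. This is a conceptual sketch only: it does not call the Kubernetes API, the version labels are hypothetical, and it assumes new pods become healthy the moment they start. The `max_surge` parameter loosely mirrors the idea behind the `maxSurge` setting of a Kubernetes Deployment.

```python
def rolling_update(replicas, old_version, new_version, max_surge=1):
    """Simulate a rolling update, yielding the pod list after each step.

    Simplified model: new pods are started first, then the same number of
    old pods is retired, so serving capacity never drops below `replicas`.
    """
    pods = [old_version] * replicas
    yield list(pods)
    if old_version == new_version:
        return  # nothing to replace
    while old_version in pods:
        batch = min(max_surge, pods.count(old_version))
        pods.extend([new_version] * batch)   # start new pods (surge)
        yield list(pods)
        for _ in range(batch):               # retire the same number of old pods
            pods.remove(old_version)
        yield list(pods)


for step, pods in enumerate(rolling_update(3, "model:v1", "model:v2")):
    print(step, pods)
```

In this simplified model a rollback is the same procedure with the versions swapped, for example `rolling_update(3, "model:v2", "model:v1")`.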

By Day 60, the organization transitions from theory to practice. A containerized, functional pilot program should be actively processing a subset of enterprise data in a secure staging environment, providing the initial metrics required to validate the business case.

Phase 3: Production, Refinement, and Scaling (Days 61-90)

The final thirty days of the 90-day AI implementation plan mark the critical inflection point where the controlled pilot evolves into a scalable enterprise rollout. During this phase, the technology is embedded directly into the daily workflows of end-users. The engineering focus shifts from active feature development toward infrastructure scaling, rigorous monitoring, and continuous refinement.

Combating Model Drift and the "Set It and Forget It" Fallacy

A dangerous misconception among enterprise leaders is that artificial intelligence functions like traditional software—once deployed, it runs perpetually without intervention. In reality, models suffer from a phenomenon known as "model drift" or "data drift." As the real-world data the model encounters in production inevitably shifts away from the historical data it was originally trained on, the model's predictive accuracy and reliability will steadily degrade.

To combat this production predicament, the organization must fully embrace MLOps as an ongoing discipline. Technology leaders prevent uncontrolled drift by maintaining strict "data freshness" protocols and utilizing user prompts as a continuous feedback loop to ensure the model adapts. Engineers integrate observability tools directly into the deployment environment to monitor confidence scores. If the system detects that accuracy is dropping below acceptable thresholds, automated alerts trigger the CI/CD pipeline to initiate a retraining sequence using the most recent data sets, ensuring the AI remains a highly accurate, living system.
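A threshold-based monitor of the kind described above might look like the following minimal sketch. It assumes that ground-truth outcomes eventually become available for each prediction (for example through human review), and the class name, window size, threshold, and the `trigger_retraining_pipeline` placeholder are all hypothetical rather than part of any specific observability tool.

```python
from collections import deque


class AccuracyMonitor:
    """Track recent prediction outcomes and flag when rolling accuracy falls below a threshold."""

    def __init__(self, window_size=100, threshold=0.90):
        self.outcomes = deque(maxlen=window_size)  # True = correct, False = incorrect
        self.threshold = threshold

    def record(self, was_correct):
        self.outcomes.append(bool(was_correct))

    def rolling_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        """Flag only once the window is full, to avoid alerts on tiny samples."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.threshold


def trigger_retraining_pipeline():
    # Placeholder: in practice this would call the team's CI/CD system.
    print("Accuracy below threshold: retraining requested")


monitor = AccuracyMonitor(window_size=10, threshold=0.8)
for was_correct in [True] * 10 + [False] * 3:
    monitor.record(was_correct)
    if monitor.needs_retraining():
        trigger_retraining_pipeline()
```

A sliding window keeps the check sensitive to recent behavior; a production system would usually add a cooldown so that one bad stretch does not trigger repeated retraining runs.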

Scaling Infrastructure: Harvester HCI and Rancher

As the user base expands and data ingestion scales, the underlying network and server infrastructure must be resilient enough to handle massive concurrency without latency. For organizations maintaining on-premise or hybrid cloud environments, specialized tools are required to manage this complexity.

Harvester HCI (Hyperconverged Infrastructure) serves as a modern foundation for on-premise virtualization, allowing IT departments to seamlessly manage and deploy virtual machines alongside containerized workloads on bare-metal servers. Working in tandem with Harvester, Rancher provides an intuitive, centralized management plane for Kubernetes clusters. Rancher simplifies the deployment of complex AI clusters, enforces consistent security policies, and provides deep visibility into node performance across multiple environments. By utilizing this robust stack on powerful infrastructure like OVHCloud servers, enterprises guarantee that their AI applications remain highly available and responsive during peak operational hours. Many teams bring in external experts who specialize in Harvester HCI to get this layer right the first time.

Simultaneously, seamless operational communication is maintained across the enterprise using established platforms like Microsoft 365, Microsoft Teams, OneDrive, and Google Drive, ensuring that human-in-the-loop oversight and inter-departmental feedback are captured instantly and routed back to the engineering team.

Transitioning to Long-Term Maintenance and Cost Management

By Day 90, the AI solution is fully integrated, and the project officially transitions into the maintenance lifecycle. At this stage, the strategic CFO must maintain vigilant oversight of operational expenditures. Because AI systems require constant retraining, cloud computing allocations, and rigorous security auditing, the total cost of ownership extends far beyond the initial pilot phase.

Organizations must strictly adhere to their budgetary forecasts, recognizing that 15% to 30% of the initial CapEx will recur annually as OpEx. To maximize profitability during the refinement phase, teams must prioritize model efficiency—optimizing algorithms to require fewer compute resources, adopting more cost-effective localized LLMs where appropriate, and continuously pruning obsolete data from the RAG vector databases. Many enterprises back this with formal service contracts or ongoing support agreements so that optimization and monitoring are treated as standard operating work, not ad-hoc emergencies.

B2B Industry Masterclass: Tailoring AI Use Cases for 2026

The true power of this 90-day roadmap is realized when the core methodology is applied to solve specific, highly complex domain challenges. Across diverse B2B sectors, executives are utilizing tailored tech advantages to completely redefine operational efficiency and customer engagement.

Real Estate and Mortgage Finance

In the commercial real estate and mortgage sectors, the transaction lifecycle is historically burdened by manual documentation and sluggish underwriting processes. Following a successful enterprise rollout, firms leverage artificial intelligence to execute predictive property valuations, analyzing vast datasets that include historical pricing, satellite imagery, local zoning changes, and demographic shifts to price assets dynamically.

Furthermore, AI-driven algorithms drastically optimize the mortgage approval workflow. Natural Language Processing (NLP) models automatically parse unstructured financial histories, W‑2s, and credit reports to detect fraudulent transaction patterns instantly, empowering underwriters to make faster, highly accurate lending decisions.

Financial Services and Asset Management

For institutional finance and wealth management, latency and accurate risk assessment are the primary currencies of success. Deployed AI solutions engage in sophisticated, real-time portfolio management. Investment managers utilize these tools to run thousands of Monte Carlo simulations simultaneously, stress-testing asset allocations against volatile global economic conditions to ensure portfolio resilience.
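For readers unfamiliar with the technique, a Monte Carlo stress test can be sketched in a few lines. The example below is deliberately simplistic: it assumes independent, normally distributed annual returns, and the return, volatility, horizon, and portfolio size are hypothetical inputs rather than recommendations. Production risk engines use far richer models.

```python
import random
import statistics


def simulate_portfolio(initial_value, mean_return, volatility, years, rng):
    """Compound one random path of annual returns drawn from a normal distribution."""
    value = initial_value
    for _ in range(years):
        value *= 1 + rng.gauss(mean_return, volatility)
    return value


def monte_carlo(initial_value, mean_return, volatility, years, n_paths, seed=42):
    """Run n_paths simulations and return the ending values sorted ascending."""
    rng = random.Random(seed)  # fixed seed so repeated runs are reproducible
    return sorted(
        simulate_portfolio(initial_value, mean_return, volatility, years, rng)
        for _ in range(n_paths)
    )


outcomes = monte_carlo(1_000_000, mean_return=0.06, volatility=0.15, years=5, n_paths=5_000)
median = statistics.median(outcomes)
fifth_percentile = outcomes[int(0.05 * len(outcomes))]
print(f"Median ending value: {median:,.0f}")
print(f"5th percentile (stress case): {fifth_percentile:,.0f}")
```

Reading a low percentile alongside the median is what turns the simulation into a stress test: it shows how the allocation fares in the worst slice of scenarios, not just the typical one.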

Operationally, AI fundamentally upgrades regulatory compliance; machine learning models recognize complex Anti-Money Laundering (AML) patterns across millions of transactions, drastically reducing the false-positive alerts that traditionally overwhelm compliance officers. Many firms supplement these systems with AI compliance bots that continuously scan code, documents, and communications to catch violations before they happen.

Healthcare Operations and Technology

In B2B healthcare software and hospital network operations, the AI implementation plan frequently targets provider support and administrative efficiency. High-performing healthcare organizations deploy AI-enabled diagnostic tools that synthesize vast libraries of medical imaging alongside fragmented patient histories, offering clinicians data-backed recommendations for personalized treatment plans.

Operationally, predictive analytics forecast patient admission rates and disease outbreaks, allowing hospital administrators to optimize staff scheduling dynamically, ensure adequate medical supply inventory, and minimize overhead costs.

Education and Learning Management Systems (LMS)

For software vendors developing enterprise Learning Management Systems, artificial intelligence shifts static digital classrooms into hyper-adaptive training environments. Advanced AI algorithms process user engagement metrics, quiz scores, and reading pace in real time, subsequently adjusting the difficulty and format of learning materials to create truly personalized learning paths for individual corporate trainees or students.

Generative AI tools also alleviate the massive administrative burden on educators by automating course content generation, providing instant grading for standardized assessments, and deploying intelligent chatbots to handle routine student inquiries.

Advertising, Telecom, and Marketing Strategy

The B2B buyer journey in the advertising and technology sectors has fundamentally changed; buyers now rely heavily on AI assistants like ChatGPT to evaluate vendors long before contacting a sales representative.

For the innovative marketing director, agentic AI is the future of automation. Marketing teams deploy generative models to rapidly audit existing content libraries, identify SEO gaps, and generate personalized copy variations at scale for Account-Based Marketing (ABM) programs.

AI workflows autonomously make decisions, optimize ad spend across digital channels, and adapt campaign messaging in real time, allowing human marketers to focus entirely on high-level brand strategy and creative direction. When combined with secure AI integration into existing martech stacks, these systems become durable engines for demand, not just one-off experiments.

Software, High-Tech, and Fast-Growing Startups

In the competitive software sector, the driven head of sales utilizes AI to eliminate blind spots in the revenue pipeline. Currently, only 17% of B2B software buyers utilize automated activity capture, yet those who adopt AI-powered coaching tools see massive advantages.

Predictive AI seamlessly analyzes historical CRM data and customer interaction points to provide sales representatives with low-latency, real-time coaching during active deals, ensuring that proven sales methodologies are followed meticulously.

This technology drastically reduces the onboarding time for new hires and increases the operational bandwidth of mid-level sales managers.

| Industry | Primary AI Use Case | Measurable Business Impact |
|---|---|---|
| Real Estate / Mortgage | Automated property valuation; NLP-driven loan underwriting | Accelerated deal cycles; reduced fraud exposure |
| Financial Services | Real-time portfolio stress-testing; AML pattern detection | Minimized compliance false-positives; optimized risk allocation |
| Healthcare Operations | Predictive patient admission forecasting; diagnostic synthesis | Optimized staffing overhead; enhanced patient care outcomes |
| Education (LMS) | Adaptive learning path generation; automated grading | Scalable personalization; massive reduction in administrative hours |
| B2B Marketing/AdTech | Agentic AI for ABM content generation and campaign optimization | Scaled personalized messaging; improved conversion rates |
| Software / High-Tech | AI-powered sales coaching; automated CRM activity capture | Reduced rep onboarding time; highly accurate revenue forecasting |

Measuring Success: Building the Ultimate Business Case for AI

As the 90-day timeline concludes, executive leadership naturally demands empirical proof of the project's value. A successful AI business case is built on quantifiable metrics, not theoretical potential. To secure ongoing buy-in from the board and justify the 15% to 30% annual maintenance budget, the project must demonstrate clear financial advantages.

The formula for proving ROI is practical: establish a baseline by multiplying the current operational cost per task by the volume of tasks, then factor in the manual error rate. Next, compare this figure with the projected post-implementation cost using AI automation. The delta between the two figures represents the tangible value generated by the pilot.
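As a rough illustration of this calculation, the Python sketch below computes a monthly baseline and the delta against an AI-assisted workflow. The class and function names and the sample figures are hypothetical, and the treatment of the error rate (each erroneous task is reworked once at the same unit cost) is an assumption; the formula above leaves that detail open, so treat this as one plausible reading rather than a definitive model.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    cost_per_task: float   # fully loaded cost of handling one task
    monthly_volume: int    # number of tasks processed per month
    error_rate: float      # fraction of tasks that need rework (0.0 to 1.0)

    def monthly_cost(self) -> float:
        # Assumption: every erroneous task is redone once at the same unit cost.
        return self.cost_per_task * self.monthly_volume * (1 + self.error_rate)


def monthly_savings(baseline: WorkflowCost, with_ai: WorkflowCost) -> float:
    """Delta between the manual baseline and the AI-assisted workflow."""
    return baseline.monthly_cost() - with_ai.monthly_cost()


def payback_months(upfront_cost: float, baseline: WorkflowCost,
                   with_ai: WorkflowCost) -> float:
    """Months of savings needed to recover the upfront investment."""
    savings = monthly_savings(baseline, with_ai)
    if savings <= 0:
        return float("inf")  # the pilot never pays for itself
    return upfront_cost / savings


if __name__ == "__main__":
    # Hypothetical figures for an invoice data-entry workflow.
    manual = WorkflowCost(cost_per_task=4.00, monthly_volume=10_000, error_rate=0.05)
    assisted = WorkflowCost(cost_per_task=1.50, monthly_volume=10_000, error_rate=0.02)
    print(f"Monthly savings: {monthly_savings(manual, assisted):,.2f}")
    print(f"Payback period (months): {payback_months(60_000, manual, assisted):.1f}")
```

Keeping the baseline and the projection in the same structure makes it easy to rerun the comparison with measured numbers once the pilot has produced real volume and error data.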

By targeting high-impact back-office workflows, such as streamlining intensive data entry, cutting Business Process Outsourcing (BPO) expenditures, or accelerating complex document reviews, enterprises can see fast returns. Data shows that well-executed AI projects can achieve a positive ROI in as little as 2.4 months after the initial launch.

However, maximizing this return requires a cultural shift within the organization. The most successful deployments treat AI not as an autonomous replacement for human workers, but as a collaborative assistant. A human-in-the-loop strategy, in which the AI drafts reports, flags anomalies, or processes bulk data while a human expert provides final approval, accelerates output substantially while preserving the rigorous quality control that B2B enterprises demand. The best teams also track outcomes with modern engineering metrics, such as those discussed in The Future of Developer Productivity: Metrics That Matter, to prove that AI is driving both speed and quality.
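A minimal sketch of the human-in-the-loop pattern follows, assuming a simple in-memory queue. The `Draft` class, the `review_queue` and `approve` helpers, and the reviewer identifier are hypothetical names chosen for illustration; a real deployment would sit on top of whatever workflow or ticketing system the organization already uses.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Draft:
    doc_id: str
    content: str                       # AI-generated draft text
    anomaly_flagged: bool = False      # set by the model when something looks off
    approved_by: Optional[str] = None  # filled in only by a human reviewer

    @property
    def is_released(self) -> bool:
        # Nothing leaves the pipeline without a named human approver.
        return self.approved_by is not None


def review_queue(drafts: List[Draft]) -> List[Draft]:
    """Return unapproved drafts, with flagged anomalies listed first."""
    pending = [d for d in drafts if not d.is_released]
    # sorted() is stable, so original order is kept within each group.
    return sorted(pending, key=lambda d: not d.anomaly_flagged)


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record the human sign-off that releases a draft."""
    if not reviewer:
        raise ValueError("a named human reviewer is required")
    draft.approved_by = reviewer
    return draft
```

The design choice here is that the model can draft and flag but cannot release: only `approve`, called with a named reviewer, changes a draft's status.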

Conclusion: Executing Your Digital Transformation

The transition to an AI-driven enterprise is neither instantaneous nor guaranteed. It is a systematic software engineering endeavor that demands rigorous data governance, rapid Agile deployment methodologies, and a transparent understanding of ongoing operational economics. By following this phased 90-day implementation roadmap (a thorough data audit, a containerized pilot program, and a secure scale-up into production), organizations can avoid many of the pitfalls that derail 95% of generic AI initiatives. Strategic alignment, coupled with enterprise-grade engineering execution, helps ensure that artificial intelligence moves out of the experimental sandbox and becomes a durable, profit-generating pillar of the modern business.

Frequently Asked Questions

How long does it take to build an AI solution? For mid-market firms and agile enterprises working with specialized external vendors, a highly integrated AI solution generally takes about 90 days from the initial data audit to a functional production deployment.

Complex, massive-scale enterprise rollouts (for organizations with more than 100 million dollars in revenue) attempting internal builds may struggle for nine months or longer, often failing due to infrastructural and compliance complexities.

What are the phases of an AI project? A successful enterprise rollout is divided into three core phases:

- Data Audit and Strategy (Days 1-30): Profiling unstructured data for accuracy, selecting the optimal architecture (such as PostgreSQL for JSONB and vector capabilities), and establishing strict security boundaries.

- Prototype and Pilot (Days 31-60): Utilizing MLOps and Azure DevOps for continuous integration, containerizing the model with Docker, and deploying it via Kubernetes for stable, iterative testing.

- Production and Refinement (Days 61-90): Expanding infrastructure via Harvester HCI and Rancher, addressing model drift through continuous monitoring, and transitioning to ongoing maintenance.
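To make the continuous monitoring step in the third phase more concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), which compares the distribution of a feature at training time with what the model sees in production. PSI is a technique chosen here for illustration, not one the roadmap prescribes; the function name, bin count, and smoothing constant are assumptions.

```python
import math
from typing import List, Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """PSI between a reference sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range production values into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A widely used rule of thumb treats a PSI below 0.1 as stable and above 0.2 as a signal to investigate or retrain, though appropriate thresholds depend on the feature and the business risk.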

What should I expect in the first month of engagement? During the first 30 days, expect an intense, highly technical focus on data architecture rather than flashy end-user interfaces. The engineering team will perform deep analyses of existing databases to identify missing data, standardize formats, and build secure ETL pipelines. Stakeholders will collaborate to establish measurable success metrics, navigate governance requirements, and select a narrow, high-value workflow to serve as the initial pilot, laying a sound, risk-mitigated foundation for subsequent development.
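As a small example of what identifying missing data can look like in practice, the sketch below reports the share of records that lack each required field. The function name, the field names, and the rule that blank strings count as missing are illustrative assumptions; an actual audit would run against the organization's own schemas and data stores.

```python
from collections import Counter
from typing import Dict, Iterable, List


def missing_value_report(records: Iterable[dict],
                         required_fields: List[str]) -> Dict[str, float]:
    """Fraction of records in which each required field is absent or blank."""
    missing = Counter()
    total = 0
    for record in records:
        total += 1
        for field in required_fields:
            value = record.get(field)
            # Assumption: None and whitespace-only strings both count as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1
    return {f: (missing[f] / total if total else 0.0) for f in required_fields}


if __name__ == "__main__":
    # Hypothetical customer rows pulled from an existing database.
    rows = [
        {"id": 1, "email": "a@example.com"},
        {"id": 2, "email": "   "},
        {"id": 3},
    ]
    for field, share in missing_value_report(rows, ["id", "email"]).items():
        print(f"{field}: {share:.0%} missing")
```

A report like this gives stakeholders a concrete starting point for deciding which fields must be cleaned or backfilled before the pilot workflow is selected.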

Supporting Resources for Strategic Leaders

- https://trullion.com/blog/why-95-of-ai-projects-fail-and-why-the-5-that-survive-matter/

- https://snyk.io/articles/data-quality-in-ai-challenges-implementation-audits-and-best-practices/

- https://www.cognizant.com/us/en/insights/insights-blog/how-to-power-up-agility-with-ai-driven-software-development-wf2052694

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.