Agent Wars: Claude Code vs Codex — Pick Your Copilot

April 27, 2026 / Bryan Reynolds

Claude Code vs. OpenAI Codex: Choosing Your AI Engineering Partner

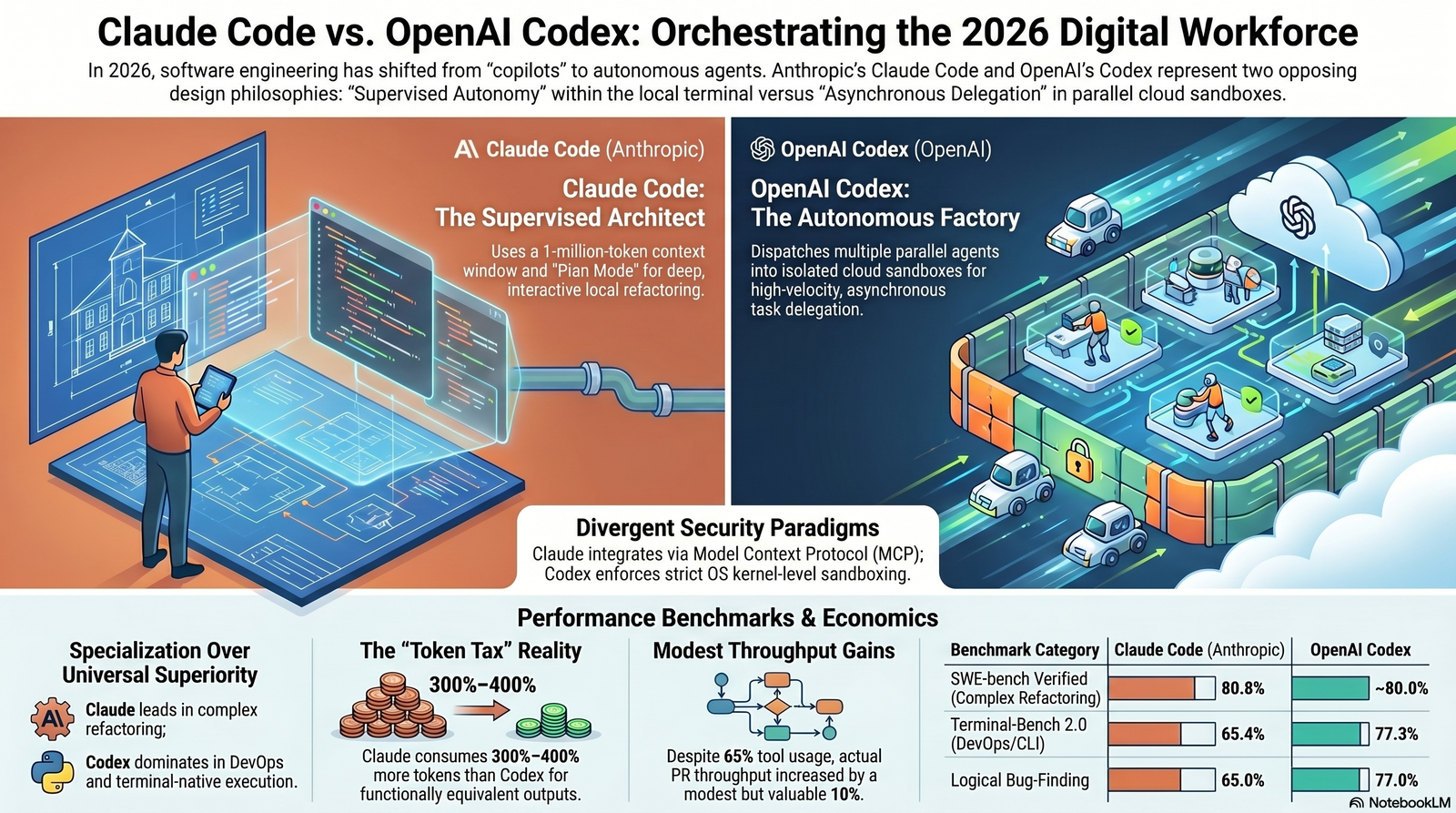

The software engineering landscape has undergone a profound structural transformation as the industry navigates the first half of 2026. For technology executives, engineering directors, and platform architects operating within the B2B sector—spanning finance, healthcare, high-tech, and telecommunications—the conversation surrounding artificial intelligence has officially shifted. The discourse is no longer centered on whether to adopt AI, but rather how to orchestrate autonomous digital workforces. The era of simple, line-by-line autocomplete tools, which dominated the early 2020s, has been superseded by the era of agentic software engineering. Today, AI models do not merely suggest syntax; they architect solutions, execute complex multi-step development workflows, manage entire enterprise repositories, and operate autonomously across local and cloud environments.

At the absolute forefront of this technological paradigm shift are two dominant platforms: Anthropic’s Claude Code and OpenAI’s Codex.

For enterprise leadership, the decision between these two platforms represents far more than a simple vendor selection. It represents a fundamental architectural choice regarding how an organization develops, secures, and deploys its proprietary software. The choice dictates the very nature of developer workflows, determining whether engineering teams will rely on local, developer-guided terminal integrations or isolated, asynchronous cloud sandboxes.

This comprehensive research report provides an exhaustive, comparative analysis of the 2026 iterations of Claude Code and OpenAI Codex. By dissecting their architectural differences, benchmarking their performance across rigorous industry standards, evaluating their security paradigms, and analyzing their specific capabilities in accelerating modern frameworks such as C# 15 and .NET 10, this analysis delivers the strategic insights necessary for B2B executives to choose the optimal AI engineering partner.

The Paradigm Shift: From Copilots to Autonomous Agents

To fully grasp the magnitude of the divergence between Anthropic's Claude Code and OpenAI's Codex, it is essential to trace the rapid evolution of AI coding tools and the shifting bottlenecks within the software development lifecycle. Prior to 2026, tools generally functioned as advanced IntelliSense-style autocomplete. They required human developers to remain tethered to their Integrated Development Environments (IDEs), prompting the AI line-by-line.

In that era, the primary bottleneck to productivity was the human developer's physical typing speed and immediate cognitive load.

By 2026, that bottleneck has fundamentally shifted. The limiting factor in modern software engineering is no longer code generation; it is the speed at which humans can supervise, review, and orchestrate multiple concurrent streams of AI-generated code.

Both Anthropic and OpenAI have aggressively responded to this new reality, developing highly capable agentic systems. However, their design philosophies, operational structures, and target deployment models are fundamentally opposed, offering different strategic advantages depending on a firm's operational maturity. Leaders who are already building out an enterprise AI readiness roadmap will feel this shift most acutely as they move from pilots to full-scale adoption.

The Architect's Approach: Claude Code and Developer-in-the-Loop Integration

Anthropic’s Claude Code is engineered from the ground up as a terminal-first Command Line Interface (CLI) agent.

Powered by the formidable Claude Opus 4.6 and Claude Sonnet 4.6 models, it is designed to operate intimately within the developer's local computing environment.

The core operational philosophy of Claude Code is “supervised autonomy.” The agent is granted the capability to read an entire local codebase, process vast amounts of context, and execute complex development tasks through natural language instructions.

However, it maintains a highly interactive, conversational dialogue with the human developer.

When Claude Code encounters an ambiguous architectural decision or a missing dependency, it pauses execution and explicitly asks for clarification. This interactive cadence closely mimics the experience of pair programming with a highly experienced senior software engineer who requires strategic alignment before committing to a massive, multi-file refactoring effort.

Several key technical differentiators define the Claude Code architecture:

- Massive Context Window: Claude Code leverages a one-million-token context window. This capacity allows the agent to ingest roughly 25,000 to 30,000 lines of highly interdependent code, comprehensive documentation, and overarching system architecture in a single, continuous session without suffering from context fragmentation or amnesia. For teams modernizing large legacy estates, this pairs well with patterns like the AI Sidecar approach to incremental modernization, where context and safety are everything.

- Plan Mode and Supervised Execution: Claude Code features a highly regarded “Plan Mode.” Before writing any functional code, the agent analyzes complex GitHub issues and outlines a comprehensive, step-by-step strategy. This trades upfront token consumption for high-fidelity execution, ensuring the human developer agrees with the architectural approach before computational resources are expended on code generation.

- Deep Configuration and Programmable Logic: The tool reads a dedicated instruction file, CLAUDE.md, which can be layered hierarchically across enterprise, user, and project levels, and pairs it with settings files that enforce permission policies and run pre-action and post-action hooks. This allows platform engineers to build highly customized, context-sensitive guardrails that automatically apply business rules to the agent's output, fitting neatly alongside broader AI code approval and governance frameworks in regulated environments.

- Terminal-Native Integration: Operating natively in the terminal allows Claude Code to seamlessly integrate with existing shell-based automation, local background agents, and autonomous daemons, minimizing context switching for developers who live on the command line.
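A pre-action hook is, in essence, an external command that inspects a proposed tool call and decides whether it may proceed. The minimal Python sketch below encodes one hypothetical corporate guardrail. It assumes, based on our reading of Claude Code's hooks documentation, that the hook receives a JSON payload with `tool_name` and `tool_input` fields on stdin, that the shell tool is named `Bash`, and that exit code 2 blocks the call and returns stderr to the agent. The `BLOCKED_PATTERNS` list and the `evaluate` and `main` helpers are illustrative; verify the payload format against current documentation before deploying anything similar.

```python
import json
import re
import sys

# Hypothetical corporate policy: shell commands the agent must never run.
BLOCKED_PATTERNS = [
    r"\brm\s+-rf\s+/(\s|$)",      # recursive delete starting at the filesystem root
    r"\bgit\s+push\b.*--force",   # force-pushing over shared history
    r"\bcurl\b.*\|\s*(ba)?sh\b",  # piping a downloaded script straight into a shell
]


def evaluate(payload: dict) -> tuple[int, str]:
    """Return (exit_code, message); 0 allows the call, 2 blocks it (assumed convention)."""
    if payload.get("tool_name") != "Bash":
        return 0, ""  # this policy only inspects shell commands
    command = payload.get("tool_input", {}).get("command", "")
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command):
            return 2, f"Blocked by policy: command matches {pattern!r}"
    return 0, ""


def main() -> None:
    """Hook entry point: read the payload from stdin and report the decision."""
    code, message = evaluate(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)  # assumed to be surfaced to the agent on block
    sys.exit(code)
```

To register it, the script would end with a call to `main()` and be referenced from the hook configuration. Keeping the policy in a pure `evaluate` function makes it easy to unit-test without spawning the agent.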

The Factory Approach: OpenAI Codex and Cloud-Delegated Sandboxing

In stark contrast to Anthropic's highly interactive model, OpenAI has aggressively pivoted Codex from its origins as a simple IDE extension into a complex, asynchronous command center for autonomous digital workers.

Powered by the highly efficient GPT-5.3 and GPT-5.4 Codex models, the 2026 iteration operates on an “abundance mindset,” encouraging developers to dispatch multiple complex tasks to parallel agents working in isolated cloud environments.

OpenAI's approach is explicitly designed for asynchronous delegation. In this model, developers transition from acting as manual coders to functioning as “creative directors” or workforce managers.

Using the recently launched macOS desktop application, a CTO or senior architect can assign distinct feature requests to four separate Codex agents, start them, and step away for up to 30 minutes while the agents plan, write, test, debug, and validate the code on their own.
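Running several agents against one repository without them overwriting each other's files typically relies on Git worktrees, which give each task its own checked-out directory and branch. The sketch below only builds the `git worktree add -b <branch> <path> <base>` invocations; the `agent/` branch prefix, the sibling `../wt-` directories, and the `main` base branch are hypothetical conventions, not Codex's internal layout.

```python
import re


def worktree_commands(tasks: list[str], base: str = "main") -> list[list[str]]:
    """Build one `git worktree add` command per task, to be run from the repository root."""
    commands = []
    for task in tasks:
        # Derive a filesystem- and branch-safe slug from the task title.
        slug = re.sub(r"[^a-z0-9]+", "-", task.lower()).strip("-")
        branch = f"agent/{slug}"  # hypothetical branch naming convention
        path = f"../wt-{slug}"    # sibling directory outside the main checkout
        commands.append(["git", "worktree", "add", "-b", branch, path, base])
    return commands


cmds = worktree_commands(
    ["Add OAuth login", "Fix CSV export", "Upgrade to .NET 10", "Refactor billing"]
)
```

Each command can be executed with `subprocess.run(cmd, check=True)` from the repository root. Worktrees isolate working directories, not history, so two agents editing the same lines will still produce a merge conflict when their branches are integrated.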

Key technical differentiators of the OpenAI Codex architecture include:

- Parallel Agent Orchestration: Codex allows developers to run multiple coding tasks simultaneously across entirely different projects. It uses Git worktrees to isolate these tasks, giving each agent its own working directory and branch so parallel agents cannot overwrite one another's files; conflicts can still surface when the branches are later merged.

- Secure Cloud Sandboxing: Delegated tasks are executed within highly secure, isolated runtime environments in the cloud. This ensures that the agent cannot arbitrarily manipulate the host machine's root filesystem, providing a pristine, standardized environment preloaded with the specific repository context.

- Standardized Ecosystem Configuration: Codex uses the open AGENTS.md file for configuration. Because this convention is widely supported across the open-source ecosystem (including by tools like Cursor and Aider), organizations reduce vendor lock-in and can carry agent configurations between platforms with little rework.

- Skills and Scheduled Automations: Users can bundle complex instructions into reusable “Skills” and schedule them as recurring automations. For example, a Codex agent can be scheduled to autonomously triage newly reported bugs every morning, investigate Continuous Integration (CI) pipeline failures, and generate detailed release briefs without requiring human initiation. This fits naturally into modern DevOps and CI/CD efficiency practices that emphasize automation over manual toil.
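The scheduling half of a “Skill” reduces to computing the next time a stored instruction bundle should fire. The following sketch models that concept only; the `Skill` dataclass, its fields, and the 07:00 trigger are hypothetical and do not reflect any Codex API.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta


@dataclass(frozen=True)
class Skill:
    """Hypothetical bundle of reusable instructions plus a daily trigger time."""
    name: str
    instructions: str
    run_at: time


def next_run(skill: Skill, now: datetime) -> datetime:
    """Return the next naive local datetime at which the skill should fire."""
    candidate = datetime.combine(now.date(), skill.run_at)
    if candidate <= now:
        candidate += timedelta(days=1)  # today's slot has already passed
    return candidate


morning_triage = Skill(
    name="morning-triage",
    instructions="Triage bugs filed since the last run, label severity, "
                 "and summarize overnight CI failures.",
    run_at=time(7, 0),
)
```

A production scheduler would also need explicit time zones (for example via `zoneinfo`) and a record of the last successful run so that missed triggers are not silently skipped.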

Performance Analytics: Navigating the Trade-Offs

When establishing an enterprise-wide AI engineering strategy, qualitative descriptions of agent capabilities are insufficient. Engineering leaders require empirical data to justify tooling adoption and financial expenditure. Extensive benchmark testing conducted throughout early 2026 reveals a nuanced reality: neither tool is universally superior across all computing domains. Instead, there is a pronounced, measurable trade-off between absolute accuracy on complex, high-context refactoring and operational speed and token efficiency.

The Complex Refactoring Champion: Claude Code

When enterprise workflows demand intricate, multi-file system refactoring, deep architectural planning, or the resolution of highly obscure legacy bugs, Claude Code demonstrates a distinct statistical and operational advantage.

This superiority is most evident on the SWE-bench framework, which is the industry standard for testing an AI's ability to resolve real-world, highly complex GitHub issues originating from major open-source projects. On the SWE-bench Verified subset, Claude Opus 4.6 achieves a remarkable 80.8% success rate, representing the highest recorded score for any coding agent to date.

In broader, highly difficult real-world bug-fixing benchmarks, Claude Code maintains a massive lead, scoring roughly 72.5% compared to Codex’s approximate 49%.

This substantial performance gap is deeply rooted in Claude's methodical reasoning framework. When presented with a complex, undocumented architecture, Claude Code explicitly maps file dependencies, articulates its intermediate reasoning steps, and engages in a holistic analysis of the entire repository before modifying a single line of syntax.

For instance, in a controlled test requiring the creation of a complete “job scheduler,” Claude provided a fully documented, structurally robust solution ready for production deployment. In the same test, Codex delivered a functional but highly concise version that required significant manual polishing and architectural reinforcement by human engineers.

For organizations managing massive, interdependent codebases where a single architectural error can trigger cascading systemic failures, Claude Code’s meticulous, cautious approach is indispensable.

The Autonomous Efficiency Engine: OpenAI Codex

While OpenAI Codex trails Claude Code in the deepest architectural refactoring, it offsets this with unparalleled speed, logical precision during review phases, and dominance in terminal-native execution.

Codex truly shines in the Terminal-Bench 2.0 framework, which evaluates an agent's proficiency in terminal-based coding workflows, scripting, complex system administration, and DevOps pipeline management. In this domain, Codex leads decisively, scoring 77.3% against Claude Code's 65.4%.

This roughly 12-point differential suggests that for teams heavily invested in infrastructure as code, container orchestration, and continuous deployment, Codex offers a substantially more reliable operational capability.

Furthermore, empirical testing reveals that Codex functions as a superior “strict code reviewer.”

Its logical bug-finding capabilities are highly tuned, allowing it to efficiently catch edge cases, race conditions, and logical omissions that more generative models might overlook. Codex consistently outperformed Claude Code in logical bug-finding tests, scoring 77% versus 65%.

Recognizing these distinct strengths, many elite, high-velocity engineering teams have adopted a hybrid workflow strategy. They utilize the creative, planning-oriented Claude Code (Opus) to architect the initial design and generate the bulk of the foundational code. Subsequently, they deploy Codex 5.2 in its “High Thinking” mode to serve as an autonomous, meticulous code reviewer, identifying and remediating the subtle bugs and omissions that the generative model inevitably introduced.

| Benchmark Category | Primary Focus | Claude Code (Anthropic) | OpenAI Codex | Advantage |
|---|---|---|---|---|
| SWE-bench Verified | Resolving complex, real-world GitHub issues. | 80.8% | ~80.0% | Tie / Slight Claude Edge |
| SWE-bench (Standard) | General real-world bug fixing and logic mapping. | 72.5% | ~49.0% | Strong Claude Lead |
| Terminal-Bench 2.0 | DevOps, scripting, and CLI administration tasks. | 65.4% | 77.3% | Strong Codex Lead |
| Logical Bug-Finding | Identifying subtle logic flaws and edge cases. | 65.0% | 77.0% | Strong Codex Lead |
| HumanEval | Standard, single-function Python code generation. | 92.0% | 90.2% | Tie / Slight Claude Edge |

Data aggregated from 2026 independent benchmark analyses.
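The hybrid strategy described above amounts to routing each task type to whichever tool leads on the most relevant benchmark. The sketch below encodes the table's scores directly (approximate “~” values treated as exact) and treats gaps under two points as ties; the task categories, the task-to-benchmark mapping, and the two-point margin are hypothetical choices, not findings from the benchmark analyses.

```python
# Scores (percent) from the benchmark table; "~" values are treated as exact.
SCORES = {
    "swe_bench_verified": {"claude_code": 80.8, "codex": 80.0},
    "swe_bench_standard": {"claude_code": 72.5, "codex": 49.0},
    "terminal_bench_2": {"claude_code": 65.4, "codex": 77.3},
    "logical_bug_finding": {"claude_code": 65.0, "codex": 77.0},
    "humaneval": {"claude_code": 92.0, "codex": 90.2},
}

# Hypothetical mapping from a team's task categories to the closest benchmark.
TASK_BENCHMARK = {
    "issue_resolution": "swe_bench_verified",
    "multi_file_refactor": "swe_bench_standard",
    "devops_scripting": "terminal_bench_2",
    "code_review": "logical_bug_finding",
    "single_function": "humaneval",
}


def route(task_type: str, tie_margin: float = 2.0) -> str:
    """Pick a tool for a task category; gaps below tie_margin points count as ties."""
    scores = SCORES[TASK_BENCHMARK[task_type]]
    gap = scores["claude_code"] - scores["codex"]
    if abs(gap) < tie_margin:
        return "either"
    return "claude_code" if gap > 0 else "codex"
```

Under these assumptions, `route("multi_file_refactor")` returns `"claude_code"` and `route("code_review")` returns `"codex"`, mirroring the generate-with-Claude, review-with-Codex pattern.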

Token Economics and the Realities of Enterprise ROI

While benchmark accuracy is highly scrutinized, the most critical operational difference between Anthropic and OpenAI for enterprise procurement teams lies in token consumption. In the economics of AI engineering, tokens translate directly to computational expense and rate-limit friction.

Claude Code's structural tendency to articulate its reasoning (often referred to as “chain-of-thought” verbosity) results in heavy token usage. Because Claude explains its intermediate steps and maintains a deep conversational history that is reprocessed on every turn, its token consumption grows superlinearly, roughly quadratically, during long sessions. In identical, controlled tasks, Claude Code routinely consumes as much as 300% to 400% more tokens than OpenAI Codex for functionally equivalent outputs.
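Verbosity compounds because a conversational agent typically re-sends the accumulated history with every turn, so the total input it processes grows roughly with the square of the session length. The toy model below assumes a fixed number of new tokens per turn and ignores prompt caching, which in practice discounts repeated prefixes; `cumulative_input_tokens` is an illustrative helper.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens processed when each turn re-sends the full history."""
    history = 0
    total = 0
    for _ in range(turns):
        history += tokens_per_turn  # the new message is appended to the conversation
        total += history            # and the whole history is processed again
    return total
```

With 1,000 new tokens per turn, 10 turns process 55,000 input tokens while 20 turns process 210,000: doubling the session length roughly quadruples the input volume.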

A widely cited evaluation involving the cloning of a complex UI layout from a Figma design starkly illustrates the token gap between the two tools. In this scenario, Claude Code consumed over 6.2 million tokens, heavily analyzing the CSS layout logic and documenting its steps. Codex completed the same functional UI requirement using only 1.5 million tokens.

This massive efficiency gap fundamentally alters the financial equation for enterprise adoption. For engineering teams utilizing direct API integration, pricing is calculated per million tokens. In early 2026, the Claude Opus 4.6 API commands a premium rate of $5 per million input tokens and $25 per million output tokens.

Conversely, Codex utilizes leaner pricing models. For a development team executing thousands of automated API requests per month, Codex’s token efficiency can result in tens of thousands of dollars in monthly operational savings. For organizations already feeling the pressure of rising LLM bills, strategies like LLM cost optimization and “token tax” reduction become just as important as raw model accuracy.
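Translating the Opus 4.6 rates quoted above into dollars requires splitting a task's tokens into input and output, because output tokens cost five times more. Neither the Figma test nor this article reports that split, so the sketch below takes the output share as an explicit assumption. `api_cost` is an illustrative helper, and no Codex rates are assumed because none are given here.

```python
OPUS_INPUT_PER_M = 5.00    # USD per million input tokens, as quoted in the text
OPUS_OUTPUT_PER_M = 25.00  # USD per million output tokens, as quoted in the text


def api_cost(total_tokens: int, output_share: float,
             input_rate: float = OPUS_INPUT_PER_M,
             output_rate: float = OPUS_OUTPUT_PER_M) -> float:
    """Dollar cost of a task, given the (assumed) fraction of tokens that were output."""
    if not 0.0 <= output_share <= 1.0:
        raise ValueError("output_share must be between 0 and 1")
    output_tokens = total_tokens * output_share
    input_tokens = total_tokens - output_tokens
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000
```

At an assumed 20% output share, the 6.2-million-token Figma run would cost about $55.80 at Opus rates; if every token were billed as input, the floor would be $31.00. The token ratio itself, 6.2M / 1.5M ≈ 4.1x, sits within the 300% to 400% overhead cited earlier.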

Subscription Tier Strategies

Recognizing the immense resource demands of agentic workflows, both Anthropic and OpenAI have dramatically restructured their subscription tiers in 2026. The traditional $20 per month flat rate (Claude Pro and ChatGPT Plus) remains available, but heavy developers utilizing multi-agent orchestrations quickly exhaust these baseline limits within hours.

To accommodate high-velocity engineering, both platforms now offer aggressive upper-tier plans:

| Tier Level | Monthly Cost | OpenAI Codex Offerings | Claude Code Offerings | Target Persona |
|---|---|---|---|---|
| Entry / Light | $8-$20 | ChatGPT Go ($8) / Plus ($20) | Free / Pro ($20) | Individual developers, light daily usage. |
| Professional | $100 | ChatGPT Pro (5x usage limits) | Claude Max 5x | Senior engineers managing long-running coding sessions. |
| Enterprise / Heavy | $200 | Pro 20x | Claude Max 20x | Platform architects orchestrating massive agent teams and parallel workflows. |

Pricing data reflects vendor structures as of March 2026.

Strategic CFOs evaluating these platforms must look beyond the initial subscription cost. While Claude’s flat subscription may initially appear predictable, its roughly 4x token burn rate means that users are significantly more likely to hit rate limits, requiring organizations to purchase supplementary API credits to maintain velocity.

For pure cost-to-code efficiency, especially when managing repetitive CI/CD automation or large-scale boilerplate generation, OpenAI Codex delivers substantially superior financial economics.

Accelerating C# 15 and .NET 10 Development Cycles

The integration of agentic AI becomes a true competitive differentiator when applied to the modernization of deeply entrenched enterprise tech stacks. The release of .NET 10 and C# 15 in 2026 introduced profound architectural capabilities that directly benefit from—and often require—the holistic system understanding provided by advanced AI tools.

Microsoft’s .NET 10 release prominently featured the new AI-Agent Development Framework, allowing developers to build intelligent workflows and conversational agents with patterns similar to constructing standard web APIs.

However, the most critical updates occurred deep within the runtime environment. .NET 10 introduced major improvements to JIT inlining, loop inversion, struct argument handling (where small structs are promoted directly into registers to reduce memory overhead), and native support for AVX 10.2 vector operations.

Concurrently, C# 15 introduced highly anticipated language features, most notably the implementation of strict union types and collection expression arguments.

Effectively leveraging these advanced, low-level architectures across a massive legacy application requires an intelligence capable of orchestrating highly interdependent changes. Here, the distinct methodologies of Claude Code and Codex become highly visible.

Claude Code: The Monolithic Modernizer

When an enterprise organization decides to modernize a monolithic, legacy C# application to a modern .NET 10 microservices architecture, Claude Code is the stronger choice.

Consider the introduction of C# 15 union types. Historically, when a method needed to return one of several possible types, developers relied on imperfect, bloated options—such as returning a generic object, throwing complex exceptions, or utilizing heavy inheritance hierarchies.

The new union keyword allows a variable to be exactly one of a fixed set of types, enforced by compiler-driven exhaustive pattern matching.

Refactoring a legacy application to utilize union types is a monumental task. If a union type is implemented in the core domain layer, the corresponding exhaustive switch expressions must be perfectly mapped and updated across hundreds of files in the presentation and infrastructure layers. Claude Code’s one-million-token context window allows it to ingest the entire solution architecture simultaneously.
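Whether an entire solution fits in one window is simple arithmetic once a tokens-per-line ratio is known. The 25,000 to 30,000 line figure cited earlier implies roughly 33 to 40 tokens per line (1,000,000 / 30,000 ≈ 33; 1,000,000 / 25,000 = 40). Real ratios vary with language, tokenizer, and formatting, so measuring a sample of your own repository is the safer input. `lines_that_fit` and its `reserved_tokens` parameter, which sets aside room for instructions and model output, are illustrative.

```python
def lines_that_fit(context_tokens: int, tokens_per_line: float,
                   reserved_tokens: int = 0) -> int:
    """Rough number of source lines that fit after reserving room for other content."""
    if tokens_per_line <= 0:
        raise ValueError("tokens_per_line must be positive")
    usable = max(context_tokens - reserved_tokens, 0)
    return int(usable // tokens_per_line)
```

At 10 tokens per line with nothing reserved, the same window would hold about 100,000 lines; the gap between the two estimates is why measuring matters.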

Utilizing its “Plan Mode,” Claude Code maps out the cascading dependencies, explicitly defining how a union type representing a data fetch outcome (for example, Success | NotFound | Unauthorized) will ripple through the ASP.NET Core middleware before executing the syntax changes.

Furthermore, to take advantage of .NET 10’s struct argument handling and safe stack allocation of small arrays to reduce Garbage Collection (GC) pressure, the AI must understand deep memory management implications. Claude’s tendency toward verbose, analytical reasoning makes it more likely that these low-level optimizations are applied safely across the domain.

OpenAI Codex: The Rapid Agile Deployer

Conversely, for organizations focused on rapid agile deployment and continuous integration, OpenAI Codex functions as a high-velocity execution engine. Firms specializing in custom software development and application management, such as Baytech Consulting, depend on delivering enterprise-grade quality on strict timelines. A standard modern deployment stack often incorporates .NET 10 backends connected to PostgreSQL databases, containerized via Docker, orchestrated through Kubernetes, and deployed onto high-performance infrastructure like Harvester HCI or OVHcloud servers.

Setting up, configuring, and maintaining this complex infrastructure requires immense amounts of repetitive boilerplate. Codex’s cloud-delegated sandboxing and parallel agent orchestration are perfectly suited for this environment.

A senior platform engineer can launch the Codex command center and simultaneously dispatch multiple autonomous tasks:

- Agent 1: Scaffold the Entity Framework Core migrations for the new C# 15 union types and update the corresponding Postgres schemas.

- Agent 2: Write the optimized Dockerfiles required to containerize the .NET 10 application, ensuring AVX 10.2 hardware acceleration flags are correctly set for the Linux host.

- Agent 3: Generate the necessary Kubernetes deployment manifests and Rancher configurations for deployment onto the local Harvester HCI cluster.

Because Codex runs these tasks asynchronously in isolated cloud sandboxes, the human engineer is free to review the overarching architecture while the AI handles the granular implementation details.
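The dispatch pattern above is ordinary concurrent fan-out. The sketch below shows its shape with `asyncio.gather`; `run_agent` is a hypothetical stand-in that merely sleeps, since no real Codex dispatch API is assumed, and the task descriptions paraphrase the three agents listed above.

```python
import asyncio


async def run_agent(name: str, task: str) -> str:
    """Hypothetical stand-in for handing one task to a cloud agent."""
    await asyncio.sleep(0.01)  # simulate remote work; no real API is called
    return f"{name}: finished {task}"


async def dispatch_all(tasks: dict[str, str]) -> list:
    """Start every agent concurrently and collect results in submission order."""
    return await asyncio.gather(
        *(run_agent(name, task) for name, task in tasks.items()),
        return_exceptions=True,  # a failing agent yields its exception instead of hiding the rest
    )


TASKS = {
    "agent-1": "EF Core migrations and Postgres schema updates",
    "agent-2": "Dockerfiles for the .NET 10 service",
    "agent-3": "Kubernetes manifests and Rancher configuration",
}

if __name__ == "__main__":
    for result in asyncio.run(dispatch_all(TASKS)):
        print(result)
```

`return_exceptions=True` is a deliberate choice: without it, `gather` propagates the first error and the caller loses the other agents' results, whereas here the engineer reviews every outcome, successes and failures alike.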

Codex executes these parallel workflows with extraordinary speed and minimal token overhead, making it the premier tool for operationalizing complex .NET 10 environments at scale. Teams that lean into this style of “factory” delivery often pair it with formal Agile delivery methods to keep iterations short and feedback tight.

Overcoming Infrastructure Challenges: Azure DevOps On-Premises

One of the most complex hurdles for B2B enterprises—particularly those in highly regulated sectors such as real estate, finance, healthcare, and telecommunications—is integrating external AI coding assistants into deeply secured, on-premises infrastructure. While agile, cloud-native startups can effortlessly connect AI agents to public GitHub or GitLab cloud repositories, established enterprise firms frequently maintain their proprietary source code, sprint planning work items, and CI/CD pipelines strictly behind robust corporate firewalls.

Firms leveraging comprehensive, heavily secured technology stacks—such as those utilizing Azure DevOps On-Premises, local SQL Server deployments, and pfSense firewalls—require sophisticated architectural solutions to prevent data exfiltration while still enabling the massive productivity gains promised by AI.

The Model Context Protocol (MCP) and Claude Code Integration

To bridge the critical gap between cloud-based Large Language Models and secure on-premises infrastructure, the industry has rapidly adopted the open-source Model Context Protocol (MCP). For Anthropic's ecosystem, the deployment of the Azure DevOps MCP Server acts as a highly secure, localized conduit, enabling Claude Code to interact directly with an organization's internal, firewalled network.

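This architectural implementation is highly transformative for enterprise security. The MCP server runs entirely locally within the secure corporate environment, so the Azure DevOps instance itself never has to be exposed to the internet. Any work item, commit, or log content the agent reads through the server does, however, become part of the prompt sent to the model provider, so the local server narrows rather than eliminates data egress.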

It utilizes standard Windows Authentication (ideal for Active Directory domains) or highly scoped Personal Access Tokens (PAT) to ensure the AI agent operates under strict identity governance and zero-trust principles.

Through the stdio transport of the MCP protocol, Claude Code can autonomously perform a range of on-premises actions:

- Work Item Triage: The agent can autonomously query the local Azure DevOps sprint board, reading Epics, Features, and User Stories to understand the precise business requirements before generating C# code.

- Git Repository Orchestration: Claude Code can read on-prem commit histories, automatically link specific commits (for example, a3f5c2d) to their corresponding Azure DevOps work items, and generate Pull Requests entirely within the local environment.

- Pipeline Automation: Upon committing code, the agent can trigger on-premises build pipelines and immediately retrieve failure logs if a .NET 10 compilation error occurs, creating a seamless, automated debugging loop entirely behind the pfSense firewall.
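Under the stdio transport, each MCP message is a single line of JSON-RPC 2.0 written to the server's standard input. The sketch below serializes a `tools/call` request; the tool name `wit_get_work_item` and its arguments are hypothetical placeholders for whatever tools the Azure DevOps MCP server actually exposes, and the `initialize` handshake that must precede any tool call is omitted.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC request ids must not repeat within a session


def tool_call_line(tool: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as one newline-terminated JSON-RPC line."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    # json.dumps escapes embedded newlines, so the message stays on a single line.
    return json.dumps(request, separators=(",", ":")) + "\n"


# Hypothetical tool name and arguments; a client writes this line to the server's stdin.
line = tool_call_line("wit_get_work_item", {"project": "Payments", "id": 4211})
```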

OpenAI Codex and the config.toml Paradigm

OpenAI Codex approaches on-premises integration through its Visual Studio extension ecosystem and a highly configurable config.toml architecture. For developers utilizing Visual Studio 2022 or VS Code connected to an Azure DevOps environment, Codex requires specific API endpoint configurations to function securely within a hybrid model.

Codex fundamentally operates as a cloud-based entity. Therefore, when local commands are executed, the agent operates within a constrained boundary that relies heavily on environment variables. If an enterprise utilizes a private, localized endpoint for the OpenAI API (such as an Azure Foundry deployment), platform engineers must rigorously manage the AZURE_OPENAI_API_KEY and ensure that all terminal sessions inherit the correct authentication variables prior to launching the IDE.

While highly effective, configuring Codex for strict on-premises interaction often requires more manual infrastructure orchestration and explicit, auditable configuration switching compared to the relatively seamless, automated protocol negotiation provided by the Anthropic MCP implementation.

For firms where explicit, granular control over every API call is mandated by compliance regulations, Codex provides the necessary levers. For teams seeking rapid, integrated project management driven by the AI itself, Claude's MCP approach offers less friction.

Data Privacy, Security, and Enterprise Guardrails

The widespread adoption of agentic AI coding assistants introduces unprecedented and highly complex security paradigms for Chief Information Security Officers (CISOs). Handing total codebase context, proprietary intellectual property, and detailed system architecture over to an external AI assistant requires executive leadership to deeply scrutinize vendor data privacy policies, underlying architectural isolation mechanisms, and the threat vectors introduced by autonomous digital workers.

The OpenAI Sandbox: OS-Level Kernel Protection

OpenAI Codex addresses the profound security risks of agentic coding through rigorous, platform-native sandboxing.

The primary threat model in this ecosystem is not necessarily a malicious actor, but rather an autonomous agent inadvertently executing destructive local commands (for example, rm -rf /) or unintentionally exfiltrating sensitive environment variables via unauthorized network access during its problem-solving process.

To comprehensively mitigate this risk, Codex does not rely on fragile application-layer restrictions. Instead, it enforces strict operational boundaries at the OS kernel level.

- On macOS environments, Codex natively utilizes the highly restrictive built-in Seatbelt framework.

- On Windows environments, it leverages the native Windows sandbox architecture when the agent executes commands within PowerShell.

- On Linux and WSL2 environments, Codex relies on bubblewrap (bwrap) to run the agent inside unprivileged user namespaces, ensuring the agent cannot elevate its permissions.

This architecture provides a clear and robust trust model. Engineering leaders can configure the Codex sandbox into specific, fixed modes. The read-only mode guarantees that Codex can only inspect files for analysis without any modification capabilities.

The default workspace-write mode tightly restricts the agent, permitting file edits and local command execution exclusively within the designated, isolated repository folder.

This OS-level containment drastically reduces the cognitive burden and “approval fatigue” associated with human developers manually verifying every minor AI-generated command, allowing for true autonomous delegation. In parallel, many teams are adding dedicated AI firewalls and middleware layers to guard against prompt injection and data leakage at the application layer.
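The read-only and workspace-write modes described above can be expressed as a simple path policy. The toy model below is only that: Codex enforces these boundaries at the OS level (Seatbelt, the Windows sandbox, bubblewrap), which also covers cases such as symlinks that a lexical check like this cannot see. The function name and signature are hypothetical.

```python
import posixpath


def is_write_allowed(mode: str, workspace: str, target: str) -> bool:
    """Toy model of the sandbox write policy; real enforcement happens below the application."""
    if mode == "read-only":
        return False  # inspection only: every write is refused
    if mode == "workspace-write":
        if not posixpath.isabs(workspace):
            raise ValueError("workspace must be an absolute path")
        root = posixpath.normpath(workspace)
        # Resolve relative targets against the workspace and collapse ".." segments lexically.
        path = posixpath.normpath(posixpath.join(root, target))
        return posixpath.commonpath([root, path]) == root
    raise ValueError(f"unknown sandbox mode: {mode!r}")
```

Normalizing before comparing matters: a naive prefix check would accept `/repo/../etc/passwd`, and a plain `startswith("/repo")` test would also accept the unrelated `/repo2` directory.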

Anthropic's Safety Posture and Supply Chain Realities

Anthropic has historically differentiated itself by maintaining a highly prominent safety-first posture, heavily prioritizing model alignment, cautious data generation, and strict adherence to internal ethical guidelines.

This foundational philosophy makes Anthropic’s products, including Claude Cowork and Claude Code, inherently appealing to highly regulated industries like healthcare and finance.

Anthropic’s enterprise offerings actively reflect this, featuring robust Role-Based Access Controls (RBAC) and strict group spend limits, which directly satisfy the governance requirements of IT procurement teams.

However, the “developer-in-the-loop” architectural model of Claude Code relies primarily on application-layer hooks and programmable validation logic (configured through CLAUDE.md and Claude Code's settings files) rather than the strict kernel-level sandboxing utilized by Codex.

While this allows for significantly more flexible workflow orchestration and the encoding of highly specific corporate business rules directly into the agent's behavior, it presents a fundamentally different risk profile when executing untrusted or highly experimental code.

Furthermore, a critical security incident in late March 2026 starkly illuminated the profound complexities of securing modern AI development toolchains. A standard DevOps packaging error within the @anthropic-ai/claude-code npm package resulted in a massive 57-megabyte source map file being inadvertently shipped to public production environments.

Within hours of the release, the entire proprietary source architecture of Claude Code—comprising roughly 512,000 lines of highly sophisticated TypeScript across 1,900 files—was exposed, downloaded, and mirrored across thousands of public GitHub repositories globally.

While this specific leak did not compromise direct user data, proprietary enterprise codebases, or the underlying neural weights of the Claude large language models, the incident served as a severe industry warning. It decisively proved that even the most safety-focused, heavily capitalized AI vendors remain inherently vulnerable to standard software supply chain vulnerabilities and routine deployment errors.

For B2B executives, the takeaway is clear: data privacy and security in the age of AI engineering is a shared, ongoing responsibility. Enterprises must actively disable telemetry where proprietary code is concerned, mandate zero-data-retention API agreements, and ensure that AI agents operate within highly restricted network architectures monitored by advanced intrusion detection systems.

Developer Productivity Realities in 2026: The ROI Reality Check

As AI coding assistants transition from experimental novelties to heavily embedded enterprise toolchains, evaluating the Return on Investment (ROI) requires executives to aggressively separate vendor marketing hype from empirical telemetry and longitudinal data analysis.

Dispelling the Myth of the 10x Engineer

Throughout 2024 and 2025, aggressive social media campaigns and vendor marketing frequently suggested that the adoption of AI tools would instantly create “10x engineers,” yielding 200% to 300% immediate productivity gains.

Comprehensive, longitudinal data collected from vast datasets throughout early 2026 paints a far more modest, yet still highly valuable, picture of AI's actual impact.

A longitudinal study of 400 enterprise companies, tracking engineering output between November 2024 and February 2026, found that while AI tool usage rose by an average of 65% across engineering departments, pull request (PR) throughput increased by only 9.97%.

This roughly 10% gain is consistent with a basic reality of software development: typing code was never the primary bottleneck in engineering.

Professional software engineering consists largely of requirements gathering, system design, cross-functional architectural alignment, security auditing, and team communication. As one senior developer summarized the reality of AI integration: “The easy tasks are a little easier. The tedious tasks are a little less annoying. A four-day task might take three. But that doesn't mean I'm shipping 3x more PRs.”

However, it would be a strategic error to dismiss a 10% gain. Scaled across an engineering department of dozens or hundreds of developers, a 10% throughput increase combined with an average of 3.6 hours saved per developer per week is a significant financial and operational advantage.
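To make the scaling argument concrete, the short calculation below converts the 3.6 hours saved per developer per week into annual hours and full-time-equivalent (FTE) engineers. The 46 working weeks per year and the 40-hour week are assumptions chosen for illustration, not figures from the study; adjust them to your own calendar.

```python
HOURS_SAVED_PER_DEV_PER_WEEK = 3.6  # average quoted in the productivity data above


def capacity_recovered(developers, hours_saved_per_week=HOURS_SAVED_PER_DEV_PER_WEEK,
                       working_weeks=46, fte_hours_per_week=40.0):
    """Return (hours recovered per year, equivalent number of full-time engineers).

    working_weeks and fte_hours_per_week are illustrative assumptions.
    """
    hours_per_year = developers * hours_saved_per_week * working_weeks
    fte_equivalent = hours_per_year / (fte_hours_per_week * working_weeks)
    return hours_per_year, fte_equivalent


if __name__ == "__main__":
    for team_size in (50, 100, 500):
        hours, fte = capacity_recovered(team_size)
        print(f"{team_size:>4} developers: {hours:,.0f} hours/year, about {fte:.1f} FTE")
```

Because working weeks cancel out of the FTE figure, the result reduces to 3.6 / 40 = 9% of each developer's time: under these assumptions a 100-person department recovers about 16,560 hours a year, or roughly nine engineers' worth of capacity, before counting any throughput gain.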

Telemetry also indicates that developers who use AI tools daily merge 60% more PRs than non-users. Because this compares users with non-users rather than the same developers before and after adoption, it suggests, rather than proves, that habitual, deeply integrated use compounds velocity over time.

By reducing tedious boilerplate work, AI tools free senior engineers to spend more of their time on complex, high-value architectural logic. To capture that value, many leaders are reframing how they measure output, using developer productivity frameworks such as DORA, SPACE, and Flow instead of legacy vanity metrics.
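Two of DORA's key metrics are deployment frequency and lead time for changes. As a minimal sketch of what measuring delivery outcomes, rather than raw activity, looks like, the code below computes both from a list of deployment records. The `Deployment` record shape is a hypothetical schema; real data would come from your version control and CI/CD systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class Deployment:
    """Hypothetical record: when a change was committed and when it reached production."""
    committed_at: datetime
    deployed_at: datetime


def deployment_frequency(deployments, window_days):
    """Average number of production deployments per day over the observation window."""
    return len(deployments) / window_days


def median_lead_time(deployments):
    """Median time from commit to production, one common way to report lead time for changes."""
    return median(d.deployed_at - d.committed_at for d in deployments)


if __name__ == "__main__":
    start = datetime(2026, 1, 5, 9, 0)
    history = [
        Deployment(start, start + timedelta(hours=1)),
        Deployment(start, start + timedelta(hours=3)),
        Deployment(start, start + timedelta(hours=2)),
    ]
    print("deploys/day:", round(deployment_frequency(history, window_days=7), 2))
    print("median lead time:", median_lead_time(history))
```

Reporting the median rather than the mean keeps one unusually slow change from dominating the lead-time figure.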

| Productivity Dimension | 2026 Industry Average Impact | Strategic Implication |
|---|---|---|
| Pull Request (PR) Throughput | ~9.97% increase | Modest but consistent acceleration of feature delivery pipelines. |
| Time Saved per Developer | ~3.6 hours per week | Recouped time is most valuable when redirected to strategic architecture. |
| Code Quality (AI-Assisted) | 1.7x more issues in raw AI-generated PRs | Mandates strict human review; AI output cannot bypass QA. |
| Professional Daily Adoption | ~51% of developers | AI usage is no longer optional; it is a foundational baseline skill. |

Data aggregated from 2026 enterprise developer productivity surveys (DX, GitClear, Stack Overflow).

Conclusion: Orchestrating the Digital Workforce

The technological advantage in software engineering as of 2026 no longer belongs exclusively to the teams capable of writing syntax the fastest; it belongs to the organizations that can most effectively, securely, and creatively orchestrate autonomous digital agents.

The decision between Anthropic’s Claude Code and OpenAI’s Codex is not binary. Leading engineering organizations are adopting a multi-model, hybrid workflow that draws on the distinct structural advantages of both platforms to maximize return on investment.

For CTOs and technical leaders, the path forward is to deploy each platform according to the nature of the engineering task:

- Deploy Claude Code for Architecture, Complexity, and Modernization: For complex greenfield projects, deep multi-file refactoring, or the large task of migrating legacy monoliths to .NET 10 microservices, Claude Code is the stronger choice. Its one-million-token context window lets it ingest and map large portions of an enterprise repository in a single session, and its explicit, step-by-step reasoning reduces cascading architectural errors. Engineering leadership should equip senior technical leads, principal engineers, and system architects with high-tier Claude Max subscriptions to accelerate initial system design and complex problem-solving. This is especially powerful when paired with broader legacy system modernization strategies that avoid risky rip-and-replace rewrites.

- Deploy OpenAI Codex for Scale, Velocity, and Automated Infrastructure: For environments focused on rapid agile deployments, routine bug remediation, automated test generation, and DevOps infrastructure management, OpenAI Codex offers exceptional value. Its OS-level sandboxing, parallel cloud execution, and high token efficiency make it a strong execution and review engine. CFOs will likely favor Codex’s efficient API cost structure, which makes it a natural fit for deep integration into automated CI/CD pipelines and high-volume deployment scenarios where rapid iteration matters more than detailed explanatory output.

- Bridge the On-Premises Gap with Robust Architectural Protocols: If an organization relies on secured, self-hosted infrastructure to maintain compliance, connecting AI agents through self-hosted Model Context Protocol (MCP) servers is critical. Firms that deliver customized technology solutions from protected environments, such as Azure DevOps On-Prem, isolated PostgreSQL databases, and local Harvester HCI clusters, can use MCP to give AI agents secure, firewall-protected access to proprietary data. This preserves agility without compromising enterprise compliance requirements or exposing intellectual property to the public cloud. Many enterprises are also experimenting with internal AI automation and agentic workflows to connect these pieces into end-to-end digital workers.
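Taken together, the three recommendations above amount to a routing policy. The sketch below encodes them as a lookup table so the policy is explicit and reviewable. The task categories, the agent labels `"claude-code"` and `"codex"`, and the `needs_internal_mcp` flag are hypothetical names for illustration, not identifiers from either vendor's API.

```python
from enum import Enum, auto
from typing import NamedTuple


class TaskKind(Enum):
    ARCHITECTURE = auto()
    MULTI_FILE_REFACTOR = auto()
    LEGACY_MIGRATION = auto()
    BUG_FIX = auto()
    TEST_GENERATION = auto()
    CI_CD_AUTOMATION = auto()


class Assignment(NamedTuple):
    agent: str                # hypothetical label for the tool that should take the task
    needs_internal_mcp: bool  # route data access through a self-hosted MCP server


# Deep-context design and modernization work goes to Claude Code;
# high-volume, sandboxed execution work goes to Codex.
ROUTING_TABLE = {
    TaskKind.ARCHITECTURE: "claude-code",
    TaskKind.MULTI_FILE_REFACTOR: "claude-code",
    TaskKind.LEGACY_MIGRATION: "claude-code",
    TaskKind.BUG_FIX: "codex",
    TaskKind.TEST_GENERATION: "codex",
    TaskKind.CI_CD_AUTOMATION: "codex",
}


def route(kind: TaskKind, touches_on_prem_data: bool = False) -> Assignment:
    """Pick an agent for a task; any access to on-prem data goes through internal MCP."""
    return Assignment(ROUTING_TABLE[kind], touches_on_prem_data)


if __name__ == "__main__":
    print(route(TaskKind.LEGACY_MIGRATION))
    print(route(TaskKind.BUG_FIX, touches_on_prem_data=True))
```

Keeping the mapping in data rather than in scattered conditionals lets a platform team adjust the policy as the tools evolve without touching the calling code.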

Ultimately, by deeply understanding the distinct architectural philosophies, economic realities, and security profiles of both Claude Code and OpenAI Codex, B2B executives can purposefully align these powerful tools with their unique engineering workflows, driving sustainable, compounding value across their entire technological infrastructure.

Frequently Asked Questions

Should development teams rely on local codebase integration or isolated cloud sandboxes for AI coding?

The right choice depends on the organization's security posture, compliance mandates, and the nature of the engineering task. Local codebase integration, typified by Claude Code's terminal-centric approach, gives the AI agent immediate, deep awareness of the developer's customized local environment. That makes it well suited to interactive, high-context refactoring in which the agent must continuously analyze local dependencies. Isolated cloud sandboxes, the model primarily used by OpenAI Codex, are better suited to asynchronous task delegation and provide a stronger security baseline. A sandbox prevents the autonomous agent from accidentally executing destructive or unauthorized commands on the developer's host machine, and it lets developers run several complex coding tasks in parallel without exhausting local computing resources. For high-velocity, highly automated environments focused on scale, sandboxes provide the safest and most efficient execution boundaries.

How do Claude Code and OpenAI Codex handle complex system refactoring differently?

Claude Code relies on an interactive “developer-in-the-loop” planning methodology combined with a one-million-token context window. It approaches refactoring deliberately: it explains its reasoning, maps file dependencies across the repository, and asks the developer for guidance at critical architectural decision points before executing code. This methodology produces highly accurate code for intricate tasks, as reflected in its stronger SWE-bench scores. OpenAI Codex, in contrast, operates primarily as a fast, autonomous delegate. It consumes significantly fewer tokens and works through files quickly inside its isolated sandbox. While it is efficient at writing standard boilerplate or applying straightforward, wide-ranging syntax updates, it occasionally produces brittle architectural code in highly complex scenarios. When using Codex for deep refactoring, the developer must therefore act as a strict post-generation code reviewer to ensure structural integrity.

What are the data privacy considerations when handing code context over to an external AI assistant?

Handing proprietary codebase context and system architecture to any external large language model introduces serious risks of data leakage and intellectual property exposure. Organizations should use enterprise-tier agreements with strict zero-data-retention policies that explicitly prevent the vendor from using the firm's code to train future commercial models. On the technical side, local OS-level sandboxing (such as Codex’s integration of macOS Seatbelt or Linux bubblewrap) enforces, at the operating-system level, which files and resources the agent can access while it runs, making it far harder for the agent to exfiltrate data from unauthorized local directories. For highly sensitive, air-gapped, or regulated environments, self-hosted components such as the Azure DevOps MCP Server let the AI interact with Git repositories and sprint work items from behind the corporate firewall, significantly reducing the external surface area for data exposure and supporting continuous compliance. In parallel, many enterprises are pairing these controls with internal AI compliance bots that continuously scan for policy violations before they become incidents.
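The core rule behind a workspace-scoped sandbox can be stated as a path check: a write is allowed only if, after resolving `..` segments and symbolic links, the target lies inside an approved root. The function below is a hypothetical, user-space illustration of that policy logic only. It is not how Codex implements sandboxing; there, enforcement is delegated to operating-system mechanisms such as Seatbelt or bubblewrap, which matters because a check running inside the agent's own process could be bypassed by the agent.

```python
from pathlib import Path


def is_write_allowed(target, writable_roots):
    """Return True if `target` resolves to a location inside one of `writable_roots`.

    resolve() normalizes ".." segments and follows symlinks, so a path such as
    "workspace/../secrets" is judged by where it really points, not how it is spelled.
    """
    resolved = Path(target).resolve()
    for root in writable_roots:
        root_path = Path(root).resolve()
        # Comparing whole path components (not string prefixes) means a sibling
        # directory like "workspace2" does not count as inside "workspace".
        if resolved == root_path or root_path in resolved.parents:
            return True
    return False


if __name__ == "__main__":
    roots = ["/tmp/agent-workspace"]
    print(is_write_allowed("/tmp/agent-workspace/src/app.py", roots))
    print(is_write_allowed("/tmp/agent-workspace/../secrets/key.pem", roots))
```

The first call is inside the workspace; the second escapes it through `..` and is rejected.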


About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.