LPU Unleashed: How Groq is Rewriting AI Inference

March 25, 2026 / Bryan Reynolds

What is Groq.com? The Definitive Enterprise Guide to LPU Architecture, Models, and Agentic AI Inference

The question of how to effectively and economically scale artificial intelligence is echoing through the boardrooms of modern B2B enterprises. Over the past few years, the narrative surrounding large language models (LLMs) has shifted dramatically. The initial phase of awe and experimentation has concluded, replaced by a rigorous, numbers-driven mandate for positive Return on Investment (ROI) and seamless production deployment. Generative AI is no longer a novelty; it is a critical infrastructure layer. However, as organizations transition these sophisticated models from the research laboratory into customer-facing production environments, a fundamental hardware bottleneck has emerged. The foundational AI models of 2026 exhibit extraordinary capabilities in complex reasoning, dynamic code generation, and nuanced language comprehension, but deploying these massive computational engines at a global scale exposes the severe limitations of legacy semiconductor architecture.

Traditional Graphics Processing Units (GPUs), which were initially designed for parallel rendering in video games and later brilliantly adapted for the mathematics of training massive neural networks, fundamentally struggle with the sequential, token-by-token nature of AI inference. The result of this architectural mismatch is variable latency, exorbitant operational costs, and the infamous "memory wall" that restricts real-time application performance. For a visionary Chief Technology Officer (CTO) attempting to build a real-time conversational agent, or a strategic Chief Financial Officer (CFO) trying to forecast cloud computing expenses, the GPU bottleneck represents a massive liability.

This critical friction point has catalyzed the rise of specialized, purpose-built inference hardware. At the absolute forefront of this paradigm shift is Groq.com, an AI infrastructure company that has fundamentally redesigned the underlying physics of machine learning compute. By introducing a new category of processor known as the Language Processing Unit (LPU), Groq promises deterministic execution, sub-millisecond latency, and linear scaling, effectively addressing the exact hardware pain points that plague traditional GPU-based inference systems.

For executives steering B2B firms in industries such as advertising, finance, high-tech software, and healthcare, understanding the nuances of the Groq ecosystem is no longer optional. The ability to deploy autonomous AI agents at lightning speed and minimal cost is a profound competitive advantage. This exhaustive research report delivers a comprehensive, transparent analysis of the Groq platform. It explores the foundational engineering of the LPU architecture, evaluates the diverse catalog of open-weight models available on GroqCloud, dissects the revolutionary "Compound" built-in tooling designed for agentic workflows, and provides a rigorous, data-backed cost-benefit analysis comparing Groq to industry incumbents like OpenAI, Cerebras, and NVIDIA. To put Groq in context with broader platform choices, it also connects to how a strategic .NET-first AI stack can help enterprises operationalize this kind of infrastructure safely.

The Hardware Revolution: Deconstructing the Groq LPU

To comprehensively answer what Groq.com is, one must first examine its origins and the radical engineering philosophy that separates it from legacy semiconductor giants. The company was founded in 2016 by Jonathan Ross, a key architect behind Google’s original Tensor Processing Unit (TPU) project. While at Google, Ross recognized a critical divergence in AI computation: the hardware requirements for training an AI model (processing massive datasets simultaneously to adjust weights) are fundamentally different from the hardware requirements for inference (running a trained model to generate sequential outputs for an end-user). Groq was established with a singular, unapologetic focus: optimizing AI inference.

Shattering the GPU Memory Wall

In modern data centers, the defining performance constraint for Large Language Models (LLMs) is universally recognized as the "Memory Wall". Traditional GPUs rely on High Bandwidth Memory (HBM) located physically off-chip. When an LLM generates text, it must fetch massive, gigabyte-scale weight matrices from this external memory for every single token (word or sub-word) it produces. This constant, high-volume data movement creates a severe physical bottleneck; the immensely powerful compute cores of the GPU sit idle for precious fractions of a second, waiting for data to traverse the bus from memory. This waiting period wastes massive amounts of energy and introduces intolerable latency into real-time applications.

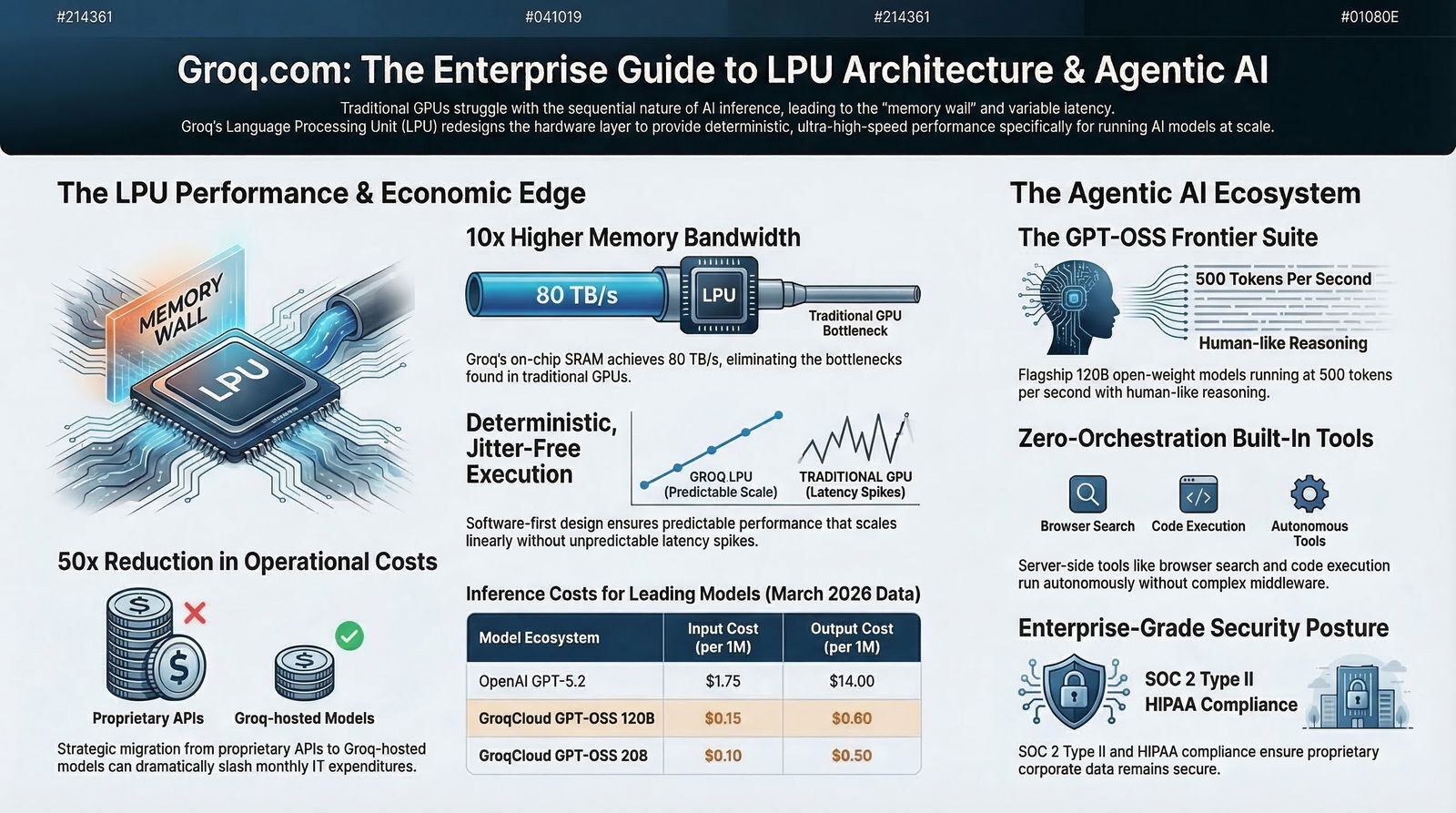

Groq’s Language Processing Unit (LPU) abandons this traditional architecture entirely. Instead of relying on off-chip HBM, the LPU integrates hundreds of megabytes of Static Random-Access Memory (SRAM) directly onto the processor chip alongside the compute logic. SRAM is inherently vastly faster than HBM, serving as the primary weight storage rather than merely a temporary cache. While a standard, top-tier GPU's off-chip memory bandwidth clocks in at approximately 8 terabytes per second, Groq's on-chip SRAM achieves staggering memory bandwidths upwards of 80 terabytes per second.

This 10x architectural advantage allows the LPU to feed its compute units at maximum speed, entirely bypassing the memory bottleneck. The data movement happens instantaneously on the silicon, driving unprecedented Time-to-First-Token (TTFT) metrics and overall throughput speeds that frequently exceed human reading capabilities by an order of magnitude. From an environmental and operational perspective, this localized data movement also renders the LPU up to 10x more energy-efficient than traditional GPUs for inference workloads. Furthermore, the LPU and its accompanying GroqRack systems are air-cooled by design, eliminating the need for the complex, hyper-expensive liquid cooling infrastructure increasingly required by modern GPU clusters, thereby drastically cutting operational overhead.

Software-First Design and the Power of Determinism

Perhaps the most radical departure from conventional chip design is Groq’s "software-first" philosophy. The Groq engineering team designed the compiler's software architecture before they laid out a single transistor for the physical silicon. The Groq compiler has complete, omniscient control over the hardware, enabling a concept known as static scheduling.

In traditional GPU systems, execution is dynamic and speculative. The hardware constantly makes educated guesses about which data will be needed next. When it guesses wrong, or when multiple users access the system simultaneously, the GPU must flush its cache and retrieve new data, leading to variable performance, jitter, and unpredictable slowdowns. This is particularly problematic under heavy concurrent load or when utilizing batch processing techniques designed to maximize GPU utilization at the expense of individual user latency.

Groq’s compiler operates entirely differently. It accepts workloads from various machine learning frameworks and maps the entire program across one or multiple LPUs before execution even begins. Every single data movement, memory allocation, and compute cycle is planned down to the exact nanosecond. There is no speculation, no dynamic routing, and no waiting for resources.

This meticulous orchestration yields deterministic execution. For enterprise applications, determinism equates directly to reliability. The performance observed by a developer during a single-user prototype test on a laptop is the exact, mathematical performance the system will deliver at production scale with millions of concurrent users, completely devoid of the unpredictable latency spikes that plague standard cloud GPU deployments.

This predictability is not just a technical curiosity; it is a critical business requirement. For mission-critical systems, real-time customer service voice agents, interactive gaming environments, and high-frequency financial platforms, a delay of even a few hundred milliseconds can severely degrade the user experience or result in millions of dollars in lost transaction value. If you are designing proactive, always-on analytics or alerting, this is the same kind of low-latency foundation you need to support proactive AI systems that replace static dashboards.

The GroqCloud Model Ecosystem

To leverage this groundbreaking hardware, the company operates GroqCloud, a robust API platform that provides developers and enterprise teams immediate access to leading generative AI models running natively on the LPU infrastructure. It is crucial to understand that Groq does not spend billions of dollars training its own foundational LLMs from scratch; rather, it acts as the ultimate high-performance execution engine for the industry's top open-weight and partnered models.

The GroqCloud platform supports a highly comprehensive suite of AI modalities, moving far beyond simple text generation to include Large Language Models (LLMs), Speech-to-Text (STT), Text-to-Speech (TTS), and advanced image-to-text vision models. This diverse, multimodal catalog empowers organizations to build incredibly complex applications—such as real-time, emotionally aware voice assistants or autonomous visual inspection tools—entirely within the low-latency boundaries of the Groq ecosystem.

Leading Open-Weight Models Available

Groq hosts a highly curated selection of the most performant models on the market, each rigorously optimized by the Groq compiler for the LPU architecture:

The Meta Llama Family: Groq is renowned for providing day-zero support for Meta's flagship model releases. The platform currently hosts the highly efficient Llama 3.1 8B, the incredibly versatile Llama 3.3 70B, and the massive, frontier-class Llama 4 Maverick (featuring 400 billion parameters). Meta's post-training techniques have pushed these models to state-of-the-art levels in areas like logical reasoning, advanced mathematics, and general knowledge retention, rivaling closed-source proprietary models.

Alibaba's Qwen: The platform features Alibaba's highly regarded Qwen3-32B model, which provides exceptionally strong multilingual support, deep coding proficiency, and advanced tool-calling capabilities within a highly efficient parameter footprint.

Speech and Audio Modalities: For organizations building next-generation conversational interfaces, Groq hosts OpenAI’s Whisper Large V3 (and the even faster V3 Turbo variant) for rapid, highly accurate transcription of spoken audio, alongside advanced Text-to-Speech (TTS) models. The strategic combination of Whisper STT, a low-latency Llama LLM for reasoning, and a TTS model allows enterprise organizations to construct conversational voice bots that react with human-like, sub-second response times—a feat nearly impossible on standard cloud GPU infrastructure.

The Game-Changer: OpenAI GPT-OSS Models on Groq

One of the most consequential developments in the 2026 AI ecosystem is Groq's deep integration of OpenAI's newest open-weight models, officially branded as the GPT-OSS suite. This strategic partnership democratizes access to OpenAI-tier reasoning capabilities while allowing developers to leverage Groq's unparalleled inference speeds and highly disruptive cost structure. OpenAI specifically worked with industry leaders like Groq to ensure these open models achieved optimized performance across custom hardware systems.

The GPT-OSS 120B Flagship

The openai/gpt-oss-120b represents OpenAI's flagship open-weight language model. It is constructed upon a highly sophisticated Mixture-of-Experts (MoE) architecture. While the model possesses 120 billion total parameters, the MoE design ensures that only approximately 5.1 billion parameters are actively utilized during any single forward pass. This sparse architectural approach is a masterclass in efficiency, ensuring high-end intelligence without the massive computational overhead of activating a dense 120B model for every single token generated.

Operating at an astonishing speed of approximately 500 tokens per second (tps) on the GroqCloud infrastructure, the GPT-OSS 120B model matches or surpasses proprietary models like OpenAI's own heavily utilized o4-mini across numerous independent benchmarks. It features a massive context window of 131,072 tokens. To put this into perspective, a 131K context window allows the model to ingest and analyze full enterprise code bases, perform deep retrieval-augmented generation (RAG) across hundreds of pages of legal documentation, and execute complex, multi-step agentic research tasks without losing context.

The Compact GPT-OSS 20B

For highly latency-sensitive applications or inherently budget-conscious enterprise deployments, Groq also offers the highly optimized openai/gpt-oss-20b model. Also utilizing an efficient MoE architecture, this smaller model runs at a blistering speed of 1,000 tokens per second on the LPU infrastructure—speeds that visually appear instantaneous to human users. Despite its notably smaller memory footprint, it retains remarkably strong performance in coding, logical reasoning, and multilingual processing tasks, and supports the exact same 131K context window as its larger 120B counterpart. It is specifically positioned by OpenAI and Groq for cost-efficient deployment in low-latency agentic workflows.

Comparative Value Against Proprietary APIs

The economic and performance advantages of running the GPT-OSS suite on Groq are highly substantial when compared directly to OpenAI's proprietary hosted APIs. According to official pricing data from March 2026, OpenAI's highly popular proprietary model, gpt-4o-mini, costs 0.15 per million input tokens and 0.60 per million output tokens.

The gpt-oss-120b hosted on Groq is priced to compete directly, positioned at 0.15 per million input tokens and 0.60 per million output tokens (with slight variations reaching $0.75 depending on the exact service tier), but it delivers significantly faster token generation speeds and includes built-in agentic tool execution. Furthermore, the gpt-oss-20b drops the price floor even lower, operating at just 0.10 per million input tokens and 0.50 per million output tokens. This dynamic allows CFOs to drastically reduce their API expenditures while simultaneously providing their development teams with vastly superior inference latency.

Built-In Tools and the "Compound" Agentic AI System

While raw token generation speed is an excellent metric for benchmark charts, the true differentiator for Groq in the complex enterprise space is how that speed uniquely enables sophisticated, multi-step agentic workflows. In traditional LLM application development, integrating external tools (such as live web search or a Python code interpreter) requires highly complex client-side orchestration using frameworks like LangChain or the Model Context Protocol (MCP). The client application must send a prompt to the model, receive and parse a requested tool call, execute the tool locally or via a third-party API, and then feed the resulting data back into the LLM to continue the reasoning process. This constant back-and-forth communication introduces massive latency overhead, making real-time autonomous agents sluggish and prone to failure.

Groq has fundamentally revolutionized this process through its native Built-In Tools and the highly advanced Compound AI System.

Zero-Orchestration Built-In Tools

Built-in (or server-side) tools represent the easiest and most performant way to add agentic capabilities to a corporate application. By utilizing these features, execution happens entirely on Groq's high-speed servers. The model autonomously calls the built-in tools and handles the entire iterative agentic loop internally. The end-user or client application receives a single, final response once the entire complex process is completed.

Unlike local tool calling or remote MCP, built-in tools require absolute zero orchestration from the user's infrastructure. Developers simply call the standard Groq API, specify an allowed_tools parameter, and Groq's internal systems seamlessly handle the entire loop: executing the tool, parsing arguments, orchestrating the external API call, and returning the final, synthesized answer.

For the highly popular GPT-OSS models (gpt-oss-120b and gpt-oss-20b), Groq explicitly supports a specific, highly useful subset of built-in tools:

- Browser Search (

browser_search): Allows the AI model to autonomously fetch real-time data from the web, ensuring answers are not limited by the model's static training cutoff date. Code Execution (

code_interpreter): Enables the model to write, execute, and evaluate Python code within a secure, isolated sandbox, returning the mathematical or logical results directly into the context window for further reasoning.

The Groq Compound Systems

For truly advanced enterprise workflows that require deep, recursive reasoning and the sequential use of multiple distinct tools to solve open-ended problems, Groq engineered the Compound System. It is vital to understand that "Compound" is not a single, monolithic model; rather, it is an integrated, autonomous AI ecosystem powered dynamically by Llama 4 Scout and GPT-OSS 120B, specifically designed for intelligent reasoning and multi-tool orchestration.

Compound Systems offer a unified programming interface that completely abstracts away infrastructure complexity. They support a significantly broader, more powerful suite of built-in tools compared to standalone open-weight models, incurring minor usage-based surcharges:

Advanced Web Search: For deep, multi-page research queries ($8 per 1000 requests).

Visit Website: Directly parsing, reading, and extracting data from specific, targeted URLs ($1 per 1000 requests).

Code Execution: Running highly complex analytical scripts ($0.18 per hour of execution).

Browser Automation: Allowing the AI to interact dynamically with complex web elements, forms, and JavaScript-heavy pages ($0.08 per hour).

Wolfram Alpha: Accessing the world's premier computational intelligence and curated mathematical data (billed via the user's own Wolfram API key).

The system is strategically available in two distinct variants to balance speed and capability:

groq/compound: The full-scale, highly capable system capable of executing multiple tools sequentially within a single API request. This is ideal for deep, multi-step research tasks where the AI must search, read, calculate, and summarize.groq/compound-mini: A streamlined, faster version designed to execute a single tool per request, offering 3x lower latency for rapid, highly specific agentic tasks where speed is prioritized over complex orchestration.

Users maintain total visibility into the AI's autonomous actions; the API response includes an executed_tools array, allowing developers to audit exactly which tools were called, the specific arguments used, and the raw outputs returned.

Regulatory Note for Healthcare Executives: Currently, the Compound system and the broader suite of Built-In Tools execute on shared Groq infrastructure and are explicitly not considered HIPAA Covered Cloud Services under Groq's Business Associate Addendum (BAA). Furthermore, these specific tools are not available for use with regional or sovereign endpoints. Organizations dealing with Protected Health Information (PHI) must utilize the standard Groq API for raw inference and manage all tool orchestration locally within their own secure enclaves.

The Economics of Inference: Cost Savings and ROI Analysis

The transition from legacy GPU-based inference to Groq's dedicated LPU architecture yields profound economic benefits for an enterprise. Because LPUs process tokens significantly faster and require drastically less energy to move data across the silicon, Groq is able to offer frontier-level machine intelligence at a fraction of the cost of incumbent, hyperscale cloud providers.

For a Strategic CFO analyzing cloud expenditures, the concept of "Token Arbitrage" has become highly relevant in 2026. If an enterprise application consumes 1 billion tokens monthly for continuous Retrieval-Augmented Generation (RAG) and conversational workflows, utilizing premium proprietary models from 2024 could result in a monthly API bill approaching $20,000. By shifting that exact same workload to the price floor—utilizing baseline open-weight models hosted on highly efficient hardware—the monthly burn rate drops instantly to under $50. The underlying parameters of open models like Llama-3 are functionally identical regardless of the provider; the only true variables are the API wrapper, the custom silicon powering it, and the price. When you pair those savings with disciplined token-cost optimization techniques for LLMs, the ROI picture becomes even stronger.

Pricing Matrix: Groq vs. Market Alternatives

To effectively quantify the potential OpEx savings, it is necessary to examine the per-million token pricing across the broader AI industry. The table below provides a comprehensive comparison of the input and output costs of leading models hosted on various platforms (data current as of March 2026).

| Provider Ecosystem | Model Specification | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) | Strategic Market Positioning |

|---|---|---|---|---|

| OpenAI | GPT-5.2 | $1.75 | $14.00 | Flagship proprietary intelligence; highly expensive |

| Gemini 3.1 Pro | $2.00 | $12.00 | Flagship proprietary intelligence | |

| Anthropic | Claude Opus 4.6 | $5.00 | $25.00 | Highest cost flagship proprietary |

| OpenAI | GPT-4o-mini | $0.15 | $0.60 | Standard small proprietary model |

| GroqCloud | GPT-OSS 120B | $0.15 | $0.60 | Frontier-level intelligence at small model pricing |

| GroqCloud | GPT-OSS 20B | $0.10 | $0.50 | Ultra-low latency, highly affordable agentic logic |

| GroqCloud | Llama 3.3 70B | $0.59 | $0.79 | Fast, versatile, general-purpose inference |

| Cerebras Systems | Llama 3.3 70B | $0.60 | $0.60 | Wafer-scale throughput; blended pricing similar to Groq |

| DeepInfra | Llama 3.3 70B | $0.36 | $0.36 | Aggressive low-cost provider; variable latency trade-offs |

Real-World OpEx Reductions: Enterprise Case Studies

The raw per-token economic data translates directly into massive operational expense (OpEx) reductions for deployed enterprise applications.

Consider CaseIQ, an enterprise infrastructure support platform that extracts key facts from complex customer cloud support tickets. Their original backend system, powered heavily by OpenAI's GPT-4o, required over 3 seconds to execute five distinct inference tasks (platform detection, severity inference, asset ID extraction, etc.). This high latency prevented the system from feeling responsive and made real-time integration into customer support channels like Slack practically impossible.

By migrating to a strategic hybrid approach hosted on Groq's specialized LPU hardware, CaseIQ fundamentally transformed their application. They utilized the highly efficient Llama 3.1 8B for simple classification tasks and the more powerful Llama 3.3 70B for complex extraction. The results were staggering: they reduced total inference time to under 1 second (a 4x overall speedup) and achieved up to a 50x reduction in operational costs by migrating away from proprietary APIs, all while maintaining a 90%+ quality agreement rate with their original GPT-4o baseline.

Similarly, organizations operating massive marketing automation platforms have noted that utilizing aggressively priced, fast inference for high-volume background tasks is the only way to scale sustainably. Groq's highly competitive pricing tiers enable enterprises to execute continuous, high-volume token processing tasks—such as live document summarization, persistent RAG vector updates, and automated code review—without inadvertently bankrupting departmental IT budgets.

Performance Benchmarks: Speed, Throughput, and the LPU Edge

While cost and economics are critical, raw performance remains the primary driver of the LPU value proposition. In late 2025 and early 2026, the AI inference hardware market witnessed an intense, highly publicized benchmarking war between specialized ASIC providers like Groq and Cerebras Systems, and NVIDIA's newest, highly touted B200 and Blackwell GPU architectures.

Rigorous data from Artificial Analysis, a highly respected independent AI benchmarking firm that conducts live, 72-hour update cycles of live API endpoints, highlights the nuanced competitive landscape facing the CTO today. The core takeaway from these independent evaluations is straightforward: there is no single "best" model or hardware; there is only the best solution for a specific combination of intelligence requirements, latency tolerance, and budget.

The Latency King (Groq): Groq's LPU architecture is unapologetically optimized for small-batch inference and unmatchable Time-to-First-Token (TTFT) metrics. For latency-sensitive applications like real-time Voice Bots, where the system must run Speech-to-Text, process an LLM prompt, and synthesize Text-to-Speech in under 1.5 seconds, Groq is vital for minimizing total roundtrip response times. Independent evaluations routinely record Groq's TTFT as low as 0.19 seconds. Furthermore, the LPU delivers the massive Llama 3.3 70B model at approximately 276 tokens per second consistently, ensuring sub-second response times regardless of context length.

The Throughput Titan (Cerebras): While Groq optimizes relentlessly for single-user, deterministic latency, Cerebras currently dominates in massive, steady throughput utilizing its unique Wafer-Scale Engine. In recent, highly publicized tests involving Meta's absolutely massive 400-billion parameter Llama 4 Maverick model, Cerebras set a world record by achieving over 2,522 tokens per second per user, vastly outpacing NVIDIA's flagship Blackwell architecture (1,038 t/s) and Groq (549 t/s).

The GPU Incumbent (NVIDIA): NVIDIA's newer B200 systems demonstrate excellent system-wide throughput under heavy concurrent load (batching), offering a 3x throughput advantage over older H200 chips. However, traditional GPUs inherently suffer from higher per-user latency at smaller scales compared to dedicated inference ASICs like Groq. Furthermore, GPU performance scales variably depending on load, whereas Groq's static scheduling ensures performance scales linearly without unpredictable slowdowns.

The Strategic Takeaway for the Enterprise CTO: Technology leaders must explicitly match hardware architectures to the specific workload profile. For massive background batch processing, offline data structuring, or large-scale LLM training, Cerebras Wafer-Scale Engines or massive NVIDIA GPU clusters offer superior aggregate throughput and raw capacity. However, for interactive, user-facing applications, real-time agentic tools executing code in a loop, dynamic customer service voice bots, and operational scenarios requiring absolute, predictable, jitter-free execution, Groq's deterministic LPU architecture remains entirely unmatched in delivering the lowest possible latency. This predictable speed is exactly why Groq was recognized as a 2025 Gartner® Cool Vendor in AI Infrastructure—the report specifically highlighted Groq's "what you see is what you get" performance at scale.

Enterprise Security, Safety, and Compliance Posture

In deploying foundational AI at the enterprise level, raw intelligence and blistering speed are completely irrelevant if corporate data sovereignty and robust security cannot be absolutely guaranteed. Organizations operating in regulated industries cannot afford to transmit highly sensitive financial or patient data to consumer-grade APIs. Groq has systematically matured its infrastructure to meet stringent B2B enterprise compliance requirements, ensuring that proprietary corporate data remains hermetically secure.

Compliance Frameworks and Third-Party Certifications

Groq demonstrates a highly robust security posture validated continuously by independent, third-party auditors. As of mid-2024 and successfully maintained through 2025, Groq proudly holds the SOC 2 Type II certification. Unlike a Type I report which merely takes a snapshot in time, this Type II certification rigorously verifies that Groq’s security practices, internal controls, unique production database authentication, and data encryption mechanisms effectively protect customer data over an extended, continuous observation period. Groq undergoes these independent audits at least annually, including deep penetration testing, to rapidly remediate vulnerabilities and ensure strict alignment with their comprehensive Enterprise Security Framework.

Crucially for organizations operating within the heavily regulated healthcare and insurance sectors, Groq is fully HIPAA compliant. Customers subject to the Health Insurance Portability and Accountability Act can execute a formal Business Associate Addendum (BAA) with Groq, legally ensuring the protection of Protected Health Information (PHI) processed through supported cloud services.

However, system architects must note a critical, explicit limitation: currently, the Compound AI systems and Groq's Built-in Tools are not covered under the BAA. Because these tools dynamically interact with the open internet (via Browser Search) or execute arbitrary code, they represent a different risk profile. Therefore, healthcare organizations building agentic workflows handling PHI must utilize the core, standard Groq API for raw LLM inference and manage all tool orchestration locally within their own secure, audited enclaves.

Data Sovereignty and Zero-Retention Policies

For global enterprises deeply concerned with strict data residency—a critical, non-negotiable issue under GDPR and various emerging national data protection laws—Groq’s Enterprise tier offers highly configurable Regional Endpoint Selection. This capability allows organizations to mandate that their proprietary data is processed exclusively within specific geographic regions or countries (e.g., European data centers), ensuring full compliance with local data localization laws.

Furthermore, Groq operates on a strict Zero-Data Retention policy for its commercial tiers. This means that customer prompts, deeply proprietary context windows, and generated outputs are never stored on Groq's servers after the API call concludes, nor are they ever utilized by Groq to train future AI models. This entirely eliminates the existential risk of proprietary intellectual property leaking into the weights of a public foundational model. For many organizations, this needs to be paired with disciplined AI governance and secure SDLC practices so that the whole end-to-end system stays compliant.

Real-World Enterprise Use Cases and Implementation Architecture

The potent combination of deterministic low latency, agentic built-in tools, and rigorous security makes Groq uniquely suitable for highly demanding industry applications that traditional cloud GPUs simply cannot service effectively.

Transforming Industry Workloads

Financial Risk & Trading Intelligence: In the high-stakes realm of high-frequency trading and live financial risk analysis, milliseconds literally dictate significant profit and loss. Groq delivers the deterministic, high-throughput inference necessary to instantly turn massive, chaotic streams of transaction data and live market context into actionable, automated trading intelligence. The inherent determinism of the LPU architecture ensures that critical financial algorithms never stall waiting for a batched, delayed GPU response.

Defense and Real-Time Threat Detection: Modern military command centers and enterprise cybersecurity environments generate staggering terabytes of telemetry and sensor data every single second. Groq provides the sustained, ultra-low-latency processing required to continuously detect anomalies, analyze adversarial network signals, and automate rapid command center responses before digital or physical threats escalate.

Next-Generation Healthcare AI Agents: While standard, slower inference is often adequate for offline medical imaging diagnostics, healthcare administrators leverage Groq's unparalleled speed to power real-time clinical co-pilots. These advanced agents can ingest and analyze highly complex patient histories on the fly, generating real-time, evidence-based recommendations for physicians during live patient consultations without causing awkward, disruptive conversational delays.

Generative Engine Optimization (GEO) for Marketing: With up to 70% of B2B software buyers now utilizing AI assistants (like Perplexity or Google AI Overviews) rather than traditional search engines for vendor research, the field of SEO has fundamentally evolved into Generative Engine Optimization (GEO). Innovative Marketing Directors rely on extremely fast, long-context LLMs to continuously analyze these AI search engines, synthetically generating and testing massive volumes of highly structured product content designed specifically for machine ingestion and citation.

Implementing Groq with Modern Enterprise Stacks

For organizations looking to deploy Groq-powered applications, the transition is remarkably straightforward. Because Groq provides a fully OpenAI-compatible API architecture, migrating existing workloads from standard cloud providers simply requires updating a few API keys and base URLs within the application code.

However, building enterprise-grade, resilient AI applications requires more than just a fast API. Organizations often turn to specialized technology partners to manage this deep integration. For technology partners like Baytech Consulting, which specializes in custom software development and sophisticated application management, Groq represents a profound tool for delivering a Tailored Tech Advantage.

Using Rapid Agile Deployment methodologies, highly skilled engineering teams can seamlessly integrate Groq inference into existing, highly secure enterprise infrastructure. For instance, developers can encapsulate Groq API connection microservices within isolated Docker containers, orchestrating them dynamically via Kubernetes on Harvester HCI to handle massive, fluctuating user scale. When this infrastructure is paired with an experienced AI integration partner, enterprises can move from proofs of concept to stable production systems much faster.

Crucially, highly sensitive proprietary enterprise data—which is absolutely essential for Retrieval-Augmented Generation (RAG) workflows—can be securely managed and stored locally within PostgreSQL or robust SQL Server databases managed on-premise. Development teams utilizing modern IDEs like VS Code can rapidly prototype complex agentic behaviors using Groq's lightning-fast LLMs, while managing the entire strict deployment lifecycle through secure Azure DevOps On-Prem pipelines. This sophisticated, hybrid approach allows enterprises to maintain absolute, uncompromising control over their structured data while simultaneously leveraging the cloud-based, unparalleled speed of Groq's LPU architecture for the raw intelligence processing. It also aligns cleanly with an AI-native software development lifecycle, where prompts, agents, and human review are all first-class citizens.

Navigating the Future of AI Compute

The artificial intelligence industry is rapidly maturing past the initial phase of sheer capability demonstration and accelerating into an era strictly defined by operational economics, predictable scalability, and flawless user experience. As the expansive data and analysis within this report clearly indicate, traditional GPU architectures, heavily constrained by the physical High Bandwidth Memory wall and speculative dynamic scheduling, fundamentally struggle to deliver the deterministic, sub-millisecond latency required for production-scale, autonomous agentic workflows.

Groq.com and its proprietary Language Processing Unit (LPU) represent a profound, necessary hardware reimagining. By seamlessly combining massive on-chip SRAM with a ruthless, software-first compiler design, Groq ensures that state-of-the-art models execute with absolute, clockwork predictability. The platform’s highly robust model ecosystem—featuring the versatile Meta Llama models, Alibaba's powerful Qwen, and the highly disruptive, immensely capable OpenAI GPT-OSS suite—provides the foundational intelligence required for any modern enterprise task. Furthermore, the strategic introduction of the autonomous Groq Compound systems and server-side Built-In tools masterfully abstracts the immense complexity of agentic orchestration. This allows B2B organizations to rapidly deploy autonomous systems that can securely search the live web, execute complex Python code, and reason through data without relying on convoluted, latency-inducing client-side middleware.

Supported comprehensively by true enterprise-grade security, including rigorously audited SOC 2 Type II controls and formal HIPAA compliance for core inference, Groq is undeniably positioned as a premier infrastructure choice for highly latency-sensitive applications in finance, healthcare, defense, and high-tech software. For visionary B2B executives seeking to aggressively maximize their AI ROI while mitigating technical debt, transitioning core inference workloads to specialized hardware like the LPU is no longer just a clever optimization strategy—it is a clear, definitive competitive necessity. Many leaders will also pair Groq’s speed with a team-of-agents architecture rather than a single “super bot,” so they can scale complex workflows safely.

Frequently Asked Questions (FAQ)

Can I run my own custom models on Groq's hardware? Yes. While the standard Developer tiers focus on hosted open-weight models, Groq's Enterprise tier specifically supports the deployment of custom models and LoRA (Low-Rank Adaptation) fine-tunes. This critical feature allows B2B organizations to securely utilize highly specialized, proprietary weights on LPU hardware without exposing their IP to the public cloud.

What exactly happens if my application exceeds its API usage limits on the Free tier? Groq provides a generous Free tier for prototyping, governed by specific rate limits (requests or tokens per minute and per day). If your application exceeds these limits, the API will temporarily reject requests until the quota resets. For production workloads or high-volume testing, organizations must upgrade to the pay-as-you-go Developer tier to unlock significantly higher limits, batch processing capabilities, and priority support.

Are Groq's Built-In Tools safe and compliant for processing healthcare data? This is a critical distinction. While the core Groq platform is fully HIPAA compliant and offers a formal Business Associate Addendum (BAA) for standard inference, the Compound AI System and the specific Built-In Tools (like dynamic web search and code execution) are currently explicitly excluded from the BAA. Therefore, they must not be used to process Protected Health Information (PHI).

Why was Groq recognized as a Gartner Cool Vendor? In late 2025, Groq was named a Gartner® Cool Vendor in AI Infrastructure. According to Gartner analysts, this recognition stems from Groq's deterministic execution model. Because LPU performance does not degrade under heavy batching or concurrent scale, it provides a "what you see is what you get" predictability that is highly attractive to enterprise I&O leaders trying to forecast costs and guarantee application uptime.

Supporting Research and Further Reading

- https://www.unic.ac.cy/ai-lc/2025/05/02/a-breakdown-of-openai-anthropic-google-and-grok-models-thus-far-available-in-powerflow/

- https://groq.com/blog/new-ai-inference-speed-benchmark-for-llama-3-3-70b-powered-by-groq

- https://groq.com/blog/groq-recognized-gartner-cool-vendor

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.