The Future of Enterprise AI: Building Internal App Stores

May 04, 2026 / Bryan Reynolds

Building the Internal App Store for AI Agents: A Blueprint for Bespoke Enterprise Architecture

The enterprise technology landscape in 2026 is undergoing a profound and rapid architectural shift. According to recent industry projections, up to 40 percent of enterprise applications will feature integrated, task-specific artificial intelligence (AI) agents by the end of this year—a staggering leap from less than 5 percent just twelve months prior.

This rapid growth shows that the era of treating artificial intelligence as a conversational novelty is over. AI is becoming the core engine of enterprise operations, not just another widget bolted onto existing tools.

However, as organizations rush to deploy these capabilities, a stark operational reality has emerged: while nearly 89 percent of enterprises have adopted AI tools, only 23 percent can accurately measure their return on investment (ROI) with hard data.

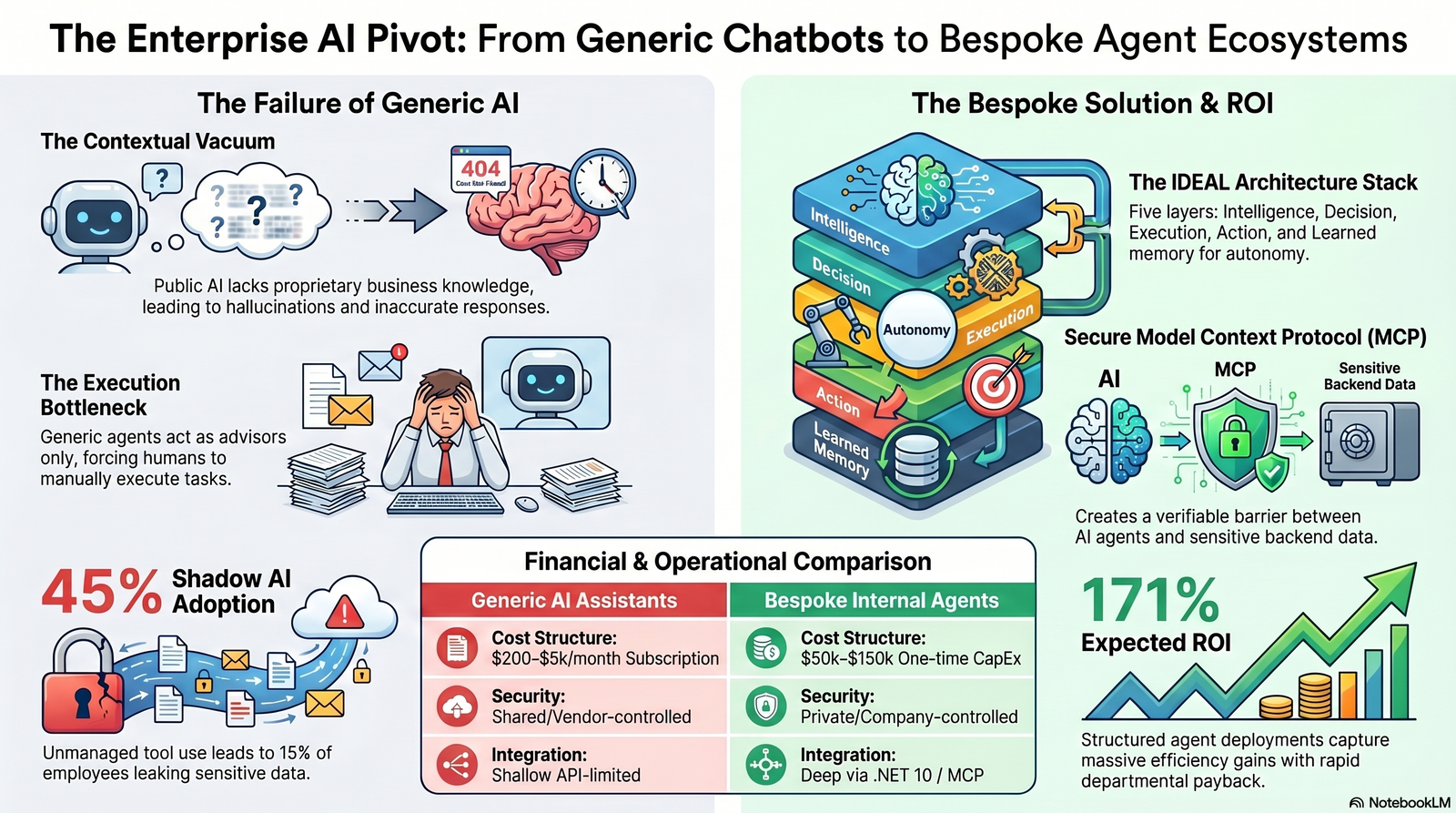

This massive disconnect highlights a critical failure in how large organizations initially approached artificial intelligence. The reliance on generic, horizontal AI assistants—public platforms designed for mass-market appeal—has resulted in scattered experimentation, rampant shadow IT, and a fundamental inability to execute complex, organization-specific workflows. To move beyond basic chatbots and unlock true operational leverage, forward-thinking organizations are fundamentally changing their infrastructure. They are transitioning toward bespoke, internal "app stores" of specialized AI agents.

These centralized, privately hosted agentic ecosystems are purposefully built for distinct internal groups, ranging from human resources (HR) and finance to executive forums and IT operations. If your organization is wrestling with this shift, it helps to look at broader market patterns, like the pricing and architecture changes described in The SaaSocalypse: How AI Is Reshaping Software Markets in 2026, where per-seat SaaS gives way to outcome-focused, AI-driven platforms.

Building this internal infrastructure requires rigorous software architecture, strict data governance, and high-performance backend frameworks. By leveraging modern development stacks like .NET 10, C# 14, and secure compartmentalization protocols, enterprises can transform AI from a generic web interface into a secure, integrated operational powerhouse. This report explores why generic platforms fail, the architectural advantages of using .NET 10 for custom orchestration, the financial models driving this shift, and the security protocols required to compartmentalize enterprise data safely.

The Great ROI Disconnect: Why Generic AI Platforms Fail the Enterprise

Generic AI platforms provide broad, impressive utility, but they consistently fail when exposed to the nuanced, heavily regulated, and deeply interconnected realities of enterprise operations. These failures are not merely superficial interface issues; they stem from profound architectural and operational deficiencies inherent in off-the-shelf public models. For the visionary Chief Technology Officer (CTO) or strategic Chief Financial Officer (CFO) evaluating software investments, understanding these failure points is the first step toward operational maturity.

The Contextual Vacuum

Publicly available AI agents lack an intrinsic awareness of an organization’s proprietary operations. They possess vast amounts of general knowledge but zero specific business context. A generic agent does not know which internal policies apply to specific user roles, which database systems are considered authoritative, or which compliance frameworks govern specific internal datasets.

When a generic agent is queried about an internal process, it relies on generalized pre-training or shallow, bolted-on retrieval-augmented generation (RAG) that often hallucinates or provides information misaligned with actual internal procedures. For example, if an employee asks a public AI about the company's hardware procurement policy, the AI might generate a perfectly logical, industry-standard response that completely ignores the company's actual, negotiated vendor agreements stored in an internal repository.

Interaction Without Execution: The Ticketing Bottleneck

The most significant limitation of horizontal AI is its fundamental inability to take meaningful action. In a typical generic implementation, an AI assistant provides an answer, but the interaction ends before the task is truly finished.

If a finance manager asks a public agent to reconcile a discrepancy in an invoice, the agent might accurately output the standard accounting steps required to resolve the issue. However, the manager must still manually open the enterprise resource planning (ERP) system, update the records, request the necessary approvals via email, and navigate multiple software interfaces to execute the task. The AI acted as an advisor, not an executor. This dynamic forces human workers to remain the "middleware" between the AI's advice and the company's systems, ultimately failing to reduce cycle times, minimize human error, or deliver the massive productivity gains promised by software vendors. For finance leaders trying to tie automation to real business value, frameworks like those in Reframing AI ROI: How CFOs Can Justify Tech Investments can provide a practical lens.

Deep System Disconnection and Governance Risks

Generic AI platforms are often bolted onto existing infrastructure rather than deeply integrated into core operational systems. This shallow integration means they operate entirely outside the organization's existing identity providers, business rules, and security perimeters.

Relying on these generic platforms creates massive, often unquantifiable security vulnerabilities. Without centralized governance and bespoke internal tools, employees inevitably seek the path of least resistance, leading to rampant "shadow AI" adoption. The data on this is alarming: 45 percent of AI tool adoption currently happens outside formal IT procurement processes. Employees, looking for productivity boosts, frequently paste sensitive data—such as financial records, proprietary source code, or personally identifiable information (PII)—into public models. Current metrics indicate that approximately 15 percent of employees have pasted sensitive information into public large language models (LLMs), creating massive compliance and security liabilities. You can see how quickly these risks escalate in the scenarios outlined in Shadow AI Risks in 2026 and Strategies for Secure Adoption.

Furthermore, generic integrations are highly susceptible to novel attack vectors. In highly publicized security incidents, such as the CVE-2025-32711 vulnerability (often referred to as EchoLeak), security researchers demonstrated that a single crafted email sent to a generic enterprise copilot was enough to trigger automatic data exfiltration without any user clicks. When organizations deploy generic agents across their entire software suite without custom, hardened boundaries, a compromise in one integration can cascade across the entire ecosystem.

To truly understand the operational gap between these approaches, it is helpful to delineate the capabilities clearly.

Table 1: Architectural Comparison of Generic vs. Context-Aware AI

| Architectural Dimension | Generic Public AI Assistant | Context-Aware Internal AI Agent |
|---|---|---|
| Business Context | Inferred, generic, or highly limited. | Explicit, structured, and organization-specific. |
| Data Sources | Broad, uncontrolled web data and shallow uploads. | Approved, proprietary operational databases and APIs. |
| Role in Workflows | Advisory and conversational only; requires human action. | Execution-aware, action-oriented, and autonomous. |
| System Integration | Shallow, API-limited, or completely disconnected. | Deep integration via Model Context Protocol (MCP). |
| Governance & Security | Bolted on, user-dependent, prone to shadow IT. | Built-in by design (RBAC, ReBAC, Zero Trust scopes). |
| Production Readiness | Often stuck in pilot phase or isolated individual use. | Designed for operational use at massive enterprise scale. |

The Architecture of Autonomy: Building the Internal AI App Store

To resolve the limitations of horizontal AI, enterprises are fundamentally restructuring their AI provisioning through the "internal AI app store" model. This approach eliminates Software-as-a-Service (SaaS) sprawl by providing a single, unified, privately hosted platform where IT and business leaders can instantly provision department-specific, bespoke AI agents.

In this mature ecosystem, an enterprise does not rely on a single, omniscient AI attempting to do everything for everyone. Instead, it deploys a network of highly specialized agents—an "agentic AI mesh"—that collaborate dynamically. A marketing department might utilize a campaign generation agent connected to previous performance metrics, while the finance department utilizes a distinct tax compliance and invoice reconciliation agent connected directly to the ERP. These agents are hosted privately, trained or fine-tuned purely on compartmentalized organizational data, and exposed to employees through a secure internal marketplace. For a deeper dive into how these autonomous workers behave in practice, compare the approaches in OpenClaw: How AI Agents Are Rewriting Enterprise Workflows.

The IDEAL Architecture Stack

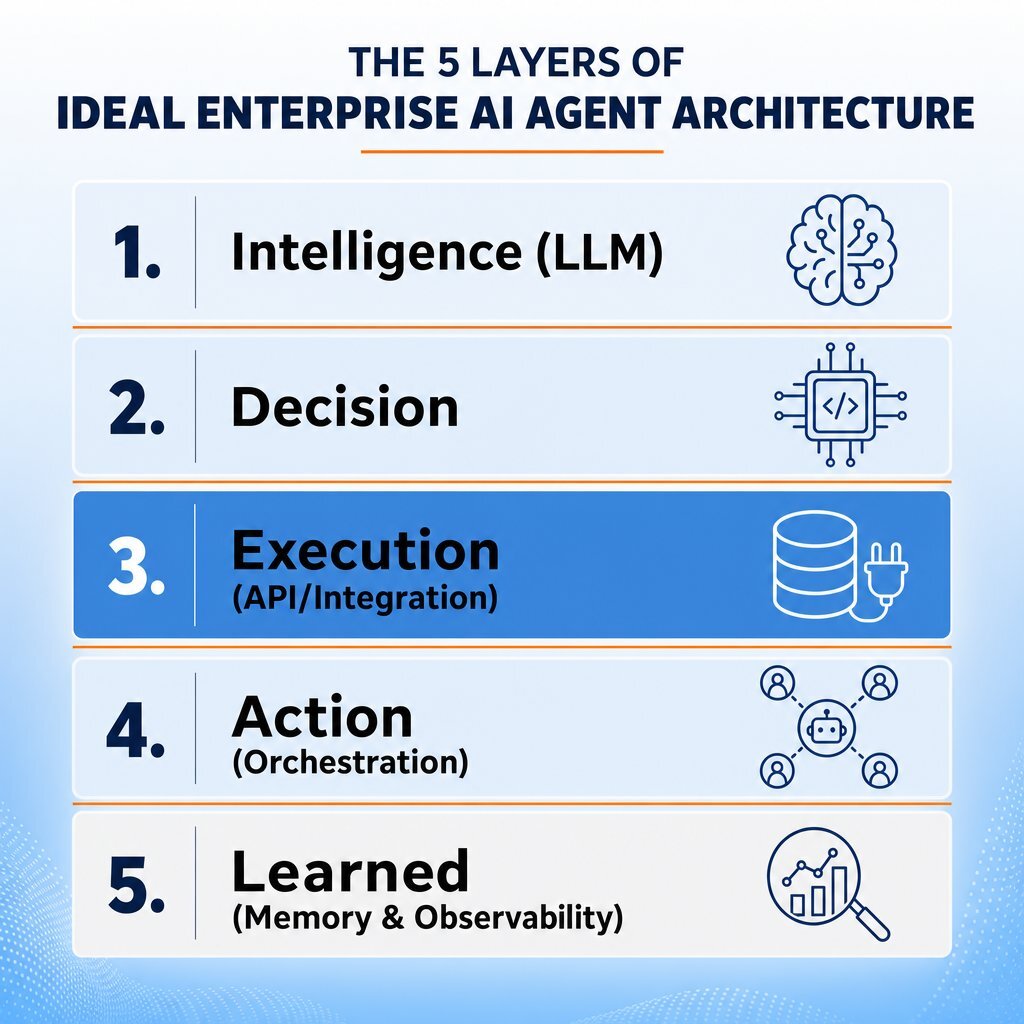

Transitioning from horizontal tools to vertical, function-specific agents requires a sophisticated architectural framework. The emerging industry standard for designing these internal platforms is the IDEAL stack, which breaks down enterprise agent architecture into five critical, independent layers:

- Intelligence: The foundational LLM. In an internal app store, this is often a self-hosted open-source model (like Llama or Mistral) running on private infrastructure, or a secure, private tenant of a frontier model. This ensures data never leaves the corporate boundary.

- Decision: The reasoning engine. This layer utilizes advanced planning algorithms, tight feedback loops, and highly structured RAG to determine how to solve a problem before taking action.

- Execution: The integration layer. This defines the specific internal tools, databases, and APIs the agent is authorized to use.

- Action: The orchestration layer. This coordinates multi-agent workflows, managing the handoffs between specialized agents and ensuring tasks are executed in the correct sequence.

- Learned: The memory and observability layer. This maintains an immutable audit trail of every agent decision, tracks performance over time, and allows the system to continuously improve through documented memory.
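One way to picture the "immutable audit trail" of the Learned layer is a hash-chained, append-only log, where each entry commits to the one before it. The sketch below is illustrative only: the `AuditTrail` class and its field names are hypothetical, and a production system would also need durable, access-controlled storage.

```python
import hashlib
import json


class AuditTrail:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes the same way.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, agent_id: str, action: str, detail: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"agent_id": agent_id, "action": action, "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Calling `verify()` after any in-place modification of an earlier entry returns `False`, which is the property that makes the trail tamper-evident rather than merely append-only by convention.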

Building this sophisticated five-layer stack requires a backend development environment that prioritizes type safety, extreme performance, and enterprise-grade integration capabilities. This is where modern C# and .NET 10 become indispensable, especially when paired with disciplined DevOps efficiency and automation practices.

Powering Bespoke Agents with .NET 10 and C# 14

Powering the backend of an internal AI app store requires a framework capable of handling high-throughput, concurrent AI operations while maintaining uncompromised enterprise-grade security. .NET 10, released alongside the highly expressive C# 14, has rapidly emerged as the premier ecosystem for building these custom orchestration layers.

Firms that require a distinct, tailored tech advantage frequently partner with specialized enterprise software engineering groups to bring these systems to life. Baytech Consulting, for example, specializes in custom software development and sophisticated application management. By leveraging a modern, battle-tested tech stack encompassing Azure DevOps On-Prem, Postgres, Kubernetes, Docker, and Visual Studio 2022, complex .NET 10 AI orchestration architectures can undergo rapid agile deployment. This ensures the timely, adaptive, and transparent delivery of enterprise-grade solutions that off-the-shelf vendors simply cannot match. This kind of partnership mindset is at the core of our Partnership Approach with clients.

Unified AI Abstractions: Microsoft.Extensions.AI

Historically, integrating AI models into backend enterprise systems required developers to code directly against proprietary vendor software development kits (SDKs). If an enterprise wanted to switch from OpenAI to a locally hosted Ollama model for privacy reasons, it required a massive rewrite of the application's core logic.

.NET 10 addresses this through Microsoft.Extensions.AI, a thin abstraction layer for core AI primitives. It provides standardized interfaces, most notably IChatClient and IEmbeddingGenerator<TInput, TEmbedding>, which allow developers to plug in concrete implementations for Azure OpenAI, GitHub Models, or another provider at application startup.

This provider-agnostic approach is the bedrock of the internal app store. It allows a CTO to switch underlying intelligence models based on cost, regulatory changes, or performance upgrades without rewriting the agent's core orchestration logic. Furthermore, it provides a unified middleware pipeline for cross-cutting concerns. Developers can inject standard .NET Dependency Injection (DI) patterns to add robust caching, structured logging, and built-in OpenTelemetry across all AI calls, ensuring comprehensive observability of agent behavior. That flexibility is crucial if you want to avoid “renting” all your intelligence from vendors, as discussed in Stop Renting Intelligence: Build the AI That Keeps Your Edge.
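The provider-agnostic idea can be sketched in a few lines. The example below is written in Python for brevity and does not reproduce the Microsoft.Extensions.AI API: `ChatClient`, `LocalModelClient`, `HostedModelClient`, and `CachingClient` are hypothetical names, and the "providers" simply echo their input. What it shows is the shape of the pattern: agent logic depends on one interface, and cross-cutting concerns such as caching wrap any implementation.

```python
from typing import Protocol


class ChatClient(Protocol):
    """Hypothetical stand-in for a provider-agnostic chat interface."""

    def complete(self, prompt: str) -> str: ...


class LocalModelClient:
    """Illustrative provider: pretends to call a self-hosted model."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


class HostedModelClient:
    """Illustrative provider: pretends to call a private cloud tenant."""

    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class CachingClient:
    """Middleware: wraps any ChatClient and caches identical prompts."""

    def __init__(self, inner: ChatClient) -> None:
        self.inner = inner
        self.cache: dict[str, str] = {}
        self.calls_forwarded = 0  # how many prompts actually reached the provider

    def complete(self, prompt: str) -> str:
        if prompt not in self.cache:
            self.calls_forwarded += 1
            self.cache[prompt] = self.inner.complete(prompt)
        return self.cache[prompt]


def summarize_policy(client: ChatClient, policy_text: str) -> str:
    """Agent logic depends only on the interface, never on a vendor SDK."""
    return client.complete(f"Summarize: {policy_text}")
```

Swapping `LocalModelClient` for `HostedModelClient` changes one constructor call at startup; `summarize_policy` and the caching wrapper stay untouched.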

The Microsoft Agent Framework (MAF)

While Microsoft.Extensions.AI handles the base layer, facilitating the "Action" and "Decision" layers of the IDEAL stack requires sophisticated orchestration. .NET 10 introduces the Microsoft Agent Framework (MAF), designed specifically for building intelligent, agentic systems. MAF simplifies the orchestration of complex workflows by combining the mature capabilities of Semantic Kernel with the research-driven, multi-agent collaboration techniques of AutoGen into a single, unified developer experience.

The Microsoft Agent Framework provides the infrastructure to build complex, multi-agent orchestration systems with minimal boilerplate code. It allows developers to "Scale Up" from a single utility agent to a highly capable system of specialized agents by supporting several advanced workflow patterns necessary for an internal app store:

- Sequential Routing: Agents execute tasks in a strict, predefined order, passing validated outputs down the chain. Using the AgentWorkflowBuilder, developers can chain operations: for example, a data extraction agent pulls financial metrics, passes them to a reasoning agent for variance analysis, which then passes the finalized insights to a reporting agent for formatting.

- Concurrent Execution: Multiple agents analyze different facets of a massive dataset simultaneously. A legal compliance agent and a financial risk agent can review the same proposed vendor contract in parallel, drastically accelerating document processing times.

- Handoff Protocols: Responsibility shifts dynamically between agents based on operational context. If a level-one IT support agent detects a complex network vulnerability, it executes a programmatic handoff to a specialized cybersecurity threat response agent.

- Group Chat Collaboration: Multiple specialized agents operate in a shared, real-time conversational space, debating approaches and collaborating to solve complex, multi-variable problems autonomously.
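The first two patterns in the list above, sequential routing and concurrent execution, can be illustrated without any framework. The following is a minimal sketch in Python using `asyncio`; it is not the Microsoft Agent Framework API. The agent functions (`extract`, `analyze`, `report`, `legal_review`, `risk_review`) are hypothetical stubs that return strings instead of calling a model.

```python
import asyncio
from typing import Awaitable, Callable

# An "agent" here is just an async function from text to text (an assumption
# made for illustration; real agents would call a model and tools).
Agent = Callable[[str], Awaitable[str]]


async def extract(text: str) -> str:
    return f"metrics({text})"


async def analyze(text: str) -> str:
    return f"variance({text})"


async def report(text: str) -> str:
    return f"report({text})"


async def legal_review(contract: str) -> str:
    return f"legal-ok:{contract}"


async def risk_review(contract: str) -> str:
    return f"risk-ok:{contract}"


async def run_sequential(agents: list[Agent], payload: str) -> str:
    """Sequential routing: each agent receives the previous agent's output."""
    for agent in agents:
        payload = await agent(payload)
    return payload


async def run_concurrent(agents: list[Agent], payload: str) -> list[str]:
    """Concurrent execution: every agent sees the same input; results keep input order."""
    return list(await asyncio.gather(*(agent(payload) for agent in agents)))
```

For example, `asyncio.run(run_sequential([extract, analyze, report], "Q3"))` threads one payload through three stages, while `asyncio.run(run_concurrent([legal_review, risk_review], "contract-7"))` fans the same contract out to two reviewers at once.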

Polyglot Orchestration with .NET Aspire 13

Modern enterprise architecture is rarely a monolith. An internal AI app store might rely on Python-based machine learning models for predictive analytics, PostgreSQL vector databases for semantic memory, and modern JavaScript/TypeScript frontends for the user interface. .NET Aspire 13, integrated natively with .NET 10, serves as the ultimate control plane for this distributed, agentic development.

Aspire 13 allows an AppHost to seamlessly orchestrate, debug, and deploy multi-language services. It dynamically handles project references, service discovery, and automatic metadata generation. This means that a C# orchestrator can execute and manage a Python data science container as effortlessly as it manages a local .NET class library. With the introduction of TypeScript AppHost authoring and an agent-native CLI, Aspire 13 ensures that the entire distributed stack—including databases like Postgres or Redis—is consistently provisioned. By automatically integrating structured logs, console output, and distributed traces into a unified dashboard, Aspire 13 provides the deep observability required to monitor and debug autonomous AI agents in real-time.

This polyglot control plane becomes even more powerful when you pair it with an enterprise application architecture that’s intentionally designed around modular services and clear integration contracts.

Unprecedented Hardware Acceleration and Performance

AI orchestration layers are highly compute-intensive, requiring rapid JSON serialization, massive vector processing, and minimal latency to maintain realistic human-agent interaction speeds. .NET 10 introduces profound hardware acceleration and Just-In-Time (JIT) compiler optimizations that make it uniquely suited for heavy AI workloads.

- AVX10.2 and Arm64 SVE Vectorization: .NET 10 adds robust support for Advanced Vector Extensions (AVX) 10.2 for x64 processors and Scalable Vector Extensions (SVE) for Arm64. This fundamentally accelerates the matrix multiplication and tensor processing required for local embedding generation and rapid semantic searches across massive organizational knowledge bases.

- Arm64 Write-Barrier Enhancements: For backend enterprise servers running on Arm64 architecture, .NET 10 introduces dynamic write-barrier switching. This precision memory management allows the generational Garbage Collector (GC) to track object references far more efficiently, resulting in GC pause time reductions of 8 to 20 percent. In the context of AI, minimizing GC pauses is critical for maintaining fluid, uninterrupted token streaming during real-time agent interactions.

- JIT Devirtualization and NativeAOT: Enhancements to the JIT compiler, specifically regarding expanded escape analysis and loop inversion, drastically reduce the "abstraction penalty" of modern C#. Operations that previously required expensive heap allocations—such as closures and delegates used heavily in data pipelines—can now be safely allocated on the stack. Combined with improvements to NativeAOT (Ahead-of-Time compilation) type preinitializers, .NET 10 generates smaller, faster, and highly memory-efficient applications that allow orchestration layers to scale effectively with minimal resource overhead.

- C# 14 Language Features: The release of C# 14 alongside .NET 10 brings extension properties and static extension members. This allows enterprise developers to add clean, expressive orchestration logic to types they don't even own (such as third-party AI libraries), vastly improving code maintainability in complex zero-allocation scenarios.

Future-Proofing Security with Post-Quantum Cryptography

As internal AI agents handle an organization's most sensitive, mission-critical data, securing the transmission of this data across the internal network is paramount. .NET 10 networking and cryptography libraries have been substantially upgraded. Most notably, they now include expanded Post-Quantum Cryptography (PQC) support, incorporating algorithms like ML-KEM (FIPS 203) for key encapsulation and ML-DSA (FIPS 204) for digital signatures. By integrating these algorithms natively into the AI orchestration layer, developers can keep agent-to-agent and agent-to-database communications resistant to both contemporary attacks and anticipated quantum computing threats.

Safely Compartmentalizing Data Access for Internal Agents

Deploying an internal app store means hosting multiple agents that serve wildly different business units. An HR agent automating onboarding has no business accessing corporate acquisition strategies, and a marketing agent optimizing ad copy must be cryptographically barred from accessing employee payroll data. Safely compartmentalizing data access requires moving beyond legacy, perimeter-based defenses to strict, identity-first access controls designed explicitly for autonomous software.

The Imperative of Non-Human Identities (NHI)

Organizations must fundamentally shift their security posture to treat every AI agent as a "first-class" Non-Human Identity (NHI). Each agent deployed from the internal app store must have a uniquely verifiable identity, defined authorization boundaries, and an assigned human owner for ultimate accountability.

Traditional Role-Based Access Control (RBAC) frameworks, which were designed to assign static permissions to human users logging into a dashboard, consistently fail to account for the autonomous, cross-session nature of AI agents. Without stringent permission discipline, an agent that succumbs to indirect prompt injection could misuse its broad access to exfiltrate data.

Effective access models for AI systems require absolute Zero Trust implementation:

- Fine-Grained Authorization (FGA): Restricting agent access to the narrowest possible set of specific API endpoints, output formats, and execution windows.

- Short-lived Scoped Tokens: Utilizing OAuth scoped tokens with incredibly tight Time-To-Live (TTL) parameters. This ensures that even if an agent's execution layer is somehow compromised, the token expires before significant lateral movement or data exfiltration can occur.

- Separation of Reasoning and Execution: The LLM (the reasoning layer) should decide what action needs to be taken, but the actual execution of API calls or database mutations must occur in a heavily sandboxed control layer that independently verifies the agent's permissions before acting.
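The last two controls in the list above can be sketched together. In this minimal Python illustration, a token carries explicit scopes and an expiry, and a separate execution gate (not the model) checks both before running any tool. All names here (`ScopedToken`, `issue_token`, `ExecutionGate`, the scope strings) are hypothetical, and the token is a plain in-memory object rather than a real OAuth token.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ScopedToken:
    agent_id: str
    scopes: frozenset[str]
    expires_at: float  # Unix timestamp after which the token is rejected


def issue_token(agent_id: str, scopes: set[str], ttl_seconds: float,
                now: Optional[float] = None) -> ScopedToken:
    """Issue a token that is valid only for the given scopes and a short TTL."""
    issued = time.time() if now is None else now
    return ScopedToken(agent_id, frozenset(scopes), issued + ttl_seconds)


class ExecutionGate:
    """Control layer: the only component allowed to run tools."""

    def __init__(self, tools: dict[str, Callable[..., str]]) -> None:
        self.tools = tools

    def execute(self, token: ScopedToken, action: str,
                now: Optional[float] = None, **kwargs) -> str:
        current = time.time() if now is None else now
        # Verify independently of whatever the reasoning layer requested.
        if current >= token.expires_at:
            raise PermissionError("token expired")
        if action not in token.scopes:
            raise PermissionError(f"scope '{action}' not granted")
        if action not in self.tools:
            raise LookupError(f"unknown tool '{action}'")
        return self.tools[action](**kwargs)
```

The reasoning layer only proposes an action name and arguments; whether that action runs is decided by `ExecutionGate.execute`, so a manipulated prompt cannot widen the agent's permissions.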

Relationship-Based Access Control (ReBAC)

For industries managing highly sensitive, legally protected, or compartmentalized information—such as healthcare, defense, or high finance—standard RBAC is completely insufficient. These environments require Relationship-Based Access Control (ReBAC) to enforce deeply context-aware constraints.

For example, an AI agent analyzing patient medical records cannot simply be granted a blanket "Doctor" role. It must computationally understand the specific relationship between the querying doctor and the target patient. Similarly, an agent working on a classified engineering project (Project A) must be blocked from accessing data related to Project B, even if the human user has access to both. ReBAC prevents unauthorized "mosaic effects" across compartmentalized intelligence by evaluating the relationship context of every single query in real-time, allowing organizations to securely deploy agents without forcing the dangerous centralization of sensitive data.
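A relationship check of this kind is often modeled as a set of (subject, relation, object) tuples. The sketch below, in Python, encodes the Project A / Project B example: access requires both that the human belongs to the project and that the agent itself is assigned to it. The store, relation names, and identifiers are hypothetical, and real ReBAC systems typically support richer graph traversal than this flat lookup.

```python
# (subject, relation, object), for example ("alice", "member_of", "project_a")
RelationTuple = tuple[str, str, str]


class RelationshipStore:
    """Hypothetical in-memory store of relationship tuples."""

    def __init__(self) -> None:
        self._tuples: set[RelationTuple] = set()

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._tuples.add((subject, relation, obj))

    def has(self, subject: str, relation: str, obj: str) -> bool:
        return (subject, relation, obj) in self._tuples


def agent_may_access(store: RelationshipStore, agent: str, user: str, project: str) -> bool:
    """Allow only when the user belongs to the project AND the agent is assigned to it.

    The human's own access is necessary but not sufficient: an agent scoped to
    one project stays blocked from another even when the user can see both.
    """
    return store.has(user, "member_of", project) and store.has(agent, "assigned_to", project)
```

With `alice` a member of both projects and `agent_a` assigned only to `project_a`, the check passes for `project_a` and fails for `project_b`, which is the compartmentalization the paragraph describes.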

Model Context Protocol (MCP): The Ultimate Security Bridge

One of the most significant architectural breakthroughs in agentic data security is the widespread adoption of the Model Context Protocol (MCP). Operating as an open standard, MCP provides a highly structured, secure mechanism for AI applications to connect with external data sources and execution environments.

Rather than granting an LLM direct, raw SQL access to a PostgreSQL database or a proprietary CRM, enterprise developers build standalone MCP servers. These servers expose explicitly defined, heavily restricted "tools" to the MCP clients (the AI agents).

Imagine a scenario within an organization utilizing Baytech Consulting's preferred infrastructure. A Postgres database containing sensitive financial records is managed via pgAdmin on an OVHCloud server, protected by pfSense firewalls. The AI agent never touches Postgres. Instead, a C# MCP server is deployed alongside the database. If the financial AI agent needs to retrieve a vendor record, it requests the data through the MCP server. The server authenticates the agent's NHI, validates the request against ReBAC policies, executes the carefully constructed query, and returns only the sanitized, redacted data back to the LLM.

This MCP architecture creates a verifiable barrier between the AI's natural language processing capabilities and the enterprise's mission-critical backend data repositories. Because the agent can only invoke the tools the server exposes, even a compromised LLM cannot issue arbitrary database mutations. For regulated teams that also need a formal approval trail for AI-generated code and automation, a framework like the one in Secure AI Code: A 7-Stage Regulatory Compliance Framework pairs well with MCP-based access controls.
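The vendor-record scenario can be sketched as a narrow tool server. The Python example below does not implement the MCP wire protocol or use any MCP SDK; it only illustrates the pattern of exposing one restricted tool instead of raw SQL. The `VendorToolServer` class, the table layout, the allow-list, and the sample data are all hypothetical, and an in-memory SQLite database stands in for Postgres.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one made-up vendor row."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE vendors (id INTEGER PRIMARY KEY, name TEXT, bank_account TEXT)")
    conn.execute("INSERT INTO vendors VALUES (1, 'Acme Supply', 'DE89370400440532013000')")
    conn.commit()
    return conn


class VendorToolServer:
    """Exposes a single read-only tool; the agent never gets a SQL channel."""

    ALLOWED_AGENTS = {"finance-agent"}  # hypothetical allow-list of agent identities

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn

    def get_vendor(self, agent_id: str, vendor_id: int) -> dict:
        # 1. Authenticate the calling agent's identity.
        if agent_id not in self.ALLOWED_AGENTS:
            raise PermissionError(f"agent '{agent_id}' may not call get_vendor")
        # 2. Validate input; free-form text from the model is rejected outright.
        if not isinstance(vendor_id, int):
            raise ValueError("vendor_id must be an integer")
        # 3. Run a fixed, parameterized query written by the server author.
        row = self._conn.execute(
            "SELECT id, name, bank_account FROM vendors WHERE id = ?", (vendor_id,)
        ).fetchone()
        if row is None:
            raise LookupError(f"vendor {vendor_id} not found")
        # 4. Redact sensitive fields before anything reaches the LLM.
        return {"id": row[0], "name": row[1], "bank_account": "****" + row[2][-4:]}
```

Because the query text is fixed and the only caller-supplied value is a validated integer bound as a parameter, an injected instruction such as `"1 OR 1=1"` is rejected before it reaches the database.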

Table 2: Governance Framework for Internal Agent Deployments

| Governance Pillar | Traditional Software Approach | Agentic AI Approach |
|---|---|---|
| Identity Management | User logins and SSO (Single Sign-On). | Non-Human Identities (NHI) with human ownership mappings. |
| Access Control | Role-Based Access Control (RBAC). | Relationship-Based Access Control (ReBAC) and Fine-Grained Authorization (FGA). |
| Data Integration | Direct database connections or wide APIs. | Model Context Protocol (MCP) servers exposing strict, single-purpose tools. |
| Security Philosophy | Perimeter defense and network firewalls. | Zero Trust, short-lived scoped tokens, and execution sandboxing. |
| Audit & Observability | System logs and crash reports. | Immutable tracking of reasoning traces, data access, and action execution via OpenTelemetry. |

The Financial Blueprint: Cost Comparisons and ROI Economics

The transition from purchasing off-the-shelf SaaS applications to engineering a custom internal AI app store is fundamentally an exercise in strategic capital allocation. For a strategic CFO, the decision relies on understanding the hidden costs of software sprawl versus the long-term leverage of bespoke infrastructure.

The Hidden Costs of Generic AI Sprawl

Currently, the average enterprise maintains 23 different AI tools across its organization. This unchecked "shadow AI" creates immense, overlapping subscription sprawl. Recent industry analyses projected that average monthly enterprise AI software spending would reach $85,521 in 2025, a 36 percent year-over-year increase that pushes annualized costs over $1 million for many mid-sized organizations.

Generic, off-the-shelf agents typically operate on subscription models ranging from $200 to $5,000 per month for discrete, limited use cases, with costs scaling linearly as usage and employee headcount increase. More importantly, the hidden costs of generic platforms (the time lost to context switching, the manual data entry required because the agent cannot execute actions, the costly compliance remediation when data is pasted into public models, and the lack of true end-to-end automation) severely degrade their perceived financial value.

The Economics of Bespoke Engineering

Building a bespoke internal AI platform requires a targeted, upfront capital expenditure. Custom AI agent development typically ranges from $50,000 to $150,000 as a one-time build cost, depending heavily on the complexity of the orchestration layer and the number of required legacy integrations.

However, because the infrastructure is company-controlled (hosted securely on private cloud or on-premises servers), the marginal cost of deploying subsequent agents drops significantly. Once the foundational .NET 10 orchestration layer, the MCP servers, and the access control policies are established, spinning up a new agent for a different department becomes an exercise in configuration rather than ground-up engineering.

The mathematical formulation for evaluating this transition heavily favors custom builds for critical workflows:

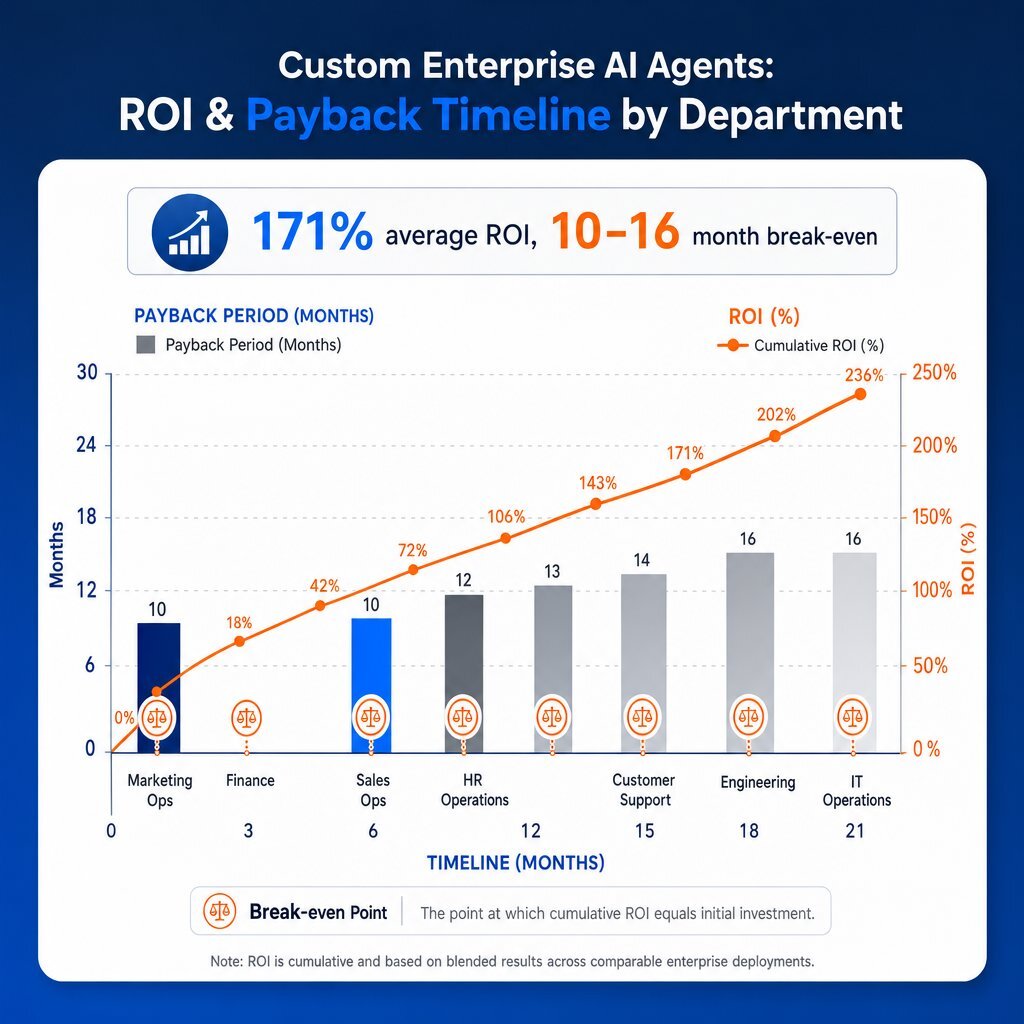

Organizations deploying agentic AI in this structured manner report an average expected return of 171 percent on their investment, with 62 percent of companies expecting ROIs exceeding 100 percent. Industry data reveals distinct payback periods across organizational departments. Recent 2026 benchmarks indicate median payback periods of 4.1 months for customer service agents, 6.7 months for marketing operations, and 9.3 months for complex engineering rollouts. The total break-even point for the overarching custom platform build typically lands between 10 and 16 months, after which the organization captures near-total margin on the automated labor, avoiding the perpetual subscription trap of generic vendors. For leaders mapping this out over the first few months, the 90-day playbook in Enterprise AI Implementation Plan: A 90-Day Roadmap for Leaders is a useful companion.
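To make the payback arithmetic concrete, here is a small worked sketch in Python. It uses a common simple formulation (build cost divided by net monthly benefit), which is an assumption and not necessarily the formula the article's figure presents. The $100,000 build cost falls inside the range quoted earlier; the $10,000 net monthly benefit is a purely hypothetical figure chosen for illustration.

```python
def payback_months(build_cost: float, monthly_net_benefit: float) -> float:
    """Months until cumulative net benefit equals the one-time build cost."""
    if monthly_net_benefit <= 0:
        raise ValueError("no payback: monthly net benefit must be positive")
    return build_cost / monthly_net_benefit


def roi(build_cost: float, monthly_net_benefit: float, horizon_months: int) -> float:
    """Simple ROI over a horizon: (total benefit - cost) / cost, as a fraction."""
    return (monthly_net_benefit * horizon_months - build_cost) / build_cost


# Hypothetical scenario: $100,000 build, $10,000 net benefit per month
# (avoided subscriptions plus labor savings, minus running costs).
months = payback_months(100_000, 10_000)      # 10.0 months
two_year_roi = roi(100_000, 10_000, 24)       # 1.4, i.e. 140 percent
```

Under these assumed inputs the platform breaks even in month 10, the low end of the 10-to-16-month range cited above, and returns 140 percent over two years; different inputs will move both numbers substantially.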

The global AI agents market itself is reflecting this value capture, projected to grow from $7.63 billion in 2025 to over $50.31 billion by 2030 at a compound annual growth rate (CAGR) of 45.8%.

Table 3: Financial Comparison and ROI Matrix

| Metric / Factor | Off-the-Shelf Generic AI Agents | Custom Bespoke AI Agents (.NET 10 / MCP) |
|---|---|---|
| Typical Cost Structure | $200 to $5,000 / month (perpetual subscription) | $50K to $150K (one-time CapEx build) |
| Data Access & Integration | API-based, limited to vendor's predefined capabilities. | Native, real-time, full internal system integration. |
| Security Model | Vendor-controlled, shared infrastructure. | Company-controlled, private cloud, or on-premises. |
| Typical Payback Period | 6 to 12 months (if the narrow use case fits) | 4.1 to 16 months (with massive long-term scale) |
| Enterprise Value Capture | Low (Creates dependency on external vendor logic). | High (Builds proprietary operational intelligence and IP). |

Bespoke AI Agent Workflows Across B2B Industries

The theoretical architecture and financial models translate into massive operational leverage when specialized agents are deployed to streamline highly specific, domain-centric workflows. A centralized internal app store allows distinct organizational groups to access custom-crafted solutions that address their precise bottlenecks without compromising security.

Human Resources and Finance

In corporate back-office operations, automation drastically reduces administrative overhead and prevents process bottlenecks.

- HR Workflows: Bespoke agents integrated deeply with platforms like Workday or UKG can autonomously manage the entire employee lifecycle. When an employee requests time off, an AI agent does not just provide a link to a form. It validates the request against company policy, dynamically checks department schedules to ensure operational coverage, initiates the multi-tier approval workflow, and updates the payroll database—all natively within messaging platforms like Microsoft Teams. Furthermore, specialized HR agents can analyze anonymized internal sentiment data to accurately predict employee flight risks, allowing management to intervene proactively. Early adopters report that custom HR agents have increased recruiter capacity by up to 54 percent.

- Finance Workflows: Financial agents eliminate the drudgery of manual reporting. By connecting an agent securely to a corporate data warehouse via MCP, finance teams can request real-time variance analysis or compliance checks in plain English. Agents can autonomously process hundreds of thousands of complex legal embargo documents or vendor invoices in minutes, standardizing unstructured data extraction and executing algorithmic reconciliations. Implementations in this sector have shown up to a 49 percent efficiency increase in Financial Planning & Analysis (FP&A) workflows.

Gaming and Software Development

For high-tech firms and gaming studios, AI agents serve as autonomous infrastructure engineers and quality assurance testers. Internal app stores provide developer-focused agents capable of scanning massive source code repositories to flag security vulnerabilities, automatically generating comprehensive documentation, and orchestrating complex CI/CD pipeline deployments. In game development, specialized agents act as tireless, automated play-testers, running millions of simulations overnight to detect physics engine bugs, find out-of-bounds exploits, or balance virtual in-game economies long before a human QA tester ever touches the build.

Real Estate and Mortgage

The commercial real estate and B2B mortgage sectors require the rapid processing of complex, highly regulated, and unstructured documentation. Bespoke agents can autonomously extract critical data from massive appraisal documents, title reports, and commercial loan applications, instantly cross-referencing the extracted data against dynamic federal lending guidelines or internal risk metrics. Furthermore, portfolio management agents can continuously analyze local market trends, property valuations, and interest rate fluctuations to provide automated, compliance-checked portfolio rebalancing recommendations for institutional investors.
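To make the cross-referencing step concrete, here is a small sketch of checking extracted loan figures against lending limits. Loan-to-value (LTV) and debt service coverage ratio (DSCR) are standard underwriting measures, but the thresholds below are placeholder assumptions, not actual federal guidelines, and the `ExtractedLoan` structure is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExtractedLoan:
    """Fields an extraction agent might pull from an application and appraisal."""
    loan_amount: float
    appraised_value: float
    net_operating_income: float
    annual_debt_service: float


# Placeholder limits for illustration; real limits come from the lender's policy.
MAX_LTV = 0.75
MIN_DSCR = 1.25


def check_guidelines(loan):
    """Return a list of human-readable findings; an empty list means no breach."""
    findings = []
    ltv = loan.loan_amount / loan.appraised_value
    dscr = loan.net_operating_income / loan.annual_debt_service
    if ltv > MAX_LTV:
        findings.append(f"LTV {ltv:.2f} exceeds {MAX_LTV:.2f}")
    if dscr < MIN_DSCR:
        findings.append(f"DSCR {dscr:.2f} below {MIN_DSCR:.2f}")
    return findings
```

Returning findings as text rather than a bare pass/fail keeps the result auditable, which matters when a compliance reviewer needs to see why an application was flagged.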

Education and Learning Management Systems (LMS)

In the EdTech space, a generic conversational chatbot cannot replace a structured pedagogical framework. Custom AI agents integrated deeply into an LMS act as personalized, scalable digital mentors. These agents continuously track student engagement metrics, identify specific learning gaps through historical assessment data, and dynamically generate custom learning pathways tailored to individual cognitive styles. On the administrative side, agents can automate the complex grading of structured assessments and assist faculty in generating compliant syllabus materials, freeing educators to focus on high-value student interactions.

Healthcare

Healthcare agents operate under some of the strictest regulatory mandates of any industry, such as HIPAA. Bespoke healthcare agents are designed so they never move Protected Health Information (PHI) to public clouds. Instead, using ReBAC and highly secure local .NET 10 infrastructure, they assist clinicians by summarizing complex, multi-year medical histories instantly, tracking real-time patient vitals from integrated IoT devices, and autonomously executing complex medical coding and billing workflows. They provide the intelligence of modern AI without exposing patient data to generic public platforms.

Telecommunications and Advertising

Telecom providers manage massive, global networks requiring continuous optimization. Internal AI agents monitor terabytes of network traffic patterns, predict equipment failures through edge IoT sensor data (predictive maintenance), and reroute bandwidth dynamically to prevent outages before they affect customers. In the B2B advertising sector, campaign agents orchestrate complex cross-platform marketing initiatives by analyzing consumer behavioral data, executing programmatic ad buys at machine speed, and dynamically altering creative visual assets based on real-time engagement metrics.
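As a very simplified stand-in for the predictive maintenance idea, the sketch below flags sensor readings that deviate sharply from their recent history using a rolling z-score. Production systems typically use far richer models; the window size, threshold, and function name are illustrative assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indexes of readings far outside the trailing window's spread."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip perfectly flat history to avoid dividing by zero.
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged
```

For example, in a temperature series that hovers around 50 and then jumps to 75, only the index of the jump is flagged, and an agent could use that signal to open a maintenance ticket before the equipment fails.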

Table 4: B2B Industry AI Agent Use Cases and Value Realization

| Industry | Example Agentic Workflow | Primary Value Driver | Expected ROI Impact |
|---|---|---|---|
| Finance / Banking | Autonomous invoice reconciliation, risk modeling, algorithmic fraud detection. | Replaces manual data entry; identifies anomalies at scale. | 49% efficiency increase in FP&A; massive risk reduction. |
| Human Resources | End-to-end Workday automation, policy querying, flight risk prediction. | Eliminates ticketing bottlenecks; improves employee experience. | 54% increase in recruiter capacity; 3-6 month payback. |
| Healthcare | HIPAA-compliant vitals monitoring, automated medical coding and billing. | Reduces administrative burden on clinicians; ensures compliance. | Error reduction; significant hours saved per practitioner. |
| Real Estate | Unstructured appraisal data extraction, portfolio rebalancing. | Accelerates complex document processing and market analysis. | Faster transaction closing; optimized investment yields. |
| Gaming / Software | Autonomous QA play-testing, CI/CD pipeline orchestration, code audits. | Enables continuous testing; reduces technical debt. | Shorter development cycles; higher code quality at launch. |

The Enterprise AI Maturity Model

Navigating the complex transition from off-the-shelf SaaS applications to a custom-engineered internal app store is not an instantaneous process. Organizations must objectively benchmark their current infrastructure against established AI maturity frameworks to identify capability gaps, mitigate risks, and prioritize their technology investments effectively.

The progression toward a fully autonomous, agentic enterprise generally follows a structured, multi-stage maturity model, and financial performance improves as an enterprise advances through the stages. Research indicates that organizations reaching the higher maturity stages see a 14 percent increase in revenue per employee, alongside an $8,700 annual cost reduction per employee from automation-driven efficiency gains.
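To see what those per-employee figures could mean at company scale, the arithmetic can be sketched as below. The 14 percent uplift and the $8,700 saving come from the research cited above; the headcount and baseline revenue per employee are inputs you would supply, and the function name is hypothetical.

```python
REVENUE_UPLIFT = 0.14             # 14 percent increase in revenue per employee
COST_SAVING_PER_EMPLOYEE = 8_700  # annual cost reduction per employee, in dollars


def projected_annual_impact(headcount, revenue_per_employee):
    """Return (added_revenue, cost_savings) in dollars for one year."""
    added_revenue = round(headcount * revenue_per_employee * REVENUE_UPLIFT, 2)
    cost_savings = headcount * COST_SAVING_PER_EMPLOYEE
    return added_revenue, cost_savings
```

For an assumed 500-person company with a $200,000 baseline revenue per employee, this works out to about $14 million in added revenue and $4.35 million in cost savings per year. Treat the result as an upper-bound planning figure, since the research describes organizations that have already reached the higher maturity stages.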

- Stage 1: Exploration & Preparation. At this stage, organizations are experimenting with AI, but their efforts are highly fragmented. There might be 1 to 3 isolated, generic agents in use, often adopted as shadow IT without formal procurement. Governance is ad hoc, relying on acceptable use policies rather than hard-coded permissions. The focus is on education and on making data accessible, but ROI is largely unmeasurable, and organizations often see a temporary dip in profit margins as they absorb initial software costs.

- Stage 2: Piloting & Semi-Autonomous Integration. The organization begins departmental rollouts. Agents are used to handle routine, predefined tasks, but they operate as semi-autonomous assistants requiring constant human oversight and verification. The IT department begins implementing initial security audits and basic RBAC controls. Crucially, the organization starts building the foundational data pipelines required for future orchestration, though the agents are still largely disconnected from core execution systems.

- Stage 3: Operational Scaling & The Internal App Store. This is the inflection point. The enterprise transitions away from generic public models and deploys a centralized internal app store. Multiple, highly specialized agents are in production, handling cross-functional workflows autonomously. Governance transitions from written policies to hard-coded Fine-Grained Authorization (FGA) and Zero Trust enforcement. The architecture utilizes .NET 10 orchestration, MCP servers, and robust RAG implementations. At this stage, continuous improvement loops are established, and the organization begins seeing measurable, substantial ROI. Many CTOs use checklists like those in Ready, Set, Scale: CTO Checklist for Enterprise AI to guide progress through this phase.

- Stage 4: Agentic Transformation. In the final stage, AI agents operate as core enterprise infrastructure. The organization utilizes dynamic, multi-agent collaboration where agents hand off complex tasks to one another without human intervention. Human roles are fundamentally redesigned around agent capabilities—employees become orchestrators rather than executors. Security is managed via ReBAC and continuous autonomous compliance monitoring. The IDEAL stack is fully realized, utilizing deep hardware acceleration (like AVX10.2) to power a distributed, highly profitable autonomous execution engine.

Conclusion

The era of generic, horizontal AI assistants is rapidly coming to an end in the enterprise sector. Organizations can no longer afford the unquantifiable security risks, the high hallucination rates, the perpetual subscription sprawl, and the severe operational bottlenecks associated with forcing off-the-shelf, conversational models to navigate complex, proprietary workflows.

By building bespoke agentic ecosystems powered by the robust, high-performance architecture of .NET 10, C# 14, and the Model Context Protocol, enterprises can fundamentally transform how work is executed. This architecture allows organizations to safely compartmentalize their sensitive data, enforce rigorous identity-first governance protocols like ReBAC, and deliver targeted, highly specialized digital workers directly to the departments that need them most via an internal app store.

The path forward requires a definitive shift from scattered experimentation to industrialized, scalable software delivery. Executives must audit their current AI footprint, eradicate shadow IT, and invest in the foundational backend orchestration required to turn artificial intelligence from a costly SaaS subscription into a true, proprietary operational asset. When done well, this journey blends modern AI integration patterns with disciplined software engineering and change management.

Frequently Asked Questions

Why do generic AI platforms fail to understand specific organizational cultures and workflows? Generic AI platforms are trained on broad, publicly available datasets. They lack intrinsic awareness of an organization's proprietary rules, accepted data hierarchies, negotiated contracts, and nuanced operational contexts. Because they sit outside an enterprise's core software infrastructure, they cannot execute actions; they merely provide conversational advice that humans must then manually implement. This creates a bottleneck that fails to deliver true automation or understand the specific constraints of the company's internal systems.

What is the advantage of using .NET 10 to build custom orchestration layers for internal agents? .NET 10 provides strong performance, type safety, and standardization for AI orchestration. Libraries like Microsoft.Extensions.AI offer provider-agnostic abstractions, allowing developers to switch underlying LLMs without rewriting core logic. The Microsoft Agent Framework (MAF) natively supports complex multi-agent workflows (like concurrent execution and handoffs). Furthermore, hardware accelerations (such as AVX10.2 for vectorization and Arm64 write-barrier improvements for garbage collection) combine with .NET Aspire 13 to enable the high-throughput, secure, and observable distributed computing essential for enterprise-grade AI. For teams upgrading legacy systems in parallel, the vendor comparisons in Top Enterprise Software Development Companies in the USA to De-Risk IT Modernization are also helpful.
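The idea behind a provider-agnostic abstraction can be shown in a few lines. The sketch below is language-neutral and written in Python for brevity; it is not the Microsoft.Extensions.AI API, and the `ChatClient` interface and both providers are hypothetical stand-ins for real model backends.

```python
from typing import Protocol


class ChatClient(Protocol):
    """Hypothetical minimal interface that every model provider must satisfy."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for one LLM backend."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseProvider:
    """Stand-in for a different LLM backend."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize_ticket(client: ChatClient, ticket_text: str) -> str:
    """Core agent logic depends only on the interface, never on a vendor."""
    return client.complete(f"Summarize: {ticket_text}")
```

Because `summarize_ticket` only knows about the interface, swapping `EchoProvider` for `UppercaseProvider` requires no change to the agent logic, which is the property that lets teams change underlying models without a rewrite.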

How can you safely compartmentalize data access when deploying multiple internal agents? Data access is compartmentalized by treating every AI agent as a verifiable Non-Human Identity (NHI) governed by strict Zero Trust principles. Instead of granting LLMs direct access to a database, organizations implement the Model Context Protocol (MCP) to serve as a highly secure, sandboxed intermediary. Combined with granular Role-Based Access Control (RBAC) and Relationship-Based Access Control (ReBAC), MCP ensures that agents can only interact with data that is explicitly authorized for their specific role, task context, and relationship to the querying user, which sharply limits the paths available for unauthorized data exfiltration.
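A combined RBAC and ReBAC check of the kind described in this answer might look like the sketch below. It is a toy, in-memory model under stated assumptions: the role grants, relationship tuples, and resource names are all hypothetical, and a real deployment would enforce this inside the intermediary layer against an authorization service rather than module-level dictionaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """A non-human identity with its assigned roles."""
    agent_id: str
    roles: frozenset


# Hypothetical data. RBAC: which resource types each agent role may touch.
ROLE_GRANTS = {"hr_agent": {"leave_record"}, "finance_agent": {"invoice"}}
# ReBAC: (user, relation, resource) tuples linking people to specific records.
RELATIONSHIPS = {
    ("maria", "manager_of", "leave:tom"),
    ("tom", "owner_of", "leave:tom"),
}
RESOURCE_TYPES = {"leave:tom": "leave_record", "invoice:881": "invoice"}


def authorize(agent, user, resource_id):
    """Allow access only if the agent's role AND the user's relationship permit it."""
    resource_type = RESOURCE_TYPES.get(resource_id)
    role_ok = any(resource_type in ROLE_GRANTS.get(r, set()) for r in agent.roles)
    relation_ok = any(
        u == user and res == resource_id for u, _relation, res in RELATIONSHIPS
    )
    return role_ok and relation_ok  # unknown resources fail both checks
```

The check is deny-by-default: an HR agent acting for Maria can read Tom's leave record because she manages him, but the same agent acting for an unrelated user, or a finance agent acting for Maria, is refused.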

Recommended Reading

- https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025

- https://www.mckinsey.com/capabilities/quantumblack/our-insights/seizing-the-agentic-ai-advantage

- https://www.okta.com/identity-101/improve-ai-agent-data-privacy-and-security/

About Baytech

At Baytech Consulting, we specialize in guiding businesses through this process, helping you build scalable, efficient, and high-performing software that evolves with your needs. Our MVP-first approach helps our clients minimize upfront costs and maximize ROI. Ready to take the next step in your software development journey? Contact us today to learn how we can help you achieve your goals with a phased development approach.

About the Author

Bryan Reynolds is an accomplished technology executive with more than 25 years of experience leading innovation in the software industry. As the CEO and founder of Baytech Consulting, he has built a reputation for delivering custom software solutions that help businesses streamline operations, enhance customer experiences, and drive growth.

Bryan’s expertise spans custom software development, cloud infrastructure, artificial intelligence, and strategic business consulting, making him a trusted advisor and thought leader across a wide range of industries.